Assoc. Prof. Dr. M. Umut Demirezen

@udmrzn

Associate Professor of Computer Science & Engineering, Artificial Intelligence Researcher, #artificialintelligence, #deeplearning, #machinelearning, #genai

Today we're releasing Trinity-Large-Thinking. Available now on the Arcee API, with open weights on Hugging Face under Apache 2.0. We built it for developers and enterprises that want models they can inspect, post-train, host, distill, and own.

🐙 Introducing OctoTools: an agentic framework with extensible tools for complex reasoning! 🚀 🧵

🔗 Explore now: octotools.github.io

OctoTools tackles challenges in complex reasoning, including visual understanding, domain knowledge retrieval, numerical reasoning, and multistep problem-solving. It introduces:
🔹 Standardized tool cards to encapsulate tool functionality
🔹 A planner for structured high-level & low-level planning
🔹 An executor to carry out tool usage

Featured Highlights 💡
✅ Standardized tool cards for seamless integration of new tools, with no framework changes needed (🔎 examples: octotools.github.io/#tool-cards)
✅ Planner + Executor for structured high-level & low-level decision-making
✅ Diverse tools: visual perception, math, web search, specialized tools & more
✅ Long CoT reasoning with test-time optimization: planning, tool use, verification, re-evaluation & beyond (🔎 examples: octotools.github.io/#visualization)
✅ Training-free & LLM-friendly: easily extend with the latest models
✅ Task-specific toolset optimization: select an optimized subset of tools for better performance

📊 Performance: OctoTools achieves generalizable gains across 16 tasks, outperforming:
📈 GPT-4o (+9.3%)
📈 AutoGen (+10.6%)
📈 GPT-4o Functions (+7.5%)
📈 LangChain (+7.3%)

🤗 Try the live demo (supported by @huggingface @_akhaliq): huggingface.co/spaces/octotoo…

🐙 OctoTools in action on diverse real-world examples:
✅ How many r letters are in the word strawberry?
✅ What's up with the upcoming Apple Launch? Any rumors? (credit: @karpathy)
✅ Which is bigger, 9.11 or 9.9?
✅ Solve the game of 24 with [1,1,6,9]
✅ Research trends in tool agents with LLMs for scientific discovery from ArXiv, PubMed, and Nature
✅ How many baseballs are there? (visual perception, GPT-4o ❌)
✅ What is the organ on the left side of this image? (radiology, GPT-4o ❌)
✅ What are the cell types in this image? (pathology, GPT-4o ❌)
... and more!

Dive deep into OctoTools:
📄 Read our 89-page paper: arxiv.org/abs/2502.11271
💻 Explore the codebase: github.com/octotools/octo…

Huge thanks to our amazing team: @chenbowen118, @ShengLiu_, @connect_thapa, Joseph Boen! Special thanks to @james_y_zou, @StanfordHAI, @ChanZuckerberg for the support! 🙌

#Agent #LLMs #ToolUse #Reasoning #OctoTools
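To make the tool card / planner / executor split from the thread above a bit more concrete, here is a minimal Python sketch. It is not taken from the OctoTools codebase: `ToolCard`, `TOOLBOX`, `plan`, and `execute` are hypothetical names, the card fields are assumed, and the planner is a keyword-matching rule standing in for an LLM.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class ToolCard:
    """Hypothetical tool card: metadata plus a callable (not the OctoTools schema)."""
    name: str
    description: str
    keywords: Tuple[str, ...]
    run: Callable[[str], str]


def count_letter(query: str) -> str:
    # Expects "letter|word", e.g. "r|strawberry".
    letter, word = query.split("|")
    return str(word.lower().count(letter.lower()))


def compare_decimals(query: str) -> str:
    # Expects "a|b", e.g. "9.11|9.9"; compares numeric values, not strings.
    a, b = query.split("|")
    return a if float(a) > float(b) else b


# Adding a tool means adding one card here; plan() and execute() stay unchanged.
TOOLBOX: Dict[str, ToolCard] = {
    card.name: card
    for card in (
        ToolCard("letter_counter", "Count a letter in a word",
                 ("how many", "letters"), count_letter),
        ToolCard("number_comparer", "Pick the larger of two numbers",
                 ("bigger", "larger"), compare_decimals),
    )
}


def plan(question: str) -> List[str]:
    """Toy planner: select tools whose keywords appear in the question."""
    q = question.lower()
    return [name for name, card in TOOLBOX.items()
            if any(k in q for k in card.keywords)]


def execute(tool_name: str, tool_input: str) -> str:
    """Executor: look up the selected card and call its tool."""
    return TOOLBOX[tool_name].run(tool_input)
```

With this toy toolbox, `plan("Which is bigger, 9.11 or 9.9?")` selects `number_comparer`, and `execute("number_comparer", "9.11|9.9")` returns `"9.9"`. In a real system the planner would also produce the tool input; here it is passed by hand to keep the sketch short.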

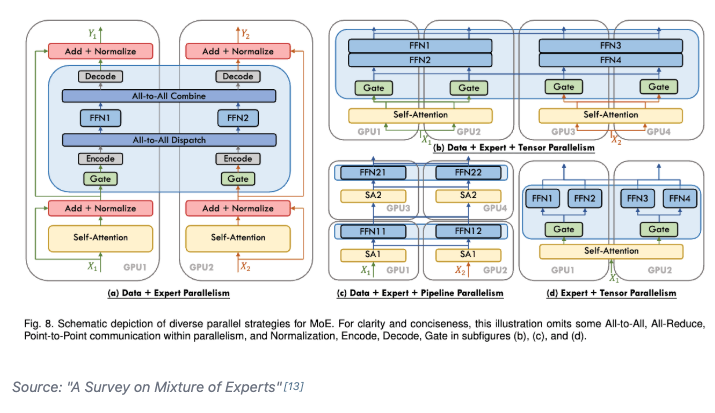

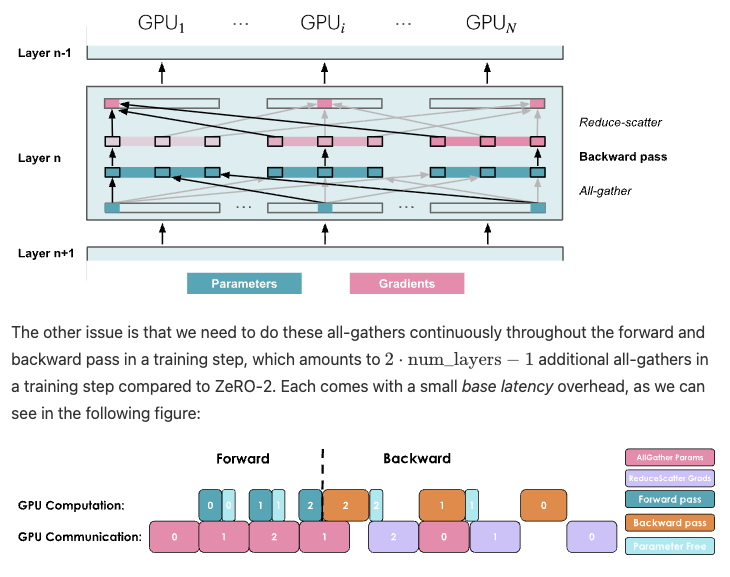

Day 92/365 of GPU Programming

Taking a closer look at disaggregated LLM inference today, which I've been wanting to survey more after listening to the Dean <> Dally discussion at GTC. The best resource I found on the topic was this great talk by @Junda_Chen_ on the past, present and future of prefill-decode disaggregation. In the lecture, Junda goes through NVIDIA's Dynamo, the intrinsic tradeoff spectrum between throughput & latency, TTFT, TPOT, the "goodput" metric, distinct characteristics of prefill vs decode, chunking P&D, the problem of interference, pipeline parallelism, resource & parallelism coupling, disaggregation and DistServe.
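For readers unfamiliar with the metrics named in the post, the sketch below computes TTFT (time to first token), TPOT (time per output token), and a simplified goodput from per-request timestamps. The `RequestTrace` type and the function names are hypothetical and not from the talk or from DistServe. One simplification to flag: here goodput is just the number of SLO-compliant requests per second in an observed window, whereas serving papers typically define it as the highest request rate sustainable at a target SLO attainment.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RequestTrace:
    """Hypothetical per-request log: arrival time and each output token's emit time (seconds)."""
    arrival: float
    token_times: List[float]


def ttft(r: RequestTrace) -> float:
    # Time to first token: dominated by queueing plus the prefill phase.
    return r.token_times[0] - r.arrival


def tpot(r: RequestTrace) -> float:
    # Time per output token: average gap between tokens during decode.
    n = len(r.token_times)
    if n < 2:
        return 0.0
    return (r.token_times[-1] - r.token_times[0]) / (n - 1)


def meets_slo(r: RequestTrace, ttft_slo: float, tpot_slo: float) -> bool:
    # A request counts only if it satisfies both latency targets.
    return ttft(r) <= ttft_slo and tpot(r) <= tpot_slo


def slo_attainment(traces: List[RequestTrace], ttft_slo: float, tpot_slo: float) -> float:
    # Fraction of requests that met both SLOs.
    ok = sum(meets_slo(r, ttft_slo, tpot_slo) for r in traces)
    return ok / len(traces)


def goodput(traces: List[RequestTrace], ttft_slo: float, tpot_slo: float,
            window_s: float) -> float:
    # Simplified goodput: SLO-compliant requests completed per second of the window.
    ok = sum(meets_slo(r, ttft_slo, tpot_slo) for r in traces)
    return ok / window_s


traces = [
    RequestTrace(arrival=0.0, token_times=[0.2, 0.25, 0.30]),  # fast prefill
    RequestTrace(arrival=1.0, token_times=[2.0, 2.05, 2.10]),  # slow first token
]
```

With a TTFT target of 0.5 s and a TPOT target of 0.1 s, only the first trace qualifies, so raw throughput over a 2-second window is 1 request/s while goodput is 0.5 requests/s. That gap is the reason the metric exists: a system can look good on throughput while missing its latency targets.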

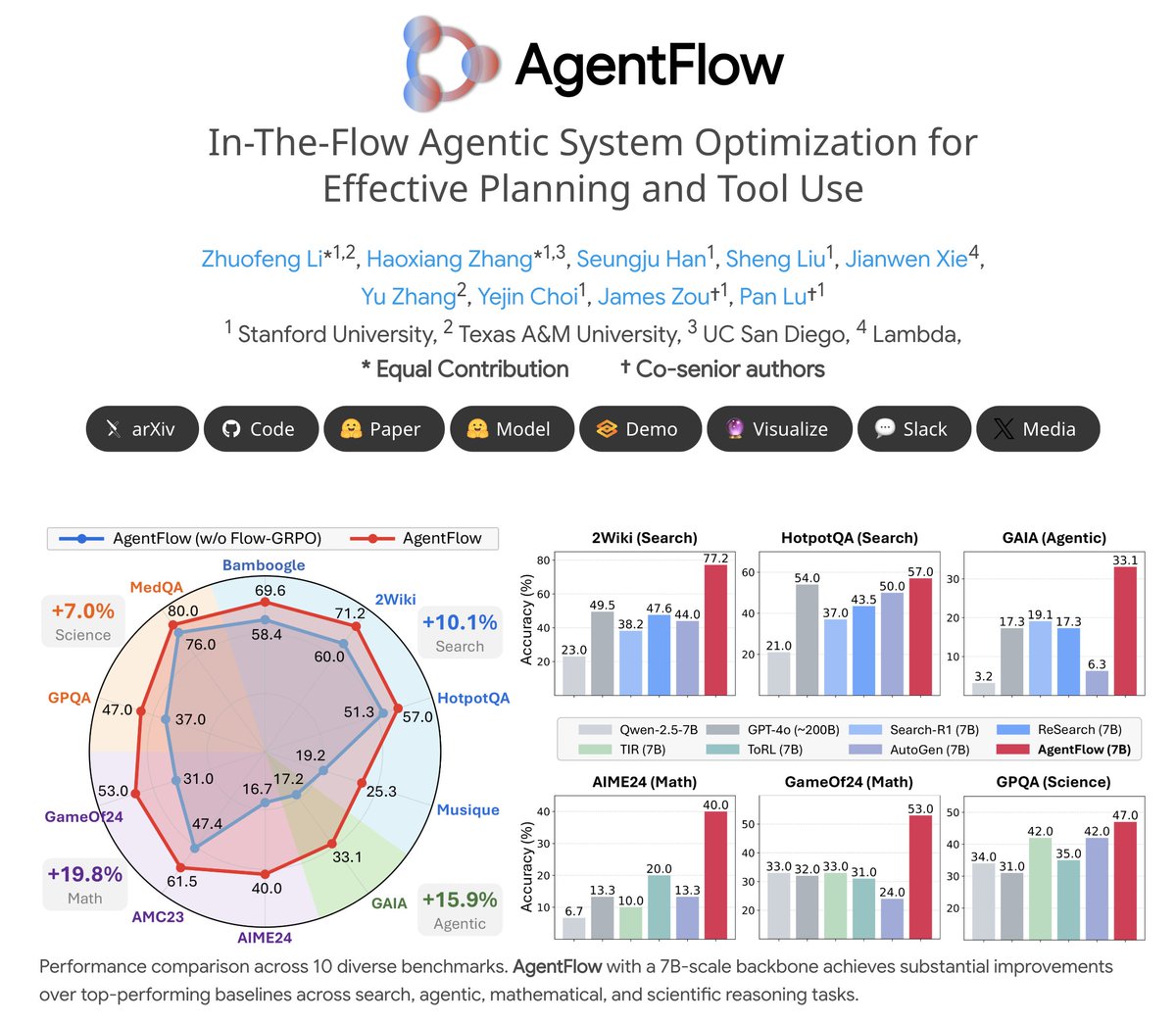

🔥Introducing #AgentFlow, a new trainable agentic system where a team of agents learns to plan and use tools in the flow of a task.

🌐agentflow.stanford.edu
📄huggingface.co/papers/2510.05…

AgentFlow unlocks the full potential of LLMs w/ tool-use. (And yes, our 3/7B model beats GPT-4o)👇

🧩A team of four specialized agents coordinates via shared memory:
Planner: plan reasoning & tool calls 🧭
Executor: invoke tools & actions 🛠
Verifier: check memory status ✅
Generator: produce final results ✍️

💡The Magic: 🌀💫 AgentFlow directly optimizes its Planner agent live, inside the system, using our new method, Flow-GRPO (Flow-based Group Refined Policy Optimization). This is "in-the-flow" reinforcement learning.

📊The Results: AgentFlow (7B backbone) outperforms top baselines on 10 benchmarks, with average gains of:
+14.9% on search 🔍
+14.0% on agentic 🤖
+14.5% on math ➗
+4.1% on science 🔬

🏆It even surpasses larger-scale models like Llama-3.1-405B and GPT-4o (~200B).

Try it yourself!
🛠️Code: github.com/lupantech/Agen…
🚀Demo: huggingface.co/spaces/AgentFl…
🤖Model: huggingface.co/AgentFlow/mode…
📊Visual: agentflow.stanford.edu/#visualization

💬Join our Slack: join.slack.com/t/agentflow-co… #agentic #llms #RL #tooluse
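The four-agent loop described in the AgentFlow thread can be pictured roughly as follows. This is a sketch under stated assumptions, not AgentFlow's code: every name (`Memory`, `planner`, `executor`, `verifier`, `generator`, `run`, `add_numbers`) is hypothetical, each agent is a plain function rather than an LLM, and the reinforcement-learning step that trains the Planner is left out entirely.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Memory:
    """Shared memory that every agent reads from or appends to."""
    question: str
    steps: List[Dict[str, str]] = field(default_factory=list)


def planner(mem: Memory) -> Optional[Dict[str, str]]:
    # Stand-in for the trainable policy: plan one calculator call, then stop.
    if not mem.steps:
        return {"tool": "calculator", "input": mem.question}
    return None


def executor(action: Dict[str, str], tools: Dict[str, Callable[[str], str]]) -> str:
    # Invoke the tool chosen by the planner.
    return tools[action["tool"]](action["input"])


def verifier(mem: Memory) -> bool:
    # Stand-in check: memory is sufficient once any step holds a non-empty result.
    return any(step["result"] for step in mem.steps)


def generator(mem: Memory) -> str:
    # Produce the final answer from the last recorded result.
    return mem.steps[-1]["result"] if mem.steps else "no answer"


def add_numbers(expr: str) -> str:
    # Toy tool: sums integers written as "a+b+c".
    return str(sum(int(x) for x in expr.split("+")))


def run(question: str, tools: Dict[str, Callable[[str], str]], max_turns: int = 5) -> str:
    mem = Memory(question)
    for _ in range(max_turns):
        action = planner(mem)
        if action is None:
            break
        result = executor(action, tools)
        mem.steps.append({**action, "result": result})  # write the turn to shared memory
        if verifier(mem):
            break
    return generator(mem)
```

Calling `run("2+3", {"calculator": add_numbers})` walks one Planner, Executor, Verifier turn and returns `"5"`. The point of the sketch is the data flow: agents never call each other directly and coordinate only through `Memory`.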