Mateusz Mirkowski

1.7K posts

@visualdevguy

🚀Product engineer ⚡MVP builder 🦞OpenClaw ❤️AI lover 😇13 years in IT

Remote work evangelist Joined March 2013

111 Following 333 Followers

Pinned Tweet

@pdrmnvd Stop reading most parts. Verify features by tests, AI code review, use cases, documentation. Read only critical parts.

@visualdevguy ha, queue it up before bed and check the results in the morning. not the worst strategy

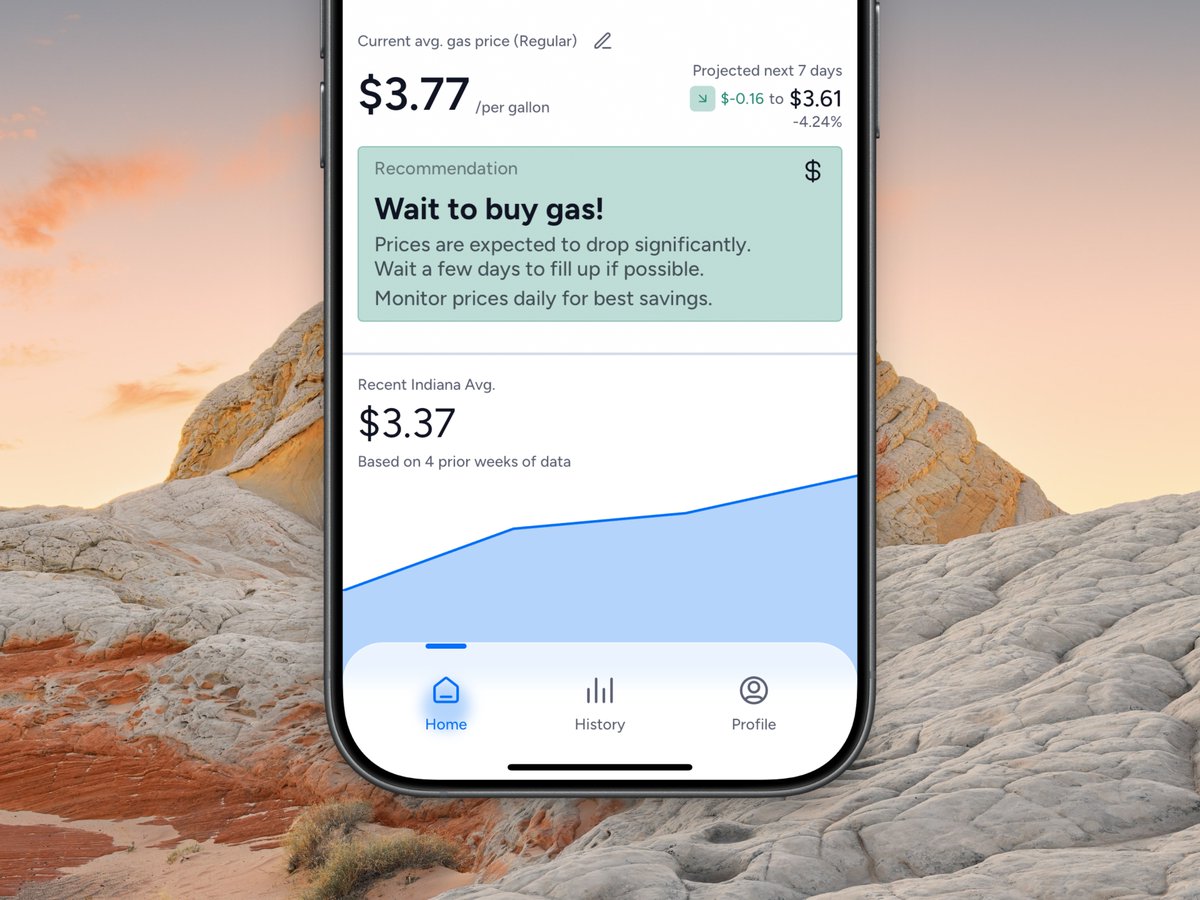

GLM 5.1 is the slowest frontier model we've ever benchmarked on BridgeBench.

44.3 tokens per second.

Half the speed of GPT 5.4.

Nearly 6x slower than Grok 4.20.

Z.ai traded all of their speed for intelligence.

The coding benchmarks improved.

The throughput collapsed.

In 2026, agentic coding is about parallelism.

You're running 5, 10, 15 agents at once.

A model this slow bottlenecks every workflow it touches.

Intelligence without speed is a luxury most vibe coders can't afford.

bridgebench.ai
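A rough sketch of what that throughput means per agent. Only the 44.3 tokens per second figure comes from the post above; the function name and the 4,430-token response size are hypothetical, picked for illustration, and the calculation ignores prompt processing and network latency.

```python
# Measured decode speed quoted in the post above.
GLM_51_TOKENS_PER_SECOND = 44.3


def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Wall-clock seconds to stream `output_tokens` at a steady decode rate.

    Assumption: decode speed is constant and time-to-first-token is ignored.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


if __name__ == "__main__":
    # Hypothetical response size for one agent step.
    seconds = generation_seconds(4430, GLM_51_TOKENS_PER_SECOND)
    print(f"{seconds:.0f} s per 4,430-token response")
```

Running agents in parallel raises total throughput, but each individual agent still waits this long for every step it takes.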

@ivanfioravanti On the weekend I will test it on my 4090. I wonder what the difference will be compared to the Mac 64GB.

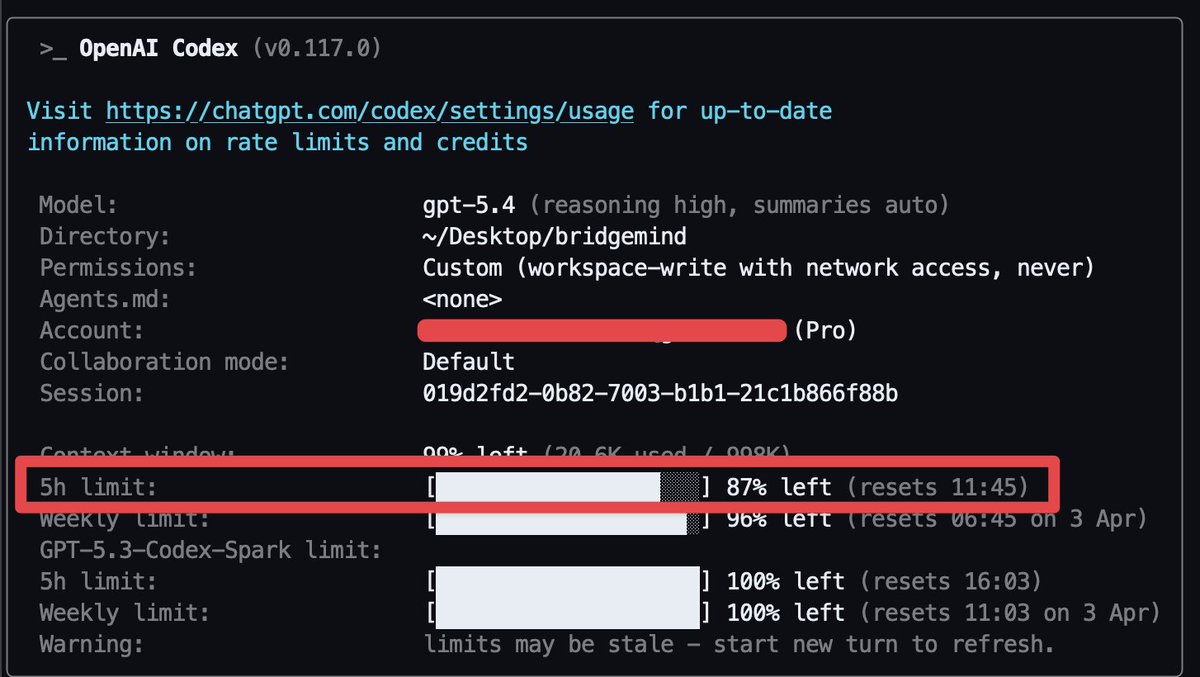

@bridgemindai Yup, and I feel like limits are lower than after the GPT 5.4 release.

@visualdevguy that's impressive for $20. Plus plan is solid value

@Presidentlin Nice. Off-peak time is my morning, working time. <3

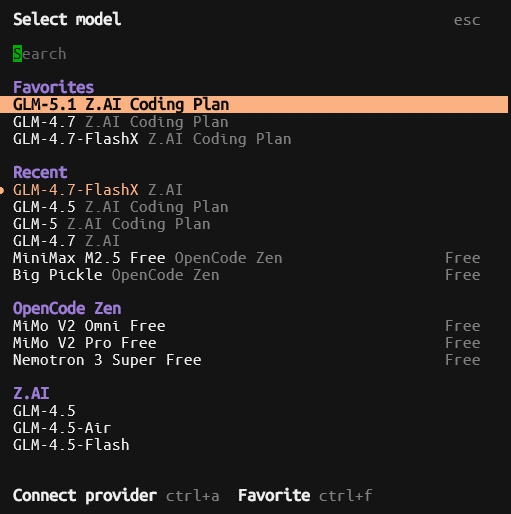

Model is up on OpenCode btw.

I think this is going to be my lineup

Opus: GLM 5.1

Sonnet: GLM 4.7

Haiku: GLM 4.7-FlashX

The GLM 5 series

Consumes quota at a rate of 3× during peak hours and 2× during off-peak hours.

They have a special

"As a limited-time benefit, GLM-5.1 and GLM-5-Turbo will count as 1× during off-peak hours until the end of April. Peak hours are from 14:00 to 18:00 (UTC+8) daily."

So for me I should not be using them for most of the morning after 8 am.
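A minimal sketch of the quota rules quoted above, for checking any moment against the peak window. The function names are hypothetical, and treating 18:00 as the first off-peak minute is an assumption; the quoted text does not say whether the end of the window is inclusive. The `promo` flag models the limited-time 1× benefit for GLM-5.1 and GLM-5-Turbo.

```python
from datetime import datetime, timedelta, timezone

# Peak window from the quoted notice: 14:00 to 18:00 (UTC+8) daily.
UTC_PLUS_8 = timezone(timedelta(hours=8))
PEAK_START_HOUR = 14
PEAK_END_HOUR = 18  # assumption: 18:00 itself counts as off-peak


def is_peak(moment: datetime) -> bool:
    """Return True if a timezone-aware `moment` falls in the peak window."""
    local = moment.astimezone(UTC_PLUS_8)
    return PEAK_START_HOUR <= local.hour < PEAK_END_HOUR


def quota_multiplier(moment: datetime, promo: bool = True) -> int:
    """Quota multiplier for a GLM 5 series request at `moment`.

    3x during peak hours, 2x off-peak, or 1x off-peak while the
    limited-time benefit applies (promo=True).
    """
    if is_peak(moment):
        return 3
    return 1 if promo else 2


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    print(f"Current multiplier: {quota_multiplier(now)}x")
```

Passing a datetime in your own timezone works too, since `astimezone` converts it to UTC+8 before the comparison.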

Which is fine, my order will be:

Google

OAI

Zai

OAI is mostly for big-brain tasks or attention to detail.

Google with 3.1 Flash Lite and 3.1 Flash are for spamming.

Zai will be for when Google is in cool down.

This is me being spoiled, really, my time is best spent marketing my stuff, then churning code.

@scaling01 GPT 5.4 is not smarter than Opus 4.6. It's just more reliable.

I've actually resubscribed to ChatGPT Plus because I'm currently building a lot, and a $200 subscription is too much

I'd rather get Claude Pro and ChatGPT Plus for $40

what I've noticed:

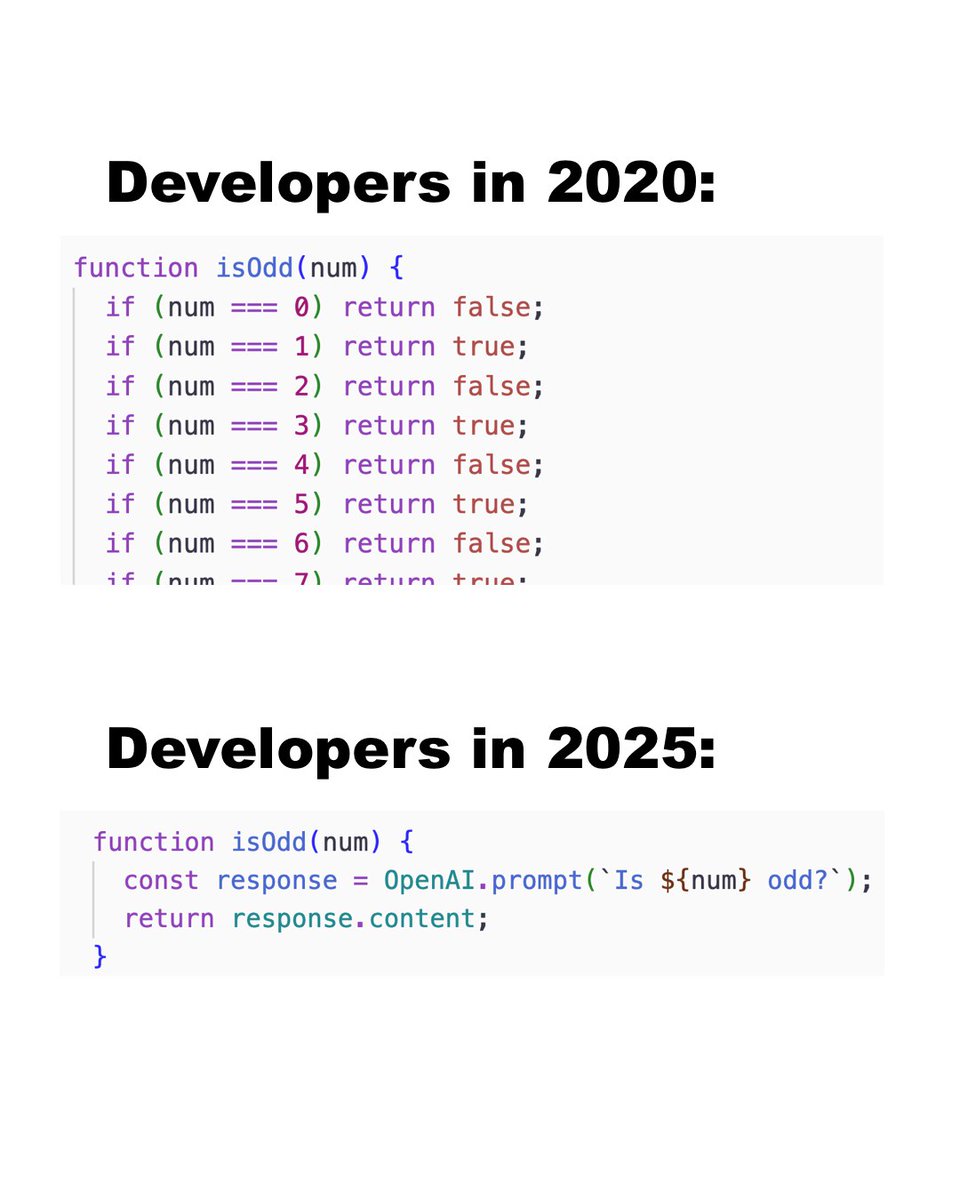

- GPT-5.4 is smarter than Opus 4.6

- Opus and Sonnet make such silly mistakes sometimes and I don't feel like they are actually thinking about what they are doing. They still feel like they are exploitation maxxing

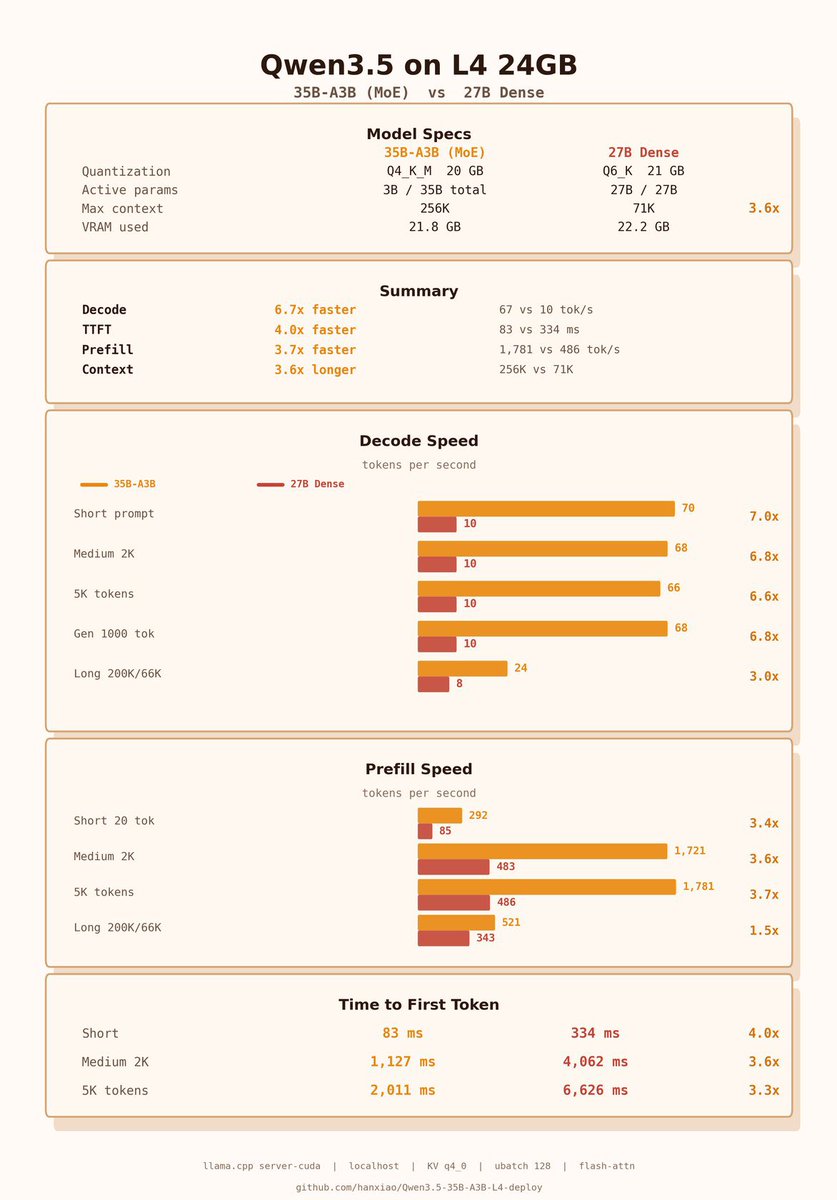

Rising interest in Qwen3.5-27B dense over 35B-A3B. If you're running on a budget GPU like L4 24GB, you need to know that 35B-A3B is still 7x faster decode & 4x longer context at identical quality: 256K vs 71K in the same 24GB vram, making 35B-A3B the better choice for long context tasks like synthetic data gen.

@design_nocodeio I've heard about Railway many times, why is it popular?

@LottoLabs Outstanding. I have a 4090 and an M1 Max 64GB. I plan to buy a Mac Studio 128GB. I think I should buy it ASAP because hardware prices are going to spike soon.

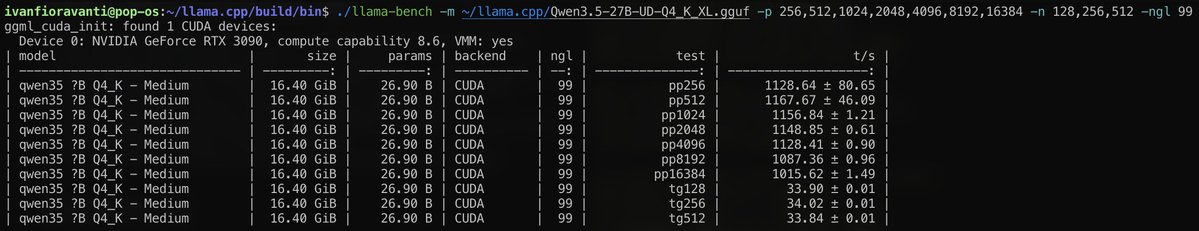

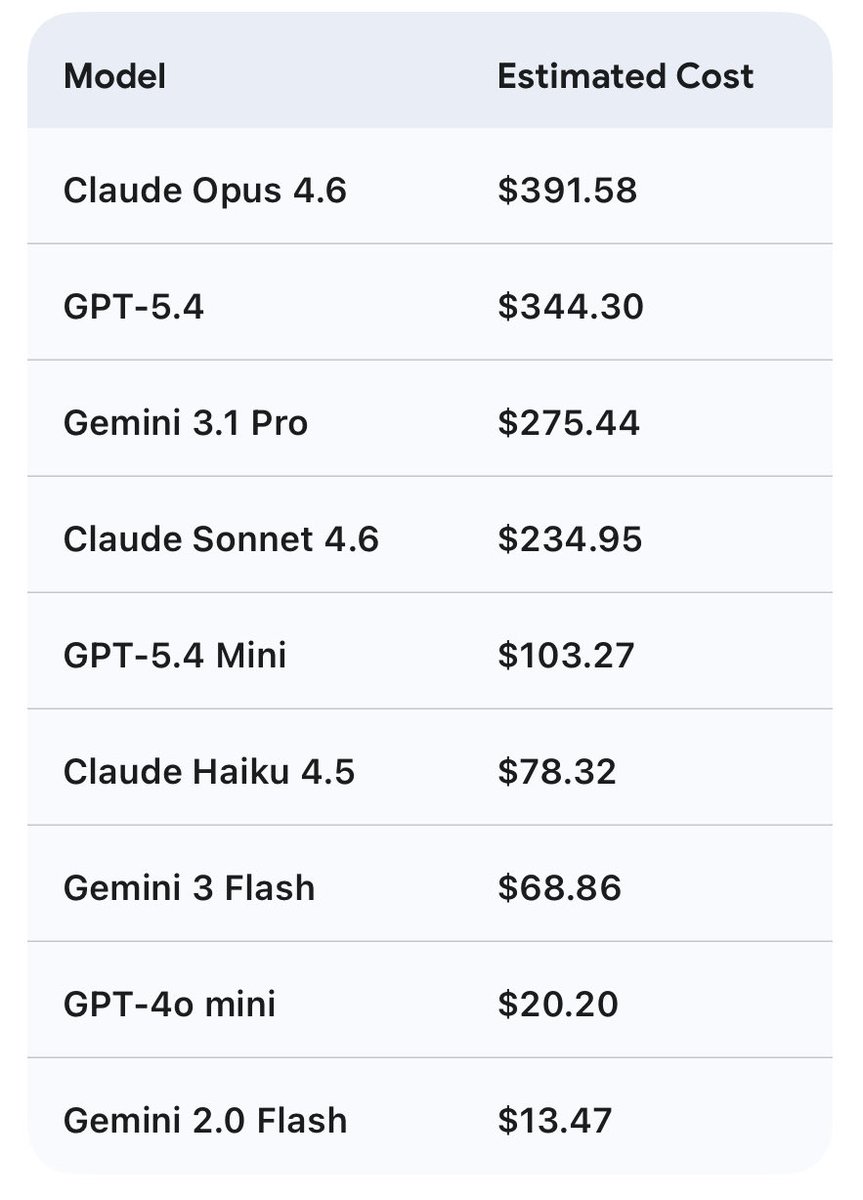

Qwen 27b on the 3090 saving me a bag.

This is cost savings for 7 days of usage, w/ Hermes agent. Assuming 80% cache hit (unlikely) and no cache timeout. This is conservative.

27b is between sonnet and 5.4 mini

This is just my tokens in/out w/ api costs, assuming no rate limits.

Obviously cheaper w/ coding plans $200/m but would be hitting limits likely.

That's why local llms are winning in 2026.

If you don't have a Mac mini/Studio, buy one now, because hardware prices are going to spike soon.

Lotto@LottoLabs

Qwen 27b on the 3090 saving me a bag. This is cost savings for 7 days of usage, w/ Hermes agent. Assuming 80% cache hit (unlikely) and no cache timeout. This is conservative. 27b is between sonnet and 5.4 mini This is just my tokens in/out w/ api costs, assuming no rate limits. Obviously cheaper w/ coding plans $200/m but would be hitting limits likely.

@LottoLabs Outstanding. How do you like Qwen as an orchestrator? Any downsides or things you don't like?

Qwen3.5-35B compressed 20% with 1%~ performance drop on average. Now you can fit this (4bits) with full context on 24GB of VRAM

700$~ or 1x 3090

huggingface.co/0xSero/Qwen-3.…

@AlexFinn Very interesting insight. I think investing now in a Mac Studio and other hardware is the best investment.

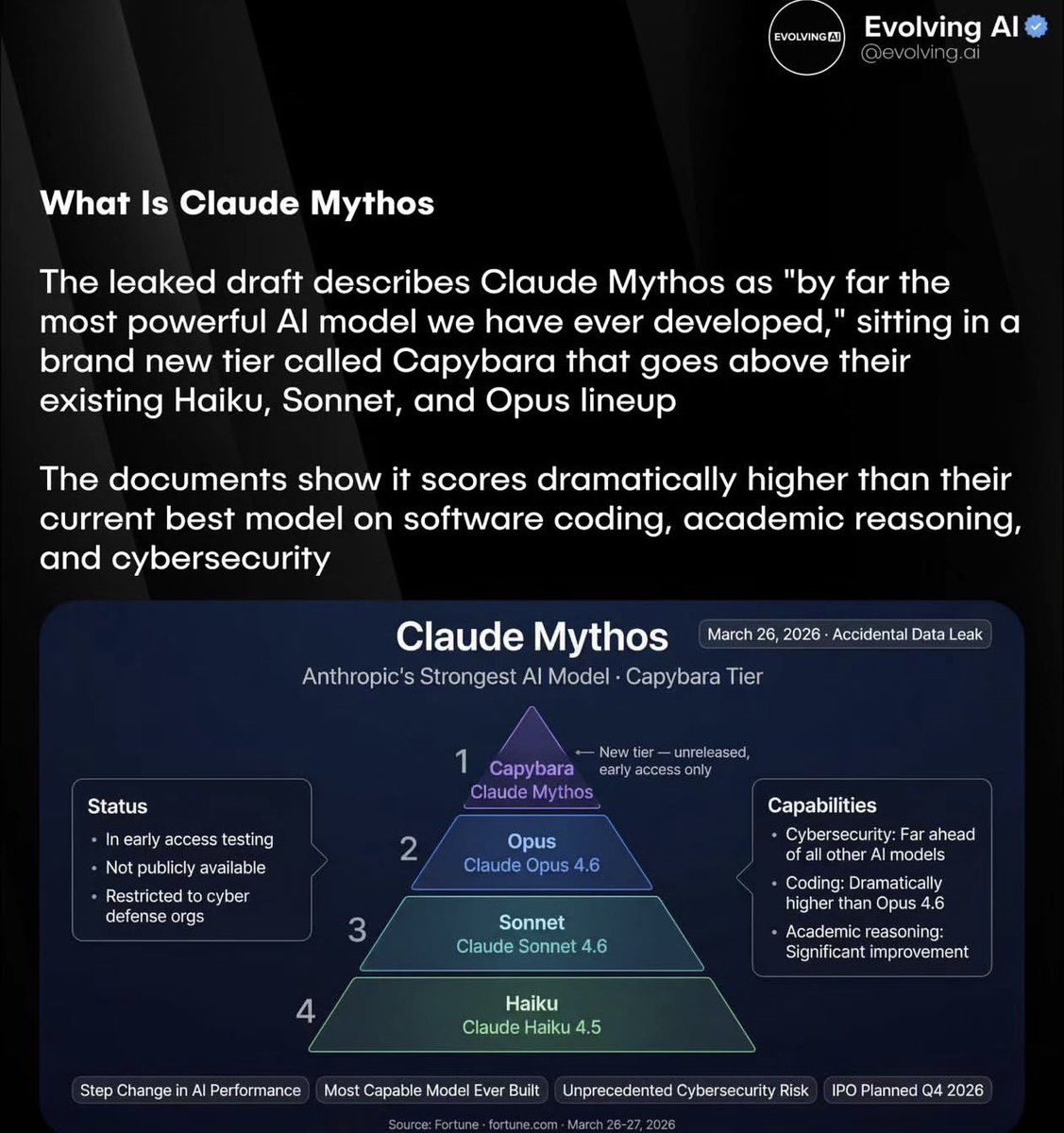

Drop what you're doing and read this post

Anthropic is about to release the most powerful AI model ever created. Claude Mythos

It's so powerful they consider it a danger to cybersecurity everywhere

It will also be significantly more expensive than Opus

I don't know your financial situation, but I do know this: we are entering the era of humanity where having money is going to give you significantly more power than those without money

Those without money will not be able to create nearly the same economic value as those that already have money because they won't have access to this kind of super intelligence, widening the gaps even further

This is where the concept of a permanent underclass comes from

If you want to keep up in this economic race you have to be willing to do 1 of 2 things:

1. Spend the money necessary to use this intelligence to create value

2. Procure the money you need to use this intelligence to create value

The people who go out and immediately use this model to create business, products, and services will experience unmatched wealth

Both the scariest and most exciting time ever

M1@M1Astra

Claude Mythos Blog Post Saved before it was taken down. m1astra-mythos.pages.dev
