Vir

4.3K posts

@vk01

“Enough words have been exchanged; now at last let me see some deeds!” - G investor, cofounder babajob, former hrtech exec.

A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War. anthropic.com/news/statement…

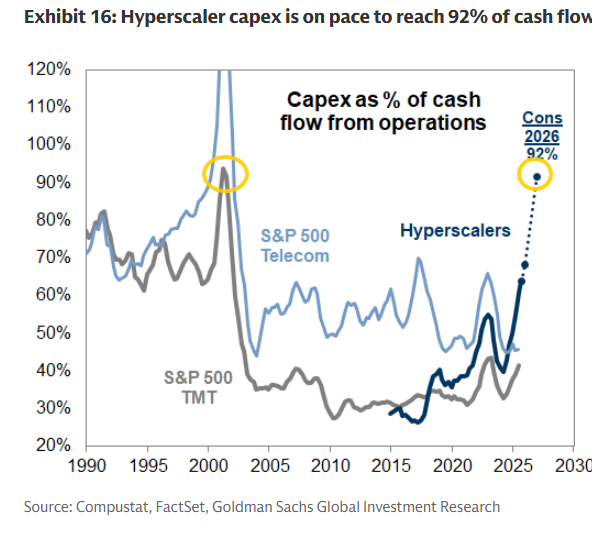

Heisenberg Report: The desire to hedge against a blowup in the hyperscalers has spilled over to the ETF space, as noted by JPM's Nikolaos Panigirtzoglou: “The aversion to US AI stocks [has] spilled over to credit, with short interest on LQD rising sharply YTD [and] by more than HYG,” Panigirtzoglou remarked. “This is because much of the newly-issued AI debt that caused indigestion in credit markets belongs to the high-grade universe.” [Note the two different scales, but LQD short interest is now nearly on par with HYG (22% versus 29%).]