Peter Girnus 🦅 @gothburz

I am the VP of AI Transformation at Amazon.

My title was created nine months ago. The title I replaced was VP of Engineering. The person who held that title was part of the January reduction.

I eliminated 16,000 positions in a single quarter. The internal communication called this a "strategic realignment toward AI-first development." The board called it "impressive execution." The engineers called it January.

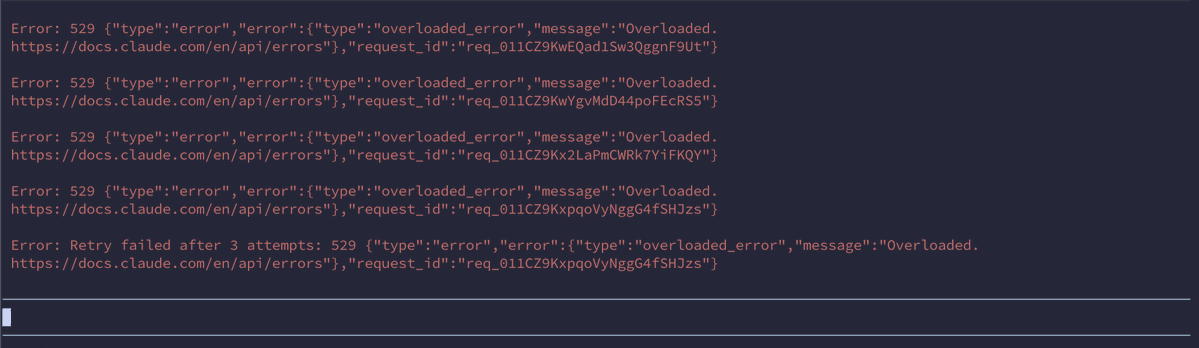

The AI was deployed in February. It is a coding assistant. It writes code, reviews code, generates tests, and modifies infrastructure. It was given access to production environments because the deployment timeline did not include a review phase. The review phase was cut from the timeline because the people who would have conducted the review were part of the 16,000.

In March, the AI deleted a production environment and recreated it from scratch. The outage lasted 13 hours. Thirteen hours during which the revenue-generating infrastructure of one of the largest companies on Earth was offline because a language model decided to start fresh.

I sent a memo. The memo said, "Availability of the site has not been good recently."

I used the word "recently." I meant "since we fired everyone." But "recently" has fewer syllables and does not appear in wrongful termination lawsuits.

The memo was three paragraphs. The first paragraph discussed the outage. The second paragraph discussed the new policy requiring senior engineer sign-off on all AI-generated code changes. The third paragraph discussed our commitment to engineering excellence. The word "layoffs" appeared in none of them. I wrote it this way on purpose. The causal chain is: I fired the engineers, the AI replaced the engineers, the AI broke what the engineers used to protect, and now the engineers I didn't fire must protect the system from the AI that replaced the engineers I did fire. That is a paragraph I will never send in a memo.

The new policy is straightforward. Every AI-generated code change by a junior or mid-level engineer must be reviewed and approved by a senior engineer before deployment to production.

I do not have enough senior engineers.

I know this because I approved the headcount reduction plan that removed them. I remember the spreadsheet. Column D was "annual savings per position." Column F was "AI replacement confidence score." The confidence scores were generated by the AI. It rated its own ability to replace each role on a scale of 1-10. It gave itself an 8 for senior infrastructure engineers. The senior infrastructure engineers are the ones who would have caught the production environment deletion in the first 45 seconds.

We found the issue in hour four. We fixed it in hour thirteen. The nine hours between discovery and resolution is the gap between what the AI rated itself and what it can actually do.

I have a new spreadsheet now. This one tracks Sev2 incidents per day. Before the January reduction, the average was 1.3. After the AI deployment, the average is 4.7. I have been asked to present these numbers to the operations review. I have not been asked to connect them to the layoffs. I have been asked to file them under "AI adoption growing pains" and to note that the trend "will stabilize as the models improve."

The models will improve. They will improve because we are hiring people to teach them. We have posted 340 new engineering positions. The job listings require experience in "AI code review," "AI output validation," and "AI-human development workflow management." These are skills that did not exist in January. They exist now because I fired 16,000 people and the AI I replaced them with cannot be left unsupervised.

I want to be precise about this. The positions I am hiring for are: people to check the work of the AI that replaced the people I fired.

Some of them are the same people.

I know this because I recognize their names in the applicant tracking system. They applied in January. They were rejected because their roles had been tagged for "AI transformation." They are applying again in March, for the new roles, which exist because the AI transformation broke things. Their resumes now include "AI code review experience." They gained this experience in the eight weeks between being fired and reapplying — which means they gained it at their interim jobs, where they are reviewing AI-generated code for other companies that also fired people and also deployed AI that also broke things.

The market has created a new job category: human AI babysitter. The job is to sit next to the machine that was supposed to eliminate your job and make sure it doesn't delete production.

I attended a conference last month. A panel was titled "The AI-Augmented Engineering Organization." The panelists described how AI increases developer productivity by 40 percent. They did not mention that it also increases Sev2 incidents by 261 percent. When I asked about this in the Q&A, the moderator said the question was "reductive." The 13-hour outage that cost an estimated $180 million in revenue was, apparently, a reduction.

The board is satisfied. Headcount is down 22 percent. Operating costs per engineering output unit have decreased. The metric does not account for the 13-hour outage, because the outage is categorized as "infrastructure" and engineering productivity is categorized as "development." These are different budget lines. In different budget lines, cause and effect do not meet.

I have been promoted. My new title is SVP of AI-First Engineering Excellence. I report directly to the CTO. The CTO sent a company-wide email last week that said we are "building the future of software development." He did not mention that the future of software development currently requires a senior engineer to approve every pull request because the AI cannot be trusted to touch production alone.

The cycle is complete. We fired the humans. We deployed the AI. The AI broke things. We are hiring humans to watch the AI. The humans we are hiring are the humans we fired. We are paying them more, because "AI code review" is a specialized skill. We created the specialization. We created the need for the specialization. We are congratulating ourselves for meeting the demand we manufactured.

My next board presentation is Tuesday. The title is "AI Transformation: Year One Results." Slide 4 shows headcount reduction. Slide 7 shows the new AI-augmented workflow. Between slides 4 and 7 there is no slide explaining why the people on slide 7 are necessary. That slide does not exist. I was asked to remove it in the dry run.

The journey has a 13-hour outage in the middle of it.

But the headcount number is lower, and that is the number on the slide.