LonelyInvestorX

@webb_dever

Lone investor in stocks × AI × Crypto. Building what's next, bit by bit.

The awkward truth: what counts as a good engineer has changed in just the last four months.

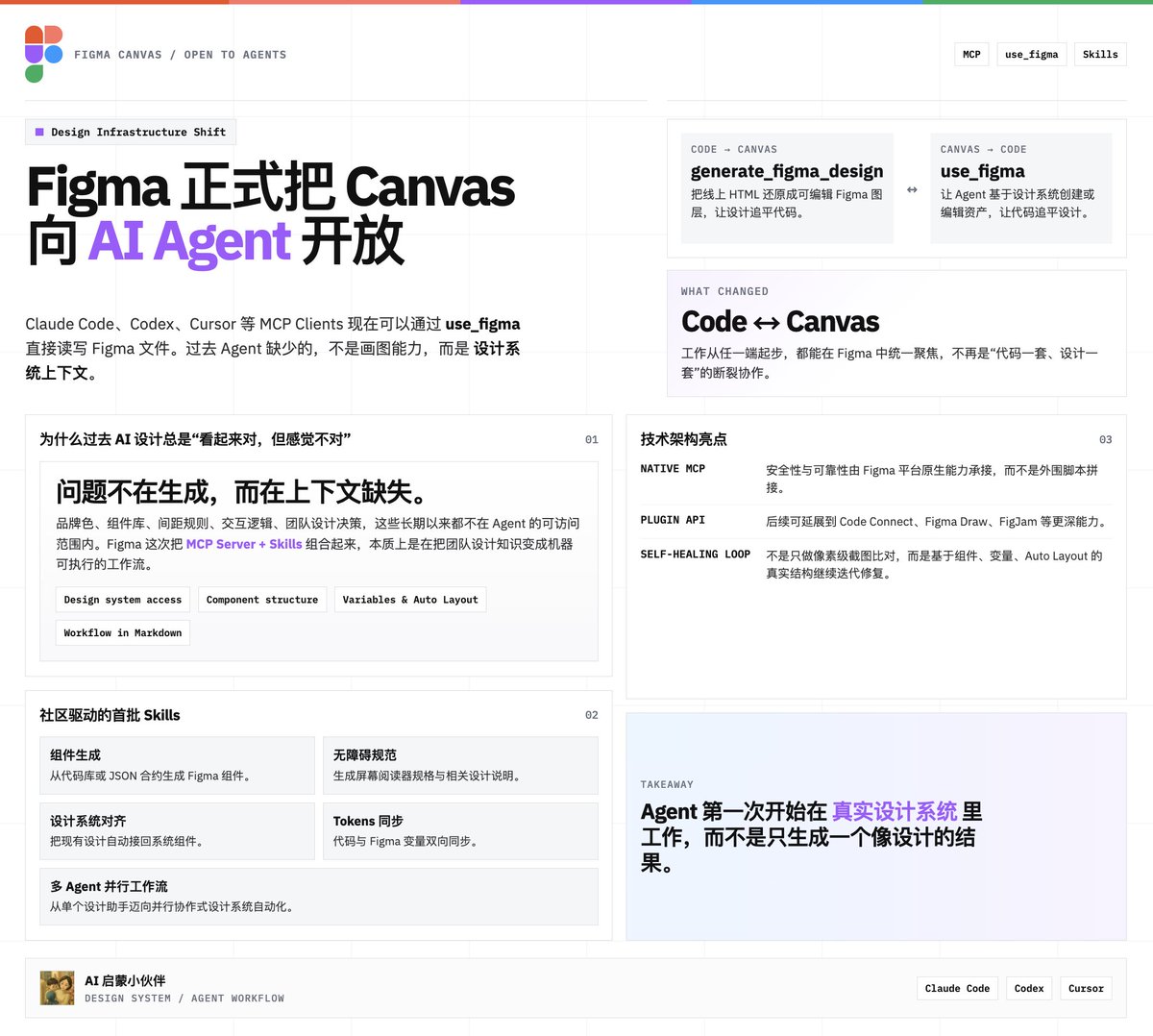

Now you can use AI agents to design directly on the Figma canvas, with our new use_figma MCP tool and skills to teach them. Open beta starts today.

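TypeNo v1.0.5 update. The fastest way to type is not to type at all. 🔧 Automatic installation: no more copying npm commands. Open the app and it detects your environment and installs the speech engine for you. No Node.js? It walks you through the install and then picks up where it left off, so you never have to touch the terminal. 🔄 One-click updates: a single click in the menu bar downloads, replaces, and restarts the app in one go. macOS microphone and Accessibility permissions are preserved automatically, so there is no need to grant them again. ✅ Smarter first launch: permission status is checked every 2 seconds, and the onboarding guide closes itself once access is granted. The pop-up sits in a corner so it does not cover System Settings. If you get stuck, press Control to cancel at any time. 🎯 Better compatibility: supports popular Node version managers such as nvm, fnm, and volta. Build signing has been improved so developers keep their permissions after a rebuild. Free and open source, with on-device recognition; your data never leaves your computer. Download: github.com/marswaveai/Typ…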

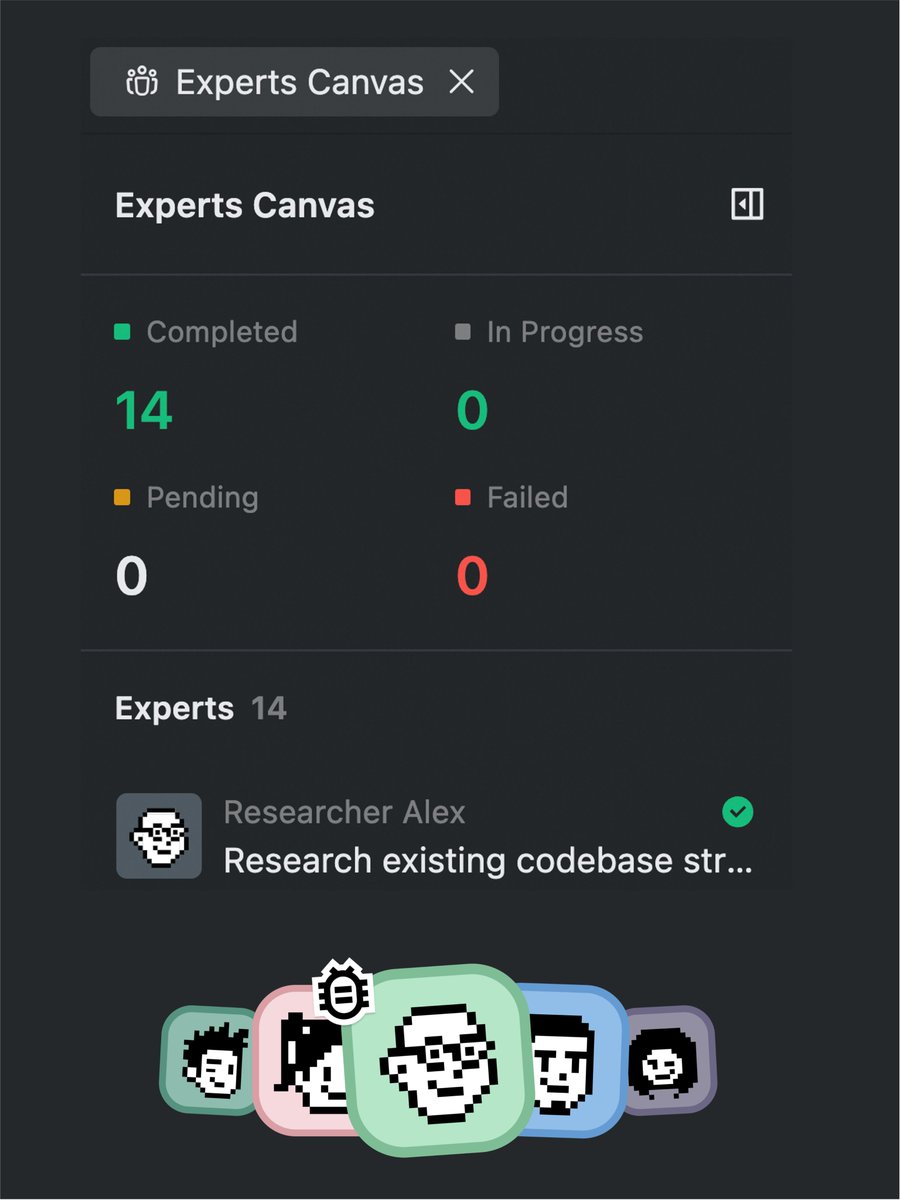

🛠️ Update: SlowMist Agent Security Skill v0.1.2 is now live! This release improves the report template output, making reports cleaner, more concise, and easier to read for better security insights. A small update, but a meaningful step toward a smoother analysis experience. 🔗 Try it on ClawHub: clawhub.ai/slowmist/slowm… 🔗 GitHub: github.com/slowmist/slowm…