Wei (Will) Feng

37 posts

@weifengpy

PyTorch Distributed, FSDP, float8

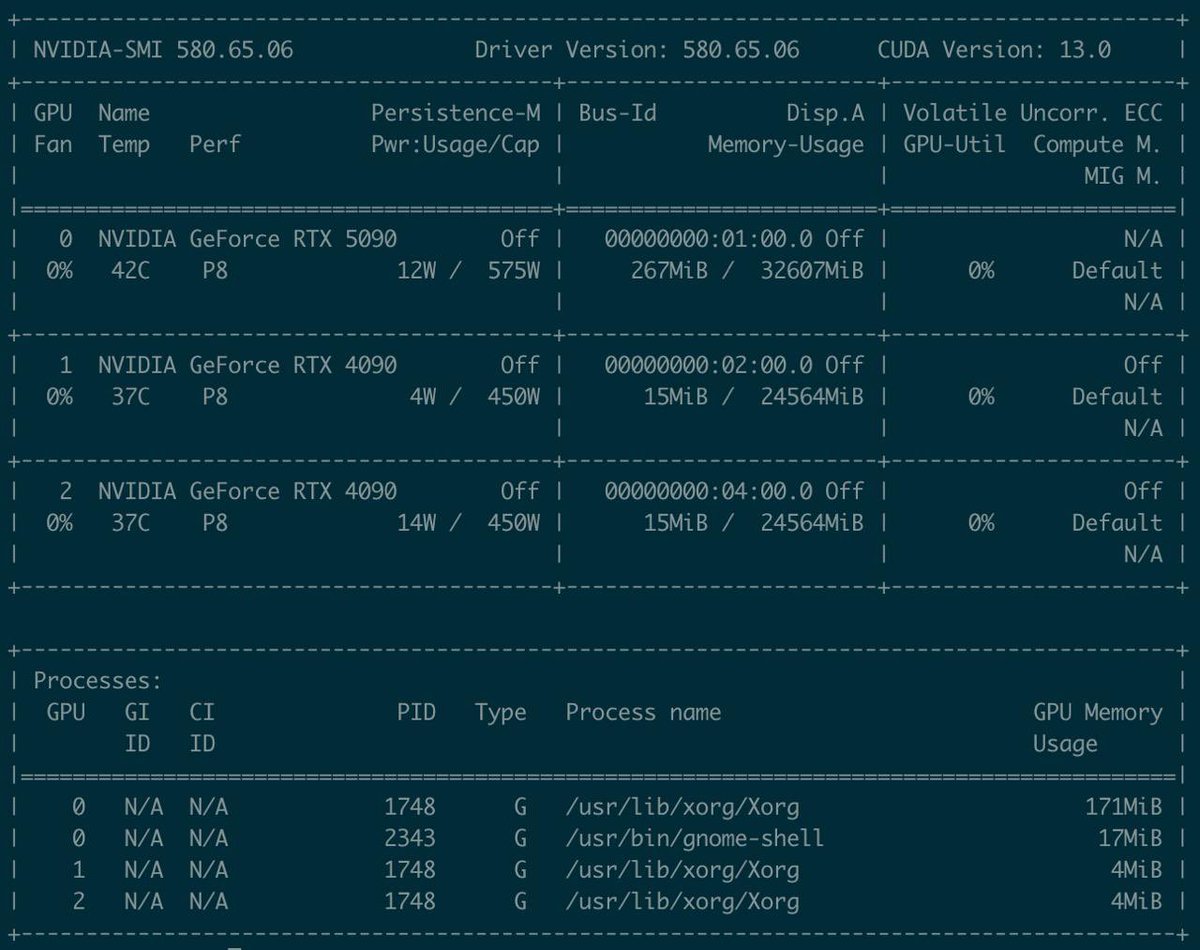

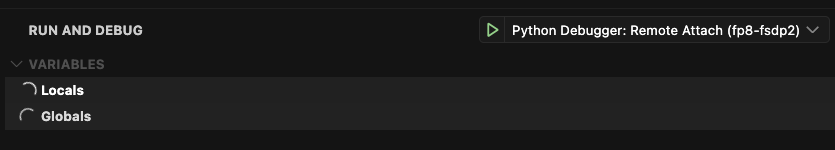

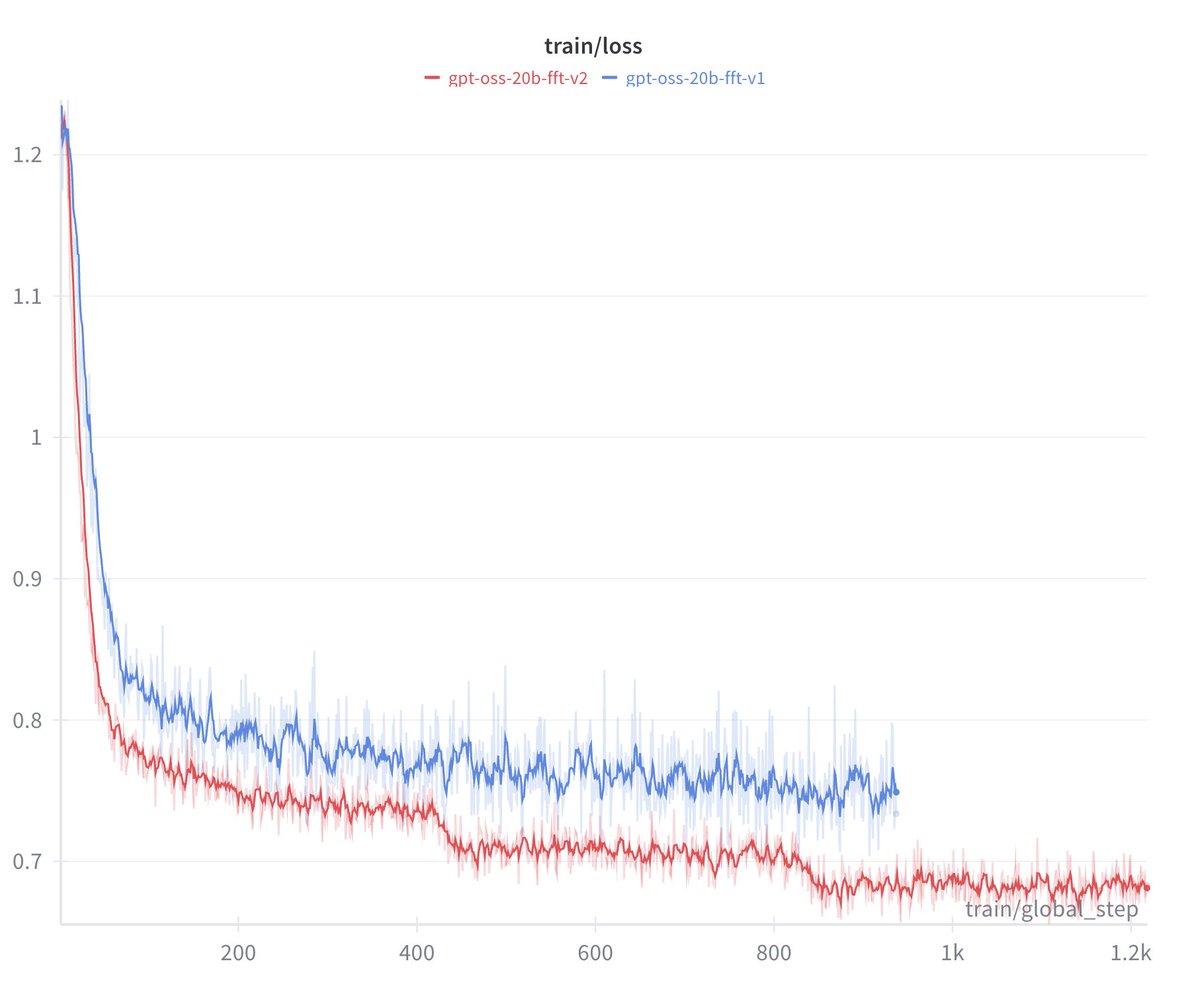

so, this is gonna be my evening! fine-tuning gpt-oss 120b in @axolotl_ai! LFG! 🔥

Quick poll, how familiar with @PyTorch hooks are you? do you understand the different types, their inputs, and how they differ/their use cases?

huggingface.co/Aurore-Reveil/… Presenting one of the first Qwen3 large finetunes for RP - It's an experiment alright but it's... something. Trained mostly by @intervitens and his extreme knowledge about weird pytorch optims, Torchtune, Etc & Me (i sat in the corner and added hparams)

You can now fine-tune Gemma 3n for free with our notebook! Unsloth makes Google Gemma training 1.5x faster with 50% less VRAM and 5x longer context lengths - with no accuracy loss. Guide: docs.unsloth.ai/basics/gemma-3…

GitHub: github.com/unslothai/unsl… Colab: colab.research.google.com/github/unsloth…

@eliebakouch @JingyuanLiu123 @zxytim we've discussed this for a while. legacy megatron fsdp1 flattens all weights first and then slices. but fsdp2 uses per-param sharding, which is useful for qlora-like things. but moonshot's internal codebase is based on old megatron i guess, so it does not match fsdp2 well
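
To make the flatten-then-slice vs per-param distinction in the post above concrete, here is a toy sketch in plain Python. It is not FSDP or Megatron code: `shard_flat`, `shard_per_param`, and the tiny `params` dict are hypothetical names made up for illustration, and nested lists stand in for tensors.

```python
import math

def shard_flat(params, world_size):
    """Flatten-then-slice: concatenate every parameter into one 1-D buffer,
    pad it to a multiple of world_size, and give each rank one contiguous chunk."""
    flat = [x for p in params.values() for row in p for x in row]
    chunk = math.ceil(len(flat) / world_size)
    flat += [0.0] * (chunk * world_size - len(flat))  # pad the tail if needed
    return [flat[r * chunk:(r + 1) * chunk] for r in range(world_size)]

def shard_per_param(params, world_size):
    """Per-parameter sharding: split each parameter along its first dimension,
    so every rank holds a named row-slice of every parameter."""
    shards = [{} for _ in range(world_size)]
    for name, p in params.items():
        rows = math.ceil(len(p) / world_size)
        for r in range(world_size):
            shards[r][name] = p[r * rows:(r + 1) * rows]
    return shards

# Hypothetical toy model: a 4x2 weight and a 2x3 weight, sharded over 2 ranks.
params = {
    "w1": [[0, 1], [2, 3], [4, 5], [6, 7]],
    "w2": [[10, 11, 12], [13, 14, 15]],
}
flat_shards = shard_flat(params, world_size=2)
per_param_shards = shard_per_param(params, world_size=2)

# Flat sharding: rank 1's chunk starts with the tail of w1 and continues into w2,
# so chunk boundaries ignore parameter boundaries.
print(flat_shards[1])
# Per-param sharding: rank 1 holds whole rows of each named parameter.
print(per_param_shards[1])
```

In the flat layout a rank's shard is an anonymous slice of one big buffer, while in the per-param layout each shard keeps its parameter's name and row structure. That per-parameter identity is presumably what the post has in mind for qlora-like setups, where individual weights need different handling.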