Pinned Tweet

Wonmin Byeon

96 posts

Wonmin Byeon retweeted

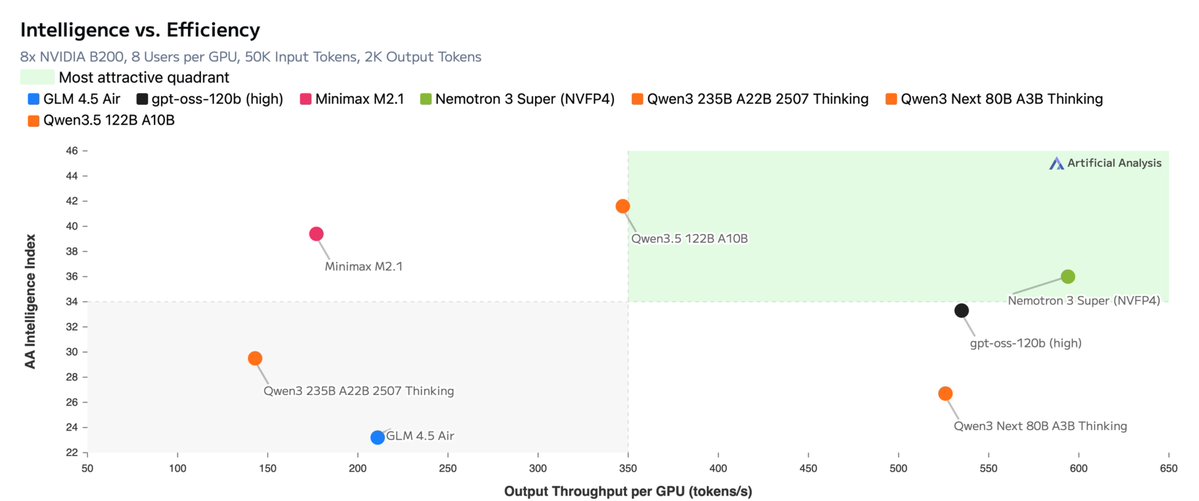

Announcing NVIDIA Nemotron 3 Super!

💚120B-12A Hybrid SSM Latent MoE, designed for Blackwell

💚36 on AAIndex v4

💚up to 2.2X faster than GPT-OSS-120B in FP4

💚Open data, open recipe, open weights

Models, Tech report, etc. here:

research.nvidia.com/labs/nemotron/…

And yes, Ultra is coming!

Wonmin Byeon retweeted

One of the things that makes Nemotron 3 Super so fast is native multi-token prediction.

1. Model predicts several tokens rather than just one, which is essentially free because it's just a bit of extra work for the last layer of the model. The first token is accepted, the others are provisional.

2. On the next pass, the model verifies the extra tokens and either accepts them if they were correct or rejects them if they were wrong. If they were wrong, we still accept one token, so autoregression proceeds even with misprediction.

3. Verification is essentially free because the GPU has a lot of extra compute capability when running at a small batch size that would otherwise be wasted.

4. Speedup then is directly linked to acceptance rates. If the multi-token prediction is more accurate, we get a bigger speedup.
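The accept/verify steps in the post above can be sketched in a few lines. This is a toy illustration, not NVIDIA's implementation: the function names are hypothetical, and the throughput estimate assumes every provisional token is accepted independently with the same probability, which a real model need not satisfy.

```python
from typing import Sequence


def count_accepted(provisional: Sequence[int], verified: Sequence[int]) -> int:
    """Count provisional tokens that survive verification.

    Tokens are accepted left to right until the first mismatch; everything
    after a mismatch is rejected. (Hypothetical helper for illustration.)
    """
    accepted = 0
    for guess, truth in zip(provisional, verified):
        if guess != truth:
            break
        accepted += 1
    return accepted


def expected_tokens_per_pass(accept_prob: float, num_provisional: int) -> float:
    """Expected tokens emitted per forward pass.

    Assumption: each provisional token is accepted independently with
    probability `accept_prob`. One token is always accepted, and the i-th
    provisional token counts only if all earlier ones were accepted, so the
    expectation is 1 + p + p^2 + ... + p^k.
    """
    if not 0.0 <= accept_prob <= 1.0:
        raise ValueError("accept_prob must be in [0, 1]")
    return sum(accept_prob ** i for i in range(num_provisional + 1))
```

Under these assumptions, two provisional tokens with a 50% acceptance rate give `expected_tokens_per_pass(0.5, 2) == 1.75` tokens per pass, against 1.0 without multi-token prediction, which is why a higher acceptance rate translates into a larger speedup.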

@bronzeagepapi It can be used for Gated DeltaNet as well. Hopefully, someone tries it with Qwen3.5 VL ;)

🎉Thrilled to share that I will be joining NVIDIA Taiwan (@NVIDIAAI) as a research intern! I'll be working on efficient modeling of Video LLMs under the supervision of Ryo Hachiuma (@RHachiuma). Looking forward to this exciting journey ahead!

AI Coffee Break with Letitia: youtu.be/uMk3VN4S8TQ?si…

Arxiv Papers: youtu.be/I_loecu6Gew?si…

AI Papers Podcast: youtu.be/ifqHBtpw7CM?si…

AI Paper Slop: youtu.be/pKObqY3msJk?si…

Our project page and the full paper are here: research.nvidia.com/labs/lpr/storm/

It’s been months since we introduced STORM, and it’s great to see so many video discussions! ⛈️🎥

To catch up on our token-efficient long video understanding work, check out these reviews (some are AI-generated but very useful!).

Huge thanks to the creators! 🙌

The links ↓

Wonmin Byeon @wonmin_byeon

🚀 New paper: STORM - Efficient VLM for Long Video Understanding

STORM cuts compute costs by up to 8× and reduces decoding latency by 2.4–2.9×, while achieving state-of-the-art performance.

Details + paper link in the thread ↓

Wonmin Byeon retweeted

What if your model could learn from its own drafts during RL training? 🚀

🔥 New paper: iGRPO: Iterative Group Relative Policy Optimization

We add a self-feedback loop to GRPO: the model drafts multiple solutions, picks its best one, then learns to refine beyond it.

Core idea:

Stage 1 → explore and select your strongest attempt. Stage 2 → condition on that attempt and beat it.

Same scalar reward. No critics, no generated critiques, no verification text. The best draft is the only feedback the model needs.

📊 Results across 7B / 8B / 14B models:

• Nemotron-H-8B-Base-8K: 41.1% → 45.0% (+3.96 over GRPO)

• DeepSeek-R1-Distill-Qwen-7B: 68.3% → 69.9%

• OpenMath-Nemotron-14B: 76.7% → 78.0%

• OpenReasoning-Nemotron-7B on AceReason-Math: 85.62% AIME24 / 79.64% AIME25 🏆

The same two-stage wrapper also improves DAPO and GSPO. It's not tied to GRPO at all.

📄 Paper: arxiv.org/abs/2602.09000

This work was the result of a wonderful collaboration with @shrimai_ @igtmn @GXiming @SeungjuHan3 @_weiping @YejinChoinka @jankautz
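The two-stage loop described in the iGRPO post above can be sketched as follows. This is a minimal sketch under stated assumptions, not the authors' code: `sample` and `reward` are hypothetical callables standing in for the policy and the scalar reward, the refinement prompt format is invented for illustration, and the advantage is the usual group-normalized reward from GRPO. The policy-gradient update itself is omitted.

```python
from statistics import mean, pstdev
from typing import Callable, List, Tuple


def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Normalize rewards within a group: (r - mean) / (std + eps)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def igrpo_step(
    prompt: str,
    sample: Callable[[str], str],    # hypothetical: draws one completion
    reward: Callable[[str], float],  # hypothetical: scalar reward for a completion
    group_size: int = 4,
) -> Tuple[str, List[str], List[float]]:
    # Stage 1: explore, then select the strongest draft by scalar reward.
    drafts = [sample(prompt) for _ in range(group_size)]
    best_draft = max(drafts, key=reward)

    # Stage 2: condition on that draft and sample refinements.
    # The prompt template below is an assumption, not taken from the paper.
    refine_prompt = f"{prompt}\n\nPrevious attempt:\n{best_draft}"
    refinements = [sample(refine_prompt) for _ in range(group_size)]

    # Same scalar reward, group-normalized; these advantages would feed
    # the policy update, which is not shown here.
    rewards = [reward(r) for r in refinements]
    return best_draft, refinements, group_relative_advantages(rewards)
```

Only the best draft is passed to the second stage, matching the post's point that no critic or generated critique is involved; the reward function is the same in both stages.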

Wonmin Byeon retweeted

🚀Announcing C-RADIOv4: The latest evolution in our agglomerative vision backbone family is here!

We’ve built a unified student model that distills the best capabilities of multiple state-of-the-art teachers into a single, efficient architecture.

DINOv3, SAM3 and SigLIP2 all in one forward pass with better features. Better efficiency, better robustness, and support for any resolution.

👐Permissive and open-source!

Read the tech report: arxiv.org/pdf/2601.17237

Models:

huggingface.co/nvidia/C-RADIO…

huggingface.co/nvidia/C-RADIO…

Github: github.com/NVlabs/RADIO

🧵1/N

Wonmin Byeon retweeted

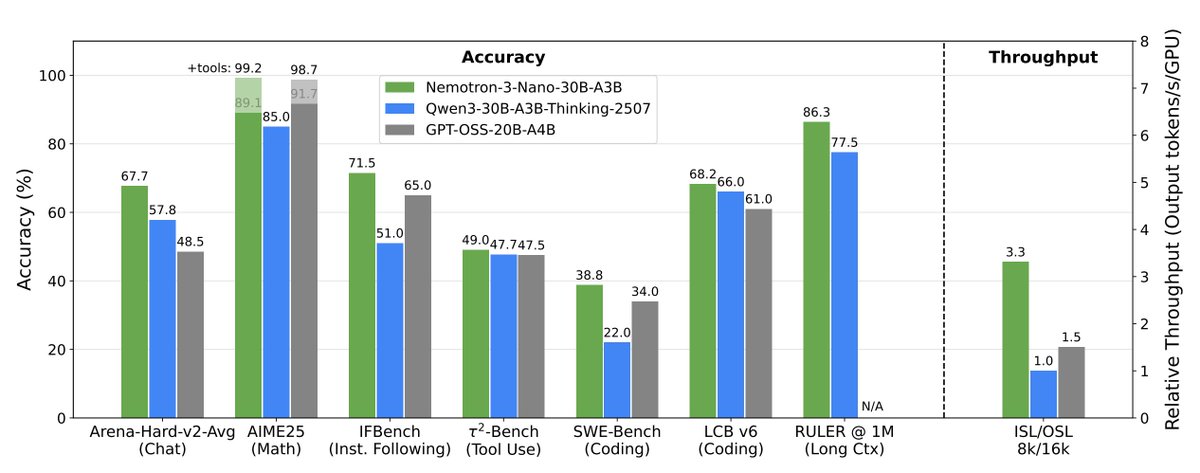

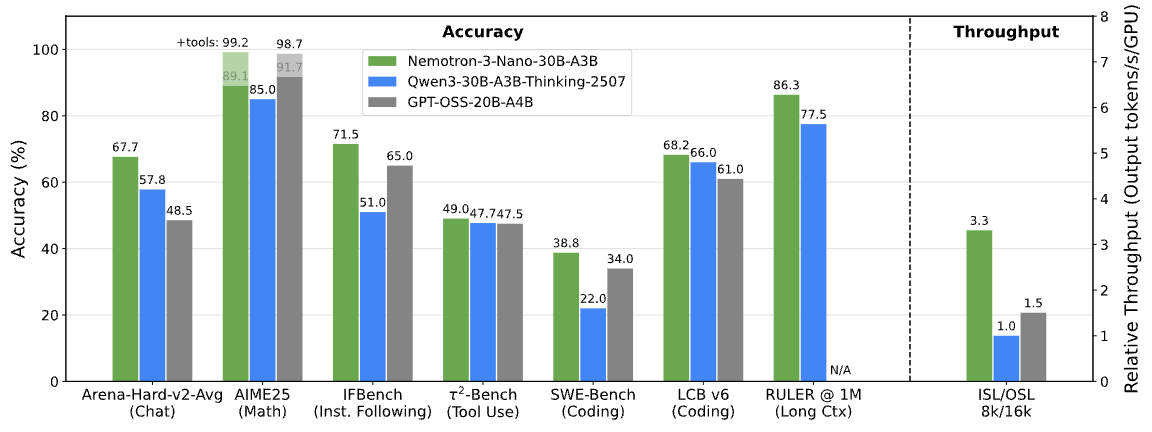

🚀 Nemotron-3 Nano is out.

An open-weight reasoning model built for efficiency, long-context intelligence, and agentic workflows.

Faster inference. Stronger reasoning. Built to scale.

📄 Technical report ↓

research.nvidia.com/labs/nemotron/…

#Nemotron3 #AI #OpenModels #NVIDIA

Wonmin Byeon retweeted

We just released Nemotron 3 Nano 30B-A3B. It is fast, accurate, and truly open. Check it out!

Nemotron 3 Super (~4x larger) and Ultra (~16x larger) are currently being trained and will be released in 2026!

Blog post and technical report: nvda.ws/48RusVt

NVIDIA’s hybrid model just got bigger, faster, and stronger.

Bryan Catanzaro @ctnzr

Today, @NVIDIA is launching the open Nemotron 3 model family, starting with Nano (30B-3A), which pushes the frontier of accuracy and inference efficiency with a novel hybrid SSM Mixture of Experts architecture. Super and Ultra are coming in the next few months.
