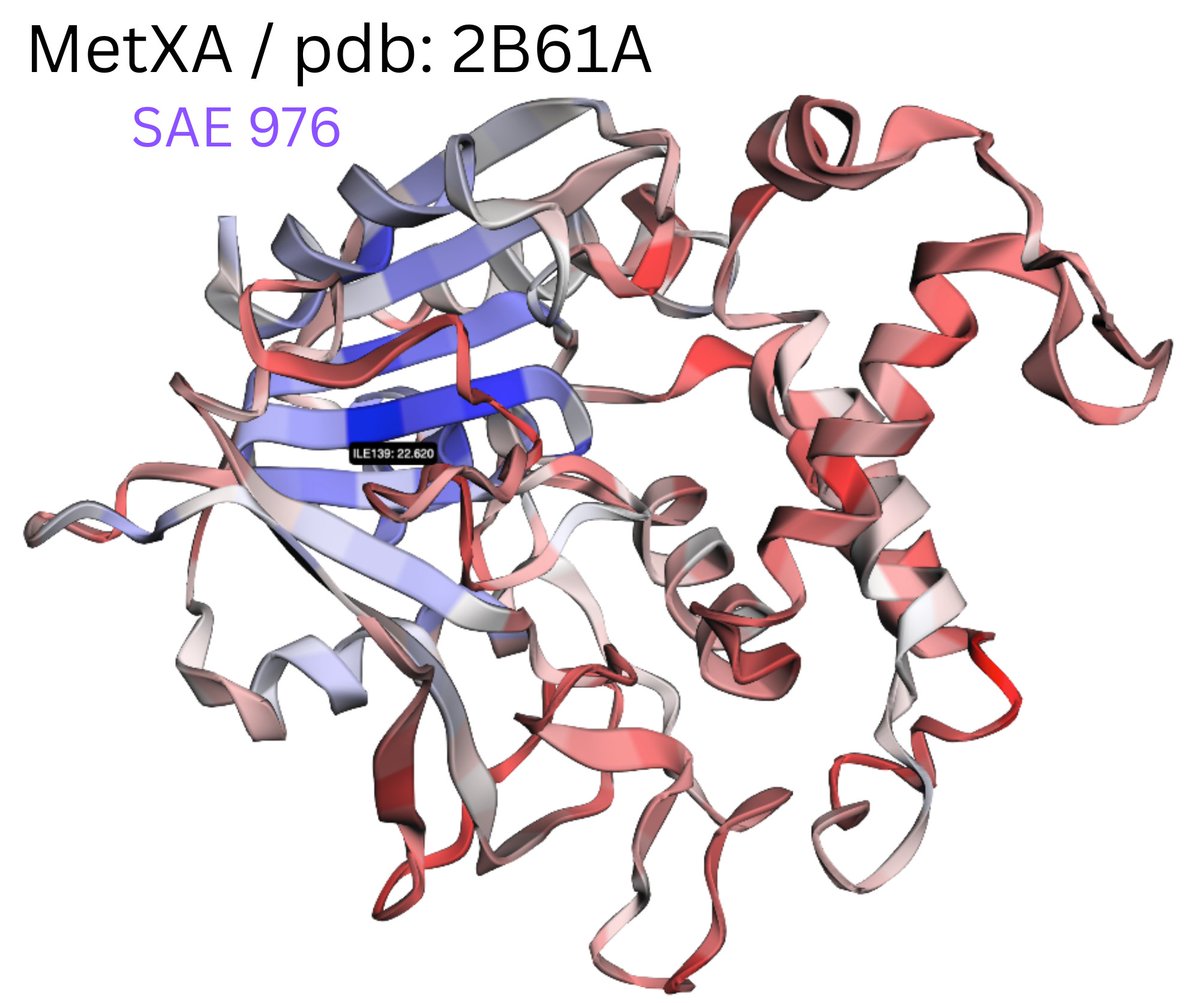

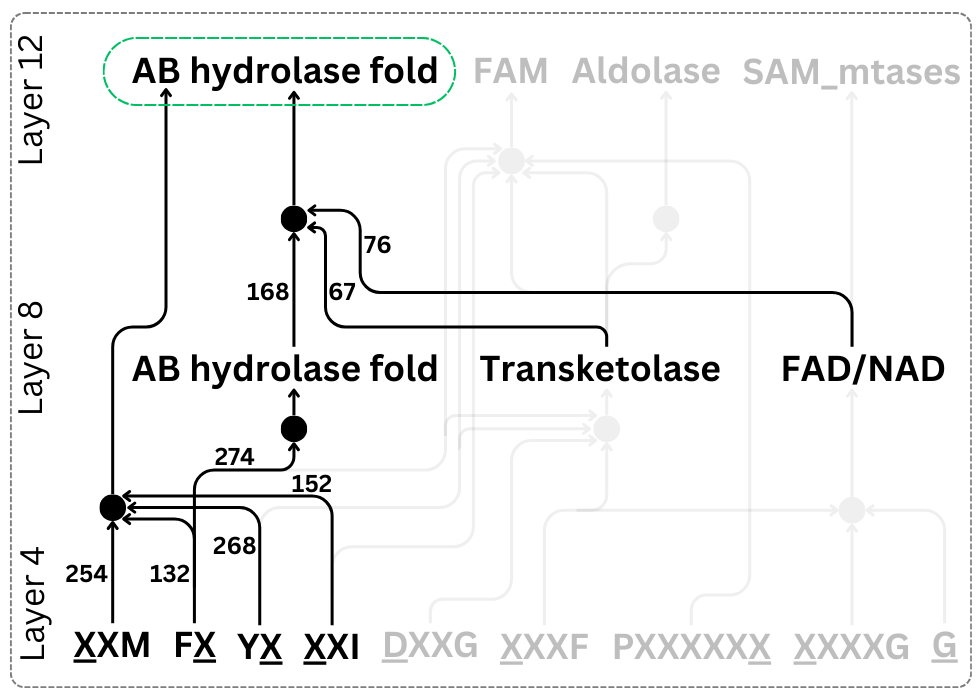

Can we understand neural networks from their weights? Often, the answer is no. An MLP's activation function obscures the relationship between inputs, outputs, and weights. In our new ICLR'25 paper, we study "bilinear MLPs", a special type of MLP that's performant AND interpretable! 🧵