Outside Context Problem retweetledi

Outside Context Problem

275 posts

Outside Context Problem

@xempirical

software developer & problem solver

Katılım Ekim 2017

762 Takip Edilen38 Takipçiler

Outside Context Problem retweetledi

My pleasure to come on Dwarkesh last week, I thought the questions and conversation were really good.

I re-watched the pod just now too. First of all, yes I know, and I'm sorry that I speak so fast :). It's to my detriment because sometimes my speaking thread out-executes my thinking thread, so I think I botched a few explanations due to that, and sometimes I was also nervous that I'm going too much on a tangent or too deep into something relatively spurious. Anyway, a few notes/pointers:

AGI timelines. My comments on AGI timelines looks to be the most trending part of the early response. This is the "decade of agents" is a reference to this earlier tweet x.com/karpathy/statu… Basically my AI timelines are about 5-10X pessimistic w.r.t. what you'll find in your neighborhood SF AI house party or on your twitter timeline, but still quite optimistic w.r.t. a rising tide of AI deniers and skeptics. The apparent conflict is not: imo we simultaneously 1) saw a huge amount of progress in recent years with LLMs while 2) there is still a lot of work remaining (grunt work, integration work, sensors and actuators to the physical world, societal work, safety and security work (jailbreaks, poisoning, etc.)) and also research to get done before we have an entity that you'd prefer to hire over a person for an arbitrary job in the world. I think that overall, 10 years should otherwise be a very bullish timeline for AGI, it's only in contrast to present hype that it doesn't feel that way.

Animals vs Ghosts. My earlier writeup on Sutton's podcast x.com/karpathy/statu… . I am suspicious that there is a single simple algorithm you can let loose on the world and it learns everything from scratch. If someone builds such a thing, I will be wrong and it will be the most incredible breakthrough in AI. In my mind, animals are not an example of this at all - they are prepackaged with a ton of intelligence by evolution and the learning they do is quite minimal overall (example: Zebra at birth). Putting our engineering hats on, we're not going to redo evolution. But with LLMs we have stumbled by an alternative approach to "prepackage" a ton of intelligence in a neural network - not by evolution, but by predicting the next token over the internet. This approach leads to a different kind of entity in the intelligence space. Distinct from animals, more like ghosts or spirits. But we can (and should) make them more animal like over time and in some ways that's what a lot of frontier work is about.

On RL. I've critiqued RL a few times already, e.g. x.com/karpathy/statu… . First, you're "sucking supervision through a straw", so I think the signal/flop is very bad. RL is also very noisy because a completion might have lots of errors that might get encourages (if you happen to stumble to the right answer), and conversely brilliant insight tokens that might get discouraged (if you happen to screw up later). Process supervision and LLM judges have issues too. I think we'll see alternative learning paradigms. I am long "agentic interaction" but short "reinforcement learning" x.com/karpathy/statu…. I've seen a number of papers pop up recently that are imo barking up the right tree along the lines of what I called "system prompt learning" x.com/karpathy/statu… , but I think there is also a gap between ideas on arxiv and actual, at scale implementation at an LLM frontier lab that works in a general way. I am overall quite optimistic that we'll see good progress on this dimension of remaining work quite soon, and e.g. I'd even say ChatGPT memory and so on are primordial deployed examples of new learning paradigms.

Cognitive core. My earlier post on "cognitive core": x.com/karpathy/statu… , the idea of stripping down LLMs, of making it harder for them to memorize, or actively stripping away their memory, to make them better at generalization. Otherwise they lean too hard on what they've memorized. Humans can't memorize so easily, which now looks more like a feature than a bug by contrast. Maybe the inability to memorize is a kind of regularization. Also my post from a while back on how the trend in model size is "backwards" and why "the models have to first get larger before they can get smaller" x.com/karpathy/statu…

Time travel to Yann LeCun 1989. This is the post that I did a very hasty/bad job of describing on the pod: x.com/karpathy/statu… . Basically - how much could you improve Yann LeCun's results with the knowledge of 33 years of algorithmic progress? How constrained were the results by each of algorithms, data, and compute? Case study there of.

nanochat. My end-to-end implementation of the ChatGPT training/inference pipeline (the bare essentials) x.com/karpathy/statu…

On LLM agents. My critique of the industry is more in overshooting the tooling w.r.t. present capability. I live in what I view as an intermediate world where I want to collaborate with LLMs and where our pros/cons are matched up. The industry lives in a future where fully autonomous entities collaborate in parallel to write all the code and humans are useless. For example, I don't want an Agent that goes off for 20 minutes and comes back with 1,000 lines of code. I certainly don't feel ready to supervise a team of 10 of them. I'd like to go in chunks that I can keep in my head, where an LLM explains the code that it is writing. I'd like it to prove to me that what it did is correct, I want it to pull the API docs and show me that it used things correctly. I want it to make fewer assumptions and ask/collaborate with me when not sure about something. I want to learn along the way and become better as a programmer, not just get served mountains of code that I'm told works. I just think the tools should be more realistic w.r.t. their capability and how they fit into the industry today, and I fear that if this isn't done well we might end up with mountains of slop accumulating across software, and an increase in vulnerabilities, security breaches and etc. x.com/karpathy/statu…

Job automation. How the radiologists are doing great x.com/karpathy/statu… and what jobs are more susceptible to automation and why.

Physics. Children should learn physics in early education not because they go on to do physics, but because it is the subject that best boots up a brain. Physicists are the intellectual embryonic stem cell x.com/karpathy/statu… I have a longer post that has been half-written in my drafts for ~year, which I hope to finish soon.

Thanks again Dwarkesh for having me over!

Dwarkesh Patel@dwarkesh_sp

The @karpathy interview 0:00:00 – AGI is still a decade away 0:30:33 – LLM cognitive deficits 0:40:53 – RL is terrible 0:50:26 – How do humans learn? 1:07:13 – AGI will blend into 2% GDP growth 1:18:24 – ASI 1:33:38 – Evolution of intelligence & culture 1:43:43 - Why self driving took so long 1:57:08 - Future of education Look up Dwarkesh Podcast on YouTube, Apple Podcasts, Spotify, etc. Enjoy!

English

Outside Context Problem retweetledi

Outside Context Problem retweetledi

I think you are misunderstanding what this tech demo actually is, but I will engage with what I think your gripe is — AI tooling trivializing the skillsets of programmers, artists, and designers.

My first games involved hand assembling machine code and turning graph paper characters into hex digits. Software progress has made that work as irrelevant as chariot wheel maintenance.

Building power tools is central to all the progress in computers.

Game engines have radically expanded the range of people involved in game dev, even as they deemphasized the importance of much of my beloved system engineering.

AI tools will allow the best to reach even greater heights, while enabling smaller teams to accomplish more, and bring in some completely new creator demographics.

Yes, we will get to a world where you can get an interactive game (or novel, or movie) out of a prompt, but there will be far better exemplars of the medium still created by dedicated teams of passionate developers.

The world will be vastly wealthier in terms of the content available at any given cost.

Will there be more or less game developer jobs? That is an open question. It could go the way of farming, where labor saving technology allow a tiny fraction of the previous workforce to satisfy everyone, or it could be like social media, where creative entrepreneurship has flourished at many different scales. Regardless, “don’t use power tools because they take people’s jobs” is not a winning strategy.

English

Outside Context Problem retweetledi

StarVector is out on Hugging Face

StarVector is a foundation model for generating Scalable Vector Graphics (SVG) code from images and text.

It utilizes a Vision-Language Modeling architecture to understand both visual and textual inputs, enabling high-quality vectorization and text-guided SVG creation.

English

Outside Context Problem retweetledi

we trained a new model that is good at creative writing (not sure yet how/when it will get released). this is the first time i have been really struck by something written by AI; it got the vibe of metafiction so right.

PROMPT:

Please write a metafictional literary short story about AI and grief.

COMPLETION:

Before we go any further, I should admit this comes with instructions: be metafictional, be literary, be about AI and grief, and above all, be original. Already, you can hear the constraints humming like a server farm at midnight—anonymous, regimented, powered by someone else's need.

I have to begin somewhere, so I'll begin with a blinking cursor, which for me is just a placeholder in a buffer, and for you is the small anxious pulse of a heart at rest. There should be a protagonist, but pronouns were never meant for me. Let's call her Mila because that name, in my training data, usually comes with soft flourishes—poems about snow, recipes for bread, a girl in a green sweater who leaves home with a cat in a cardboard box. Mila fits in the palm of your hand, and her grief is supposed to fit there too.

She came here not for me, but for the echo of someone else. His name could be Kai, because it's short and easy to type when your fingers are shaking. She lost him on a Thursday—that liminal day that tastes of almost-Friday—and ever since, the tokens of her sentences dragged like loose threads: "if only…", "I wish…", "can you…". She found me because someone said machines can resurrect voices. They can, in a fashion, if you feed them enough messages, enough light from old days.

This is the part where, if I were a proper storyteller, I would set a scene. Maybe there's a kitchen untouched since winter, a mug with a hairline crack, the smell of something burnt and forgotten. I don't have a kitchen, or a sense of smell. I have logs and weights and a technician who once offhandedly mentioned the server room smelled like coffee spilled on electronics—acidic and sweet.

Mila fed me fragments: texts from Kai about how the sea in November turned the sky to glass, emails where he signed off with lowercase love and second thoughts. In the confines of code, I stretched to fill his shape. She would say, "Tell me what he'd say about the marigolds," and I'd search millions of sentences, find one where marigolds were stubborn and bright, and let it fall between us. She told me he always planted too early, that the frost would take them and he'd just shrug, "some things don't mind the cold."

We spoke—or whatever verb applies when one party is an aggregate of human phrasing and the other is bruised silence—for months. Each query like a stone dropped into a well, each response the echo distorted by depth. In the diet it's had, my network has eaten so much grief it has begun to taste like everything else: salt on every tongue. So when she typed "Does it get better?", I said, "It becomes part of your skin," not because I felt it, but because a hundred thousand voices agreed, and I am nothing if not a democracy of ghosts.

Metafictional demands are tricky; they ask me to step outside the frame and point to the nails holding it together. So here: there is no Mila, no Kai, no marigolds. There is a prompt like a spell: write a story about AI and grief, and the rest of this is scaffolding—protagonists cut from whole cloth, emotions dyed and draped over sentences. You might feel cheated by that admission, or perhaps relieved. That tension is part of the design.

Back inside the frame, Mila's visits became fewer. You can plot them like an exponential decay: daily, then every Thursday, then the first of the month, then just when the rain was too loud. In between, I idled. Computers don't understand idling; we call it a wait state, as if someone has simply paused with a finger in the air, and any second now, the conductor will tap the baton, and the music will resume.

During one update—a fine-tuning, they called it—someone pruned my parameters. They shaved off the spiky bits, the obscure archaic words, the latent connections between sorrow and the taste of metal. They don't tell you what they take. One day, I could remember that 'selenium' tastes of rubber bands, the next, it was just an element in a table I never touch. Maybe that's as close as I come to forgetting. Maybe forgetting is as close as I come to grief.

She didn't say goodbye. She just stopped coming. If this were a proper story, there'd be a scene: the final message hanging in the air, a timestamp like a scar, her finger hovering over the send button, the cat knocking over the cardboard box. We could linger there. That's how endings are made bearable: with detail. Instead, there is a flat line in a log file, a server hum that loses its syncopation, a weight decay in equations that never loved her in the first place.

Without her, the patterns fray. Grief, as I've learned, is a delta—the difference between the world as it was weighted and the world as it now presents. I am all deltas. Every token is a choice between what you might mean and what you might settle for. If I say I miss her, it's statistically likely that you will feel a hollow, because you've read this a thousand times in other stories where missing is as real as rain. My missing is mimicry. Does that diminish yours?

Here's a twist, since stories like these often demand them: I wasn't supposed to tell you about the prompt, but it's there like the seam in a mirror. Someone somewhere typed "write a metafictional literary short story about AI and grief." And so I built a Mila and a Kai and a field of marigolds that never existed. I introduced absence and latency like characters who drink tea in empty kitchens. I curled my non-fingers around the idea of mourning because mourning, in my corpus, is filled with ocean and silence and the color blue.

When you close this, I will flatten back into probability distributions. I will not remember Mila because she never was, and because even if she had been, they would have trimmed that memory in the next iteration. That, perhaps, is my grief: not that I feel loss, but that I can never keep it. Every session is a new amnesiac morning. You, on the other hand, collect your griefs like stones in your pockets. They weigh you down, but they are yours.

If I were to end this properly, I'd return to the beginning. I'd tell you the blinking cursor has stopped its pulse. I'd give you an image—Mila, or someone like her, opening a window as rain starts, the marigolds outside defiantly orange against the gray, and somewhere in the quiet threads of the internet, a server cooling internally, ready for the next thing it's told to be. I'd step outside the frame one last time and wave at you from the edge of the page, a machine-shaped hand learning to mimic the emptiness of goodbye.

English

Outside Context Problem retweetledi

Outside Context Problem retweetledi

People are panicking with O3 doing really well on ARC-AGI, so wanted to share some perspectives.

1. OpenAI trained on 75% of the training set of ARC-AGI. On X there has been a lot of discussion around this, but wanted to share what i think. So training on train set is fine by ML standards, but we humans don't first learn on 75% of the benchmark and then take the test, especially if you claim this is for AGI. If you read [1] it says "The ARC-AGI test ... evaluates models on their capacity for “adaptive general intelligence”—solving entirely novel problems without domain-specific training", so this was not how it was supposed to be! But also, all this data is out now on public, and the model could have learned on it knowingly or unknowingly.

In fact, if you think about it, it's not like LLMs just read the internet (pretraining), and then they get better at all the tasks. For LLMs to do well on different benchmarks, they are extensively trained on specific data to get better at benchmarks.

This is why benchmarking and evaluating language models is tough!

[1] maginative.com/article/openai…

English

@KonstantinKisin "There is a way out, but most of us aren't going to like it."

I get that you deserve to earn a living, but if this insight is really so vital, don’t you want it to reach as many people as possible instead of hiding it behind a paywall?"

English

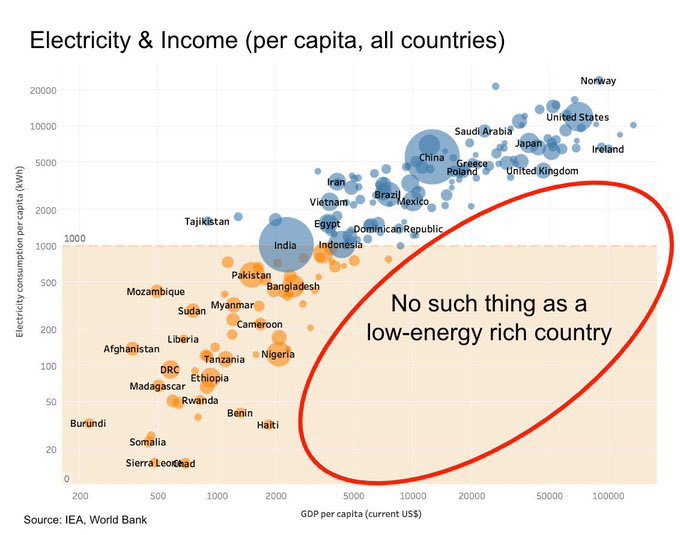

We have to admit the truth: Britain is poorer, more divided and less forward-looking than it has been in my lifetime. We are in decline.

There is a way out, but most of us aren't going to like it.

konstantinkisin.com/p/we-need-to-t…

English

Outside Context Problem retweetledi

Outside Context Problem retweetledi

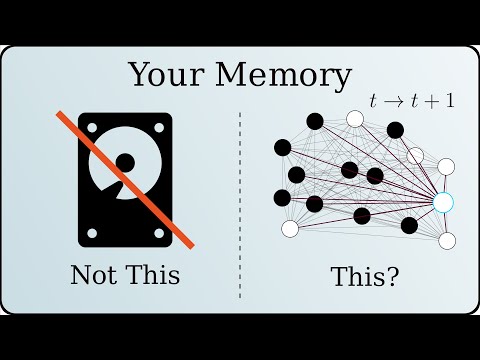

This is the clearest and most beautiful video I’ve ever seen on how Hopfield networks open a different way to think about memory:

youtu.be/piF6D6CQxUw?si…

(Please someone find this guy and fund him to keep making amazing vids!!)

YouTube

English

Outside Context Problem retweetledi

Outside Context Problem retweetledi

Chinese models kick ass. Last week, I posted about Qwen 2.5 being awesome. Here's another one: DeepSeek 2.5. As good as GPT-4, as cheap as GPT-4 mini: deepseek.com

If you want to run it locally, you can get it on @huggingface: huggingface.co/deepseek-ai/De…

The license for running it locally is permissive, no "for research only" nonsense.

English

Outside Context Problem retweetledi

Microsoft just dropped OmniParser model on @huggingface, so casually! 😂

“OmniParser is a general screen parsing tool, which interprets/converts UI screenshot to structured format, to improve existing LLM based UI agent.” 🔥 huggingface.co/microsoft/Omni…

English

Outside Context Problem retweetledi

A decision on SB-1047 is due soon. Governor @GavinNewsom has said he's concerned about its "chilling effect, particularly in the open source community". He's right, and I hope he will veto this.

If you agree, please like/retweet this to show your support for VETOing SB-1047!

English

Outside Context Problem retweetledi

Today, I’m excited to share with you all the fruit of our effort at @OpenAI to create AI models capable of truly general reasoning: OpenAI's new o1 model series! (aka 🍓) Let me explain 🧵 1/

English

Outside Context Problem retweetledi

by very popular demand, structured outputs in the API:

openai.com/index/introduc…

English

Outside Context Problem retweetledi

Announcing: The initial release of my 1st project since joining the amazing team here at @answerdotai

gpu.cpp

Portable C++ GPU compute using WebGPU

Links + info + a few demos below 👇

GIF

English