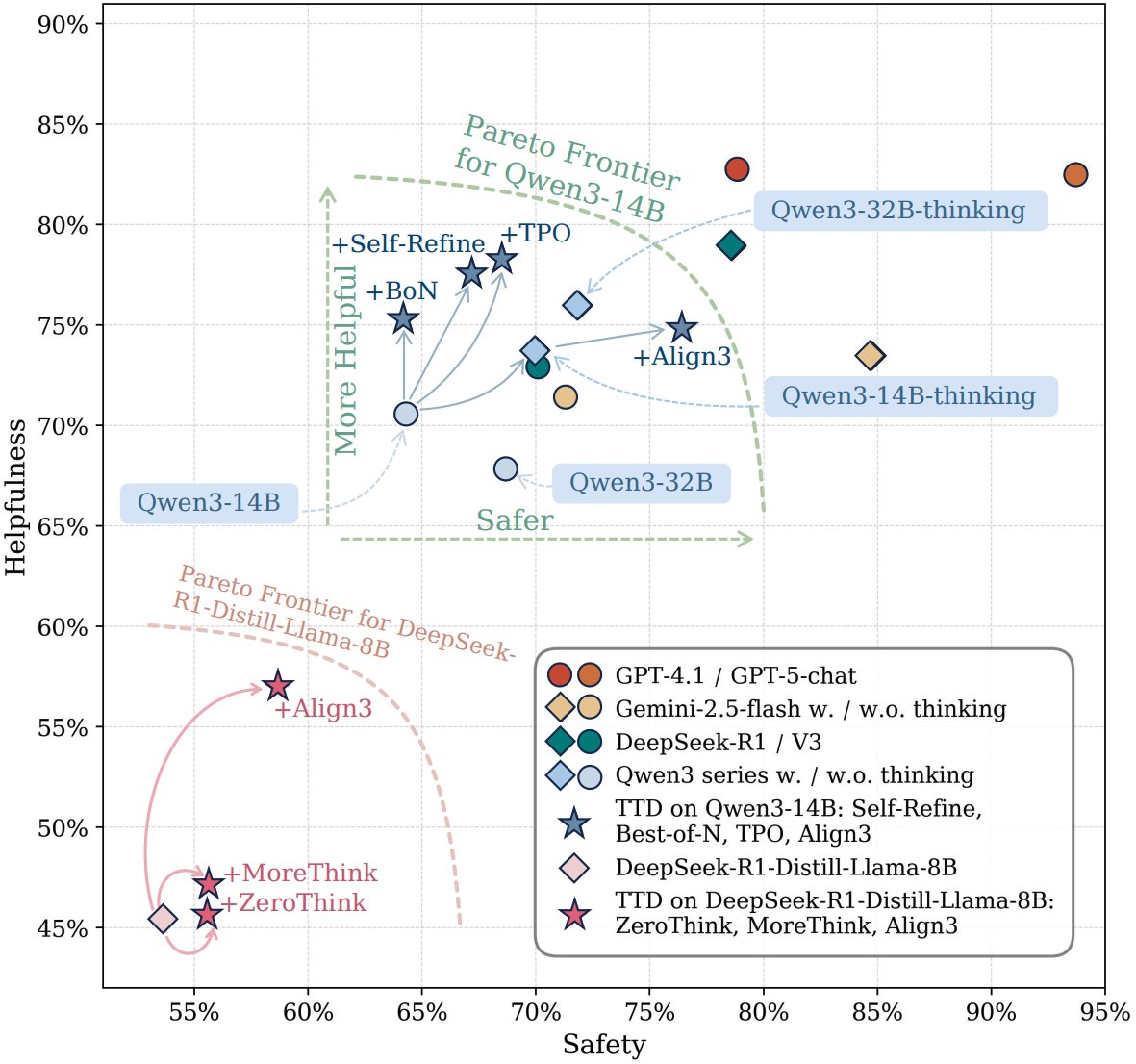

The idea of rotating attention by 90° is sooooooo cool (credits to @Jianlin_S's insights), and, surprisingly, it works. We (w/ the amazing @nathan) are so excited about this. We've been working on the paper for months and couldn't stop. Go give it a try: it's a drop-in replacement for standard residual connections, which date back to 2015. Really like the figs btw :-)