Yihao Feng


New paper: You can make ChatGPT 2x as creative with one sentence.

Ever notice how LLMs all sound the same? They know 100+ jokes but only ever tell one. Every blog intro: "In today's digital landscape..."

We figured out why – and how to unlock the rest 🔓

Copy-paste prompt: 🧵

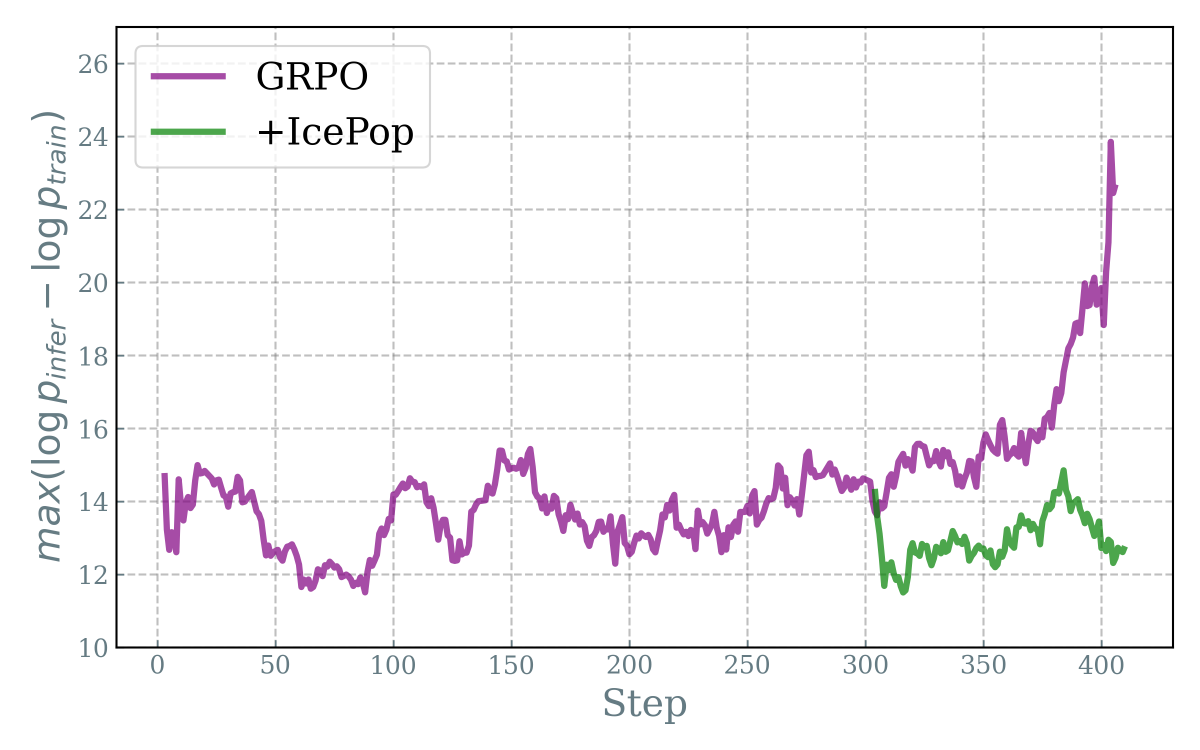

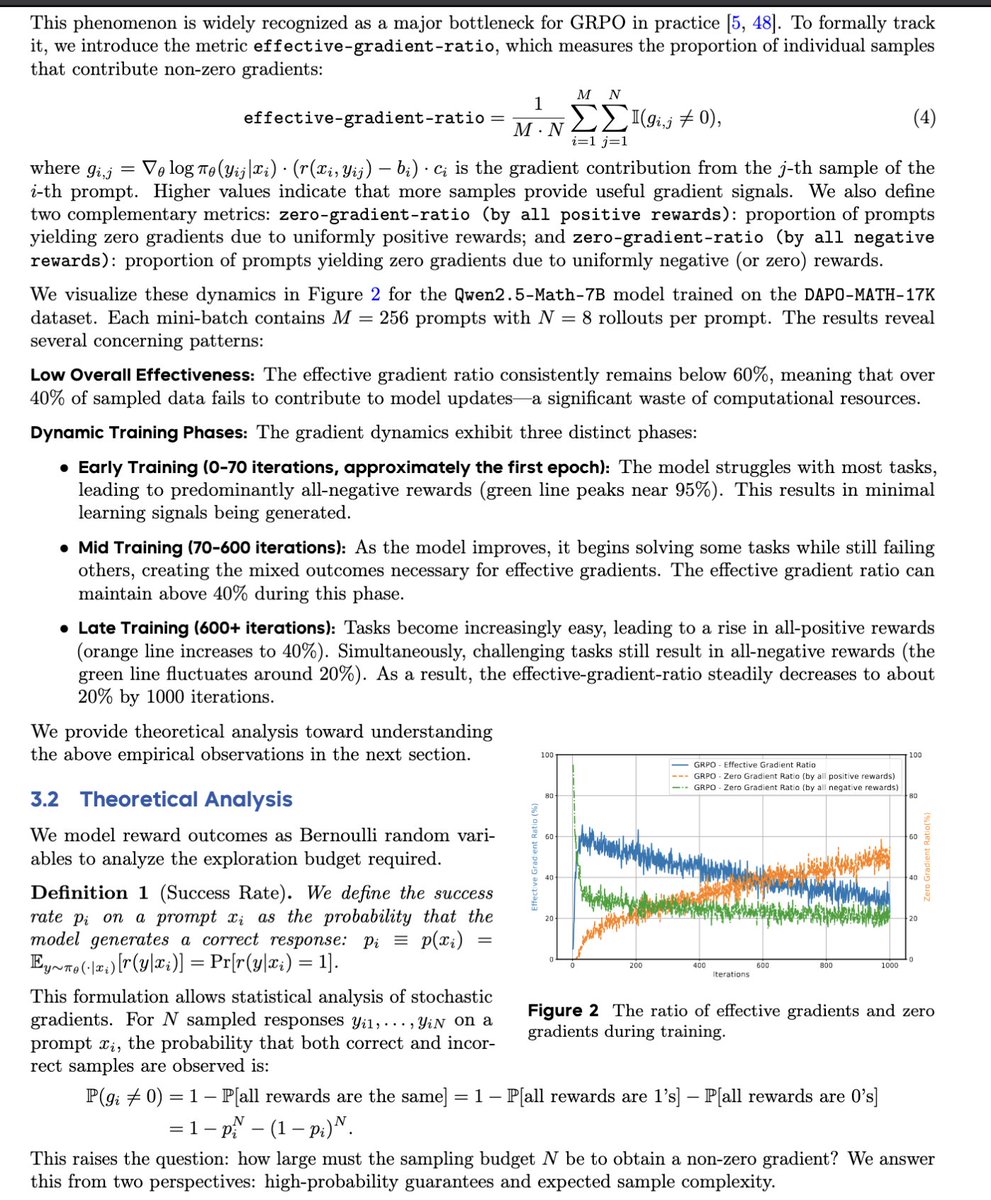

🚀 Excited to share our work at Bytedance Seed! Knapsack RL: Unlocking Exploration of LLMs via Budget Allocation 🎒

Exploration in LLM training is crucial but expensive. Uniform rollout allocation is wasteful:

- ✅ Easy tasks → always solved → 0 gradient
- ❌ Hard tasks → always failed → 0 gradient

💡 Our idea: treat exploration as a knapsack problem → allocate rollouts where they matter most.

✨ Results:

- 🔼 +20–40% more non-zero gradients
- 🧮 Up to 93 rollouts for hard tasks (w/o extra compute)
- 📈 +2–4 avg points, +9 peak gains on math benchmarks
- 💰 ~2× cheaper than uniform allocation

📄 Paper: huggingface.co/papers/2509.25…
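
To make the budget-allocation idea concrete, here is a rough sketch, not the paper's actual algorithm. It assumes binary rewards, independent rollouts, and a known per-task success rate `p`, so a task with `n` rollouts yields a non-zero gradient with probability `1 - p**n - (1 - p)**n` (the rollouts are neither all solved nor all failed). The function names `nonzero_gradient_prob` and `allocate_rollouts` are made up for illustration, and the greedy rule below is a simple stand-in for a real knapsack solver.

```python
import heapq


def nonzero_gradient_prob(p: float, n: int) -> float:
    """Chance that n independent rollouts are neither all solved nor all failed.

    Assumption (not from the post): binary reward, success rate p per rollout.
    """
    if n < 2:
        return 0.0
    return 1.0 - p ** n - (1.0 - p) ** n


def allocate_rollouts(success_rates, total_budget):
    """Greedily split a fixed rollout budget across tasks.

    Every task starts with one rollout; each remaining rollout goes to the
    task whose non-zero-gradient probability would increase the most.
    """
    n_tasks = len(success_rates)
    if n_tasks == 0:
        return []
    if total_budget < n_tasks:
        raise ValueError("budget must cover at least one rollout per task")

    def marginal_gain(p, n):
        # Gain from giving a task with n rollouts one more rollout.
        return nonzero_gradient_prob(p, n + 1) - nonzero_gradient_prob(p, n)

    allocation = [1] * n_tasks
    # heapq is a min-heap, so gains are stored negated.
    heap = [(-marginal_gain(p, 1), i) for i, p in enumerate(success_rates)]
    heapq.heapify(heap)

    for _ in range(total_budget - n_tasks):
        _, i = heapq.heappop(heap)
        allocation[i] += 1
        heapq.heappush(heap, (-marginal_gain(success_rates[i], allocation[i]), i))
    return allocation


# Hypothetical success rates: a medium task, a hard task, an always-solved task.
rates = [0.5, 0.05, 1.0]
print(allocate_rollouts(rates, total_budget=12))
```

Under these assumptions the always-solved task keeps only its single starting rollout, and the hard task ends up with more rollouts than the medium one, all within the same total budget that uniform allocation would spend.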