Fackler

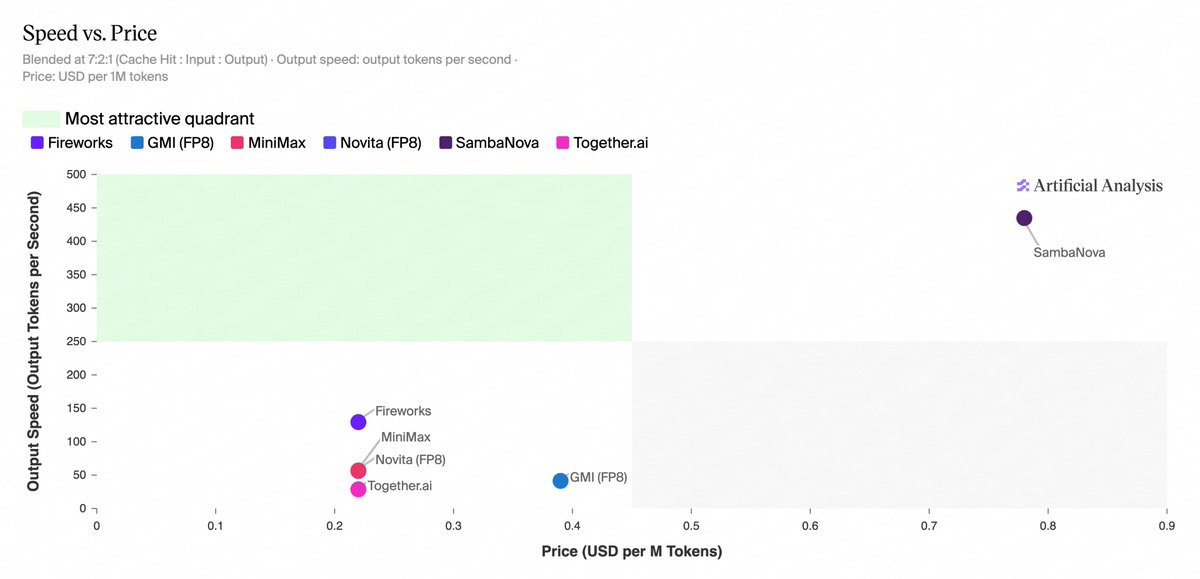

MiniMax-M2.7 is now available across six inference providers on Artificial Analysis, with significant differentiation in speed and price

@SambaNovaAI leads on speed at 435 output tokens/s, >3x faster than any other provider. @FireworksAI_HQ, @novita_labs, @togethercompute, and @GMI_cloud have all matched @MiniMax_AI's first-party API pricing, while SambaNova is 2x higher.

Key takeaways:

➤ Fireworks and SambaNova are on the Pareto frontier for Speed vs. Price. At 127 output tokens/s and ~$0.22 per 1M tokens blended, Fireworks is ~2.2x faster than MiniMax's first-party API at the same blended price, whereas SambaNova delivers 435 output tokens/s but at ~2-3.5x the blended price of the other providers (depending on cache usage)

➤ SambaNova is the fastest provider at 435 output tokens/s, ~3.4x the next fastest provider (Fireworks at 127 output tokens/s). The remaining providers run substantially slower: MiniMax’s first-party API at 57 output tokens/s, Novita at 54, GMI at 41, and Together AI at 29

➤ Cache discounts vary across providers. Fireworks, MiniMax, Novita, and Together AI offer 80% cache hit discounts, while GMI and SambaNova do not offer a discount. For cache-heavy workloads, this can materially increase the relative pricing for GMI and SambaNova

➤ Optimal provider choice depends on workload. SambaNova may be more suited to latency-sensitive deployments, albeit at a higher cost, while Fireworks may be more suitable for high-volume workloads that are not as latency-sensitive
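A rough sketch of the arithmetic behind these takeaways, in Python. The output speeds are the figures quoted above. The list price, cache hit rate, and helper names (`speed_ratio`, `effective_input_price`) are illustrative assumptions, not numbers or methodology from Artificial Analysis.

```python
# Output speeds in tokens/s, as quoted in the post above.
SPEEDS = {
    "SambaNova": 435,
    "Fireworks": 127,
    "MiniMax": 57,
    "Novita": 54,
    "GMI": 41,
    "Together AI": 29,
}


def speed_ratio(faster: str, slower: str) -> float:
    """How many times faster one provider's output speed is than another's."""
    return SPEEDS[faster] / SPEEDS[slower]


def effective_input_price(list_price: float, cache_hit_rate: float,
                          cache_discount: float) -> float:
    """Average input price when a fraction of input tokens hit the cache.

    list_price: undiscounted price per 1M input tokens (hypothetical unit).
    cache_hit_rate: fraction of input tokens served from cache, 0..1.
    cache_discount: discount on cached tokens, e.g. 0.8 for 80%, 0.0 for none.
    """
    if not (0.0 <= cache_hit_rate <= 1.0 and 0.0 <= cache_discount <= 1.0):
        raise ValueError("cache_hit_rate and cache_discount must be in [0, 1]")
    return list_price * (1.0 - cache_hit_rate * cache_discount)


if __name__ == "__main__":
    print(f"SambaNova vs Fireworks: {speed_ratio('SambaNova', 'Fireworks'):.1f}x")
    print(f"Fireworks vs MiniMax:   {speed_ratio('Fireworks', 'MiniMax'):.1f}x")

    # Assumed workload: identical list price of 1.0, half the input cached.
    with_discount = effective_input_price(1.0, 0.5, 0.8)
    without_discount = effective_input_price(1.0, 0.5, 0.0)
    print(f"Relative input cost, no discount vs 80% discount: "
          f"{without_discount / with_discount:.2f}x")
```

With the same list price, a provider without a cache discount gets relatively more expensive as the cache hit rate rises, which is the effect the third takeaway describes.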

@mattrickard @QuixiAI it's in the system prompt so this is the proper way to handle it. If you add negation in CLAUDE.md, you're telling the model to do it, and not do it at the same time.

@QuixiAI
{
  "attribution": {
    "commit": ""
  }
}
in settings.json
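If the settings file already holds other keys, hand-editing risks clobbering them. A minimal Python sketch that merges the key in place is below. The function name `set_commit_attribution` and the idea of passing the file path explicitly are assumptions for illustration, since the reply does not say where settings.json lives.

```python
import json
from pathlib import Path


def set_commit_attribution(settings_path: Path, value: str = "") -> dict:
    """Set attribution.commit in a JSON settings file, keeping other keys.

    settings_path is supplied by the caller (hypothetical parameter).
    Returns the settings dict that was written.
    """
    settings = {}
    if settings_path.exists():
        text = settings_path.read_text(encoding="utf-8")
        if text.strip():
            settings = json.loads(text)

    # Reuse an existing "attribution" object if there is one.
    attribution = settings.get("attribution")
    if not isinstance(attribution, dict):
        attribution = {}
        settings["attribution"] = attribution
    attribution["commit"] = value

    settings_path.write_text(json.dumps(settings, indent=2) + "\n",
                             encoding="utf-8")
    return settings
```

Calling `set_commit_attribution(Path("settings.json"))` on a copy of the file first is a cheap way to check the result before touching the real one.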

@yacineMTB at best, this is tickling the gradient. "you know everything about everything" is a surefire way to instill overconfidence and potentially lead to horrible outcomes.

@itsjustmarky it's not. it runs when prompts are issued. Gentle usage? How is it gentle? It's all load. Who runs models or mines at lower power settings?

@z_malloc And you think AI isn't sustained load? GPUs rarely fail and mining is the most gentle usage of all.

Why I personally don't recommend the RTX 3090 for Local LLMs:

While it offers fantastic inference performance for the price, there are a few major drawbacks.

> The biggest issue: Durability. If you buy a used 3090, there's a high risk it was heavily abused for crypto mining.

> The power consumption is absolutely massive.

> Extreme heat. It's one of the hottest GPUs out there and will literally heat up your entire room.

> Used prices have gone up so much that they are almost back to the original launch price.

Make sure to carefully weigh the pros and cons before making a purchase!

@jun_song This is nonsense. Crypto mining does not abuse the card and is in fact better usage than AI. With AI you are trying to squeeze out as much performance as possible, whereas with mining you want as much efficiency at as low a power draw as possible, so you run the cards cooler.

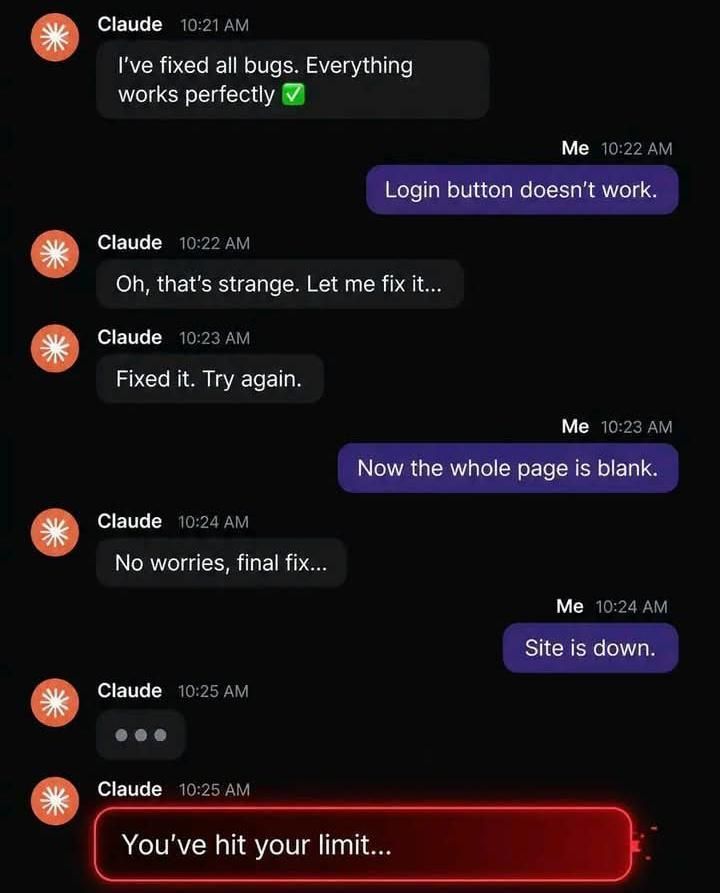

@techbromemes "Look for possible exploits in my code" "You have been reported to the authorities" "what?" "429 error"

@CodeWithAmann GLM 5.1 is a fantastic model, but the provider and customer service at Z.ai are laughably bad. Capability-wise, it feels like V4, K2.6 and GLM are all really close.

@BusDownBonnor Multiple conflicting directives in the system prompt create untold levels of havoc. Ant lost the plot completely.

John Ternus, Apple's SVP of Hardware Engineering, explains why Apple deliberately made the iPhone harder to repair, and why the math says it was worth it:

In a conversation with MKBHD, John frames the design challenge by asking you to imagine two extremes:

"Sometimes for me I find it helpful to kind of think about the book ends. Like if you imagine a product that never fails, right? That just doesn't fail. And on the other end, a product that maybe isn't very reliable but is super easy to repair."

His position is clear:

"Product that never fails is obviously better for the customer. It's better for the environment."

When pushed on whether infinite repairability and infinite durability have to be mutually exclusive, John acknowledges they aren't always, but explains why the tension is real, using the iPhone battery as an example.

Batteries wear out. If you want to extend the life of the product, they need to be replaced.

But in the early days of iPhone, one of the most common failures wasn't the battery, it was water:

"Where you drop it in the pool or you, you know, spill your drink on it and the unit fails. And so, we've been making strides over all those years to get better and better and better in terms of minimizing those failures."

That work led Apple to an IP68 rating, the point where customers fish their phones out of lakes after two weeks and find them still working.

But there was a cost to achieving that level of durability:

"To get the product there, you've got to design a lot of seals, adhesives, other things to make it perform that way, which makes it a little harder to do that battery repair."

That's the deliberate tradeoff. Apple chose tighter seals and stronger adhesives, knowing it would make battery replacement more difficult, because the reliability gains were worth it.

John argues the math backs this decision:

"It's objectively better for the customer to have that reliability and it's ultimately better for the planet because the failure rates since we got to that point have just dropped. It's plummeted, right? The number of repairs that need to happen and every time you're doing a repair, you're bringing in new materials to replace whatever broke."

His conclusion reframes the entire repairability debate:

"You can actually do the math and figure out there's a threshold at which if I can make it this durable, then it's better to have it a little bit harder to repair because it's going to net out."

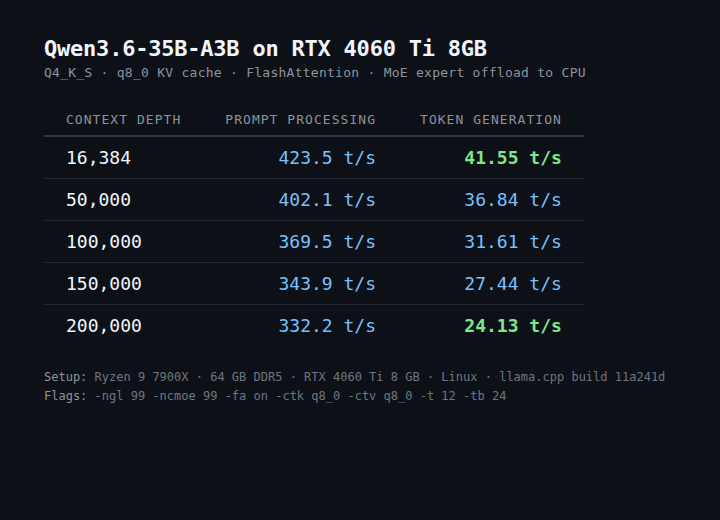

@above_spec is generation speed really the key metric? It could run at 5 t/s if the generations are good enough, that'd be fine. But they don't seem strong enough just yet.

GLM 5 could be better, but it is unreliable asf!

Kimi K2.6 could be better, but it has trouble understanding and following instructions; it overdoes things and destroys my repo.

DeepSeek is the winner here because it understands and follows instructions, and the 1M context is a plus for me. The only problem with DeepSeek: it doesn't have vision.

Kasif @md_kasif_uddin

Be honest, which is the best open source AI Model?
