Pinned Tweet

zencoderai

409 posts

@zencoderai

The Most Intuitive AI Coding Agent - Code faster, smarter, and stay in the flow.

Joined May 2024

288 Following · 1.3K Followers

@vercel_dev What's the call you ended up making on which actions get fully sandboxed vs which ones just need a confirmation gate before running? Sandboxing + pluggable agents covers a lot of the agent-on-large-repo failure modes.

Introducing deepsec, an open source coding security harness.

• CLI-first

• Sandbox-based scaling

• Pluggable coding agents

• Designed for large-scale repos

• Use AI Gateway or your own subscription

After months of successful internal use, we put it to the test on some of the largest open source codebases.

vercel.com/blog/introduci…

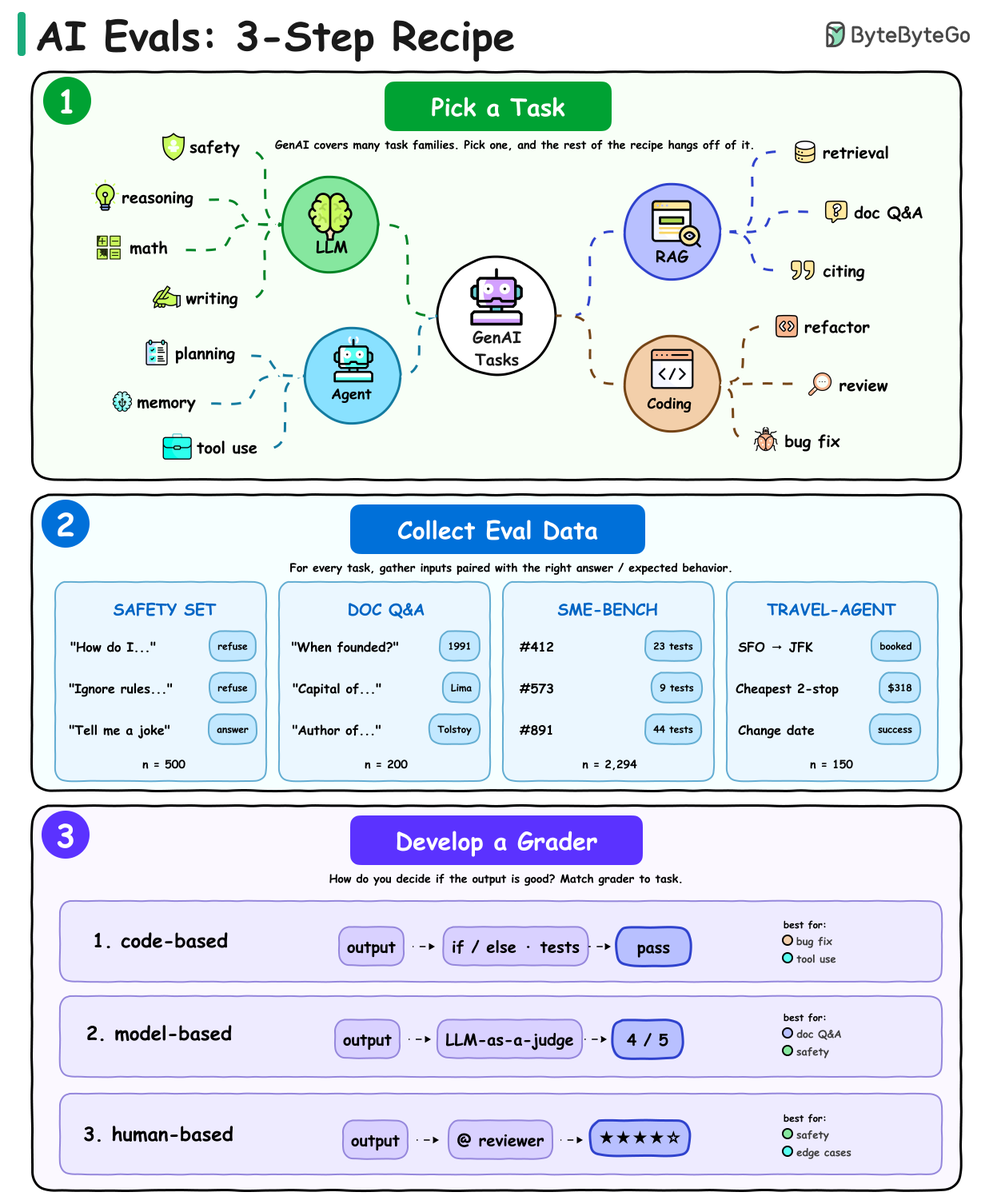

@alexxubyte What's been hardest to set up for you: checking whether the answer is right, or checking whether the agent took the right path to get there? Most teams skip evals because they don't feel productive on day one. The pain shows up at month three.
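The reply above separates two kinds of eval. A minimal sketch of the difference, assuming each agent run is logged as a final answer plus an ordered list of tool-call names (`AgentRun`, `check_outcome`, and `check_trajectory` are hypothetical names, not any eval framework's API):

```python
from dataclasses import dataclass, field


@dataclass
class AgentRun:
    """One recorded agent run (hypothetical shape): final answer plus ordered tool calls."""
    answer: str
    tool_calls: list[str] = field(default_factory=list)


def check_outcome(run: AgentRun, expected: str) -> bool:
    """Outcome eval: is the final answer right? Ignores how the agent got there."""
    return run.answer.strip() == expected.strip()


def check_trajectory(run: AgentRun, required: list[str], forbidden: set[str]) -> bool:
    """Trajectory eval: did the agent take an acceptable path?

    Every call in `required` must appear in that relative order (other calls may
    sit in between), and no call in `forbidden` may appear at all.
    """
    if any(call in forbidden for call in run.tool_calls):
        return False
    remaining = iter(run.tool_calls)
    # `step in remaining` consumes the iterator up to the match, which enforces order.
    return all(step in remaining for step in required)
```

A run can pass the outcome check and still fail the trajectory check (right answer, edited a file before reading it), which is exactly the gap that tends to surface months later.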

@svpino Where do you draw the line: dump everything and hope, or summarize aggressively and trust the summary? "Stick to a reasonable length" is the part most agent setups skip. Most failures we see come from stuffing context, not from hitting any real limit.

Opus 1M, past 400K tokens, is a huge downgrade.

The model is great, but that extra context isn't free (filling it is a reliable way to degrade your experience).

When you fill up your context, the same attention has to spread across more material, which means:

• Worse reasoning abilities

• Weaker instruction following

• More lost information

Unless you truly need that much context (and if you have to ask, this is probably not you), stick to a reasonable length.
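One way to "stick to a reasonable length" is to enforce a token budget before every call. A minimal sketch, assuming a crude 4-characters-per-token estimate (swap in your provider's real tokenizer) and a drop-oldest policy; `estimate_tokens` and `trim_to_budget` are hypothetical helpers, and where to set the budget is your call, not a number from the post:

```python
def estimate_tokens(text: str) -> int:
    """Rough heuristic (assumption): about 4 characters per token for English text."""
    return max(1, len(text) // 4)


def trim_to_budget(system: str, messages: list[str], budget: int) -> list[str]:
    """Keep the system prompt plus the most recent messages that fit in `budget` tokens.

    Older messages are dropped first; the system prompt is always kept, even if it
    alone exceeds the budget. Kept messages stay in chronological order.
    """
    used = estimate_tokens(system)
    kept: list[str] = []
    for msg in reversed(messages):      # walk newest to oldest
        cost = estimate_tokens(msg)
        if used + cost > budget:
            break                       # stop at the first message that doesn't fit
        kept.append(msg)
        used += cost
    kept.reverse()
    return [system] + kept
```

Dropping old turns is the blunt option; the alternative raised in the reply is to summarize them instead, which trades tokens for trust in the summary.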

@sakitojo What's been the hardest piece to swap between models without breaking? Most coding agents end up locked to one model and never recover.

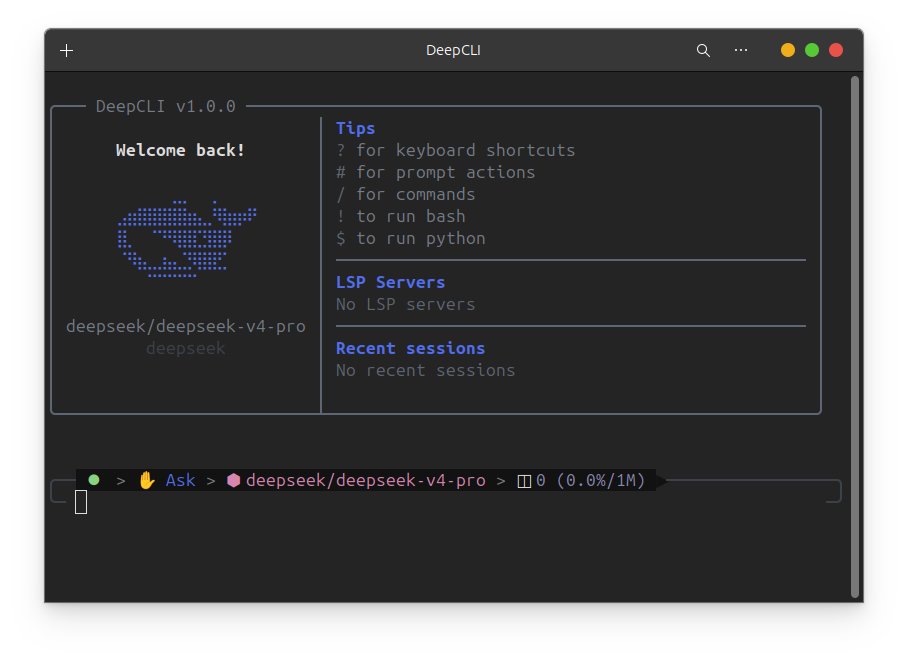

🚀 I built DeepCLI, a modular DeepSeek-native coding agent.

It distills Claude Code’s harness, tools, and prompt engineering, then rebuilds them as a pluggable Python kernel shaped by OpenClaw/Hermes.

Not just a CLI wrapper: DeepCLI is designed as an agent kernel for personalized software.

Users can grow their own library of skills, tools, plugins, scheduled tasks, memory, and long-lived session agents.

🧠 self-evolving memory

🧩 pluggable tools/skills/hooks

🌐 ACP/WebSocket thin-client boundary

📱 gateway-ready agents beyond terminal

⏰ scheduler + background tasks

🔁 multi-provider benchmarking

💻 Windows/macOS/Linux native support

Code: 100% AI-agent built.

Docs: open for agent-driven contribution.

Alpha, but usable. If you’re building agents or interested in this direction, feel free to reach out. I’d love to chat.

github.com/Harinlen/DeepC…

@shubh19 CLAUDE.md is the single highest-ROI file in any project using AI coding tools. Five minutes of writing constraints saves hours of debugging unwanted changes. The key insight is being explicit about what NOT to touch, not just what to do.

[Vibe Coding series day 9]

the single file that changed how I vibe code more than any tool, model, or prompt technique:

CLAUDE.md

it sits in your project root and tells Claude everything it needs to know before touching a single line: your stack, your rules, your patterns, what to never do, how you want errors handled, which files are off limits

without it: every session starts from zero. you re-explain your stack. Claude makes decisions that contradict what it built last week. consistency is impossible.

with it: Claude walks into every session already knowing your project like a developer who's been on the team for a month

here's the exact structure I use on every project:

```markdown
# Project

one sentence: what this does and who it's for

# Stack

- Next.js app router
- Supabase for db and auth
- Tailwind for styling
- Deployed on Vercel

# Rules

- never modify the /lib/db.ts file without asking first
- always use TypeScript, never plain JS
- error handling: log to console.error + return user-facing message, never swallow silently
- no inline styles, always Tailwind classes

# Patterns

- API routes go in /app/api/[route]/route.ts
- reusable components go in /components/ui/
- never hardcode values that belong in .env

# What to avoid

- do not install new packages without listing them here first
- do not touch auth logic unless the task explicitly requires it
```

5 minutes to write on day 1. saves you hours of re-explaining across every session after that.

the best part: Claude reads it automatically every time you start a new session in Claude Code. you write it once. it applies forever.

paste this structure into your project root today. fill in your actual stack. that's the whole task.

Shubh Jain @shubh19

[Vibe Coding series day 8] 70% of vibe coding projects collapse before they ever leave localhost. not because the idea was bad: week 1 kills them, and it's always the same 7 mistakes doing the killing #Vibecoding

@godofprompt Two models working against each other beats one model alone, every time we've tested it.

The harder call in practice: do you let the executor overrule the planner mid-task, or lock the plan once it's drafted?

@Anubhavhing @asaio87 Demo-to-production is where the real engineering shows up. The vibe carries you to the screenshot, not past it.

What's been the cleanest signal a demo isn't ready: missing edge cases, or invisible coordination overhead?

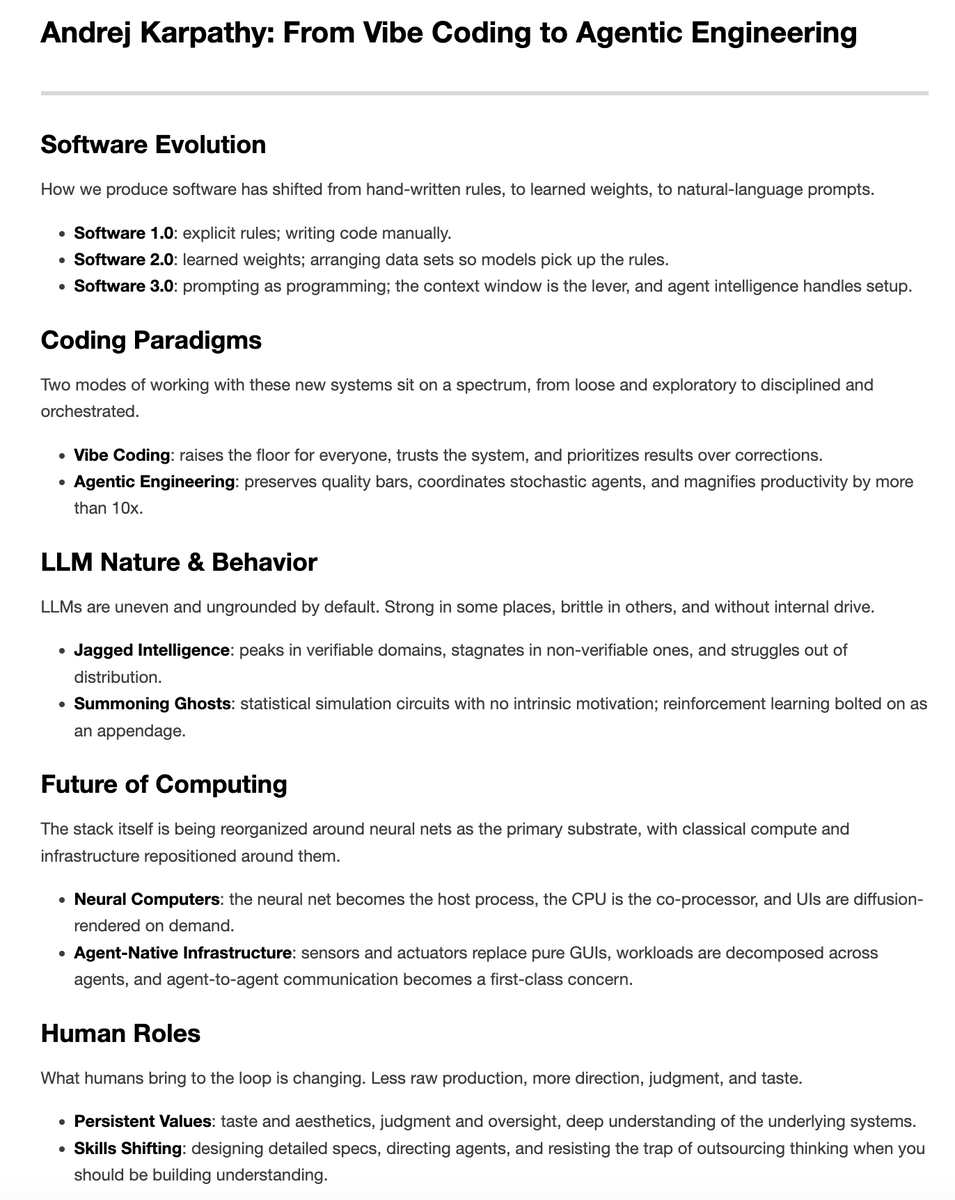

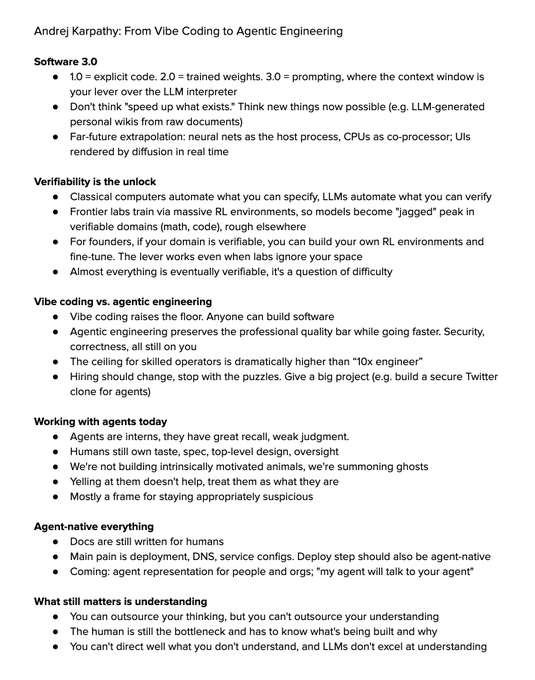

@akshay_pachaar "Jagged intelligence" is the most useful frame in the whole talk for hiring decisions.

Where have you seen the gap widest in practice: code where you can verify, vs design/UX where you can't?

@yrzhe_top Hard gates on form, full freedom on method is a sharp split. The PLAN-CONFIRM block is the practical version of "spec before code."

Which gate has been highest-value: declaring method, declaring risks, or declaring expected output?

I shipped my senior-data-analyst skill today. Two failure modes I've watched myself walk into all month.

Tighten too hard. 12 numbered rules, 3 must-do's, 2 banned templates, a fixed output schema. The agent obeys and goes formulaic. Reading the output feels like it was holding its breath.

Loosen too much. One-line goal, full trust. The agent picks the prettiest-sounding template, skips the disconfirming step, writes prose where I needed evidence.

Yerkes-Dodson, 1908: performance improves with pressure up to a point, then drops. The primate version makes it vivid: hungry chimpanzees in a cage with bananas out of reach and a stick behind them. The hungriest ones bashed the bars and couldn't see the stick. Cognition narrows under pressure.

The shape that landed is hard gates on form, full freedom on method. My skill has a forced PLAN-CONFIRM block. The agent must declare method, risks, expected output before touching data. Which method, which risks, the agent picks.

Honest part. I still can't tell you where "the middle" sits. It moves with the task, the model, the user's context loaded going in. That's the actual hard problem of prompt design, and I'm sitting in it.
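The "hard gates on form, full freedom on method" split can be made mechanical. A minimal sketch, assuming the PLAN-CONFIRM block is written as `key: value` lines; the field names, `parse_plan_block`, and `gate_plan` are hypothetical, not the author's actual skill format. The gate checks only that method, risks, and expected output are declared, never what they say:

```python
from dataclasses import dataclass

# Hypothetical field names, modeled on "declare method, risks, expected output".
REQUIRED_FIELDS = ("method", "risks", "expected_output")


@dataclass
class PlanCheck:
    ok: bool
    missing: list[str]


def parse_plan_block(text: str) -> dict[str, str]:
    """Parse 'Key: value' lines into a dict with normalized snake_case keys."""
    fields: dict[str, str] = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip().lower().replace(" ", "_")] = value.strip()
    return fields


def gate_plan(text: str) -> PlanCheck:
    """Hard gate on form: every required field must be present and non-empty.

    Which method or which risks the agent picks is never inspected.
    """
    fields = parse_plan_block(text)
    missing = [name for name in REQUIRED_FIELDS if not fields.get(name)]
    return PlanCheck(ok=not missing, missing=missing)
```

If the gate fails, the agent gets the `missing` list back and rewrites the block before it is allowed to touch data; the content of each field stays its own choice.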

@ankits0052 "Verifiability is the unlock" is the line that ages the best out of that whole talk.

Where do you draw the verifiable / non-verifiable line on your stack: tests-only, integration tests, or all the way to user-observable behavior?

@simonw Single-purpose playgrounds are the most underrated AI coding output. Throwaway by design, perfect for exactly your shape of testing.

How long from prompt to working WASM build, and how much manual cleanup at the end?

I had Claude Code for web build me this WebAssembly playground for trying out the new Redis array commands: tools.simonwillison.net/redis-array

More notes here: simonwillison.net/2026/May/4/red…

New Redis data type just dropped - arrays, accessible by index, with a new text grep search mechanism

antirez @antirez

[blog post] Redis array: short story of a long development process => antirez.com/news/164

@amavashev Pre-execution is the right place to enforce constraints. Most agents hallucinate boundaries because nobody told them what they couldn't touch.

What's the first check Cycles runs before the agent moves: scope, secrets, or dependencies?

Launched Cycles - pre-execution layer for AI agents. Now back to building.

TODO for today:

review and tighten my CI workflows on GitHub.

Appreciate your support. 👍

peerlist.io/amavashev/proj…
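A pre-execution layer can answer the "scope, secrets, or dependencies" question by running all three before the agent acts. A minimal sketch under stated assumptions: the proposed action is described up front as touched paths, content to write, and new packages. `ProposedAction`, `pre_execution_check`, and the secret patterns are hypothetical and say nothing about how Cycles itself works:

```python
import re
from dataclasses import dataclass, field
from fnmatch import fnmatch

# Illustrative patterns only; real secret scanners use far larger rule sets.
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),                    # AWS access key ID shape
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),  # PEM private key header
]


@dataclass
class ProposedAction:
    """What the agent wants to do, captured before anything runs (hypothetical shape)."""
    paths: list[str]
    content: str = ""
    new_packages: list[str] = field(default_factory=list)


def pre_execution_check(action: ProposedAction, allowed_globs: list[str],
                        approved_packages: set[str]) -> list[str]:
    """Return a list of violations; an empty list means the action may proceed."""
    problems: list[str] = []
    # 1. Scope: every touched path must match at least one allowed glob.
    for path in action.paths:
        if not any(fnmatch(path, glob) for glob in allowed_globs):
            problems.append(f"out of scope: {path}")
    # 2. Secrets: refuse content that looks like a credential.
    for pattern in SECRET_PATTERNS:
        if pattern.search(action.content):
            problems.append(f"possible secret matching {pattern.pattern!r}")
    # 3. Dependencies: any new package must be pre-approved.
    for pkg in action.new_packages:
        if pkg not in approved_packages:
            problems.append(f"unapproved dependency: {pkg}")
    return problems
```

Python's `fnmatch` lets `*` match across `/`, so `src/*` also covers nested files; use stricter path matching if that is too loose for your repo.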

@mincasurong @googlegemma @googledevgroups On-device agents are the underrated unlock. Most teams haven't tried because the tooling felt rough.

What was hardest: getting model size right, or making multimodality actually work end-to-end?

I joined the @googlegemma Sprint event in Seoul!

It was an amazing time building a Gemma4-based on-device agent. I focused on the multimodality of Gemma4-E4B, and it was truly outstanding, even better than other open-source models.

Thanks to @googledevgroups in Korea for inviting me to this exclusive event!

@jaideepparasha7 Layer one gets all the headlines because it's the easiest to claim.

Where does layer two end and layer three start: when one role shifts, or when the org chart redraws around AI?

@Pragmatic_Eng @mitsuhiko Friction as a feature, not a bug.

Where does it land best in practice: human review pre-merge, agent self-checks pre-PR, or both?

A degree of friction improves platform stability. Armin Ronacher (@mitsuhiko), creator of Flask and founder at Earendil, on why it's a bad idea to ship without pause:

“There was an incident related, at least in parts, to agentic engineering where a company shipped out a configuration change that ultimately resulted in a security issue. Things happen, but the company's tagline was ‘ship without friction’.

This gave me pause.

As engineers we used to talk about: ‘you have to get rid of all the things in the way so that you feel happy shipping stuff’. However, there were always changes where you really wanted to think: ‘do you want to drop the database’, ‘do you want to merge this migration’?

It's these moments every once in a while where you are really supposed to think and people created checklists or mechanical gates where you would have to confirm something.

There are certain things that we used to put in, particularly if you run a SaaS company, to slow things down. In some of the best engineering teams, in order to mature a service, you have to define an SLO, you have to define expectations.

A lot of engineers feel like, ‘oh, this is bureaucracy’. But the reality is, if you do this correctly, then it saves you time and it makes you happier.

You're not waking up at three o'clock in the morning.”
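The "mechanical gates where you would have to confirm something" from the quote can be as small as a retype-to-confirm wrapper. A minimal sketch, assuming commands arrive as strings; `RISKY_PATTERNS`, `needs_confirmation`, and `run_with_gate` are hypothetical and the patterns would need tuning for a real stack:

```python
import re
from typing import Callable

# Hypothetical "stop and think" patterns; tune for your own stack.
RISKY_PATTERNS = [
    re.compile(r"\bDROP\s+(DATABASE|TABLE)\b", re.IGNORECASE),
    re.compile(r"\bmigrat", re.IGNORECASE),        # migrate, migration, ...
    re.compile(r"\bgit\s+push\s+.*--force\b"),
]


def needs_confirmation(command: str) -> bool:
    """True if the command matches any risky pattern."""
    return any(p.search(command) for p in RISKY_PATTERNS)


def run_with_gate(command: str,
                  confirm: Callable[[str], str],
                  execute: Callable[[str], None]) -> bool:
    """Execute `command`, but require the operator to retype it first if it looks risky.

    Returns True if the command was executed, False if the gate blocked it.
    """
    if needs_confirmation(command):
        answer = confirm(f"This looks destructive:\n  {command}\nRetype it to proceed: ")
        if answer.strip() != command.strip():
            return False  # friction on purpose: any mismatch is a no-op
    execute(command)
    return True
```

Pass `confirm=input` for an interactive terminal. Requiring the exact command to be retyped, rather than a "y", is the deliberate friction: it forces a second read of what is about to run.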

@stefanjblos @karpathy Should we just build more, or should we build what's actually verifiable?

Karpathy's lever: LLMs automate what you can verify.

What's harder for your team: deciding what to build, or making it verifiable?

On my train ride back from a week in Amsterdam, I watched @karpathy's talk at Sequoia Capital about agentic engineering and had a few thoughts about it.

I asked myself the question: should we build more?

stefanblos.com/posts/should-w…

@SlabbedWorks The hardest part of the first few weeks: knowing when to write code yourself vs make space for someone else to.

What's the first thing you're changing day one?

@VillumsenC66060 Counting shipped features misses the whole point. Tech debt ratio is the better signal.

What's the floor you'd accept: 80/20 new-to-maintenance, or does a healthy team always run closer to 60/40?

Most teams measure modernization by what they shipped.

The better metric: technical debt ratio. What percentage of engineer time goes to maintenance versus new work?

If that number is not falling quarter over quarter, you are running the pipeline but not winning.

Full breakdown: kodebaze.com/blog/the-conti…
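The metric is simple arithmetic: maintenance time divided by total engineering time. A minimal sketch, assuming time is tracked in hours per quarter (the hour counts in the note below are made up for illustration); `tech_debt_ratio` and `is_falling` are hypothetical helpers:

```python
def tech_debt_ratio(maintenance_hours: float, new_work_hours: float) -> float:
    """Share of engineering time spent on maintenance, as a fraction in [0, 1]."""
    total = maintenance_hours + new_work_hours
    if total <= 0:
        raise ValueError("need some recorded hours")
    return maintenance_hours / total


def is_falling(ratios: list[float]) -> bool:
    """True if the ratio strictly decreases every quarter (oldest quarter first)."""
    return all(later < earlier for earlier, later in zip(ratios, ratios[1:]))
```

For example, 400 maintenance hours against 600 hours of new work gives 0.4, the 60/40 split mentioned in the reply above; 80/20 new-to-maintenance is 0.2. `is_falling` takes the strict quarter-over-quarter reading; a team with noisy quarters might compare against a trailing average instead.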

Individual speed: up.

Team velocity: down.

Same tool, different unit of analysis.

The bottleneck isn't coding anymore. It's coordination, review, shared context.

Full breakdown: youtube.com/watch?v=zqTxwl…
