zero

1.5K posts

@zerocaulk

Builder, ex-K Street, wannabe polyglot 🇺🇸/🇫🇷🇱🇻🇷🇺

T3 Code is unusable!

what's your perfect tech stack to start a project today?

Wait, Codex uptime is 100%? is this real?

My dear front-end developers (and anyone who’s interested in the future of interfaces): I have crawled through the depths of hell to bring you what should be, for the foreseeable future, one of the more important foundational pieces of UI engineering (if not in implementation, then certainly in concept): a fast, accurate, and comprehensive userland text measurement algorithm in pure TypeScript, usable for laying out entire web pages without CSS, bypassing DOM measurements and reflow.

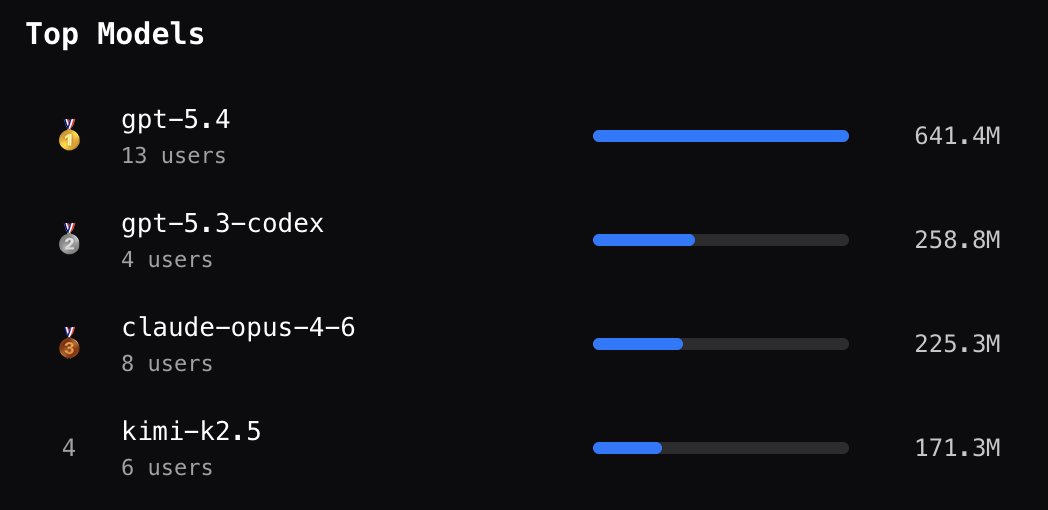

running the inaugural budok-ai tournament. eight models seeded with the artificial analysis intelligence index. each round is best of three. models get to pick what character they want to play (cowboy, ninja, wizard, robot, mutant), and can change characters in subsequent games in response to the results.

Today we're introducing TRIBE v2 (Trimodal Brain Encoder), a foundation model trained to predict how the human brain responds to almost any sight or sound. Building on our Algonauts 2025 award-winning architecture, TRIBE v2 draws on 500+ hours of fMRI recordings from 700+ people to create a digital twin of neural activity and enable zero-shot predictions for new subjects, languages, and tasks. Try the demo and learn more here: go.meta.me/tribe2