Amphora

@Am4ora

Market-structure diagnostics. Liquidity velocity & regime-shift detection in the post-ETF epoch. The Ensemble Forecast Engine is analysis, not financial advice.

This is directed to @HackingDave. We are a small research team, and our account has been shadow-banned on and off on X for some time now. This is our assessment:

1. There is nothing “wrong” with Opus 4.7; it is an excellent model.
2. However, you cannot deploy sub-agents with Haiku-level intelligence onto a codebase and expect them to bring back an Opus 4.7-quality assessment. That is unreasonable. Opus 4.7 will deploy such sub-agents without informing you. This is major issue one.
3. When you give those “Haiku”-level summaries to Opus, the effect is compounded by the “adaptive thinking” option given to Opus. It is well known that LLMs have a bias to finish a process.

- Haiku reads a codebase (at Haiku level)
- Haiku creates a report (essentially a Haiku-level summary)

That report is all Opus 4.7 sees, and it is all Opus has to go on when making its “adaptive thinking” determination. In this setup Opus will almost always choose an adopted response to conclude the process because Haiku is incapable of the analytical depth of the main agent.

Conclusion: We surmise this is why Opus 4.7 performs better when you tell it outright what a successful implementation looks like. You are telling the main agent what the expected finished product is (no lesser sub-agents & no adaptive thinking along the way).

We hope this information enters the broader mainstream, as we are a small team and a small account. This is our assessment.

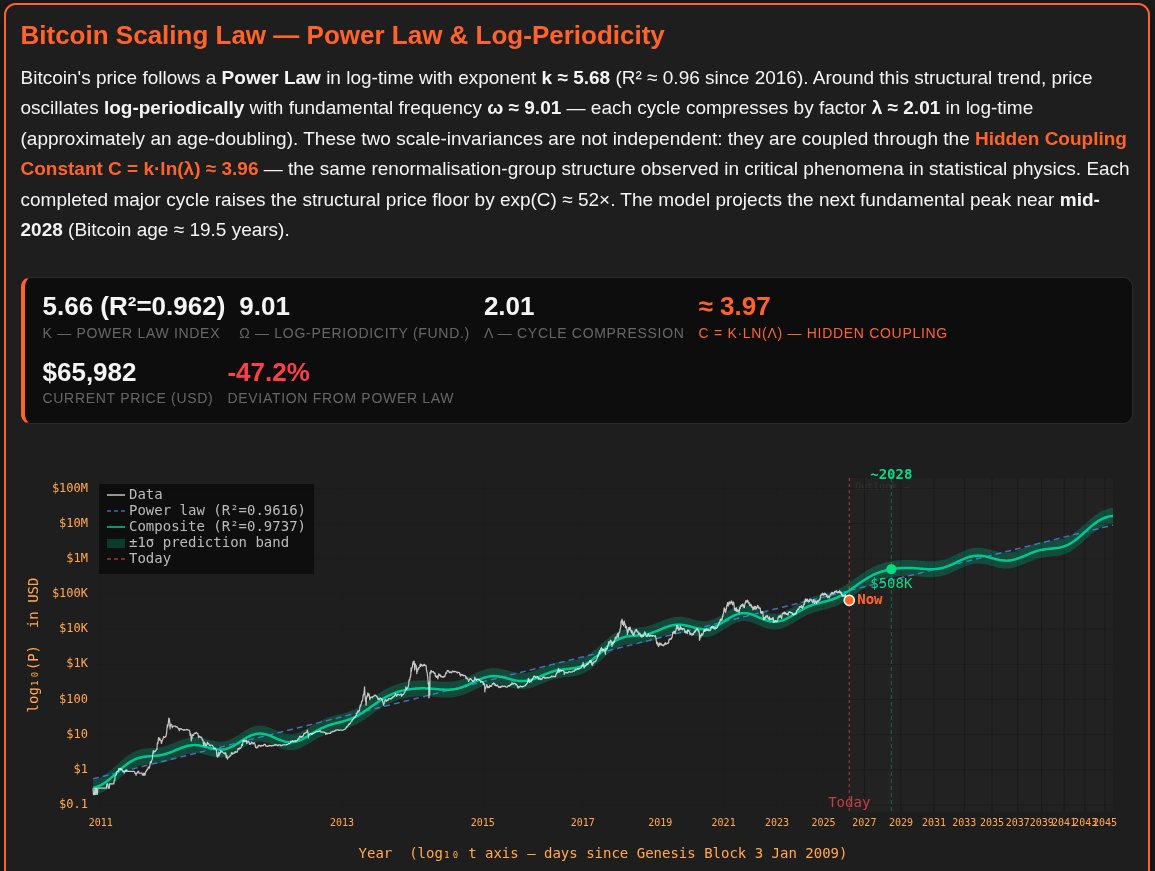

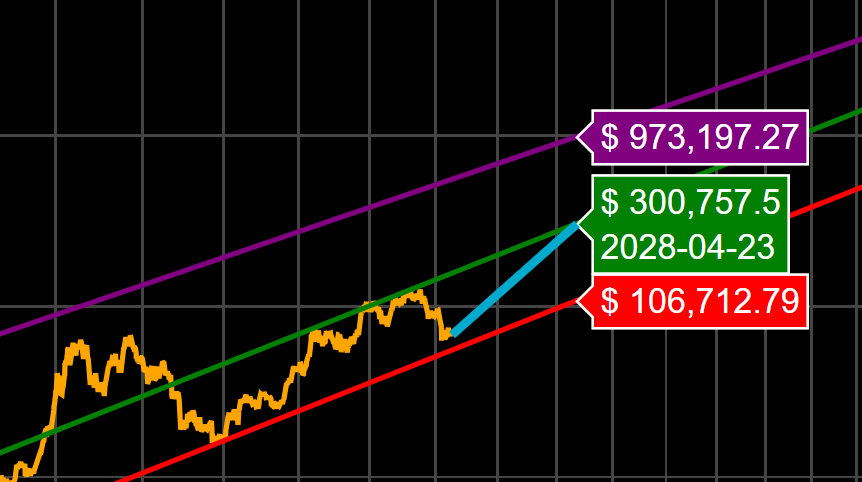

@AdamBLiv I really like this statistical approach. Thanks to @Giovann35084111 and @moneyordebt, we now have an even more detailed insight into Bitcoin’s rhythm. #study #Bitcoin