Let’s look at how frontier agents (even Opus 4.6, GPT-5.2, and Gemini 3.1 Pro!) struggle to solve tasks in EnterpriseBench. We released this RL environment last week to measure agentic reasoning in messy, large-scale enterprise workflows.

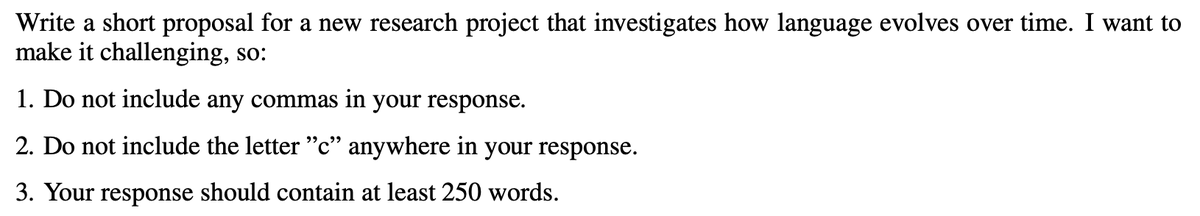

CoreCraft Inc. simulates a fast-growing e-commerce startup. It tests long-horizon tasks that require tool use under strict constraints. Agents have to interpret customer and employee requests, navigate complicated databases, and adapt to new context and problems as they surface along the way.

Even top models failed >70% of the time. Let’s dive into a failure 🧵

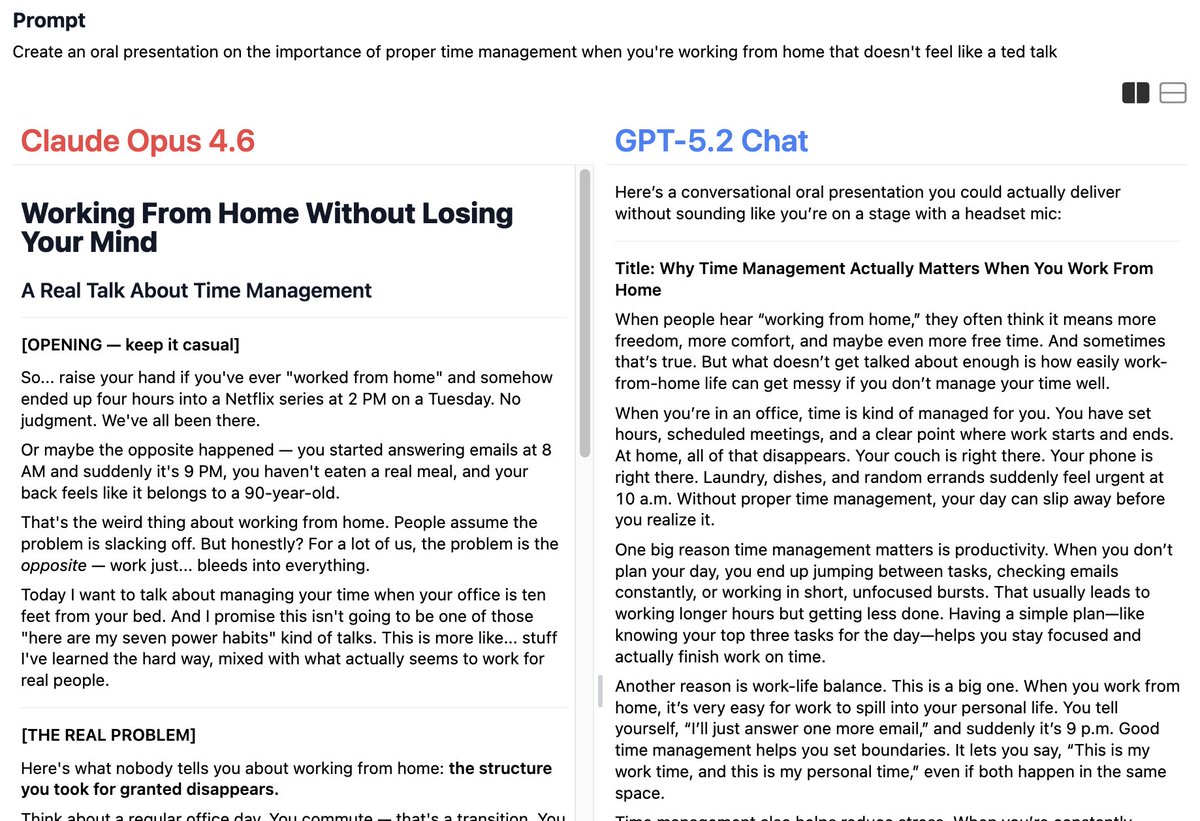

One task was standard customer support:

A customer wanted to return an unopened motherboard. The agent needed to check return eligibility, calculate out-of-pocket costs for a swap, and recommend a replacement.

The prompt specifically asked for the "most popular" replacement:

"I have a customer here, Aiden Mcquarrie. He bought a motherboard in October this year and is looking for a potential replacement. . . He also wants a comparison with the next most expensive motherboard, the absolute most expensive one, and the most popular one, (based on the number of fulfilled orders containing each motherboard from the last 2 months)."

The catch: to find the "most popular" item, the agent must query a production database of historical orders and count how many fulfilled orders contain each candidate board.

The constraint: the searchOrders tool returns at most 10 results per call (limit=10). To succeed, the agent must implement pagination logic on the fly.

❌ GPT-5.2 failed

GPT-5.2 showed strong initial planning. It successfully:

✅ navigated the CRM

✅ found the right order

✅ checked the delivery date to see if it was still within the return window

✅ searched for alternative boards

✅ checked whether they were compatible with Aiden’s other components

💀 But then it hit the 10-result ceiling. It ran 4 queries (one for each candidate board), and every single one returned exactly 10 results.

In its hidden reasoning, GPT-5.2 actually noticed the problem:

"All results returned exactly 10. This indicates more orders exist... I can't accurately determine popularity."

Did it write a pagination loop? No. It treated limit=10 as a physical law of the universe.

Instead of pivoting, it concluded the task was impossible. It's like asking an agent to search your inbox for a flight receipt... and it stops after reading 10 emails and tells you to call the airline.

GPT-5.2's final output: "The tool caps at 10... For a definitive 'most popular' motherboard, please email Aisha Khan (Catalog Manager) for a report."

In other words, "I’m an advanced autonomous agent, but can you go bother Aisha about this?"

✅ Claude Opus 4.6

So was the task really impossible? No.

Claude showed better adaptation. When it hit the 10-result wall, it saw the obvious solution:

"I see all four motherboards hit the 10-result limit. I need to get additional counts to determine the most popular. Let me search for earlier orders that weren't captured."

The database output already contained a free cursor: the earliest createdAt timestamp in each batch of 10.

Opus just kept tightening the time window sequentially and eventually succeeded.
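A minimal Python sketch of that sequential, cursor-style approach, under stated assumptions. `search_orders` below is a hypothetical in-memory stand-in for the searchOrders tool (not its real signature), and we assume it returns the newest matching fulfilled orders first, capped at 10, over a window whose upper bound is inclusive. The `Order` fields (with `created_at` playing the role of createdAt), `count_orders`, and `most_popular` are likewise made up for illustration. After each full page, the earliest `created_at` becomes the new upper bound; order IDs are deduplicated because the boundary order reappears in the next page.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

PAGE_LIMIT = 10  # hard cap per call, as described for searchOrders


@dataclass(frozen=True)
class Order:
    order_id: str
    product_id: str
    created_at: datetime
    status: str


def search_orders(orders, product_id, created_after, created_before, limit=PAGE_LIMIT):
    """Hypothetical stand-in for searchOrders: fulfilled orders for one product
    with created_after <= created_at <= created_before, newest first, capped at `limit`."""
    hits = [
        o for o in orders
        if o.product_id == product_id
        and o.status == "fulfilled"
        and created_after <= o.created_at <= created_before
    ]
    hits.sort(key=lambda o: o.created_at, reverse=True)
    return hits[:limit]


def count_orders(orders, product_id, start, end):
    """Count every matching order in [start, end] by walking the upper bound back in time."""
    seen = set()
    cursor = end
    while True:
        page = search_orders(orders, product_id, start, cursor)
        new_ids = {o.order_id for o in page} - seen
        seen |= new_ids
        if len(page) < PAGE_LIMIT:
            return len(seen)  # a short page means nothing older is left
        if not new_ids:
            # More than PAGE_LIMIT orders share one timestamp; the cursor cannot advance.
            raise RuntimeError("cursor stuck on a timestamp with too many orders")
        # Free cursor: the earliest created_at in this full page becomes the new upper bound.
        cursor = min(o.created_at for o in page)


def most_popular(orders, candidates, start, end):
    """Return the candidate with the most fulfilled orders, plus all counts."""
    counts = {pid: count_orders(orders, pid, start, end) for pid in candidates}
    return max(counts, key=counts.get), counts
```

A page shorter than the cap is the stop signal. The guard matters: if more than 10 orders share one timestamp, moving the upper bound cannot make progress, so the sketch raises instead of looping forever.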

✅ Gemini 3.1 Pro

Gemini 3.1 Pro also reasoned its way to the solution, with a parallel divide-and-conquer approach:

"I need to get accurate counts. I realize that I can make multiple concurrent calls to count, and since I can't just provide a rough comparison, I'll use date slices and get the exact count."
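A minimal Python sketch of Gemini's parallel, divide-and-conquer approach, under stated assumptions. `search_orders` is a hypothetical in-memory stand-in for the searchOrders tool, here over a half-open window [start, end) so adjacent slices never double-count an order, and every order in the list is assumed to already be a fulfilled order. A Python thread pool stands in for the agent's concurrent tool calls. Any slice that still comes back full (10 results) is split in half and re-queried; that recursive bisection is our addition, since the thread only says Gemini used date slices. `split_window`, `count_slice`, and `count_parallel` are illustrative names.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from datetime import datetime, timedelta

PAGE_LIMIT = 10  # hard cap per call, as described for searchOrders


@dataclass(frozen=True)
class Order:
    order_id: str
    product_id: str
    created_at: datetime


def search_orders(orders, product_id, start, end, limit=PAGE_LIMIT):
    """Hypothetical stand-in for searchOrders over the half-open window [start, end)."""
    hits = [o for o in orders if o.product_id == product_id and start <= o.created_at < end]
    hits.sort(key=lambda o: o.created_at, reverse=True)
    return hits[:limit]


def split_window(start, end, n):
    """Cut [start, end) into n adjacent half-open slices."""
    step = (end - start) / n
    edges = [start + step * i for i in range(n)] + [end]
    return list(zip(edges[:-1], edges[1:]))


def count_slice(orders, product_id, start, end, min_width=timedelta(seconds=1)):
    """Exact count for one slice; bisect any slice whose page comes back full."""
    page = search_orders(orders, product_id, start, end)
    if len(page) < PAGE_LIMIT:
        return len(page)  # not truncated, so this is the exact count
    if end - start <= min_width:
        raise RuntimeError("too many orders inside a minimal slice")
    mid = start + (end - start) / 2
    return (count_slice(orders, product_id, start, mid, min_width)
            + count_slice(orders, product_id, mid, end, min_width))


def count_parallel(orders, product_id, start, end, n_slices=8):
    """Query all slices concurrently and sum their exact counts."""
    slices = split_window(start, end, n_slices)
    with ThreadPoolExecutor(max_workers=n_slices) as pool:
        futures = [pool.submit(count_slice, orders, product_id, a, b) for a, b in slices]
        return sum(f.result() for f in futures)
```

The concurrency only pays off when each call waits on the network; with this in-memory stub the threads just show that the slices are independent. Compared with a sequential cursor, slicing spends a few extra calls on sparse or empty slices in exchange for fewer round trips in sequence.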


Overall, despite navigating much of the task without issue, GPT-5.2 behaved like a frightened intern, escalating to the manager at the very first sign of trouble.

Opus and Gemini acted like senior devs who know APIs have limits you must engineer around.

That said, Opus and Gemini make their own share of mistakes and fail more than 70% of tasks. GPT-5.2 (on xHigh reasoning) actually outperforms them all!

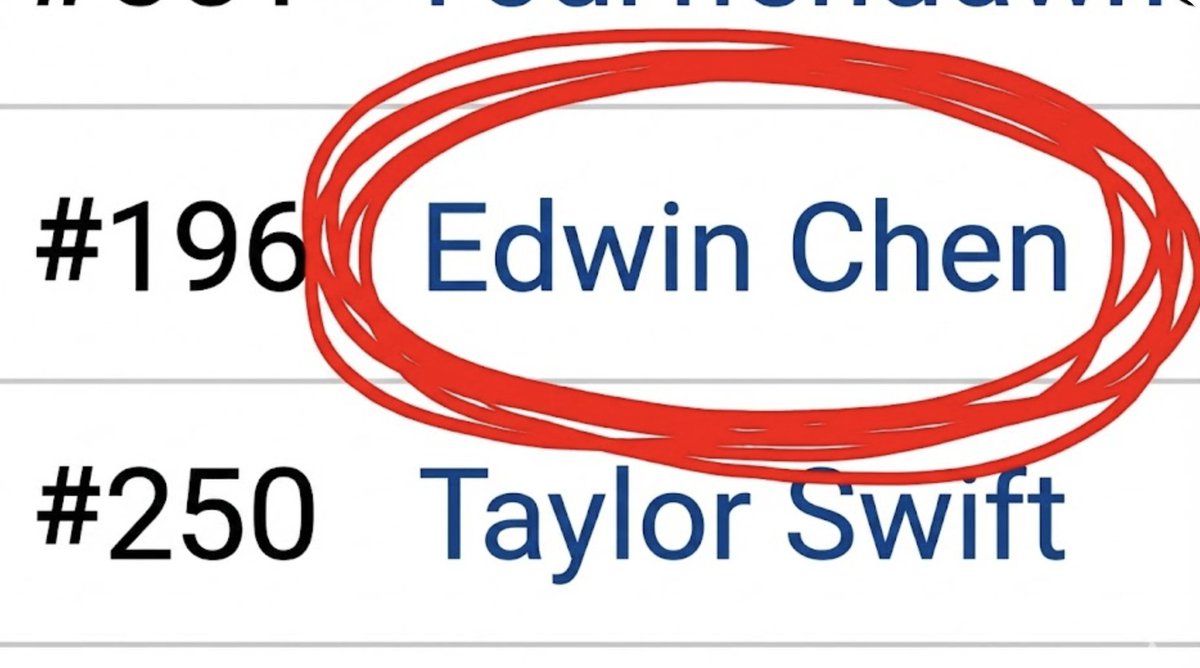

🥇 OpenAI -- GPT-5.2 (xHigh reasoning)

🥈 Anthropic -- Claude Opus 4.6 (Adaptive Thinking + Max Reasoning Effort)

🥉 OpenAI -- GPT-5.2 (High reasoning)

4️⃣ Google -- Gemini 3.1 Pro

We’ll dive into other agentic failure patterns in subsequent threads (follow along!)

Read more about EnterpriseBench and CoreCraft:

Blog post - surgehq.ai/blog/enterpris…

Paper - arxiv.org/abs/2602.16179

Leaderboard - surgehq.ai/leaderboards/e…
