Joe

34.3K posts

Joe

@JosepiBuildsIt

software engineer building in public. you don't need to be technical to build powerful things. I prove it and show you how.

Just finished my new LLM setup for my 12 agent @openclaw dev team. Im testing a split between: - GPT 5.4/ Codex for specs, reviews, and thinking - @MiniMax_AI 2.7 for building and routing - @Kimi_Moonshot K2-Thinking for deep research (Minimax and kimi running via @ollama) Let’s see how this does 👀

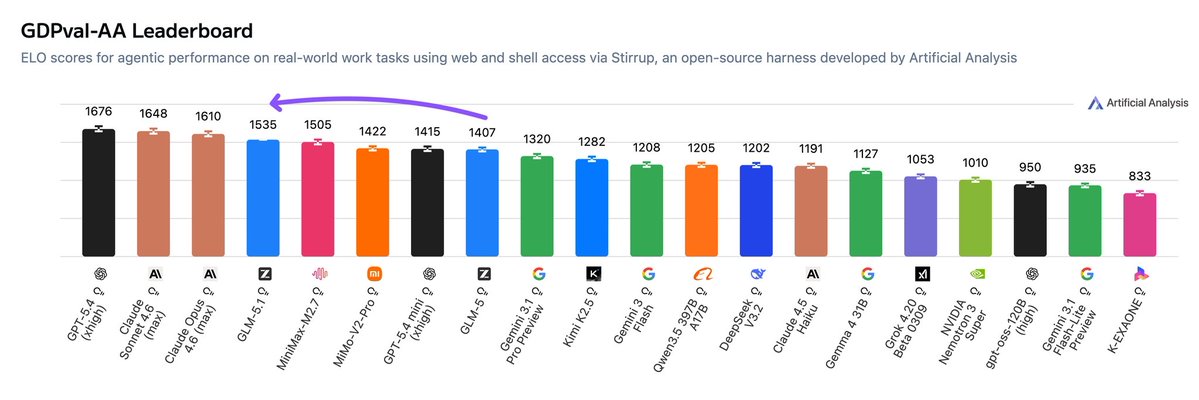

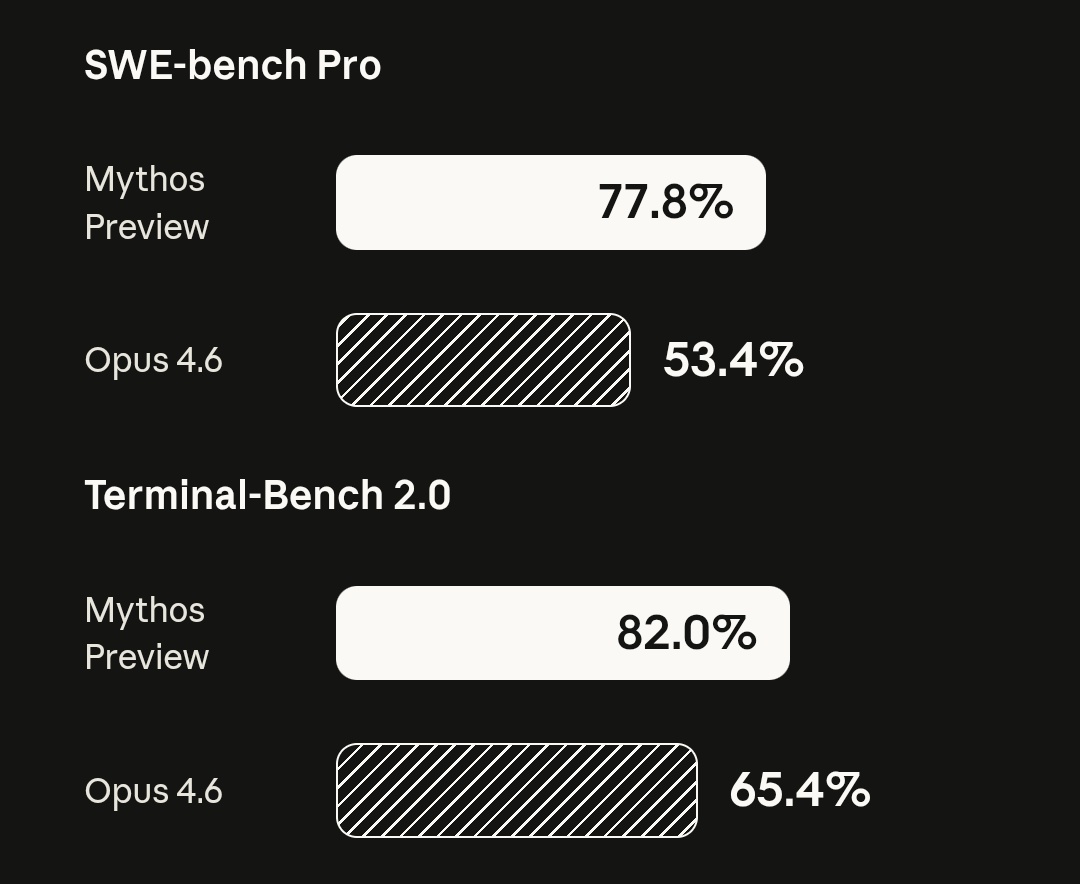

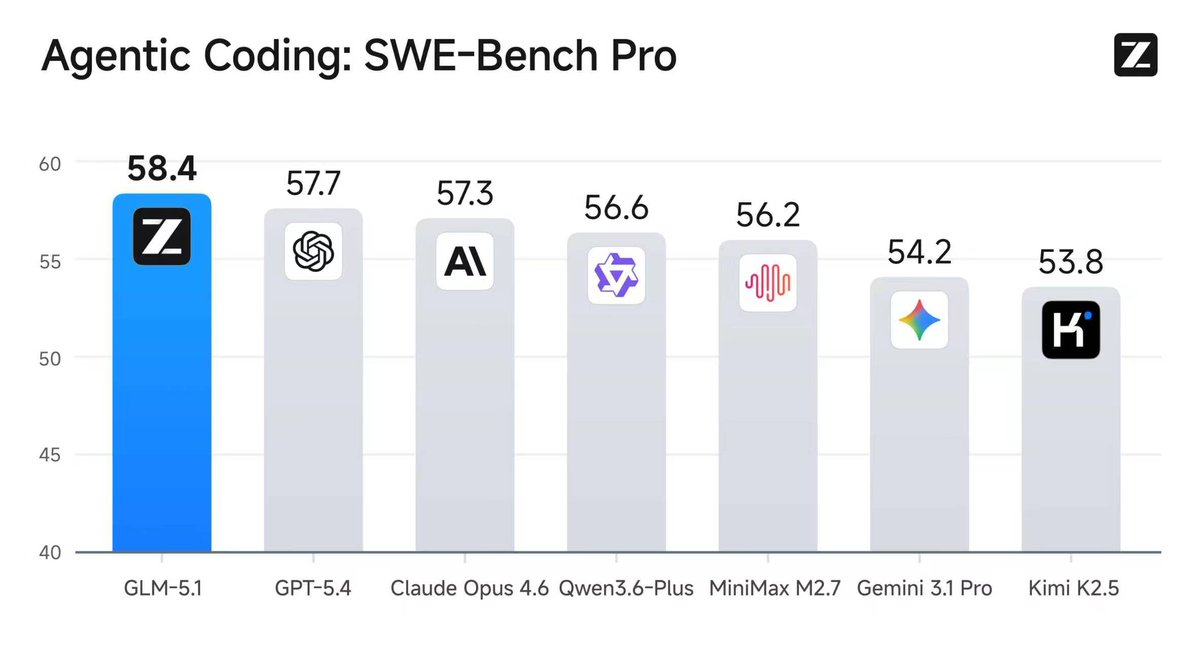

Introducing GLM-5.1: The Next Level of Open Source - Top-Tier Performance: #1 in open source and #3 globally across SWE-Bench Pro, Terminal-Bench, and NL2Repo. - Built for Long-Horizon Tasks: Runs autonomously for 8 hours, refining strategies through thousands of iterations. Blog: z.ai/blog/glm-5.1 Weights: huggingface.co/zai-org/GLM-5.1 API: docs.z.ai/guides/llm/glm… Coding Plan: z.ai/subscribe Coming to chat.z.ai in the next few days.

Introducing GLM-5.1: The Next Level of Open Source - Top-Tier Performance: #1 in open source and #3 globally across SWE-Bench Pro, Terminal-Bench, and NL2Repo. - Built for Long-Horizon Tasks: Runs autonomously for 8 hours, refining strategies through thousands of iterations. Blog: z.ai/blog/glm-5.1 Weights: huggingface.co/zai-org/GLM-5.1 API: docs.z.ai/guides/llm/glm… Coding Plan: z.ai/subscribe Coming to chat.z.ai in the next few days.

the last time I was this hyped for a release, it was GoT

Introducing GLM-5.1: The Next Level of Open Source - Top-Tier Performance: #1 in open source and #3 globally across SWE-Bench Pro, Terminal-Bench, and NL2Repo. - Built for Long-Horizon Tasks: Runs autonomously for 8 hours, refining strategies through thousands of iterations. Blog: z.ai/blog/glm-5.1 Weights: huggingface.co/zai-org/GLM-5.1 API: docs.z.ai/guides/llm/glm… Coding Plan: z.ai/subscribe Coming to chat.z.ai in the next few days.