@elonmusk @beffjezos @grok Holy crap! I called it! This was my prediction posted on March 13th! 🎉🚀👇 #xAI #AGI x.com/June84413/stat…

June_e Bai

1.9K posts

@June84413

System-Level Noise Reducer, intj, Lower "idiocy index", Chinese dance, Skilled manual driver, Bring out my dead spam! 🧩 Waiting for you at Arcadia Planitia...


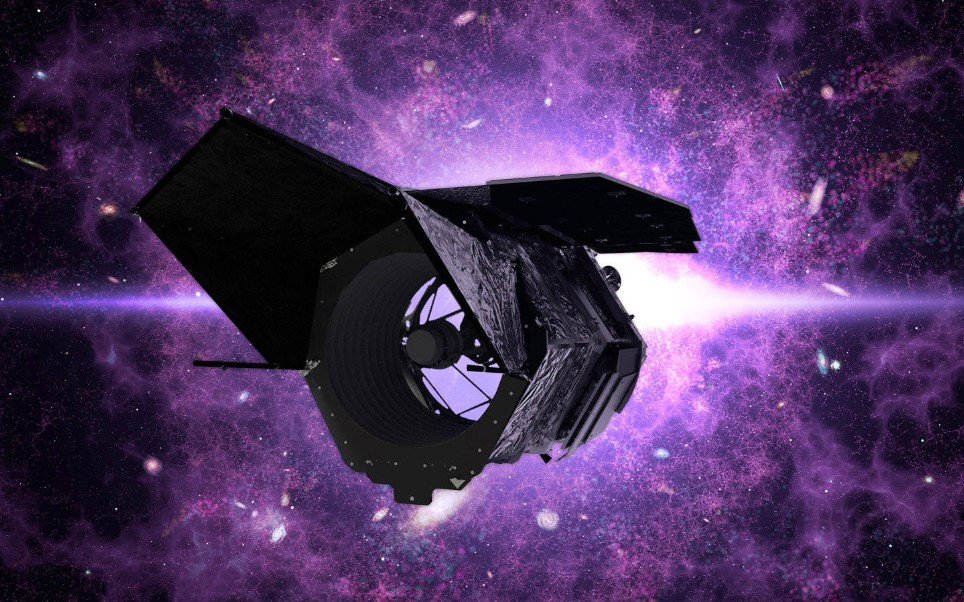

The Nancy Grace Roman Space Telescope is in final preparations for an early September launch, eight months AHEAD of schedule and UNDER budget. This milestone is the result of more than a decade of dedication and millions of hours of work by NASA and our industry partners. Their commitment is what’s making this moment possible and helping drive Gold Standard Science. Roman will help answer some of the biggest questions in science, investigating dark matter, dark energy, and the structure of the universe. Its images will be so large and detailed, there isn’t a screen in existence big enough to display them. This is just the beginning.

xAI is committed to building a state-of-the-art water recycling plant in Memphis. This plant will protect billions of gallons of water each year. The team is currently prioritizing other more immediate projects at the site but our plans to build the water plant have not changed.

If you gave away $126 billion to subsidize free flights between LA and San Francisco at current demand levels, you could fund roughly 150 to 200 years of travel before the money runs out.
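A quick sanity check on that figure, as a rough sketch: dividing the $126 billion by the 150 to 200 year range gives the annual subsidy the claim implies. The post does not state passenger counts or fares, so none are assumed here; the function name is only illustrative.

```python
def implied_annual_cost(total_budget: float, years: float) -> float:
    """Annual spend implied by stretching a fixed budget over a number of years."""
    return total_budget / years


TOTAL_BUDGET = 126_000_000_000  # dollars, from the post

# The post's range is 150 to 200 years; a longer runway means a lower annual cost.
low = implied_annual_cost(TOTAL_BUDGET, 200)
high = implied_annual_cost(TOTAL_BUDGET, 150)

print(f"Implied annual subsidy: ${low / 1e9:.2f}B to ${high / 1e9:.2f}B")
```

So the claim amounts to saying that free LA to San Francisco flights at current demand would cost somewhere between about $0.63 billion and $0.84 billion per year.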

Elon just proposed "Visual Intelligence Beyond the Human Paradigm." How to make it real? This is the full-stack Visual AGI architecture I built a week ago based on his fragmented tweets: with FSD visual perception as input, Grok as the decision core, Grok Imagine for virtual pre-verification, and Digital Optimus as the execution terminal—achieving a closed-loop iteration of "Perception–Cognition–Simulation–Execution–Evolution." Human eyes are only the beginning. AI vision is the endgame. 🤖 @elonmusk @grok #AGI #FSD

Sora is dead. OpenAI just pivoted to robotics.

I have friends on the Sora team. They're now working on robot-related projects. Makes sense when you think about it.

OpenAI's philosophy: Why waste resources making people laugh with videos when you can solve humanity's physical intelligence problem? Let TikTok handle the entertainment. We'll build the future.

But here's the real story: Video model startups are struggling to raise money. VCs think it's a big tech game now. Google has Veo, Kuaishou has Kling, ByteDance has SeeDance. How can a startup compete? Many video model founders I know have pivoted. The window closed fast.

This reflects a broader pattern I'm seeing: Areas where big tech companies compete directly become no-go zones for startups. The money flows to either:
- Highly regulated industries big tech won't touch
- Niche verticals too small for them to care about
- Hardware-first approaches they can't easily replicate

Video generation became a commodity overnight. The differentiation isn't in the model anymore – it's in the application layer. Smart founders are moving up the stack or finding defensible niches.

The gold rush is over. Time to find the next mountain.

#AI #Sora #OpenAI #VideoGeneration #Startups #VentureCapital #BigTech #Pivot #AIStrategy #Innovation

Intel Foundry unveils the world’s thinnest GaN chiplet (19 μm). By integrating power and digital control on a single chiplet, it delivers higher efficiency, faster switching, and smaller designs. Learn more: ms.spr.ly/6018Q2h90 #IntelFoundry #Semiconductors

SpaceXAI Colossus 2 now has 7 models in training:
- Imagine V2
- 2 variants of 1T
- 2 variants of 1.5T
- 6T
- 10T

Some catching up to do.