Lev Peker

@LevPeker

Husband. Father. CEO

1/10 The U.S. naval blockade of the Strait of Hormuz would cost Iran approximately $276M/day in lost exports and disrupt $159M/day in imports, a combined economic damage of ~$435M/day, or $13B/month. Over 90% of Iran's $109.7B in annual trade transits the Persian Gulf. Oil/gas accounts for 80% of government export earnings and 23.7% of GDP. Kharg Island alone generates ~$53B/year, or as I noted to @TIME, "$78 billion a year in energy revenue."

Sleeping <6h a night for 2 weeks reduces cognitive performance as much as 2 nights of total sleep deprivation.

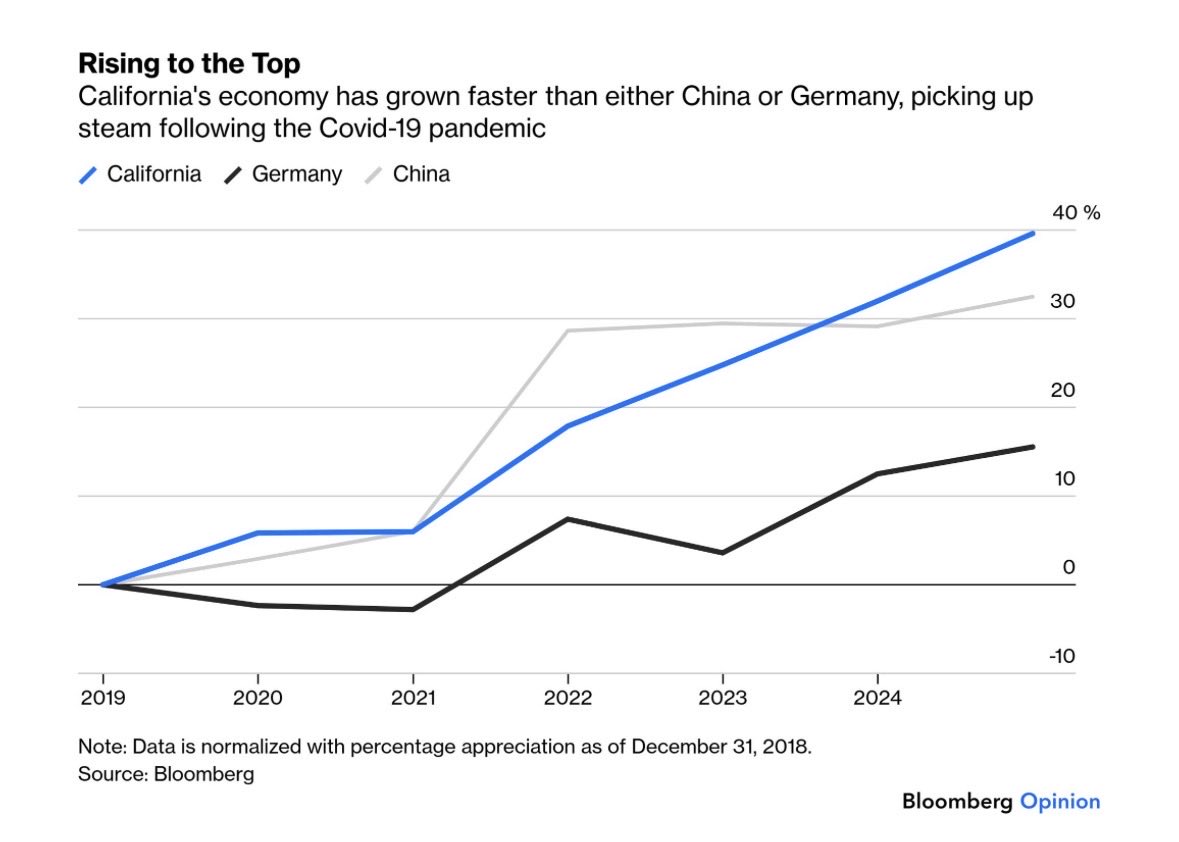

California gas prices are absolutely outrageous—all to pay for someone else’s stupid war. But any driver knows that this isn’t entirely new. Gas in this state has been way too expensive for way too long. The days of Trump and Big Oil screwing Californians are numbered.

Chinese carmaker BYD unveils a recharge as fast as filling up with gas

a moving man will meet his luck 🥀