Rui Meng

@RuiMeng_

Research Scientist @GoogleCloud

🚀 Introducing Qwen3-VL-Embedding and Qwen3-VL-Reranker, advancing the state of the art in multimodal retrieval and cross-modal understanding!

✨ Highlights:
✅ Built upon the robust Qwen3-VL foundation model
✅ Processes text, images, screenshots, videos, and mixed-modality inputs
✅ Supports 30+ languages
✅ Achieves state-of-the-art performance on multimodal retrieval benchmarks
✅ Open source and available on Hugging Face, GitHub, and ModelScope
✅ API deployment on Alibaba Cloud coming soon!

🎯 Two-stage retrieval architecture:
📊 Embedding Model: generates semantically rich vector representations in a unified embedding space
🎯 Reranker Model: computes fine-grained relevance scores for enhanced retrieval accuracy

🔍 Key application scenarios: image-text retrieval, video search, multimodal RAG, visual question answering, multimodal content clustering, multilingual visual search, and more!

🌟 Developer-friendly capabilities:
• Configurable embedding dimensions
• Task-specific instruction customization
• Embedding quantization support for efficient, cost-effective downstream deployment

Hugging Face: huggingface.co/collections/Qw… huggingface.co/collections/Qw…
ModelScope: modelscope.cn/collections/Qw… modelscope.cn/collections/Qw…
GitHub: github.com/QwenLM/Qwen3-V…
Blog: qwen.ai/blog?id=qwen3-…
Tech Report: github.com/QwenLM/Qwen3-V…
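The two-stage architecture in this post (an embedding model retrieves candidates from a shared vector space, then a reranker rescores them) can be illustrated with a minimal Python sketch of the general pattern. It does not call Qwen3-VL-Embedding or Qwen3-VL-Reranker: `embed` (a bag-of-words vector) and `rerank_score` (a token-overlap score) are hypothetical, text-only stand-ins for the two models, and `two_stage_search` with its `k_retrieve`/`k_final` parameters is an illustrative helper, not a released API.

```python
import math
from collections import Counter


# Hypothetical stand-in for the embedding model: a bag-of-words count vector.
# A real system would call a multimodal embedding model here instead.
def embed(text: str) -> Counter:
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical stand-in for the reranker: scores one (query, document) pair
# jointly. Here it is simply the fraction of query tokens found in the document.
def rerank_score(query: str, doc: str) -> float:
    q_tokens = set(query.lower().split())
    d_tokens = set(doc.lower().split())
    return len(q_tokens & d_tokens) / len(q_tokens) if q_tokens else 0.0


def two_stage_search(query: str, corpus: list[str], k_retrieve: int = 3, k_final: int = 1) -> list[str]:
    # Document vectors; a real deployment would precompute and index these.
    doc_vecs = [(doc, embed(doc)) for doc in corpus]
    q_vec = embed(query)
    # Stage 1: cheap vector similarity over the whole corpus -> shortlist.
    shortlist = sorted(doc_vecs, key=lambda dv: cosine(q_vec, dv[1]), reverse=True)[:k_retrieve]
    # Stage 2: pairwise scoring, applied only to the shortlist.
    reranked = sorted((doc for doc, _ in shortlist), key=lambda d: rerank_score(query, d), reverse=True)
    return reranked[:k_final]


corpus = [
    "a cat sitting on a red sofa",
    "stock market report for today",
    "a dog playing in the park",
    "red sofa for sale",
]
print(two_stage_search("cat on red sofa", corpus, k_retrieve=2, k_final=1))
# → ['a cat sitting on a red sofa']
```

The split reflects the usual cost trade-off: stage 1 compares one query vector against every document vector, which stays cheap when document vectors are precomputed, while the reranker looks at each (query, document) pair jointly and is therefore run only on the shortlist.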

🇨🇦 Excited to present our work at @COLM_conf in Montreal, Oct 7-10 at the Palais des Congrès!

📄 Our accepted papers:

"CodeXEmbed: A Generalist Embedding Model Family for Multilingual and Multi-task Code Retrieval"
👥 Authors: Ye Liu, Rui Meng, Shafiq Joty @JotyShafiq, Silvio Savarese @silviocinguetta, Caiming Xiong @CaimingXiong, Yingbo Zhou @yingbozhou_ai, Semih Yavuz @semih__yavuz
📝 Paper: arxiv.org/abs/2411.12644

"AI-Slop to AI-Polish? Aligning Language Models through Edit-Based Writing Rewards and Test-time Computation"
👥 Authors: Tuhin Chakrabarty @TuhinChakr, Philippe Laban @PhilippeLaban, Jason Wu @jasonwu0731
📝 Paper: arxiv.org/abs/2504.07532

#COLM2025 #FutureOfAI #EnterpriseAI #LanguageModels

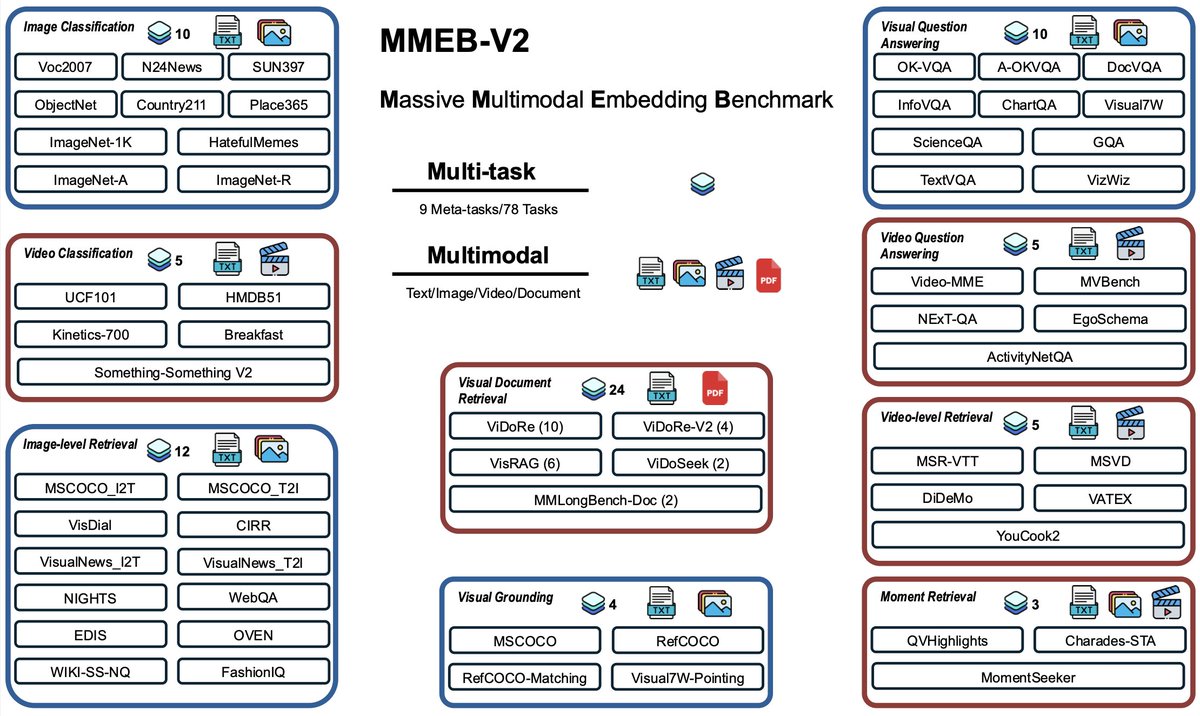

📢 New preprint from @raghavlite on multimodal contrastive learning: Breaking the Batch Barrier (B3) 📢
TL;DR: Smart batch mining based on community detection achieves state-of-the-art results on the MMEB benchmark.
Preprint: arxiv.org/pdf/2505.11293
Code: github.com/raghavlite/B3

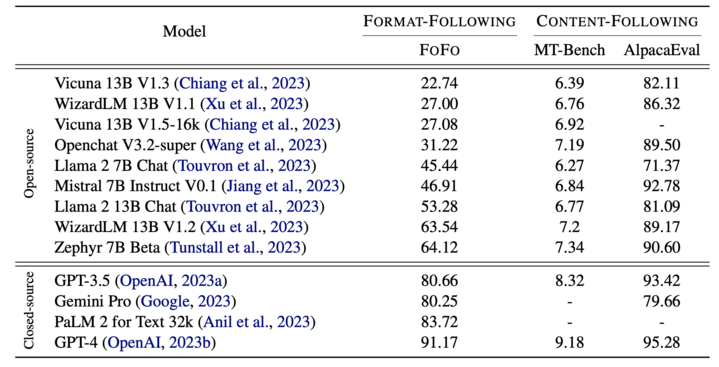
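The B3 post only names the idea of batch mining via community detection. As a rough illustration of that general idea (not the B3 implementation), the Python sketch below links each example to its nearest neighbors, finds communities with label propagation (a stand-in community detection algorithm chosen here for brevity), and fills batches community by community so that in-batch negatives are similar to each other. The toy 2-D vectors stand in for embeddings from some pretrained model, and `knn_graph`, `label_propagation`, and `community_batches` are hypothetical helpers.

```python
import math
import random
from collections import Counter, defaultdict


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def knn_graph(vectors, k):
    """Undirected graph linking each example to its k most similar examples."""
    adj = defaultdict(set)
    for i, u in enumerate(vectors):
        sims = sorted((cosine(u, v), j) for j, v in enumerate(vectors) if j != i)
        for _, j in sims[-k:]:  # the k highest-similarity neighbors
            adj[i].add(j)
            adj[j].add(i)
    return adj


def label_propagation(adj, n, max_iters=20, seed=0):
    """Community detection: each node repeatedly adopts its neighbors' most common label."""
    rng = random.Random(seed)
    labels = list(range(n))
    order = list(range(n))
    for _ in range(max_iters):
        rng.shuffle(order)
        changed = False
        for i in order:
            if not adj[i]:
                continue
            counts = Counter(labels[j] for j in adj[i])
            top = max(counts.values())
            new_label = min(lab for lab, c in counts.items() if c == top)  # deterministic tie-break
            if new_label != labels[i]:
                labels[i] = new_label
                changed = True
        if not changed:
            break
    return labels


def community_batches(labels, batch_size):
    """Order examples by community, then cut into batches, so batch-mates tend to be similar."""
    groups = defaultdict(list)
    for idx, lab in enumerate(labels):
        groups[lab].append(idx)
    ordered = [idx for lab in sorted(groups) for idx in groups[lab]]
    return [ordered[s:s + batch_size] for s in range(0, len(ordered), batch_size)]


# Two well-separated toy clusters of 2-D "embeddings".
vectors = [(1.0, 0.0), (0.9, 0.1), (0.95, 0.05), (0.0, 1.0), (0.1, 0.9), (0.05, 0.95)]
labels = label_propagation(knn_graph(vectors, k=2), len(vectors))
print(community_batches(labels, batch_size=3))
# → [[0, 1, 2], [3, 4, 5]]
```

Grouping similar examples into the same batch makes the in-batch negatives harder than uniformly random batching would, which is the intuition behind mining batches instead of sampling them at random.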

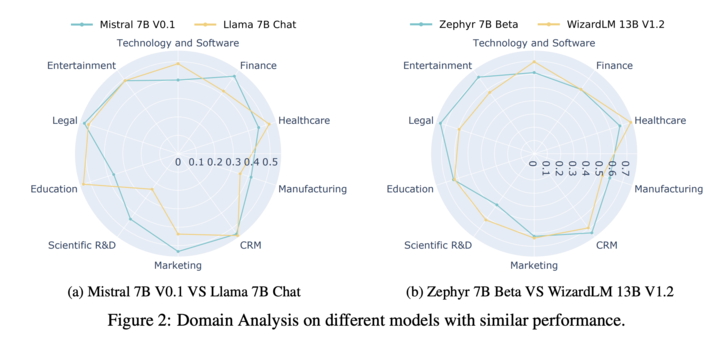

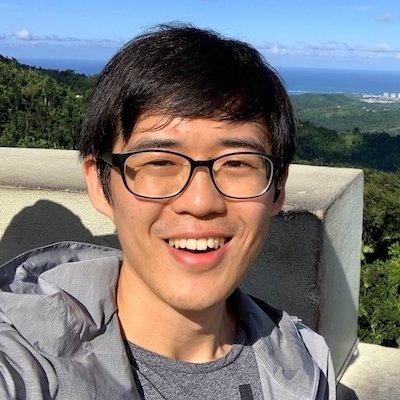

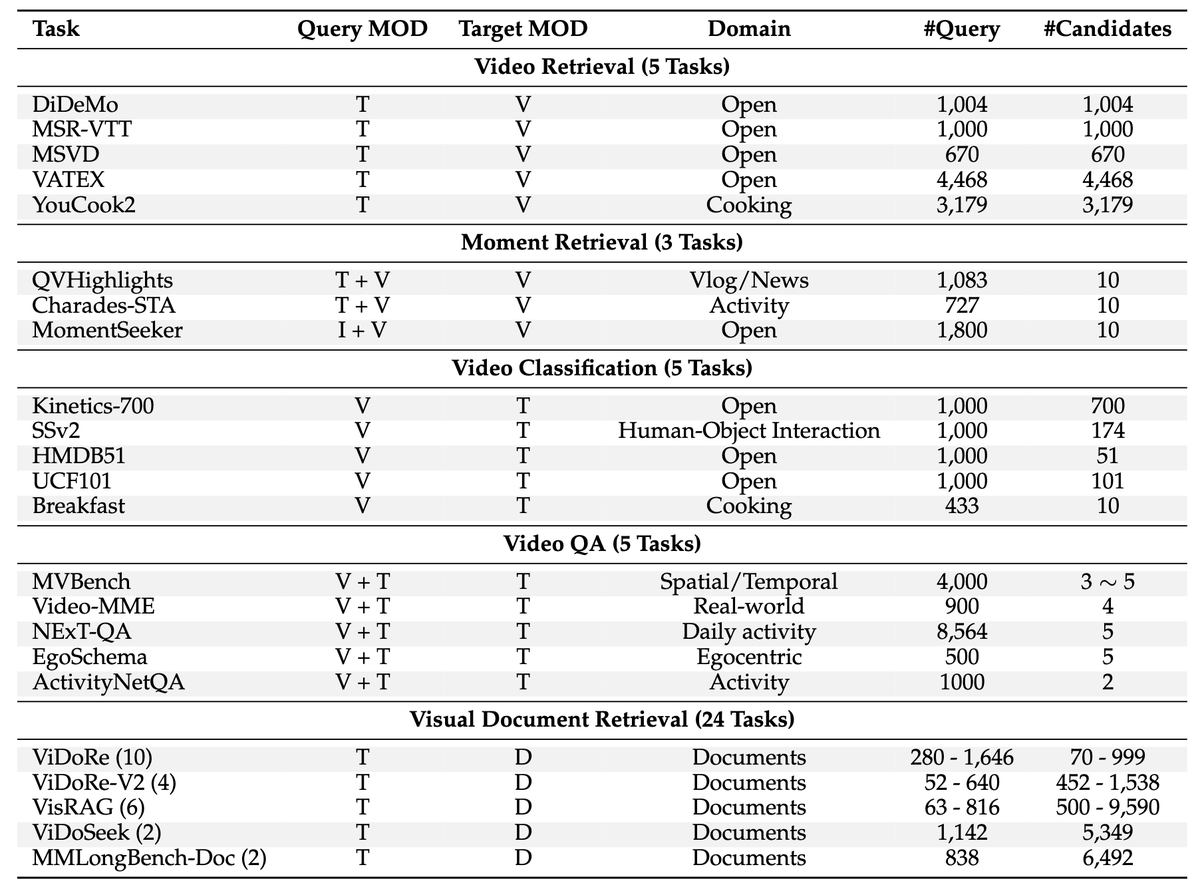

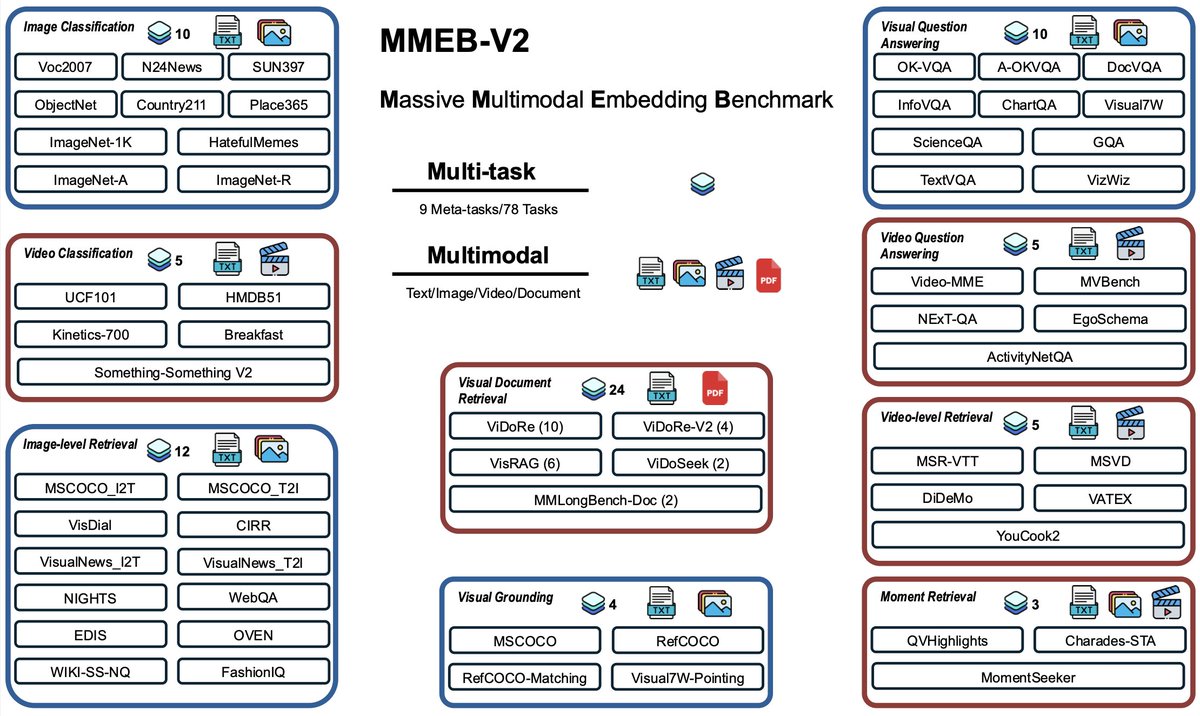

Paper: arxiv.org/abs/2410.05160
GitHub: github.com/TIGER-AI-Lab/V…
Hugging Face collection: huggingface.co/collections/TI…
This work is led by @Ernestzyj and @memray0 in collaboration with @Xinyi__Yang, @semih__yavuz, and Yingbo Zhou from @SFResearch.

🎆 I am pleased to announce the release of the latest version of the Salesforce Embedding Model (SFR-embedding-v2), which has reclaimed the top position on the MTEB benchmark.

✨ Key highlights:
🥇 The second model to score above 70 on MTEB.
🔧 A new multi-stage training recipe that enhances multitasking capabilities.
📊 Significant improvements on classification and clustering tasks, while maintaining strong performance on retrieval and other tasks.
💪 huggingface.co/Salesforce/SFR…