Chenghao Yang

@chrome1996

Ph.D. student @UChicago Ex-SR @google Ex-Scientist @AWS. Ex-RA @jhuCLSP @columbianlp @TsinghuaNLP. Ex-Intern @IBM @AWS. Opinions are my own.

Big congratulations to @ziqiao_ma for winning the Towner Prize for Outstanding PhD Research.🎉 It's a major recognition of creativity, impact, and outstanding contributions to #AI research. A well-deserved recognition!👏 cse.engin.umich.edu/stories/martin…

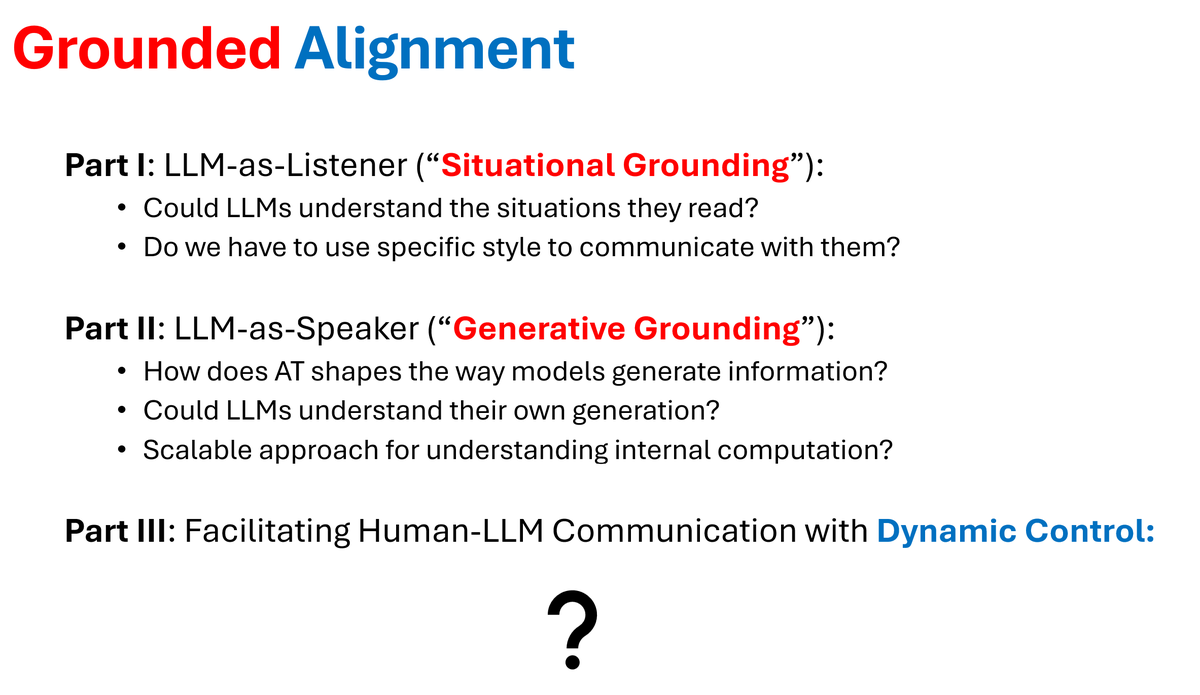

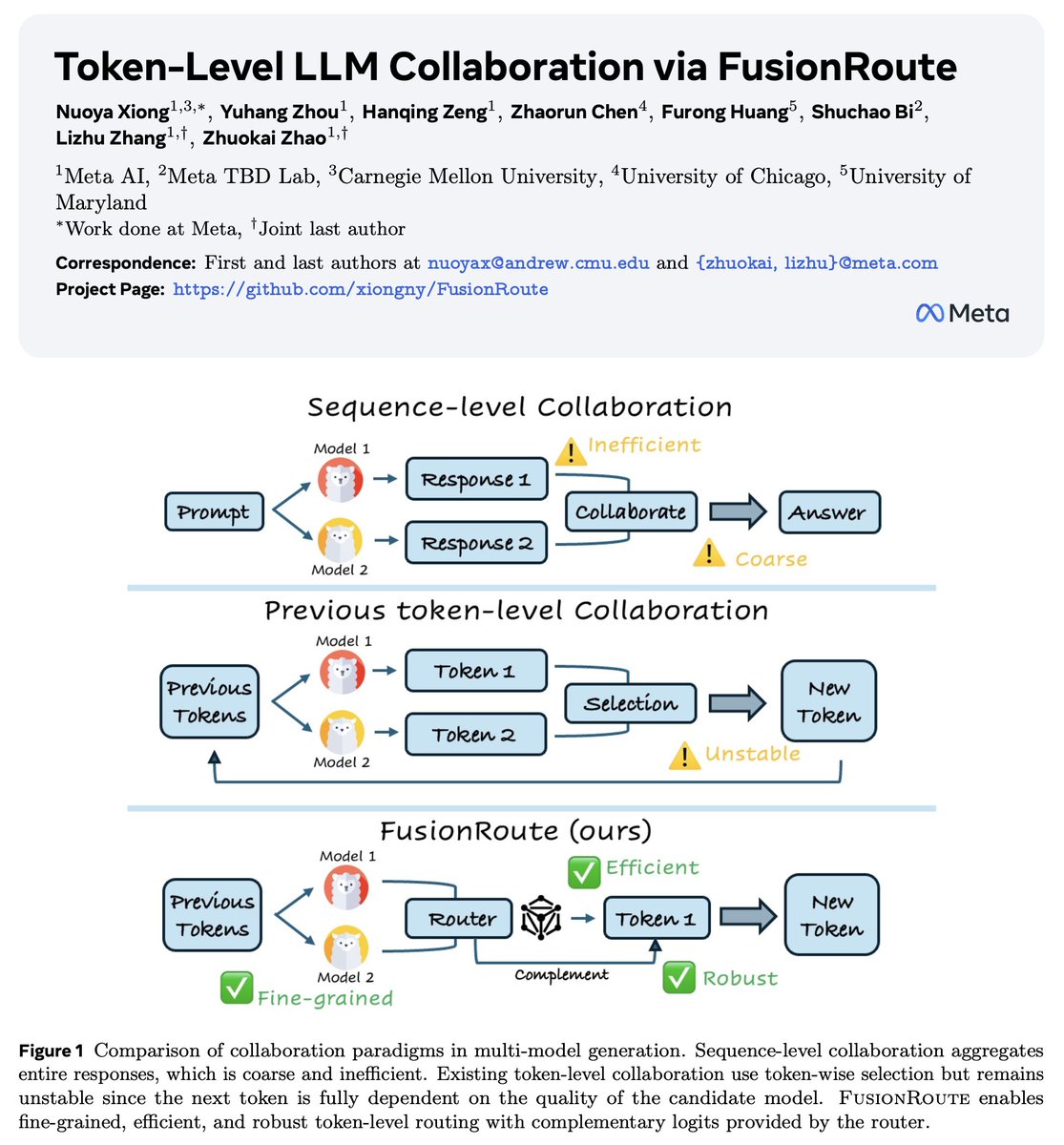

Lack of diversity in your LLM generation? (also noted by Artificial Hivemind, best paper @NeurIPSConf) Time to bring your base model back! An inference-time, token-level collaboration between a base and an aligned model can optimize and control diversity and quality!

New paper: You can’t interpret LLM reasoning from one chain-of-thought. You must study a distribution of possible trajectories! Repeated sampling reveals: self-preservation doesn’t drive LLM blackmail, unfaithful reasoning reflects a biased path, & resampling steers behavior. 🧵

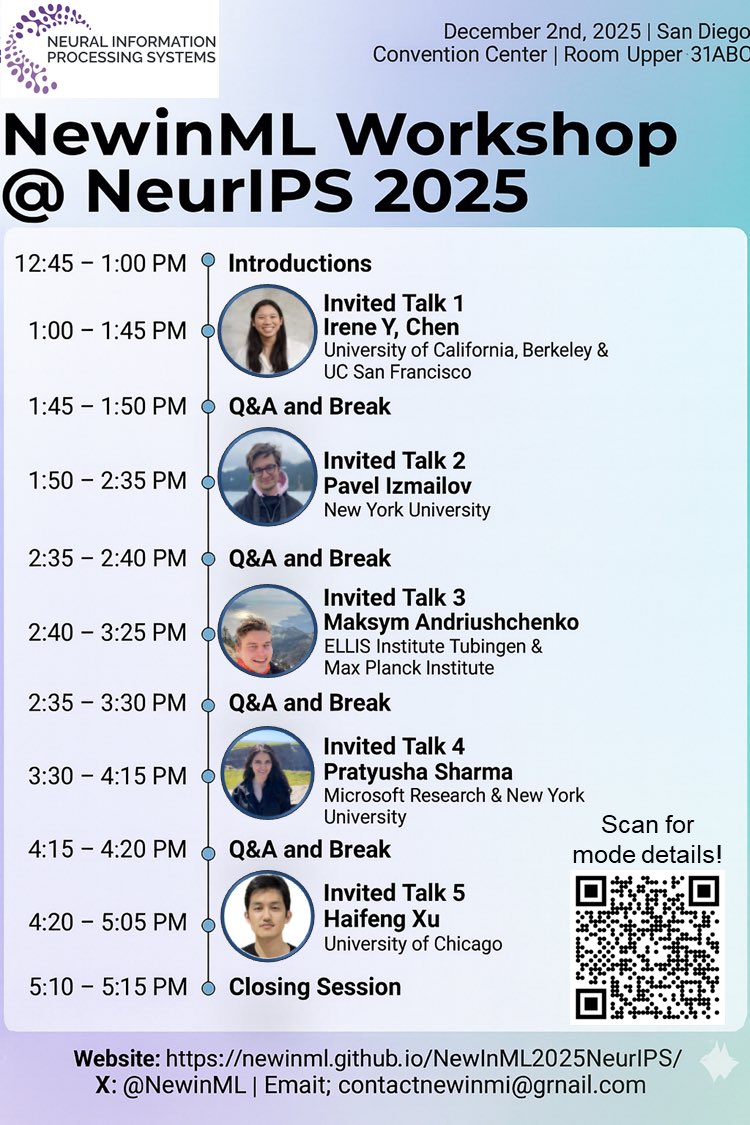

🚨 New in ML Workshop at @NeurIPSConf! We're so excited to invite you to the New in ML Workshop (@NewInML), taking place on Tuesday, December 2nd, 2025, at the San Diego Convention Center! A great opportunity, especially for people who are new to machine learning! Details🧵