mrciffa

146 posts

@davideciffa

Working on inference and computers. Engineer, researcher & founder.

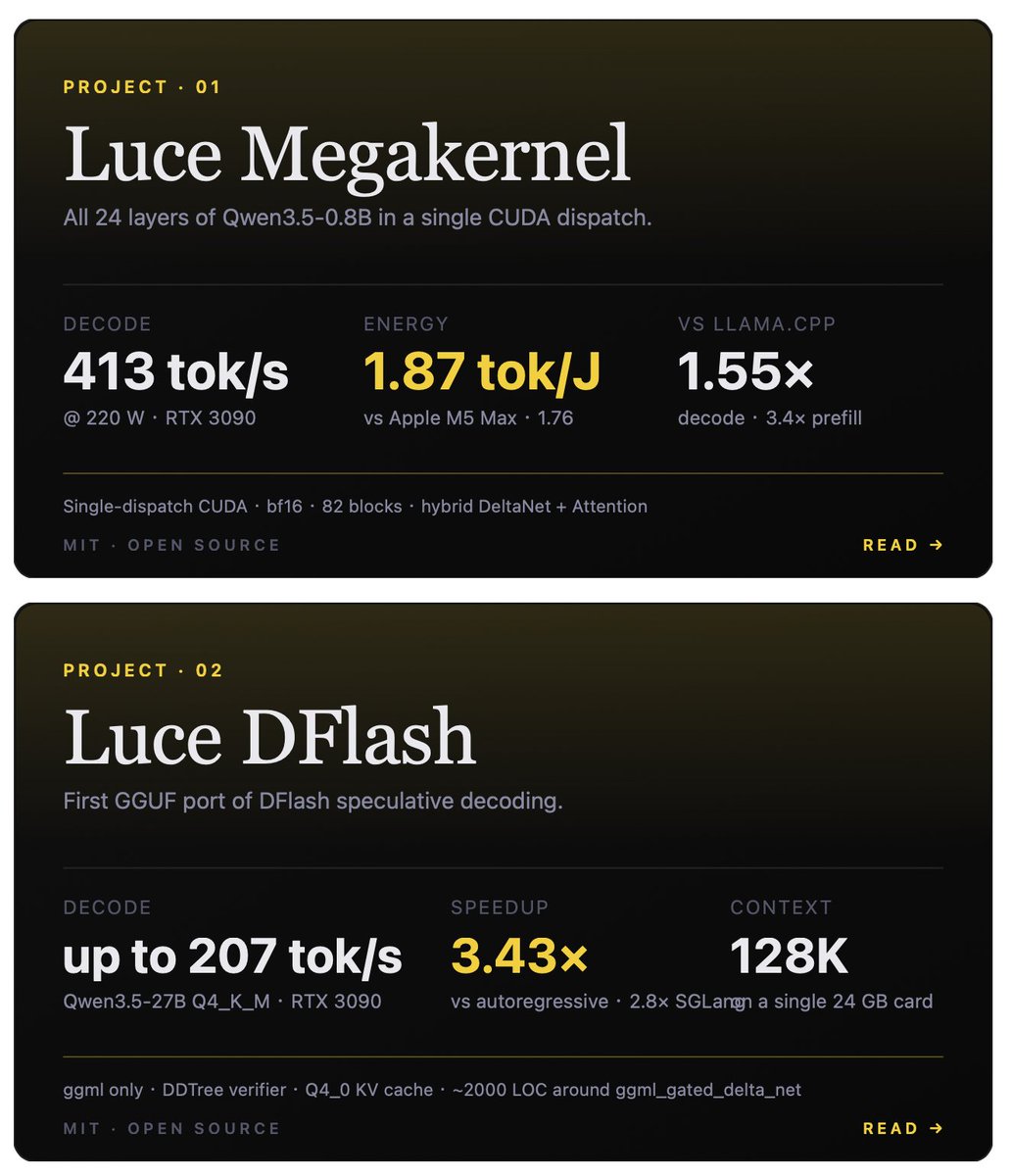

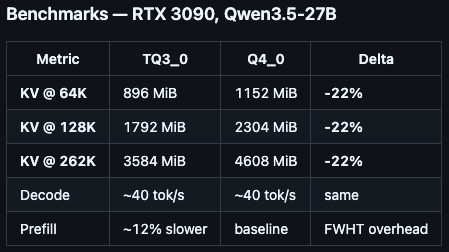

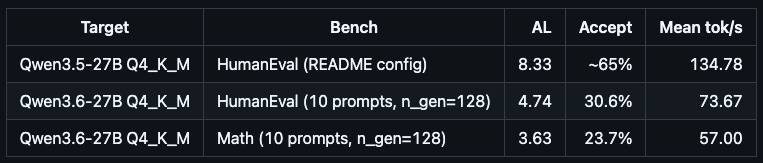

this guy just cracked 134 tok/s on qwen 3.5-27b dense and 73 on the new qwen 3.6-27b on a single 3090.

open source moves at godspeed in 2026. weights ship in the evening, dynamic ggufs land by midnight, and a fused kernel + speculative decoding stack runs the new model 12 hours after release.

his dflash + ddtree stack loads qwen 3.6 as-is because the architecture string matches 3.5. zero retraining of the draft model, zero waiting for upstream support. the same hand-tuned consumer hardware kernel work that pushed 3.5 to 134 tok/s already eats 3.6 at 73, with a regression he is openly flagging because the draft model needs a dedicated pass for 3.6.

this is the lane almost nobody is working on. major labs are stuck shipping framework abstractions optimized for h100 fleets. @pupposandro is hand-tuning kernels for the silicon actual builders own. the 3090 has 24 gigs of vram, mature cuda support, and almost zero kernel-level optimization coming out of the big shops. it is the most underrated research platform in consumer ai right now.

i am running an honest q4_k_m baseline on llama.cpp now to set the dense floor without tricks. then sandro's stack runs on the same gpu, same model, same prompt. generic inference vs hand-tuned kernels with speculative decoding. that delta is where the next 5 years of consumer ai live.

receipts incoming.
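For readers wondering why a draft model that has not been tuned for the new target would cost throughput, here is a minimal sketch of generic greedy speculative decoding. It is not the dflash + ddtree stack from the post; the toy models (`toy_target`, `weak_draft`, `wrong_draft`) and the helper names are hypothetical stand-ins invented for illustration. The draft proposes `k` tokens, the target checks them, and the output always matches plain target decoding. Only the number of target passes changes with how often the draft agrees.

```python
from typing import Callable, List, Tuple

Token = int
Model = Callable[[List[Token]], Token]  # greedy next-token function (hypothetical interface)


def greedy_decode(target: Model, prompt: List[Token], n_new: int) -> List[Token]:
    """Baseline: one target call per generated token."""
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(target(seq))
    return seq


def speculative_decode(target: Model, draft: Model, prompt: List[Token],
                       n_new: int, k: int = 4) -> Tuple[List[Token], int]:
    """Greedy speculative decoding. Returns (tokens, number of target passes)."""
    seq = list(prompt)
    goal = len(prompt) + n_new
    passes = 0
    while len(seq) < goal:
        # 1. The cheap draft model proposes k tokens autoregressively.
        proposal: List[Token] = []
        for _ in range(k):
            proposal.append(draft(seq + proposal))
        # 2. The target verifies every proposed position. A real stack does this
        #    in one batched forward pass; here it is simulated call by call.
        passes += 1
        accepted: List[Token] = []
        for i in range(k):
            expected = target(seq + proposal[:i])
            accepted.append(expected)  # always keep the target's own token
            if proposal[i] != expected:
                break  # first mismatch: discard the rest of the proposal
        else:
            # All k accepted: the same pass also yields one bonus token.
            accepted.append(target(seq + proposal))
        seq.extend(accepted)
    return seq[:goal], passes


# Toy models, assumptions for illustration only.
def toy_target(ctx: List[Token]) -> Token:
    return (ctx[-1] * 3 + len(ctx)) % 17


def weak_draft(ctx: List[Token]) -> Token:
    # Agrees with the target except at every third position.
    t = toy_target(ctx)
    return t if len(ctx) % 3 else (t + 1) % 17


def wrong_draft(ctx: List[Token]) -> Token:
    return (toy_target(ctx) + 1) % 17  # never agrees


if __name__ == "__main__":
    for name, d in [("perfect", toy_target), ("weak", weak_draft), ("wrong", wrong_draft)]:
        out, passes = speculative_decode(toy_target, d, [1], n_new=20, k=4)
        print(name, "target passes:", passes, "tokens per pass:", 20 / passes)
```

The fewer proposals the target accepts, the closer the run gets to one target pass per token, which is the plain decoding floor. That is the generic mechanism by which a draft model trained against an older target can lower tok/s on a newer one.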

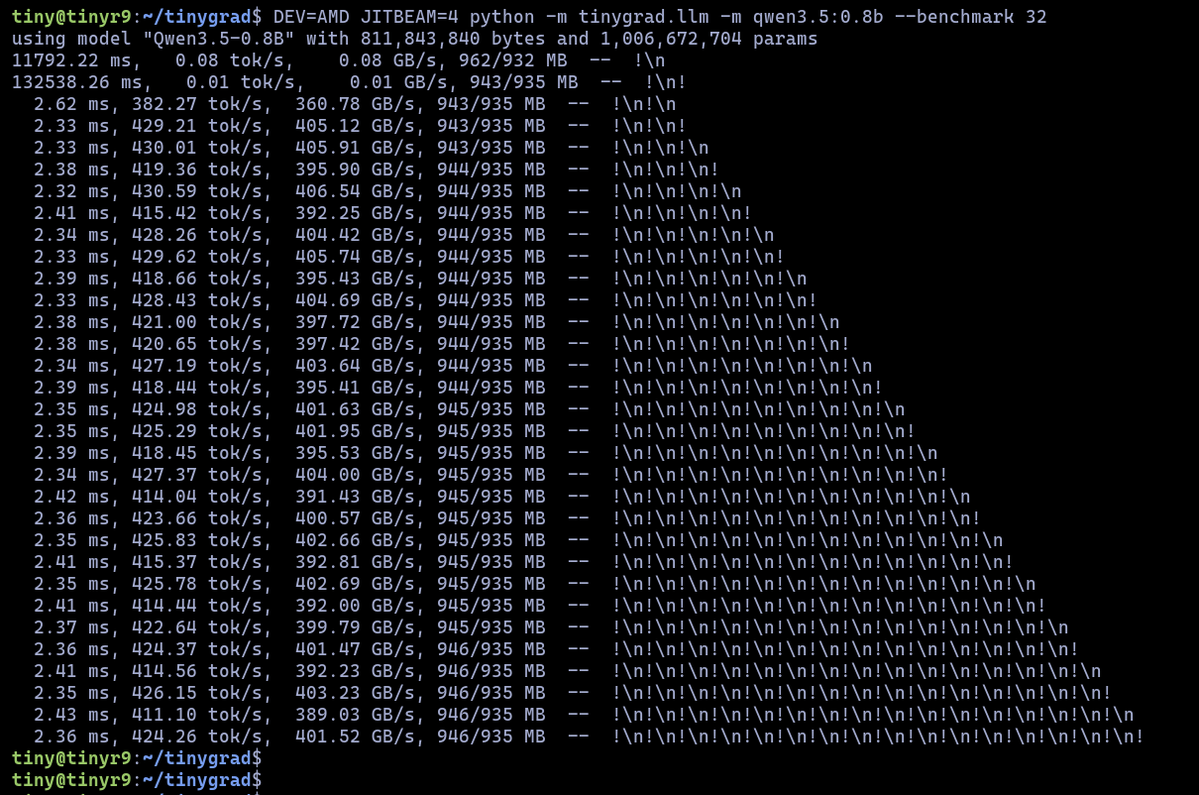

I love tinygrad, but with our megakernel you can go to 415 tok/s in decoding speed 🚄

We set out to replicate Kimi's 193 tok/s Qwen3.5-0.8B on M3 Max. Our baseline is already 178 tok/s, beating LMStudio (160) and llama.cpp (140) out of the box, but with tinygrad's custom kernel feature Claude cranked it to 195.7!

Completed a first hour side-by-side comparison between Qwen3.5 27b and Qwen3.6 27b on the same 4 canvas coding tests. Running Qwen3.6 27b in FP8 on vLLM. What do you think?