hsdhcdev

2.4K posts

@hsdhcdev

Developing #DarkDescent - a dungeon crawling game integrated with ComfyUI. Alpha available at https://t.co/kIFWlQkRcJ

American logs into her federal student aid account so we can see her actual loans and payments:

- She took out a $49,548.74 loan
- She’s made 120 payments, paying $25,558.36
- Her current balance is $50,121.33

So after paying $25,558.36, she now owes more than she took out. “It's all such a scam”

🚨 Shocking: Frontier LLMs score 85-95% on standard coding benchmarks. We gave them equivalent problems in languages they couldn't have memorized. They collapsed to 0-11%. Presenting EsoLang-Bench. Accepted to the Logical Reasoning and ICBINB workshops at ICLR 2026 🧵

JUST IN - Iran's attacks on Qatar have wiped out 17% of Qatar's LNG capacity for up to five years, causing an estimated $20 billion in lost annual revenue — Reuters

Open source continues to catch up with closed source. MiniMax 2.7 just dropped and will likely become the most used LLM on Claw in days 🚀

incredible.

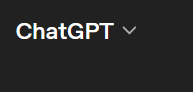

1) 91% of predictions in AI 2027 have come true so far
2) AI 2027 is on Jeopardy now

Using claude code to directly control a liquid handling robot is such a crazy experience

In the future, you'll turn DLSS off and see this