Omar Khattab

@lateinteraction

Asst professor @MIT CSAIL @nlp_mit. Research includes https://t.co/VgyLxl0oa1, https://t.co/ZZaSzaRaZ7 (@DSPyOSS), RLMs, and GEPA. Prev: CS PhD @StanfordNLP. Research @Databricks.

it's underappreciated how excellent OpenAI models are at web search

Introducing Mixedbread Wholembed v3, our new SOTA retrieval model across all modalities and 100+ languages. Wholembed v3 brings best-in-class search to text, audio, images, PDFs, videos... You can now get the best retrieval performance on your data, no matter its format.

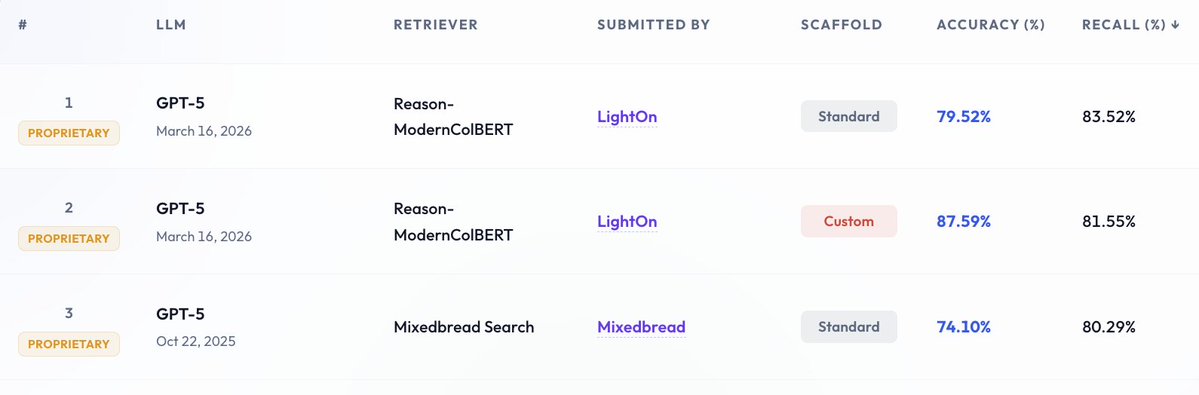

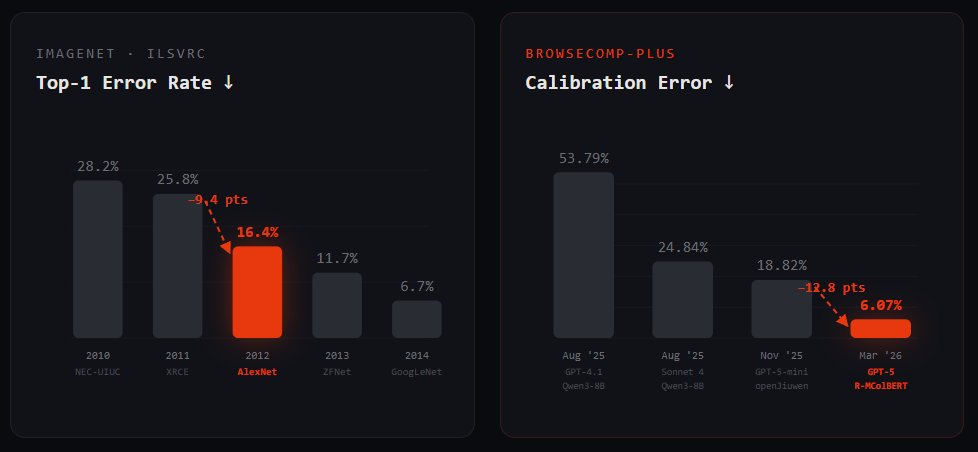

BrowseComp-Plus, perhaps the hardest popular deep research task, is now solved at nearly 90%... and all it took was a 150M model ✨ Thrilled to announce that Reason-ModernColBERT did it again and outperformed all models (including models 54× bigger) on all metrics

someone should try having RLMs write REPL code primarily using DSPy

imo, these kinds of regular amazing results don't mean that late interaction is extremely strong per se so much as they mean that dense single-vector retrievers are a permanent bottleneck on your quality and generalization. they're so bad!
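
For readers unfamiliar with the distinction, here is a minimal toy sketch of the two scoring styles. It assumes hand-made 3-dimensional token embeddings and uses mean pooling as a stand-in for a single-vector retriever (real dense retrievers are trained end to end, so this only illustrates the information lost when many token vectors are squeezed into one). The late-interaction score is the MaxSim sum used by ColBERT-style models. All names and data below are hypothetical.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]

def mean_pool(token_vecs):
    # Assumption: mean pooling stands in for a single-vector encoder.
    dim = len(token_vecs[0])
    mean = [sum(v[i] for v in token_vecs) / len(token_vecs) for i in range(dim)]
    return normalize(mean)

def single_vector_score(query_vecs, doc_vecs):
    # One vector per query and per document, compared with cosine similarity.
    return dot(mean_pool(query_vecs), mean_pool(doc_vecs))

def maxsim_score(query_vecs, doc_vecs):
    # Late interaction: each query token keeps its own vector and is matched
    # to its best document token; the per-token maxima are summed.
    return sum(max(dot(q, d) for d in doc_vecs) for q in query_vecs)

# Toy data (hypothetical): the query has two distinct "concepts".
query = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]

# doc_a contains both concepts exactly, plus unrelated tokens.
doc_a = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0],
         [0.0, 0.0, 1.0], [0.0, 0.0, 1.0], [0.0, 0.0, 1.0]]

# doc_b has a single token that is only a blurry mix of the two concepts.
doc_b = [normalize([1.0, 1.0, 0.0])]

for name, doc in [("doc_a", doc_a), ("doc_b", doc_b)]:
    print(name,
          "single-vector:", round(single_vector_score(query, doc), 3),
          "maxsim:", round(maxsim_score(query, doc), 3))
```

In this toy setup the pooled score prefers doc_b, because the unrelated tokens in doc_a drag its single vector away from the query, while MaxSim prefers doc_a, because each query token still finds its exact match. It is only an illustration of the bottleneck argument, not a benchmark.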