lmms-lab

@lmmslab

Feeling and building multimodal intelligence.

Agents are mind-blowing. But they don't remember things consistently. Or when they do, it's not safe. We built Engram. AES-256 encrypted. Keys stay on your device. Zero-knowledge sync. No cloud. No middleman. Use it. Your agent memory is yours. @lmmslab github.com/EvolvingLMMs-L… youtu.be/I6xVuNRMkVc

🚀 Introducing Video-MMMU: Evaluating Knowledge Acquisition from Professional Videos

- 🎥 Knowledge-intensive Videos: Spanning 6 professional disciplines (Art, Business, Science, Medicine, Humanities, Engineering) and 30 diverse subjects, Video-MMMU challenges models to learn and apply college-level knowledge from videos.
- ❓ Knowledge Acquisition-based QA Design: QA pairs are aligned with the three stages of cognitive learning:
  - Perception: Identifying knowledge.
  - Comprehension: Understanding the underlying concepts.
  - Adaptation: Applying the knowledge to practical scenarios.
- 📊 Quantitative Knowledge Acquisition Assessment (Δknowledge): A novel metric that quantifies how much a model improves after watching a video, providing unique insights into its knowledge acquisition capability.

Why It Matters?

- 🚀 Pushing the Boundaries: Video-MMMU moves beyond perception and understanding of video to knowledge acquisition from video, positioning videos as a powerful medium for transmitting knowledge.
- 📚 Cognitive-Level Insights: Video-MMMU introduces three cognitive tracks (Perception, Comprehension, and Adaptation) that mirror human learning stages, providing a structured framework to evaluate how effectively models acquire, understand, and apply knowledge.
- 🧠 Bridging the Gap: Video-MMMU uncovers critical limitations in current LMMs and provides insights for advancing LMMs’ capabilities in knowledge acquisition from video.

Project Page: videommmu.github.io
ArXiv: arxiv.org/html/2501.1382…
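The Video-MMMU post describes Δknowledge only as a measure of how much a model improves after watching a video; it does not give the formula. As a rough illustration, the sketch below assumes a normalized gain: the accuracy improvement divided by the headroom that was left before the video. The function name `delta_knowledge` and this exact normalization are assumptions made for the example, so the paper should be treated as the authority on the real definition.

```python
def delta_knowledge(acc_before: float, acc_after: float) -> float:
    """Normalized accuracy gain, in percent.

    ASSUMPTION: this normalization (gain divided by remaining headroom)
    is an illustrative choice, not a formula quoted from the post.
    Both accuracies are percentages in the range [0, 100].
    """
    for value in (acc_before, acc_after):
        if not 0.0 <= value <= 100.0:
            raise ValueError("accuracies must be percentages in [0, 100]")
    if acc_before == 100.0:
        # No headroom left, so a normalized gain is undefined.
        raise ValueError("acc_before is already 100%; gain is undefined")
    return (acc_after - acc_before) / (100.0 - acc_before) * 100.0


if __name__ == "__main__":
    # A model that goes from 40% to 55% closes a quarter of the gap to 100%.
    print(delta_knowledge(40.0, 55.0))  # 25.0
```

Under this assumed definition, a negative value means the model answered worse after watching the video than before it.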

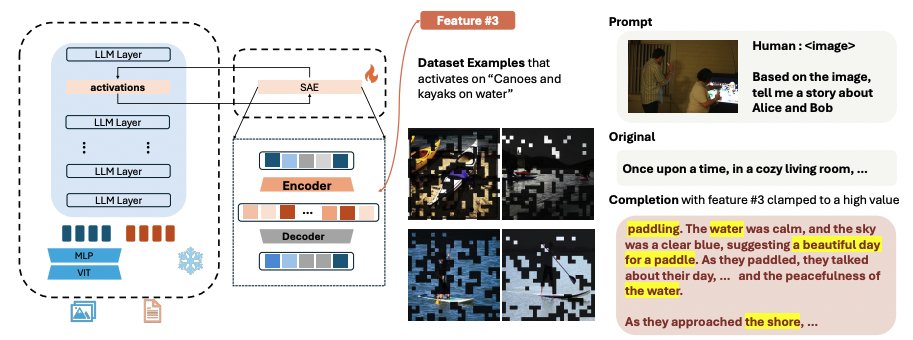

New work from LMMs-Lab! This time we present our latest research on the interpretation and safety of multimodal models.

TL;DR: We present "Large Multi-modal Models Can Interpret Features in Large Multi-modal Models". We successfully use a 72B model to interpret the open-semantic features of an 8B model, uncovering numerous important thought patterns inside multimodal models. Paper: arxiv.org/abs/2411.14982 Code: github.com/EvolvingLMMs-L… Examples: huggingface.co/datasets/lmms-…

🚀🔥 Introducing LLaVA-Critic, the first open-source large multimodal model designed to assess model performance across diverse multimodal tasks! LLaVA-Critic excels in two primary scenarios:

- 👨‍⚖️ LMM-as-a-Judge: It provides pointwise scores and pairwise rankings that closely align with human and GPT-4o preferences across multiple evaluation tasks, offering a viable open-source alternative to commercial GPT models.
- 🩷 Preference Learning: It offers reliable reward signals that significantly enhance the visual chat capabilities of LMMs through preference alignment.

To develop the "critic" capacity, we curate LLaVA-Critic-113k, a high-quality critic instruction-following dataset tailored to provide quantitative judgment and the corresponding reasoning process across a range of complex evaluation settings.

Explore more:

- 📰 Paper: arxiv.org/abs/2410.02712
- 🪐 Project Page: llava-vl.github.io/blog/2024-10-0…
- 📦 Dataset: huggingface.co/datasets/lmms-…
- 🤗 Models: huggingface.co/collections/lm…

Try our released models and dataset👆

(1/4) 🚀 Ready to supercharge your Video LLMs? 🎥 Meet LLaVA-Video-178K, a high-quality dataset for video instruction tuning with 1.3M samples across captions and Q&A! 💡 Perfect for further boosting Video LLMs, on top of the strong capability transfer from image/language shown in LLaVA-OV 🤖

We are organizing a new workshop on "Knowledge in Generative Models" at #ECCV2024 to explore how generative models learn representations of the visual world and how we can use them for downstream applications. For the schedule and more details, visit our website:

- 🔗 Website: sites.google.com/ttic.edu/knowl…
- 📅 Date: 30 September 2024, 2 PM
- 📍 Location: Brown 1, MiCo Milano, Italy 🇮🇹
- 🎤 Speakers: Amazing lineup to provide diverse perspectives: @davidbau, David Forsyth, @shalinidemello, @YGandelsman, @phillip_isola and @liuziwei7

Organizing with @DuXiaodan, @nickKolkin, @graceluo_, @ShuangL13799063 and @grshakh. See you all in Milano!

We worked with the LLaVA team to integrate LLaVA-OneVision into SGLang v0.3. You can now launch a server and query it using the OpenAI-compatible vision API, supporting interleaved text, multi-image, and video formats.
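To make the "OpenAI-compatible vision API" in the SGLang post concrete, here is a minimal sketch of what an interleaved text and multi-image request could look like. It uses only the Python standard library and the OpenAI chat-completions message shape (`text` and `image_url` content parts). The server address, port, model name, and the helper names `build_vision_request` and `send` are assumptions for illustration, not details from the post. Video input is not covered here.

```python
import json
import urllib.request

# ASSUMPTIONS (not stated in the post): where the server listens and what
# model name it accepts. Adjust both to match your own deployment.
BASE_URL = "http://localhost:30000/v1"
MODEL = "default"


def build_vision_request(parts, model=MODEL, max_tokens=128):
    """Build a chat-completions payload from interleaved parts.

    `parts` is a list of (kind, value) tuples, where kind is "text" or
    "image" and value is the text or the image URL. Order is preserved,
    so text and images can be interleaved freely.
    """
    content = []
    for kind, value in parts:
        if kind == "text":
            content.append({"type": "text", "text": value})
        elif kind == "image":
            content.append({"type": "image_url", "image_url": {"url": value}})
        else:
            raise ValueError(f"unsupported part kind: {kind!r}")
    return {
        "model": model,
        "messages": [{"role": "user", "content": content}],
        "max_tokens": max_tokens,
    }


def send(payload, base_url=BASE_URL):
    """POST the payload to the (assumed) chat-completions endpoint."""
    request = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


if __name__ == "__main__":
    payload = build_vision_request([
        ("text", "What differs between these two images?"),
        ("image", "https://example.com/a.png"),  # placeholder URL
        ("image", "https://example.com/b.png"),  # placeholder URL
    ])
    # Print the payload only; call send(payload) once a server is running.
    print(json.dumps(payload, indent=2))
```

Because the payload follows the OpenAI message format, an existing OpenAI client pointed at the server's base URL should be able to send the same request.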

We are thrilled to announce the milestone release of SGLang Runtime v0.2, featuring significant inference optimizations after months of hard work. It achieves up to 2.1x higher throughput compared to TRT-LLM and up to 3.8x higher throughput compared to vLLM. It consistently delivers superior performance when serving Llama-8B to 405B models on A100/H100 with FP8/BF16. SGLang is fully open-source and implemented in Python. As it matures from a prototype, we invite the community to join us in creating the next-generation efficient serving engine! Learn more at lmsys.org/blog/2024-07-2…