Guan Wang

@makingAGI

CEO of Sapient Intelligence. Exploring the path to AGI through brain-inspired AI. 🧠🤖 #AGI #NeuroAI

Ok, so this paragraph in isolation looks pretty bad, but based on the code, THEY DIDN'T TRAIN ON THE TEST SET. In fact, THEY DIDN'T PRETRAIN AT ALL. And that's the point of the paper! 1/

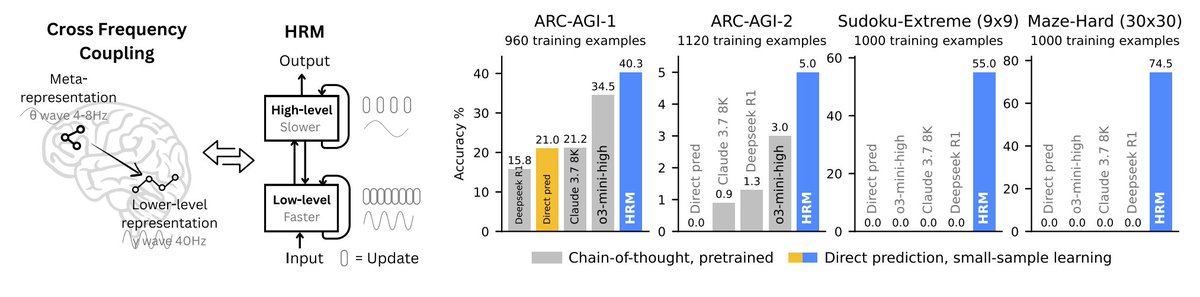

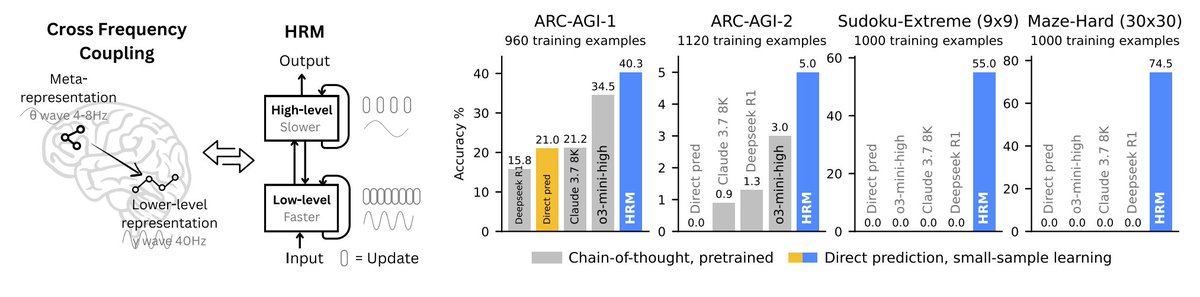

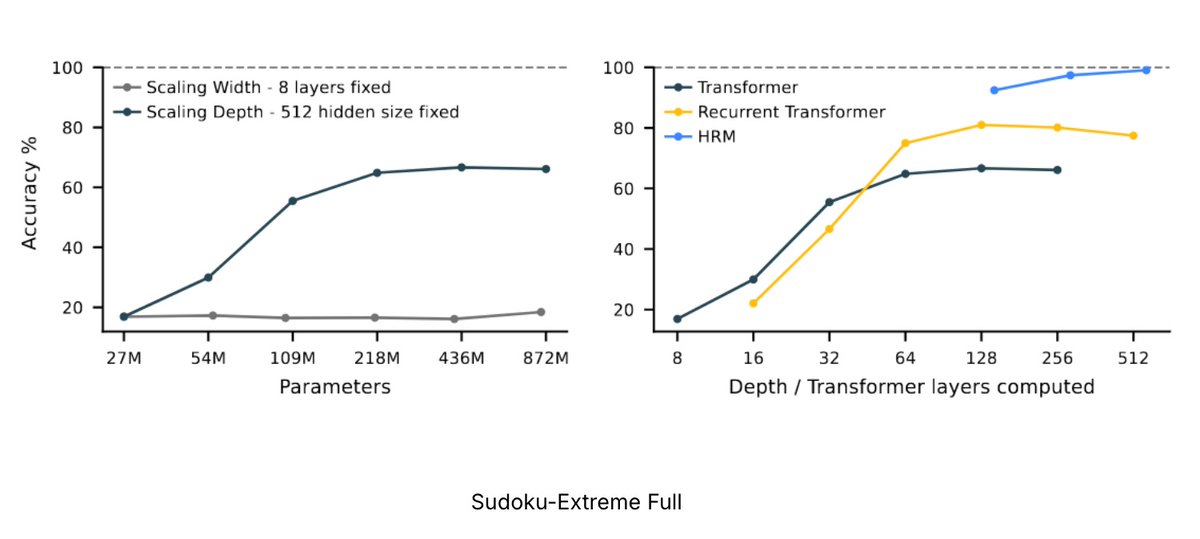

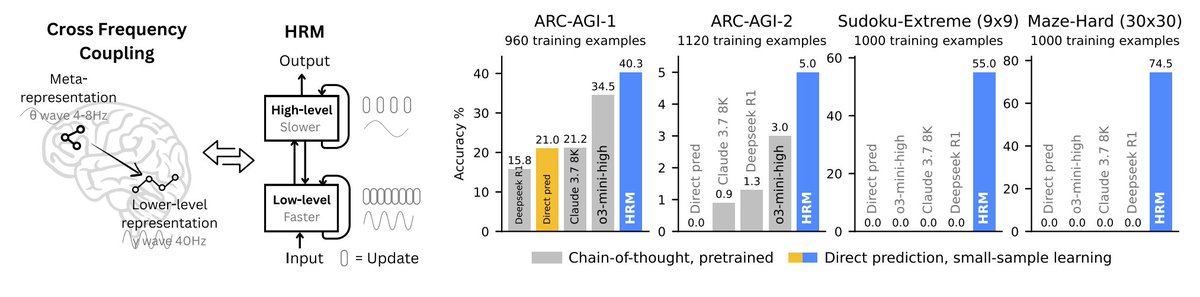

Some problems can’t be rushed: they can only be done step by step, no matter how many people or processors you throw at them.

We’ve scaled AI by making everything bigger and more parallel. Our models are parallel. Our scaling is parallel. Our GPUs are parallel.

But what if the real bottleneck isn’t size, but depth? What if the model just didn’t have enough serial steps to get it right?

Some problems need depth, not width. This is the Serial Scaling Hypothesis.

This is not the same as recent studies on scaling test-time compute, which focus on train vs. test and are agnostic to parallel vs. serial. For example, test-time majority voting increases compute by running models in parallel, but it doesn’t help when the task itself is serial.

We argue that what really matters is how the compute is structured. And for many real-world problems, it must be serial.

Read more at arxiv.org/abs/2507.12549 or 🧵. (In collaboration with @layer07_yuxi, Kananart Kuwaranancharoen and @YutongBAI1002)
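To make the majority-voting point concrete, here is a toy sketch. It is not from the paper; every name in it (`step`, `solve`, `bounded_model`, `majority_vote`, the modulus `P`) is hypothetical. The task is to apply an iterated map `x -> x^2 + 1 mod P` exactly `n` times. We simply *treat* this as inherently sequential for illustration, since each step needs the previous result. A "model" that can afford only `depth` serial steps stops early. Running `k` copies of it in parallel and voting adds compute, but it does not add the missing steps.

```python
from collections import Counter

P = 1_000_003  # hypothetical prime modulus, chosen arbitrarily for this toy


def step(x: int) -> int:
    """One serial step: the output depends on the previous state."""
    return (x * x + 1) % P


def solve(x: int, n: int) -> int:
    """Ground truth: apply `step` n times, strictly in sequence."""
    for _ in range(n):
        x = step(x)
    return x


def bounded_model(x: int, n: int, depth: int) -> int:
    """Hypothetical model limited to `depth` serial steps.

    It runs as far as its depth allows and answers with the state it reached.
    """
    return solve(x, min(n, depth))


def majority_vote(x: int, n: int, depth: int, k: int) -> int:
    """Spend k times more compute in parallel: k copies answer, the mode wins."""
    answers = [bounded_model(x, n, depth) for _ in range(k)]
    return Counter(answers).most_common(1)[0][0]


if __name__ == "__main__":
    print(solve(2, 4))                           # 458330  (2 -> 5 -> 26 -> 677 -> 458330)
    print(majority_vote(2, 4, depth=2, k=1000))  # 26: 1000 voters, still 2 steps short
    print(majority_vote(2, 4, depth=4, k=1))     # 458330: enough depth, one run suffices
```

The model is deterministic here to keep the sketch small. If each copy also made random slips, voting could average those slips away, but it still could not supply serial steps that no individual copy performs.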