Simon Willison

60.3K posts

@simonw

Creator @datasetteproj, co-creator Django. PSF board. Hangs out with @natbat. He/Him. Mastodon: https://t.co/t0MrmnJW0K Bsky: https://t.co/OnWIyhX4CH

Alibaba said nothing about open source as part of its future AI strategy in its earnings call. I thought it would at least pay some temporary lip service to open source. Qwen, as we know it, is dead.

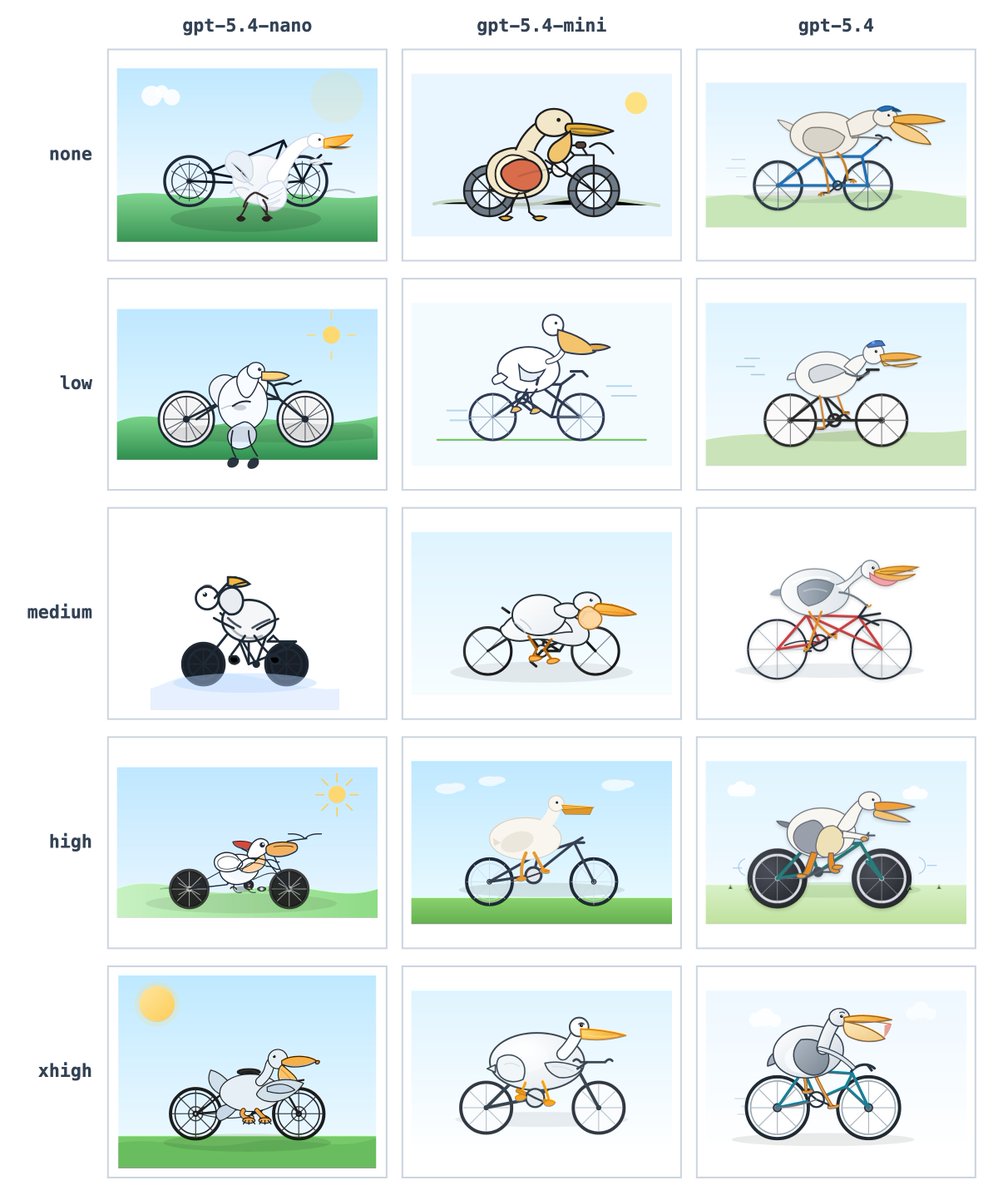

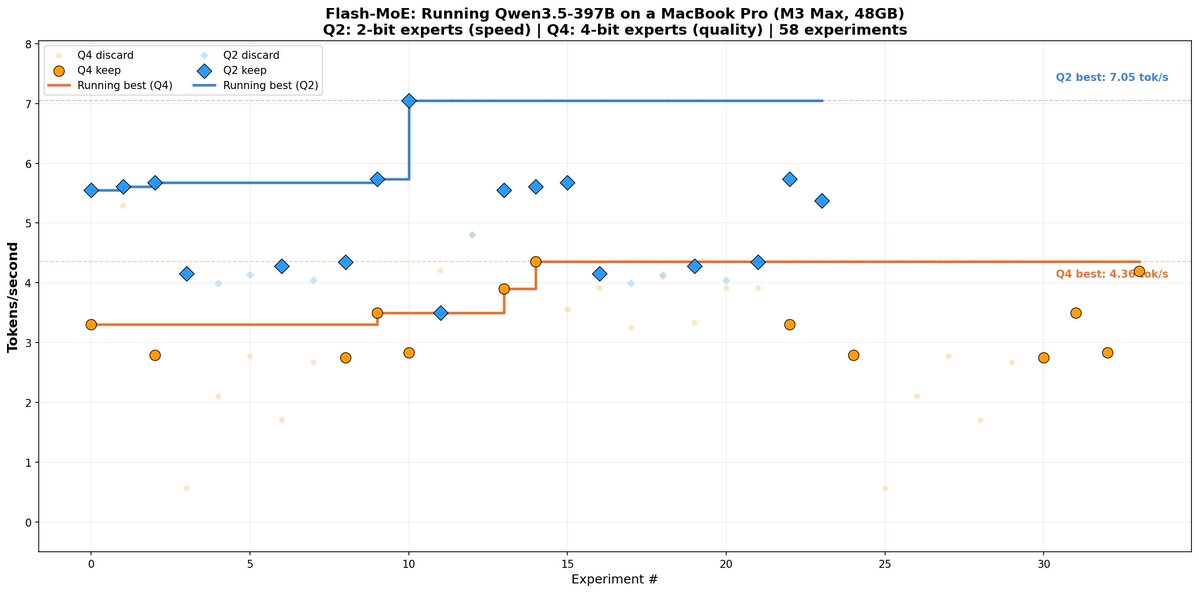

@simonw You bet. Literally, "tool calling" became the metric that got us back to Q4. Q2 was really great conversationally and very capable, but it's like running the model at temperature 10,000 for anything predictable.

Today is my last day at Apple. Building MLX with our amazing team and community has been an absolute pleasure. It's still early days for AI on Apple silicon. Apple makes the best consumer hardware on the planet. There's so much potential for it to be the leading platform for AI. And I'm confident MLX will continue to have a big role in that. To the future: MLX remains in the exceptionally capable hands of our team including @angeloskath, @zcbenz, @DiganiJagrit, @NasFilippova, @trebolloc (and others not on X). Follow them or @shasha for future updates.