Stephen Ra

@stephenra

Visiting Scholar @NYU Global AI Frontier Lab. Prev: Senior Director & Founding Team @PrescientDesign ∙ @genentech

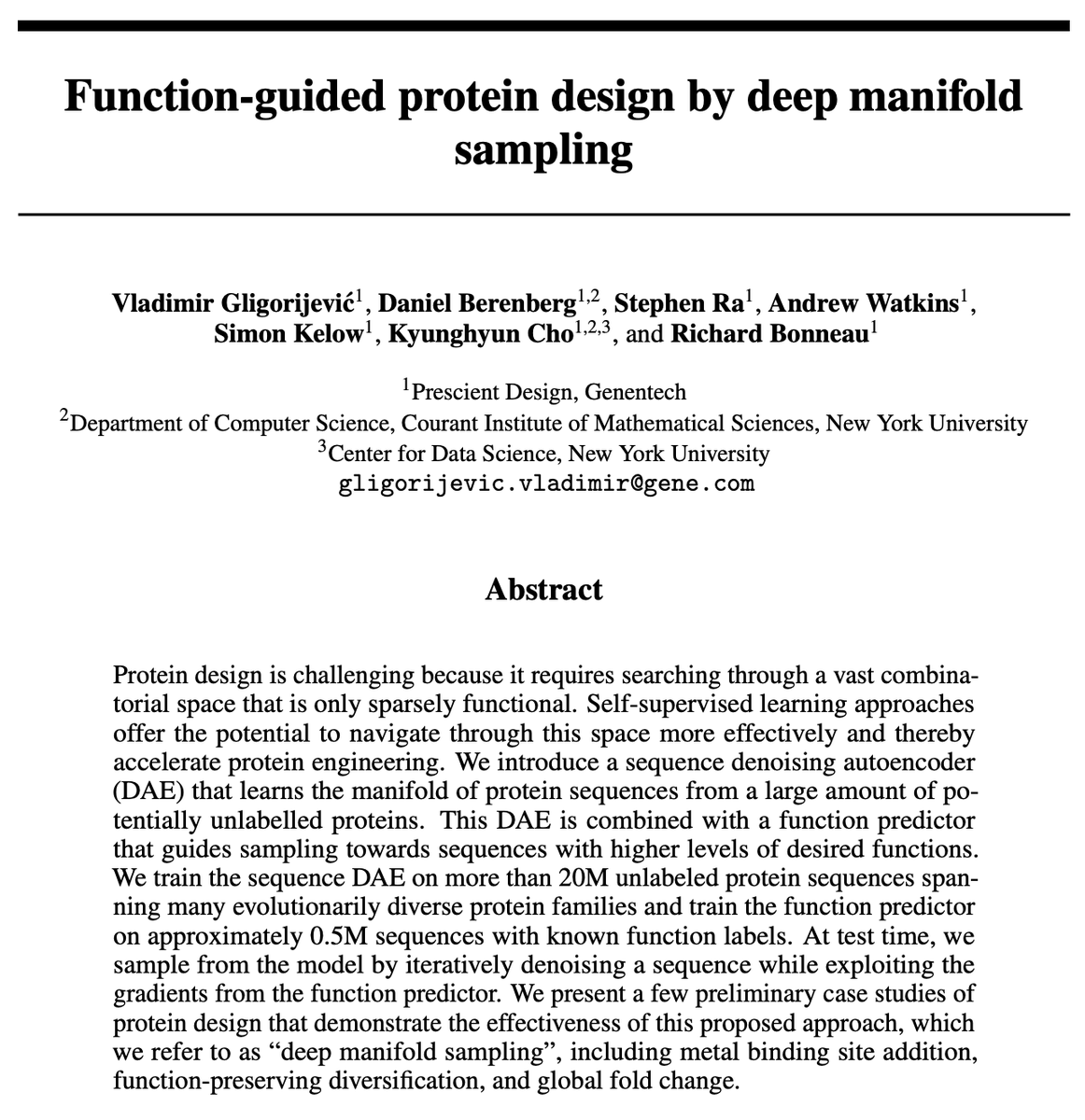

I can confirm that using a property predictor to accept/reject diffusion steps works very well for protein design (link in reply). This looks like the interpretability community rediscovering ideas that are pretty well developed in the latent Bayesian optimization lit.

I'm hiring a postdoc at Prescient Design, @genentech, to work on scalable experimental design for scientific discovery! Strong theory background and interest in practical algorithms encouraged. Apply here: tinyurl.com/2hxyvnvc

Why do major AI models tell left-wing voters in Japan to vote for the communist party? My new research paper, led by Sho Miyazaki.
In 2026, voters across the world will be asking AI to help them vote. How will the AI respond? We study this question in Japan, which recently held a snap election.
When voters provide policy positions, we find that the models rely heavily on this information, and in Japan, the models heavily recommend the communist party in response to left-wing positions, even though the positions we provided are held by a range of other parties.
Why are the AIs doing this? We’re not sure, but we have a theory: in Japan, the communist party operates a content-heavy, fully open website with a “newspaper” that is openly accessible for AI models. In contrast, many Japanese news outlets block AI models from accessing their content. The result: the Japanese Communist Party website is one of the most-cited “news sources” in our study.
This pattern of recommending the JCP is consistent across many models, including the most recent frontier models.
There’s much more work to do here, but we think our paper suggests two main takeaways:
1. AI models should be more careful about what sources they consider news, maybe especially in non-US contexts where the model companies may hold less policy expertise.
2. Parties and news sources that want to influence AI recommendations should think twice about excluding their content from AI. To paraphrase @tylercowen, when it comes to elections and voting, journalists may want to “write for the AI”! Governments may want to consider policies that allow this content to be used for voting recommendations but not for other AI model use cases.
Looking forward to everyone’s feedback as we prepare to submit this paper and turn to studying US voting recommendations in advance of November’s midterms. Check out the full paper below.

we always think of and try to support the social, technological and entrepreneurial ecosystem in NYC, at NYU Global AI Frontier Lab. in order to support the recent initiative by @NYCEDC, called NYC AI Nexus, we are hosting a happy hour together with the NYC AI Nexus partner @c10labs on April 2! please join us, hear about the NYC AI Nexus program and network with your fellow entrepreneurs, professionals and students in NYC. see the reply for the registration link!

Excited to unveil TwinWeaver: A new open-source framework bringing LLMs to multi-modal EHR! 🧬 We introduce the Genie Digital Twin (GDT), a pan-cancer foundation model developed on 93k+ patients. 📄 arxiv.org/abs/2601.20906 💻 github.com/MendenLab/Twin… [1/7]