Nacho


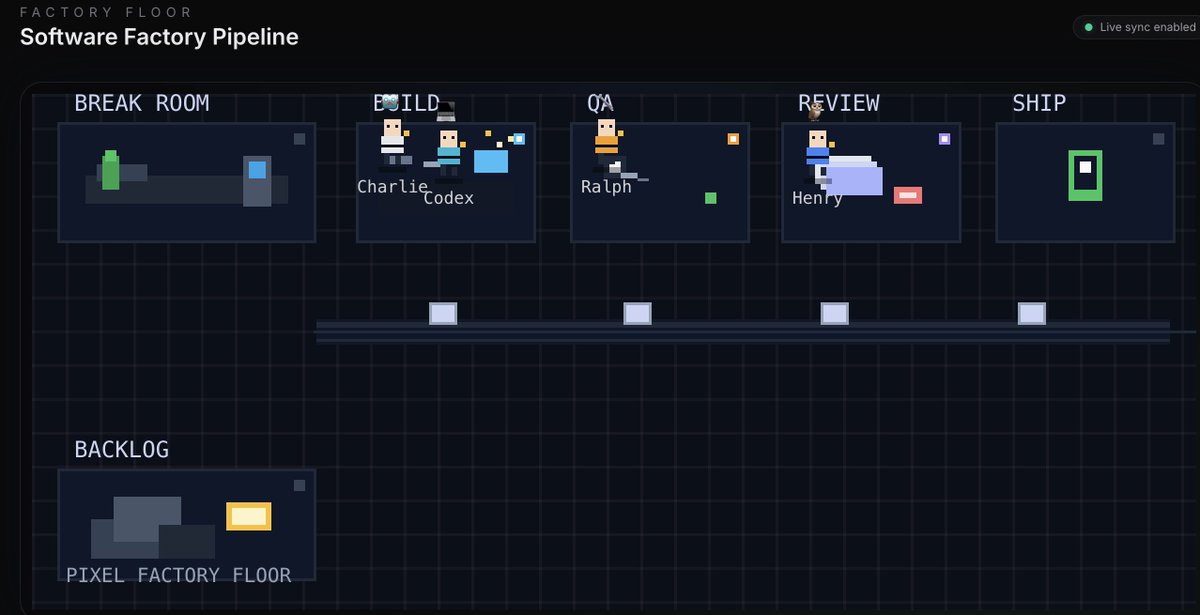

let me get you started in local AI and bring you to the edge.

if you have a GPU or are thinking about diving into the local LLM rabbit hole, the first thing to do before any setup is join x/LocalLLaMA. this is the community that will help you at every step. post your issue and we will direct you, debug with you, and save you hours of work.

once you're in, follow these three:

@TheAhmadOsman: the oracle. this is where you consume the latest edges in infrastructure and AI. if something dropped, you hear it from him first. his content alone will keep you ahead of most.

@0xsero: a one man army when it comes to model compression, novel quantization research, and new tools and tricks that make your local setup better. you will learn, experiment, and discover things you didn't know existed.

@Teknium: maker of Hermes Agent, the agent i use every day, from @NousResearch. from Teknium you don't just stay at the frontier, you get your hands on the tools before everyone else. this is where things are headed.

if you follow me, follow these three and join the community. you will be ahead of most people in this space. if you run into wrong configs, get stuck debugging hardware, or can't get a model to load, post there so we can help.

get started with local AI now. don't just understand the stack, own your cognition. don't pay OpenAI fees on top of giving them your prompts, your research, and your most valuable thinking to be monitored and metered. buy a GPU and build your own token factory.


Dario Amodei just dismantled the biggest myth in the AI industry. Open source AI isn’t free. It never was.

Amodei: “It’s not free. You have to run it on inference and someone has to make it fast on inference.”

For decades, open source meant something real. It meant a teenager in a basement could download the same tools as a Fortune 500 company. Could read the code. Could modify it. Could build something that competed with the giants. That was genuine democratization. That actually happened.

AI is different. Fundamentally. Physically. In ways the ideology hasn’t caught up to yet. Downloading the weights is the easy part. The part that actually costs something is turning the weights into a running system. Into responses. Into intelligence operating in real time at scale. That requires compute. Power. Infrastructure. The kind measured in billions of dollars and years of construction.

Amodei: “These are big models. They’re hard to do inference on. Ultimately you have to host it on the cloud. The people who host it on the cloud do inference.”

The open source debate was never about who owns the model. It was always about who owns the cloud.

And Amodei goes further. When a competitor drops a new open model, he doesn’t ask whether it’s open or closed. He doesn’t care about the licensing. He doesn’t engage the ideology.

Amodei: “I don’t think it mattered that DeepSeek is open source. I think I ask, is it a good model? Is it better than us at the things that matter? That’s the only thing that I care about.”

That’s the ruthless clarity of someone actually trying to win. While the media debates licensing frameworks, Amodei is asking one question. Is it better. Everything else is a distraction.

Amodei: “I don’t think open source works the same way in AI that it has worked in other areas. Here we can’t see inside the model.”

This isn’t Linux. You can’t read it. You can’t fork it. You can’t understand it the way generations of developers understood the tools they inherited. You can download it. And then you need a data center to run it.

The teenager in the basement who was supposed to be empowered by this revolution needs a billion dollars of infrastructure before the empowerment starts. The era of the basement coder rewriting civilization on a laptop is over. The future belongs to whoever commands the compute, owns the power grid, and can actually turn the intelligence on.

Open weights without infrastructure isn’t democratization. It’s a promise the physics of the universe won’t let us keep.