TNG Technology Consulting GmbH

1.6K posts

@tngtech

TNG, aka "The Nerd Group", is a consulting partnership focused on high-end information technology, particularly AI. 924 employees, 99.9% academics, ~53% PhDs.

Jensen Huang is loving the new Dell Pro Max with GB300 at NVIDIA GTC.💙 They asked me to sign it, but I already did 😉

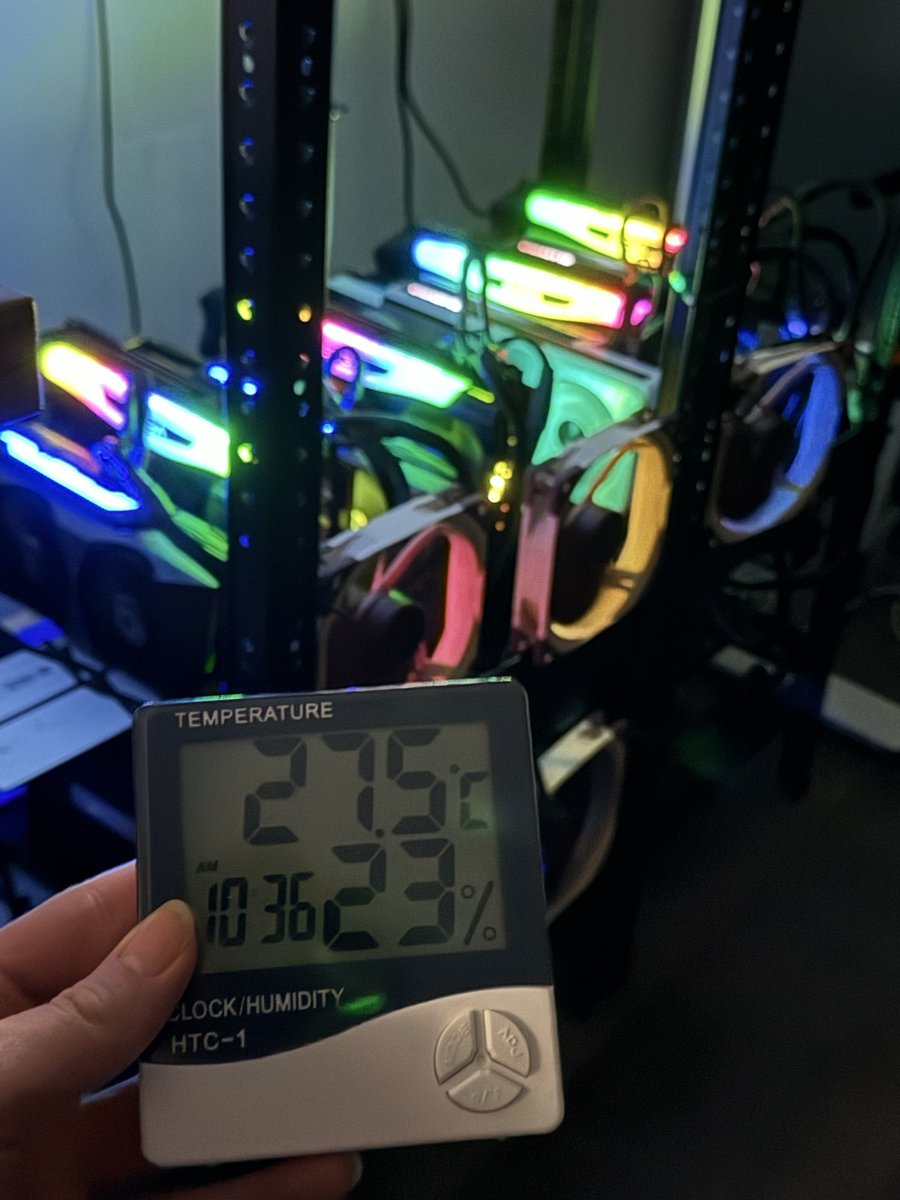

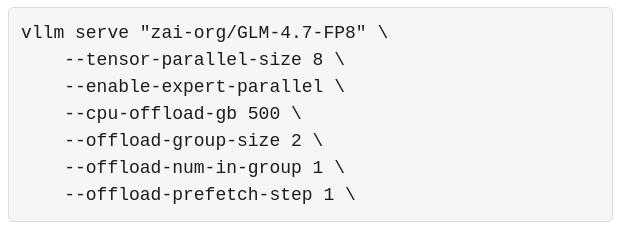

this guy has 29 models on huggingface at page 2 ranking. no lab behind him. no sponsorship. $2,000 from his own pocket on GPU rentals. he compressed GLM-4.7 to run on a MacBook and quantized Nemotron Super the week it dropped. all public. all free.

nvidia is a trillion dollar company with hundreds of teams but they are not the ones quantizing models middle of the night and pushing them out before sunrise. if nvidia stopped tomorrow their employees stop working. people like @0xSero would not. that is the difference between a paycheck and a mission.

@NVIDIAAI you talk about making AI accessible. the people actually doing it are right here. 29 models deep burning their own compute with no ask except more hardware to keep going. you do not need to build another program. just look at who is already building for you. one GPU to this man would produce more public value than a hundred internal sprints. i am not asking for charity. i am asking you to invest in someone who already proved it.

MiniMax-M2.7 just landed in MiniMax Agent. The model helped build itself. Now it's here to build for you. ↓ Try Now: agent.minimax.io

"can we get the base model?" sure. here's two. "can we get the code?" sure. here's SteptronOSS. "what about the SFT data?" coming soon. maximum sincerity, minimum barriers.

- Step 3.5 Flash Base: pretrained foundation
- Step 3.5 Flash Base-Midtrain: code, agents & long-context
- SteptronOSS: open-sourced, ready for your custom workflows
- SFT Data: coming soon for reference

not just the final checkpoint, a customizable pipeline.

🤗 huggingface.co/stepfun-ai/Ste…
🤗 huggingface.co/stepfun-ai/Ste…
💻 github.com/stepfun-ai/Ste…