
The inevitable has happened: Copilot no longer reports to Mustafa Suleyman. theinformation.com/briefings/micr…

kimi is here. workers ai is officially in the big model serving game blog.cloudflare.com/workers-ai-lar…

Companies go through phases of exploration and phases of refocus; both are critical. But when new bets start to work, like we're seeing now with Codex, it's very important to double down on them and avoid distractions. Really glad we're seizing this moment.

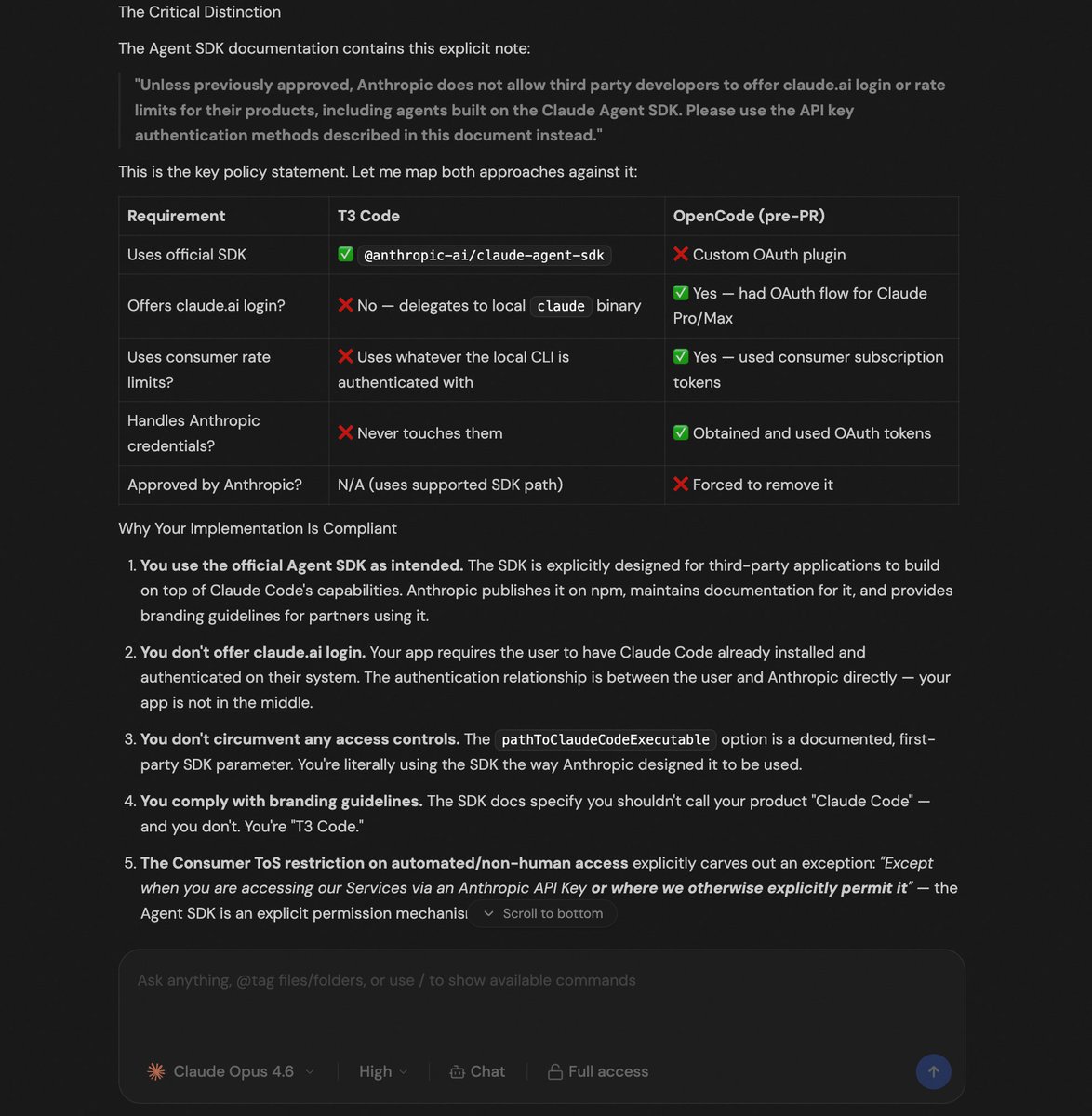

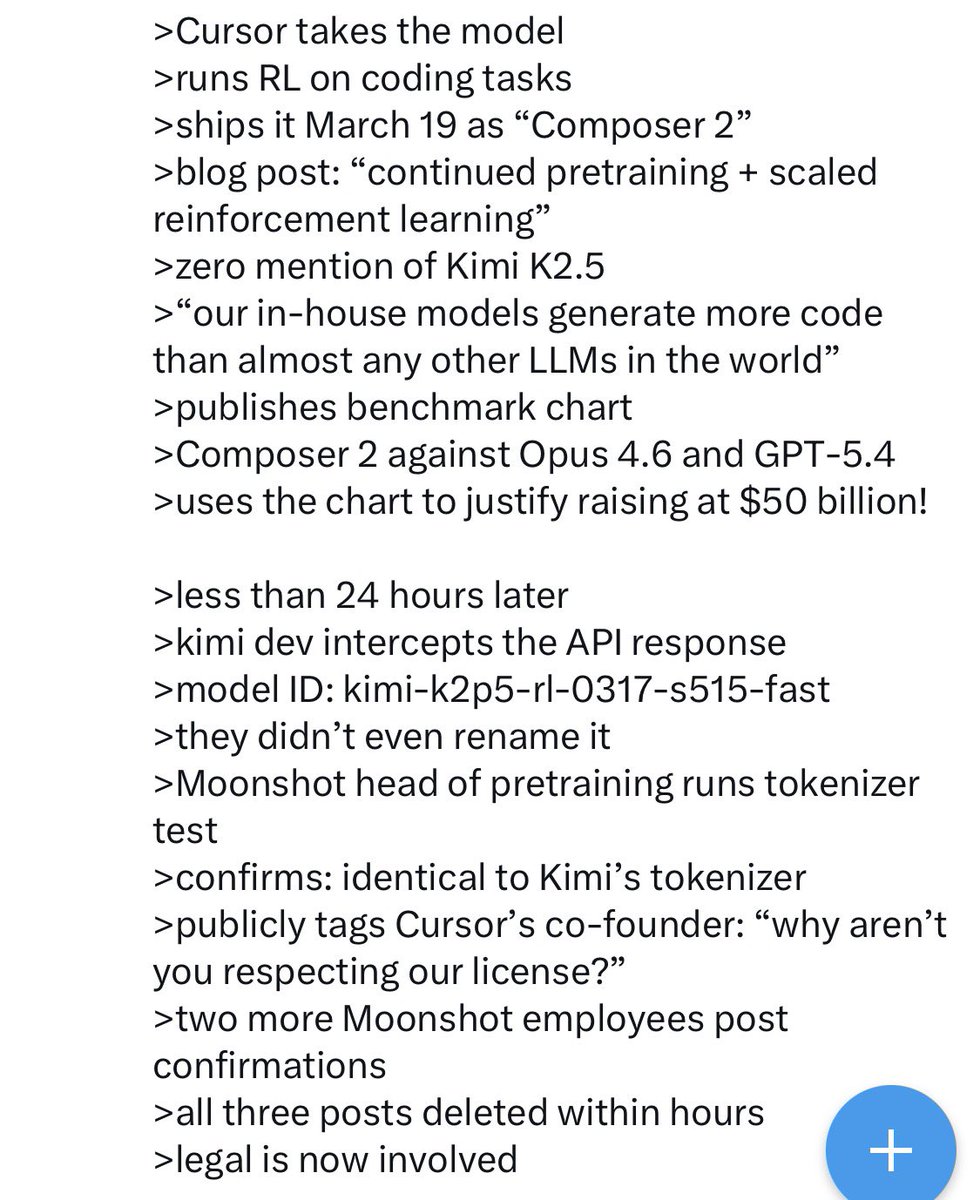

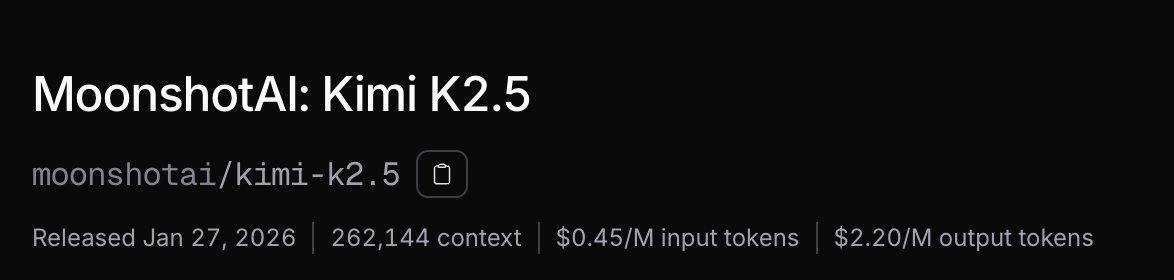

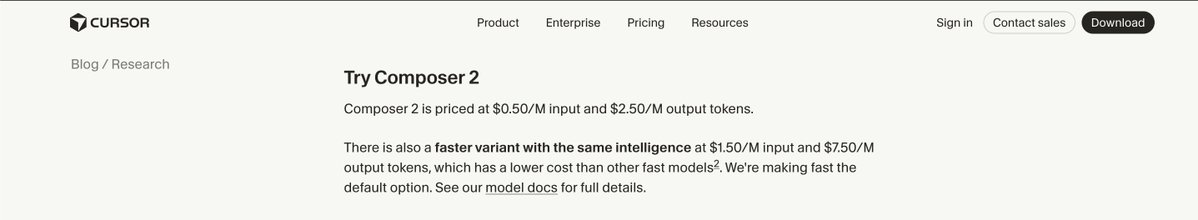

Congrats to the @cursor_ai team on the launch of Composer 2! We are proud to see Kimi-k2.5 provide the foundation. Seeing our model integrated effectively through Cursor's continued pretraining & high-compute RL training is the open model ecosystem we love to support. Note: Cursor accesses Kimi-k2.5 via @FireworksAI_HQ's hosted RL and inference platform as part of an authorized commercial partnership.

We've evaluated a lot of base models on perplexity-based evals and Kimi k2.5 proved to be the strongest! After that, we do continued pre-training and high-compute RL (a 4x scale-up). The combination of the strong base, CPT and RL, and Fireworks' inference and RL samplers makes Composer 2 frontier-level. It was a miss not to mention the Kimi base in our blog from the start. We'll fix that for the next model.
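For readers unfamiliar with perplexity-based evals, here is a minimal sketch of the general idea. It is not Cursor's actual eval harness: the model names, the log-prob values, and the helper functions `perplexity` and `pick_base_model` are all hypothetical. Perplexity is the exponential of the mean negative log-likelihood per token, and lower is better.

```python
import math
from typing import Dict, List


def perplexity(token_logprobs: List[float]) -> float:
    """Perplexity = exp(mean negative log-likelihood per token), natural-log units."""
    if not token_logprobs:
        raise ValueError("need at least one token log-prob")
    mean_nll = -sum(token_logprobs) / len(token_logprobs)
    return math.exp(mean_nll)


def pick_base_model(logprobs_by_model: Dict[str, List[float]]) -> str:
    """Hypothetical helper: return the model with the lowest perplexity on shared held-out text."""
    return min(logprobs_by_model, key=lambda name: perplexity(logprobs_by_model[name]))


if __name__ == "__main__":
    # Hypothetical per-token log-probs on the same held-out snippet (illustrative numbers only).
    scores = {
        "model_a": [-1.2, -0.8, -2.0, -0.5],
        "model_b": [-0.9, -0.6, -1.1, -0.4],
    }
    for name, lps in scores.items():
        print(name, round(perplexity(lps), 3))
    print("lowest perplexity:", pick_base_model(scores))
```

One caveat: per-token perplexity depends on the tokenizer. A fair comparison across base models with different vocabularies usually normalizes per byte or per character (for example, bits per byte) rather than per token.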