virtualuncle

143 posts

@virtualunc

I test & review AI tools so you don't waste $20. News, tutorials, automation, side hustles. Just what actually matters.

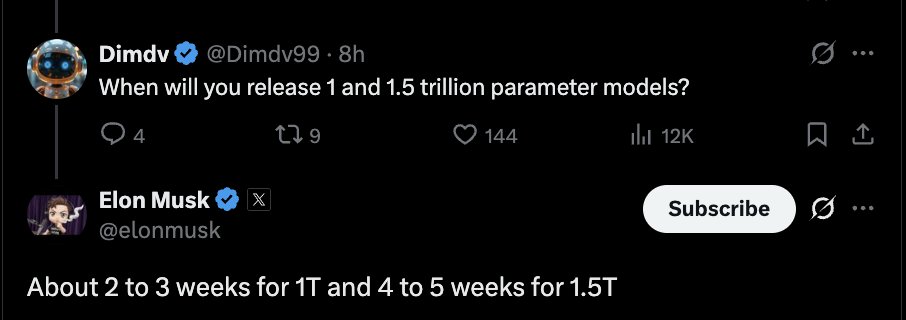

@Dimdv99 @testerlabor About 2 to 3 weeks for 1T and 4 to 5 weeks for 1.5T

Introducing Claude Managed Agents: everything you need to build and deploy agents at scale. It pairs an agent harness tuned for performance with production infrastructure, so you can go from prototype to launch in days. Now in public beta on the Claude Platform.

JUST IN: Nearly half of the U.S. data centers planned for 2026 are reportedly expected to be delayed or canceled.

@marclou Marc, I don't understand why you're still using Cursor. I'm confused, am I missing something? Or is it your personal choice to code inside Cursor? Can you tell me what made you stick with Cursor instead of using Claude Code or Codex directly?

1/ today we're releasing muse spark, the first model from MSL. nine months ago we rebuilt our ai stack from scratch. new infrastructure, new architecture, new data pipelines. muse spark is the result of that work, and now it powers meta ai. 🧵

Claude Mythos Preview is 5x as expensive as Claude Opus 4.6