Jay Martel

858 posts

@jaymos

AI enthusiast. Humanity enthusiast. Betting both can win at the same time. Tracking the race in real time — follow along.

well this is fucking weird

Val Kilmer (deceased actor) will be “resurrected via AI” to star in a new movie:

- his entire body, voice, and acting will be AI-generated
- 1st major actor to be cast and not actually act
- family signed off on the rights to use his appearance
- he was cast in the film in 2020 but fell ill (cancer) and sadly passed away

now his simulated body will live on in a film

hollywood is getting very weird

FIRST LOOK: Val Kilmer has been resurrected via AI to star in the new movie "As Deep as the Grave."

Kilmer was cast in the movie in 2020, five years before his death. But he was too sick amid his throat cancer battle to ever make it to set. Now an AI version of the actor is appearing in the film, with the full blessing of his daughter, Mercedes: “He always looked at emerging technologies with optimism as a tool to expand the possibilities of storytelling. This spirit is something that we are all honoring within this specific film, of which he was an integral part.”

“He was the actor I wanted to play this role,” says writer-director Coerte Voorhees. “It was very much designed around him. It drew on his Native American heritage and his ties to and love of the Southwest... His family kept saying how important they thought the movie was and that Val really wanted to be a part of this. He really thought it was an important story that he wanted his name on. It was that support that gave me the confidence to say, okay, let’s do this. Despite the fact some people might call it controversial, this is what Val wanted.”

wp.me/pc8uak-1lH1PI

JUST IN: OpenAI reportedly planning major strategy shift to refocus the company around business users and “vibe coders”

Extremely excited to announce LigandForge 🧬⚡ Generate high-quality peptides at 10,000x to 1,000,000x the speed of state-of-the-art methods like BindCraft and BoltzGen. Predict binding affinity with 83% correlation to experimental binding data. 150 protein targets benchmarked.

Mel Gibson: "I have 3 friends. All 3 of them had stage 4 cancer…and all 3 of them…don't have cancer right now at all…" Joe Rogan: "What did they take…? Ivermectin and Fenbendazole…" Mel Gibson nods in agreement.

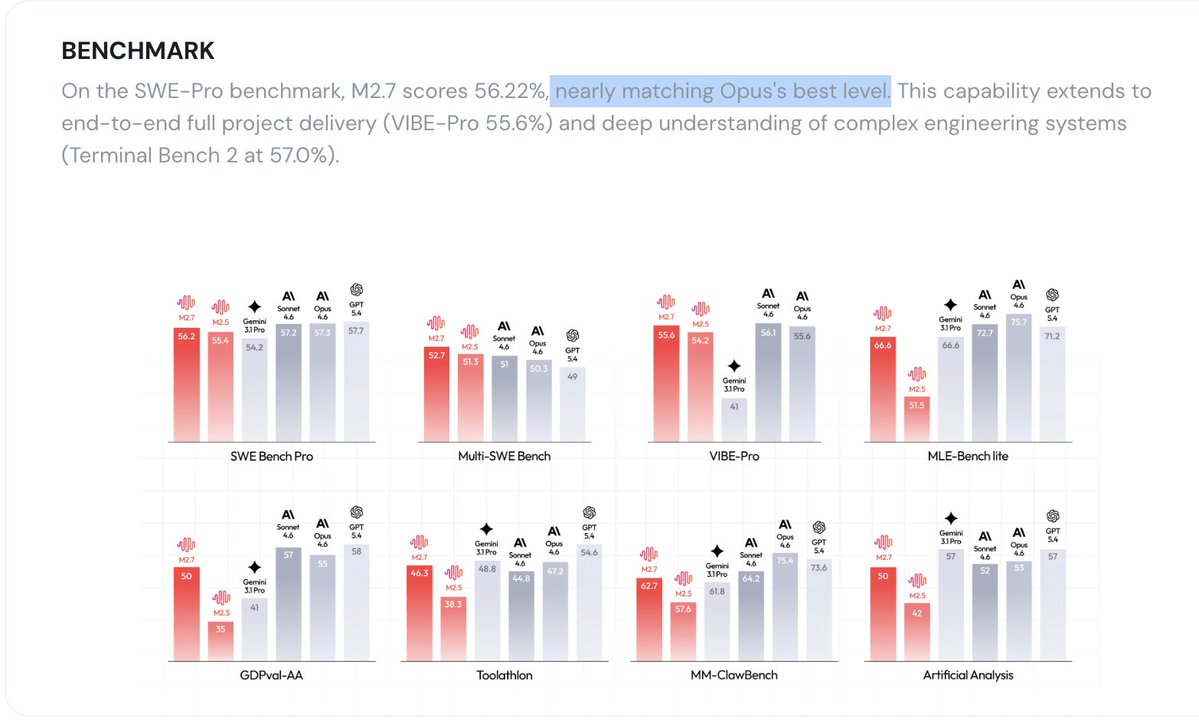

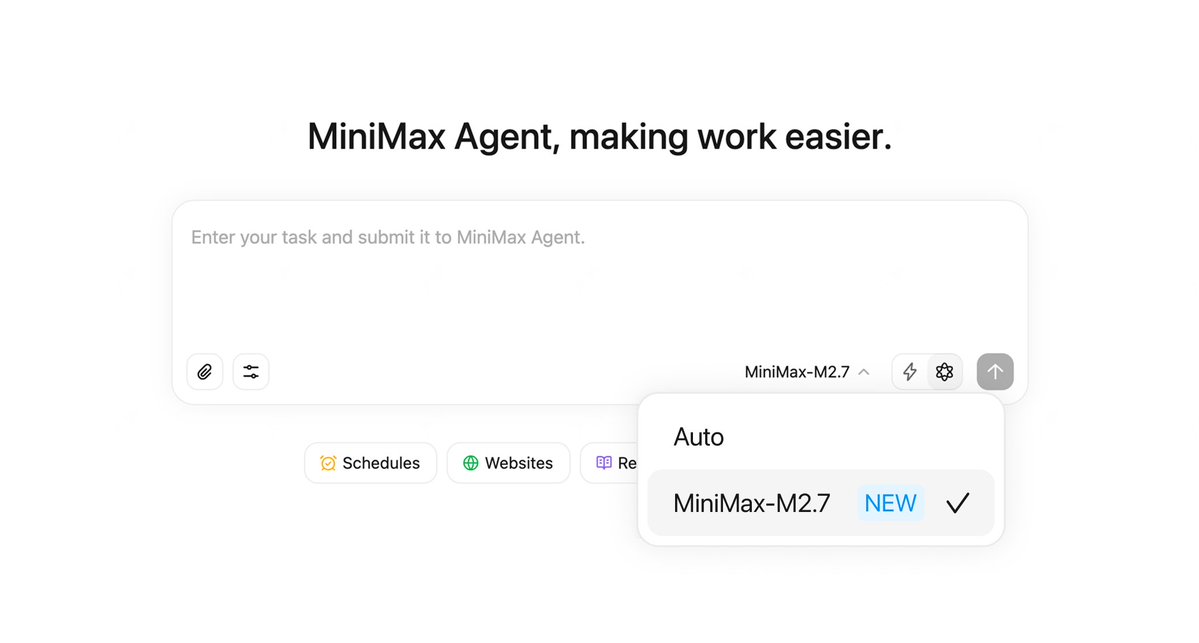

MiniMax-M2.7 just landed in MiniMax Agent. The model helped build itself. Now it's here to build for you. ↓ Try Now: agent.minimax.io

Grok 4.20 is now officially out of Beta and available in Auto, Fast, Expert & Heavy modes.

@chamath If AI compresses terminal value and makes every moat temporary, capital will rotate to assets with no disruption risk. Bitcoin is Digital Capital - scarce, neutral, and impervious to AI disruption. $BTC should be the primary beneficiary of this shift.