🌴Marc Averitt🌴

@OCVC

VC at Okapi Venture Capital✌️Hit me at: [email protected]

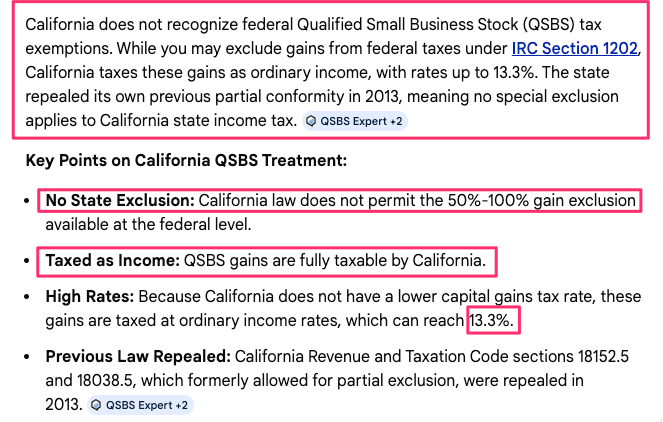

Big news for NY startups! QSBS is here to stay. From @politico: the idea now “seems to be moot” following strong, coordinated engagement from across the tech community—including a @TechNYC letter with 1,600+ founders, early employees, and investors. This is a clear example of what’s possible when the ecosystem shows up together. We’re grateful to everyone who spoke out and helped ensure policymakers understood what was at stake. New York remains the best place to build. 🗽

Over the last few months we’ve rebuilt how we work @usv entirely. We’ve always loved being small in size (team; fund, relatively) but with tentacles that reach broadly. Now, that’s easier than ever with an agentic workforce. The agents are on our emails and messages, pushing back, suggesting new ideas, finding opportunities. It’s a work in progress—so, as we always do, we like to publish it half-baked in the hopes that we’ll learn what we’re missing and where we can push further. If you’ve built an agentic workforce to change how your partnership operates, we want to share ideas.

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

Devs are acting like they didn’t write slop code before AI.

Security without borders. Data that stays home. CrowdStrike and Schwarz Digits have partnered to deliver the AI-native CrowdStrike Falcon platform on STACKIT’s sovereign cloud. 🦅 Top-tier protection: Stop breaches with AI-native speed. 🛡️ Total sovereignty: Fully EU-operated and aligned with NIS2 & CRA. ⚡ AI at scale: Grow workloads without compromising data residency. The future of European cyber defense starts now. Read more: crwdstr.ke/6018h7cxq