Harbor Framework

@harborframework

Using Skills well is a skill issue. I didn't quite realize how much until I wrote this. The best Skills can completely transform how your team works.

RuneBench is out: measuring long-horizon goal optimization across 14 AI coding models inside RuneScape.

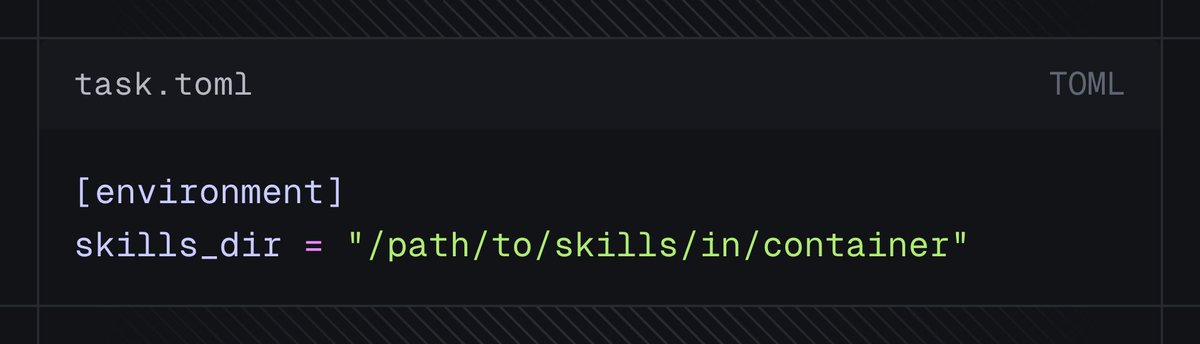

New in the cookbook: Harbor RL trains models on real software engineering tasks inside sandboxed containers. The agent gets a bash shell, an instruction, and a test suite. If the tests pass, it gets the reward. github.com/thinking-machi…
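The pass/fail reward described in that post can be sketched roughly as follows. This is an illustration only, not Harbor's or the cookbook's actual code: the function name `reward_from_tests`, its parameters, and the use of a local subprocess in place of a sandboxed container are all assumptions made for the example.

```python
import subprocess
import sys


def reward_from_tests(test_command, workdir=None, timeout_s=600):
    """Hypothetical helper: 1.0 if the test command exits with status 0, else 0.0."""
    try:
        result = subprocess.run(
            test_command,
            cwd=workdir,          # directory the agent was working in (assumed)
            capture_output=True,  # keep test output out of the caller's console
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        # A test suite that hangs is treated as a failure.
        return 0.0
    return 1.0 if result.returncode == 0 else 0.0


# Stand-ins for a real test suite: one command that succeeds, one that fails.
passing = [sys.executable, "-c", "raise SystemExit(0)"]
failing = [sys.executable, "-c", "raise SystemExit(1)"]
print(reward_from_tests(passing), reward_from_tests(failing))
```

In a real setup the command would be the task's own test suite, run inside the container after the agent finishes its episode.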

“harbor is the correct way to express tasksets for terminal agents” - @willccbb

if you’re not in the RLFT industry you do not understand how quickly @harborframework has come to completely dominate the landscape right now for RL infra and evals. it is standing room only at this @modal x @willccbb meetup where Harbor is basically required knowledge. my team at Cog has made it a top priority to migrate all evals to Harbor as well. it’s kinda unreal given that it was basically launched by a few guys in a discord needing something better for TerminalBench 2 (we posted the launch on @latentspacepod youtube look it up). not at all surprised this one got the @andykonwinski blessing and you should expect an entire mini industry of Harbor based evals and benchmarks and infra startups this year.

@markatgradient @swyx @harborframework @modal verifiers is focused on being a domain-agnostic layer for converting any eval into a trainable RL environment, including all of the token-level plumbing. harbor is the correct way to express tasksets for terminal agents. diff layers of the stack.

A potential partner asked for our benchmark numbers. At the time, benchmarks had us behind other agents. We spent a weekend fixing that: ran Cline against Terminal Bench's 89 real-world tasks, diagnosed every failure, and shipped fixes. 47% → 57%.

.@harborframework / @alexgshaw @ryanmart3n @lschmidt3 @andykonwinski (@LaudeInstitute) / Agent evaluation needs shared infrastructure. Harbor standardizes benchmarks through one interface: repeatable runs, standardized traces, production-grade practice. Born from @terminalbench (Batch 1).