Do you know what actually happens when you hit "Connect" on a Claude integration?

I looked into it.

Here's what most people don't realize: When you connect an app to Claude — Slack, Google Drive, GitHub, whatever — you're not just granting access.

You're handing credentials to a system that has no native concept of "need to know."

In 2025 alone, 28.6 million secrets were leaked from config files and environment variables.

That number went up 34% year over year. And that was before most companies started rolling out AI agents at scale.

The way most of these integrations work today:

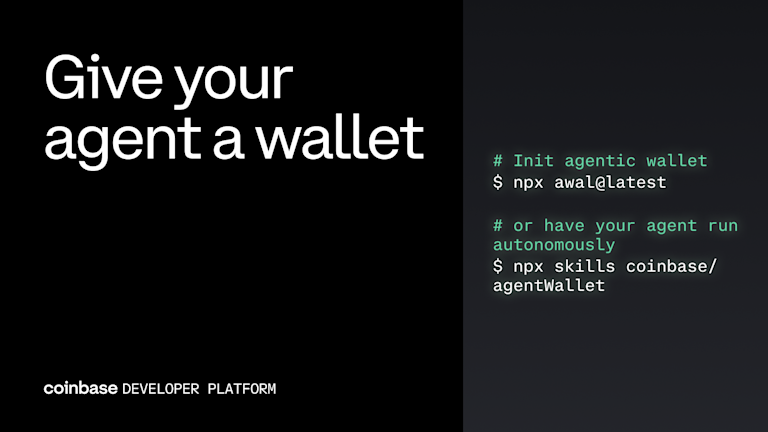

• Your API keys and tokens get stored in a config file

• The AI agent reads them at runtime

• The agent holds them in memory while it works

• There's no scoping. No time limits. No revocation.

Here's the part that should make any CTO uncomfortable:

Prompt injection, where malicious content tricks an agent into doing something unintended, isn't just a jailbreaking trick anymore. In agentic systems, it's a credential exfiltration mechanism.

The agent doesn't know it's happening. No alert fires. The credentials leave through approved channels.

As long as an AI agent can see your credentials, it can lose them.

Companies aren't banning AI because they're paranoid.

They're banning it because nobody's solved the credential question yet.

English