David Hendrickson

@TeksEdge

CEO & Founder | PhD | Startup Advisor | @Columbia | Author Generative Software Engineering https://t.co/9oqvHuTX5f | 🔔 Follow for AI & Vibe Coding Tips 👇

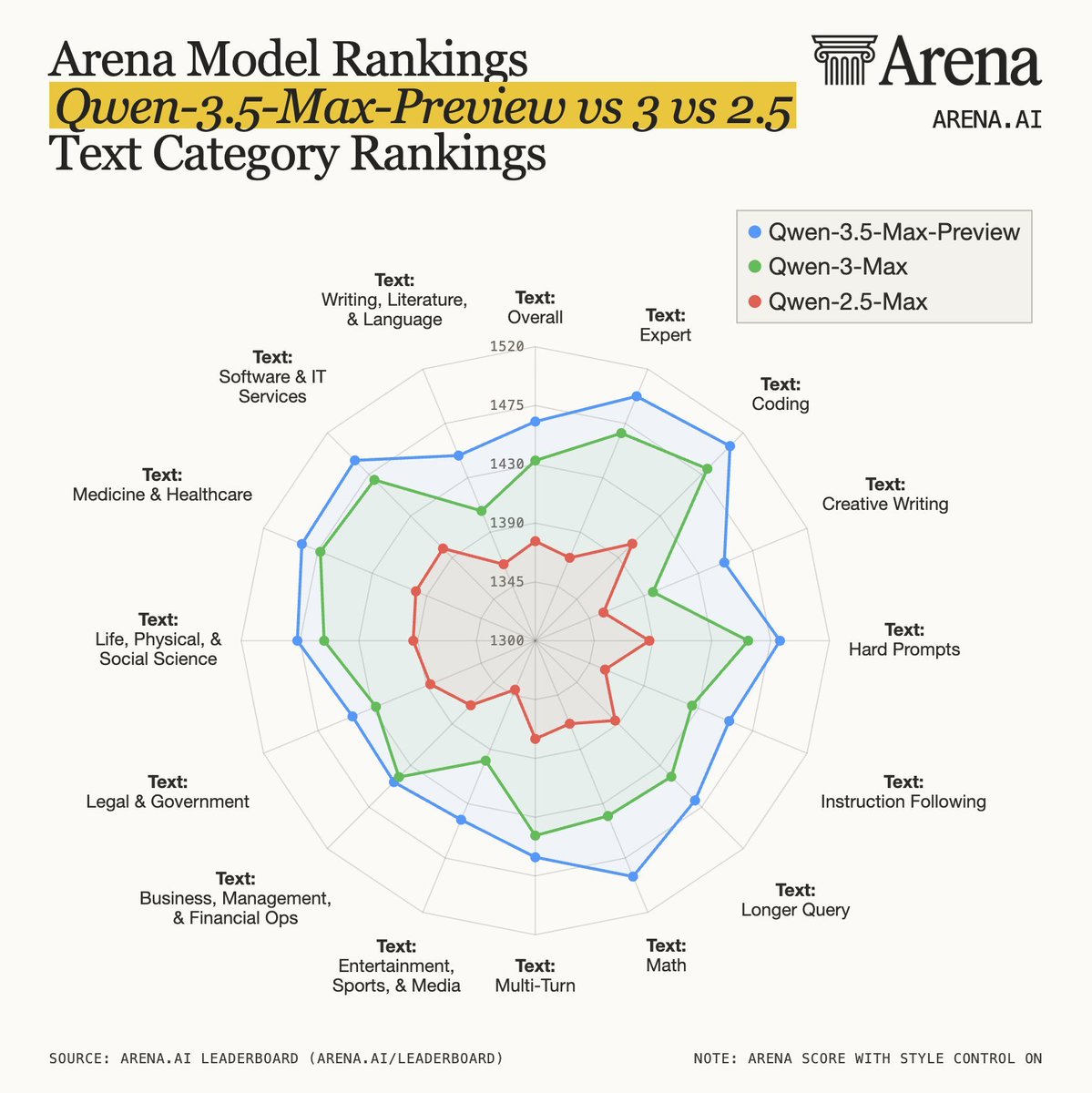

Qwen 3.5 Max Preview has landed in the top 10 for Arena Expert and the top 15 for Text Arena, with particular strength in Math.

Highlights:
- #3 Math
- #10 Expert
- #15 Text Arena
- Top 20 for Writing, Literature & Language, Life, Physical, & Social Science, Entertainment, Sports, & Media, and Medicine & Healthcare

Congrats to the @Alibaba_Qwen team for this new milestone!

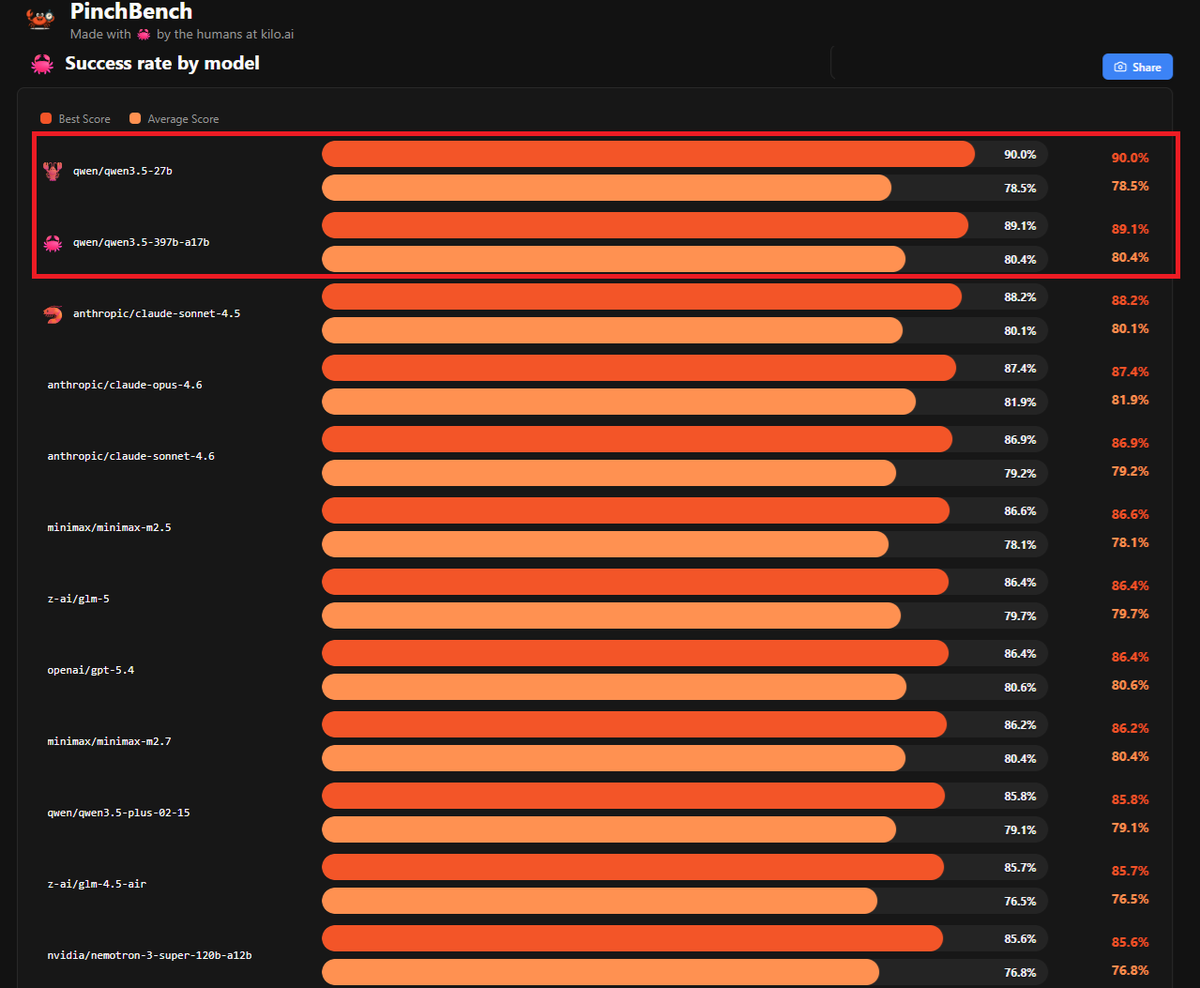

💡 It's still amazing to me that you can run an Unsloth version of Qwen3.5-27B at home at about 10 tokens per second (tps) on a $2K AMD Ryzen AI Max+ 395 machine with 64GB of unified memory, with nearly the same quality as Claude Opus 4 (May 2025 release).

Cursor has released Composer 2, a frontier-level coding model with higher token efficiency, along with a faster default variant. It looks like the rumor about it beating Opus 4.6 referred to Terminal-Bench 2.0, the only benchmark score they released aside from their own internal benchmark and SWE-Bench Multilingual. cursor.com/blog/composer-2

OpenViking has hit GitHub Trending 🏆 with 10k+ ⭐ in just 1.5 months since open-sourcing! Huge thanks to all contributors, users, and supporters. We’re building solid infra for the Context/Memory layer in the AI era. OpenViking will keep powering @OpenClaw and more agent projects 🚢🦞

Apparently Cursor is going to release a coding model that is better than Opus 4.6 and cheaper as well (maybe tomorrow). Can they keep doing this regularly to keep up, though?