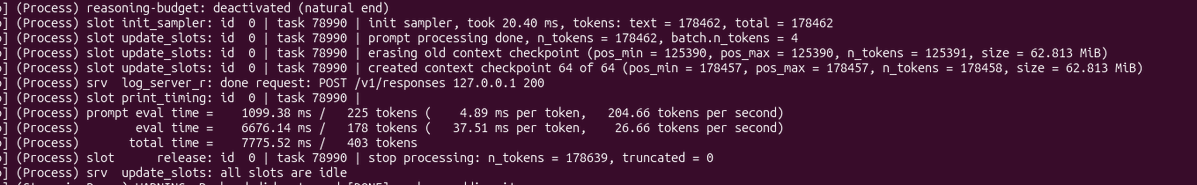

@AIatAMD @NousResearch @lmstudio Did exactly this a few days ago. Still fighting slow compression, insane delays as the context grows, delegating tasks to a faster model than qwen3.6, etc. Would appreciate more articles like this, but on advanced Hermes fine-tuning for Ryzen AI Max+.
