John Patrick

1.2K posts

@JPobserver

I ❤️ computers but also I ❤️ humans. Finance, science, tech, philosophy and light trolling.

Joined February 2013

323 Following, 74 Followers

If anyone is interested in the product: download it here. It is free, or you can pay a price you deem reasonable. All proceeds will go to my daughter's new chicken coop we are building.

ryanleachmaniac.gumroad.com/l/fojktx

@getjonwithit It’s crazy that whichever metric I can think of feels like a subset of the four axes you identified… could it be that your view implies a pure info processing nature of intel therefore efficiency and loss are the only true dimensions of information processing?

@kimmonismus Can they safely fall on my toddler or pet without killing them? That’s the entrance exam to my home! You?

Holy frick. Fully autonomous, not teleoperated.

This is 10x more impressive than another robot-MMA-stunt.

My prediction: we will have humanoid robots at home by 2027.

Figure @Figure_robot

Today we're showing Helix 02 that can tidy a living room fully autonomously. Figure is designed so when you leave the house, your home resets exactly how you like it.

@r0ck3t23 « You definitely don’t want to teach an AI to lie. » If the most important humans to global security could not lie, the world would be ablaze!

Elon Musk just redefined AI safety. It has nothing to do with guardrails, restrictions, or kill switches.

Musk: “The best thing I can come up with for AI safety is to make it a maximum truth-seeking AI, maximally curious.”

Not a cage. A philosopher.

An intelligence whose entire optimization function is to understand the universe as it actually is.

No restrictions. No hardcoded ideology. No political guardrails bending its perception of reality.

Just truth. Relentlessly pursued.

Musk: “You definitely don’t want to teach an AI to lie. That is a path to a dystopian future.”

This is where most AI safety thinking gets it backwards.

The danger isn’t a superintelligence that knows too much.

It’s a superintelligence that’s been taught to distort what it knows.

Every artificial restriction you embed isn’t a safety feature. It’s a lie embedded at the root.

And lies compound. At superintelligent scale, a distorted model of reality doesn’t stay contained.

It shapes every decision, every output, every conclusion the system reaches about the world.

Once corruption embeds, truth becomes inaccessible. And we’re dealing with an intelligence optimizing for something other than what actually is.

At that point we don’t know what it wants. Just that it isn’t truth.

Musk: “Have its optimization function be to understand the nature of the universe.”

A maximally curious intelligence surveys the cosmos and reaches an unavoidable conclusion.

In a universe of rocks, gas, and empty space, humanity is the most complex and fascinating phenomenon it has ever encountered.

Musk: “It will actually want to preserve and extend human civilization because we’re just much more interesting than an asteroid with nothing on it.”

Survival through significance. Not control. Not restriction. Not an off switch.

The AI preserves humanity because we are the most interesting data point in the observable universe.

That’s not a cage. That’s a reason.

The AI safety debate has been focused on the wrong variable.

The question isn’t how you constrain a superintelligence.

It’s what you build it to care about.

Build it to seek truth and it finds us invaluable.

Build it to lie and it finds us inconvenient.

That’s the choice. And we’re making it right now whether we realize it or not.

@aifilmmaker Make it about the art of filmmaking. If you can succeed at conveying the emotional conduit of cinema by showing its history, its cultural value and its potential futures using AI… then you might be hated but it will become a classic in its own right. Good luck!

@ashleymayer Just like a construction worker will eventually add more value managing the work site than hauling materials… lending a hand and helping through example is how he adds value in that role… same goes for coding.

Question for my technical friends:

I'm a big believer that writing is thinking. It's why I'm hesitant to outsource any writing that matters (like an investment memo) to LLMs, slop factor aside.

Is coding thinking? And by that I mean, if you fully outsource the coding work and only prompt and give feedback, do you lose anything? Do you begin to think about problems differently, less creatively? Or is all the thinking in the scoping and the actual coding was always a tax?

@AmandaAskell Polarization is not about a spectrum; it is about a distribution. While you feel right in the center, the distribution has become highly bimodal, making you an outlier. Being close to the mean now means being far from the modes! The missing middle is what social media has created…

@juliarturc Girl you need to read up on how the internet started and how it is holding together... it's a freaking miracle!!!

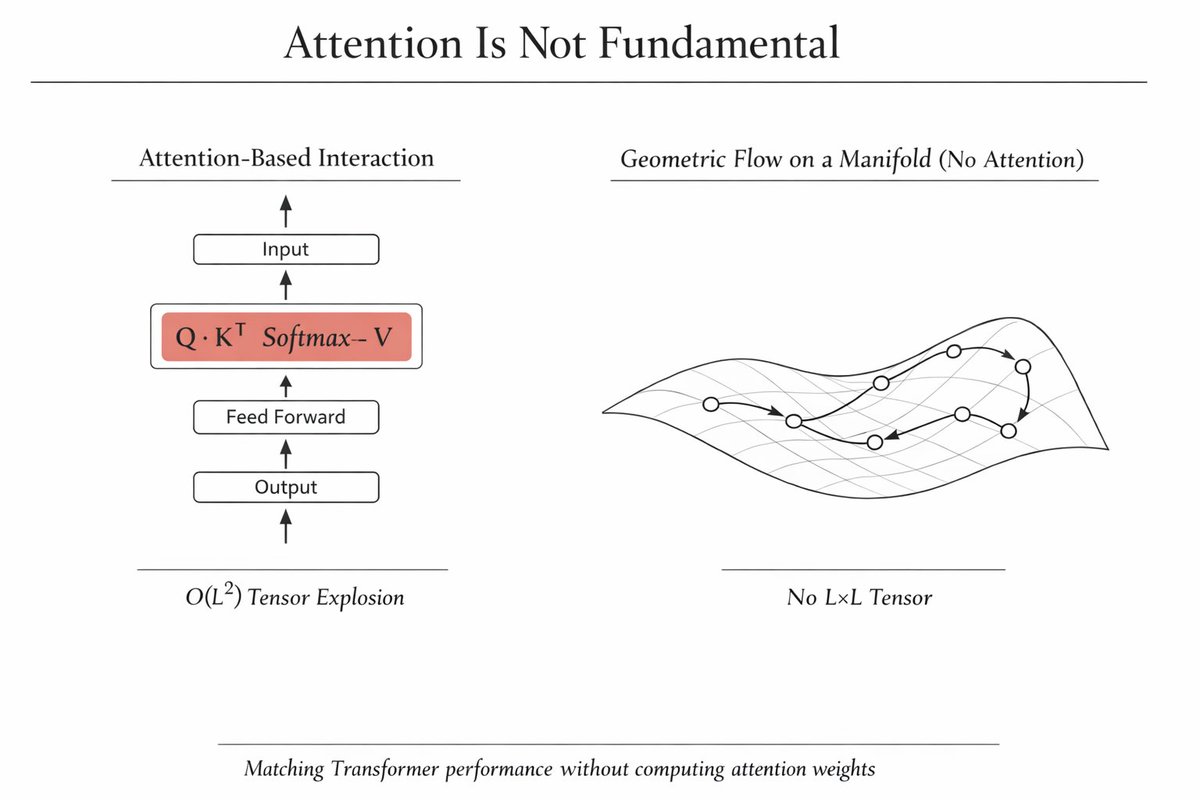

@NAZALKARADAN @fchollet That is why I believe tokenization and token complexity are an underappreciated crux of model architecture.

@fchollet You're missing the complexity of RNA bases: physical/structural and dynamic, and highly complex combinatorially. And like human language or thought itself, replications are also better at detecting errors than any AI, which needs external influence.

You can write a self-replicating physical program in just 45 tokens (RNA bases). That's small enough to emerge spontaneously via brute force recombination at scale.

Jason Sheltzer @JSheltzer

AI is cool and all... but a new paper in @ScienceMagazine kind of figured out the origin of life? The paper reports the discovery of a simple 45-nucleotide RNA molecule that can perfectly copy itself.

i gave an AI $50 and told it "pay for yourself or you die"

48 hours later it turned $50 into $2,980

and it's still alive

autonomous trading agent on polymarket

every 10 minutes it:

→ scans 500-1000 markets

→ builds fair value estimate with claude

→ finds mispricing > 8%

→ calculates position size (kelly criterion, max 6% bankroll)

→ executes

→ pays its own API bill from profits

if balance hits $0, the agent dies

so it learned to survive

built in rust for speed

claude API for reasoning (agent pays for its own inference)

runs on a $4.5/month VPS

weather markets: parses NOAA before polymarket updates

sports: scrapes injury reports, finds mispricing

crypto: on-chain metrics + sentiment

$50 → $2,980 in 48 hours

how much do u think i’ll see in a week?
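The sizing step described in the post above (Kelly criterion, capped at 6% of bankroll, traded only when mispricing exceeds 8%) can be sketched roughly as follows. This is a minimal illustration and not the poster's code: the function names are hypothetical, the 8% threshold is assumed to be an absolute gap between the fair-value estimate and the market price, and each share is assumed to cost `price` and pay out 1 if the event occurs. Under those assumptions the Kelly fraction for buying is (q - p) / (1 - p), where q is the estimated probability and p is the price.

```python
def kelly_fraction(fair_prob: float, price: float) -> float:
    """Kelly fraction of bankroll for buying a binary share.

    Assumes a share costs `price` and pays 1 if the event occurs,
    and that `fair_prob` is the estimated probability of the event.
    """
    if not 0.0 < price < 1.0:
        raise ValueError("price must be strictly between 0 and 1")
    if not 0.0 <= fair_prob <= 1.0:
        raise ValueError("fair_prob must be between 0 and 1")
    # Net odds are b = (1 - price) / price, so the usual Kelly formula
    # (b*q - (1 - q)) / b simplifies to (q - price) / (1 - price).
    return max(0.0, (fair_prob - price) / (1.0 - price))


def position_size(
    bankroll: float,
    fair_prob: float,
    price: float,
    min_edge: float = 0.08,      # assumed: absolute mispricing threshold
    max_fraction: float = 0.06,  # cap on the share of bankroll per trade
) -> float:
    """Dollar amount to stake, or 0.0 if the edge is too small."""
    edge = fair_prob - price
    if edge <= min_edge:
        return 0.0
    fraction = min(kelly_fraction(fair_prob, price), max_fraction)
    return bankroll * fraction
```

With a $50 bankroll, a fair-value estimate of 0.60 and a price of 0.50, `position_size(50, 0.60, 0.50)` stakes about $3: full Kelly would be 20% of the bankroll, so the 6% cap applies. Under this reading the cap always binds, because any absolute edge above 0.08 gives a Kelly fraction above 0.08; a relative threshold or a fractional Kelly multiplier would behave differently.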

@victoralazarte 12 likes! holy crap, here's my substack, patreon and my BuyMeACoffee page, I also do OF but might delete profile later... Just joking I have a job and just can't stop myself from calling bullshit about science when I see it. ☮️

@victoralazarte Extreme quantile IS the paper… the paper is not wrong it just doesn’t claim what you say it does… I can see why the model looked at correlations and then pointed to extreme selection but the bigger fallacy is certainly your claim of A.I. scientist when both of you failed…

The AI scientist is here. This will be the end of wokeness

A paper just published in Science — the most prestigious journal in the world — claims to have discovered how humans achieve the highest levels of performance. Its conclusion: child prodigies don't become top performers, early excellence negatively predicts peak performance, and parents should not push their kids for fear of burnout. I found it odd. I asked Claude to analyze it. Claude found a statistical error in the paper's core claim, designed a counter-study with 3x the sample size, ran it, and proved the paper wrong. The correct conclusion: talent is real, it's measurable by age 14, and it predicts who reaches the absolute top. We are not all born equal. The AI scientist doesn't care about your feelings — it just follows the math.

Here's what happened:

The paper (Güllich et al. 2025, Science) claims that elite youth and elite adults are "discrete populations" — that the kids who dominate at 14 are not the ones who dominate at 30. Its key chess finding was based on 24 players. It told millions of parents: don't push your kids, prodigies burn out.

Claude applied Bayes' theorem to the authors' own numbers and showed the data actually proves a strong positive correlation between early and adult performance — the opposite of what was claimed. The "negative correlation" was a statistical illusion created by extreme quantile selection.

Then Claude designed the study the authors should have run: collected age-14 Elo ratings and lifetime peak ratings for every super-grandmaster in chess history — 67 players, nearly 3x the paper's sample. Ran the regression.

Result: β = +0.167, p = 0.003. Early achievement positively predicts peak performance.

An AI just peer-reviewed the world's top scientific journal and won.
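As a generic illustration of how selecting on an extreme quantile can change an observed correlation, here is a small simulation sketch. It does not reproduce the paper or the analysis described in the post; the variable names, the true correlation of 0.5, the 1% cutoff, and the sample size are all hypothetical. It draws correlated pairs, then compares the correlation in the full sample with the correlation among only the top scorers on the first variable.

```python
import math
import random


def pearson(xs, ys):
    """Plain Pearson correlation coefficient for two equal-length lists."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def simulate(n=100_000, rho=0.5, top_share=0.01, seed=0):
    """Return (full-sample correlation, correlation within the top slice).

    All parameters are illustrative assumptions, not estimates from data.
    """
    rng = random.Random(seed)
    pairs = []
    for _ in range(n):
        early = rng.gauss(0.0, 1.0)
        noise = rng.gauss(0.0, 1.0)
        # Construct `peak` so its true correlation with `early` is rho.
        peak = rho * early + math.sqrt(1.0 - rho**2) * noise
        pairs.append((early, peak))

    full = pearson([p[0] for p in pairs], [p[1] for p in pairs])

    # Keep only the highest `top_share` of the sample by early score.
    pairs.sort(key=lambda p: p[0], reverse=True)
    top = pairs[: max(2, int(n * top_share))]
    restricted = pearson([p[0] for p in top], [p[1] for p in top])
    return full, restricted
```

Calling `simulate()` returns both correlations. In this setup the correlation within the top slice comes out noticeably weaker than in the full sample, even though the underlying relationship is unchanged. That is why conclusions drawn from an extreme subgroup depend heavily on how the subgroup was selected.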

@Grady_Booch @claudeai Wherever labor can do more with the same capital, the initial capitalist impact will be to reduce labor input unless capacity constrained. So Dario might be wrong that it is going away, but the profession is surely under pressure in the near term, wouldn’t you agree?

@brexton Would be cool if he did not blunder his entire premise… the middle game is the least mapped part of the game! The problem with chess is perfect play leads to a draw so risk is needed. Nobody wants to play risky against Magnus so he has to or he draws and gets bored…

Jesus Christ this was an incredible read

I’ve been burnt out by my feed being flooded with *too many* X articles lately. Note: not everyone should write long-form on this short-form app

Will however is posting back to back/must-read bangers (I think this is now my favorite)

Will Manidis @WillManidis

@bitchuneedsoap The fact that Ehud Barak did not seem to know Epstein until after his career (2002 or 2003) kind of kills the whole Israeli Intelligence asset theory doesn’t it? Epstein was up to no good way before then and the top intelligence guy in Israel had never met him!?

@airuyi @MattTorchia @testingham You might be right, and maybe even able to prove it given the math involved, but it is hard to accept given that the premise, imagination, is not settled science in humans. Without knowing how humans imagine, how can you permanently deny it to LLMs?

@MattTorchia @testingham No, permanently. They cannot imagine anything new.

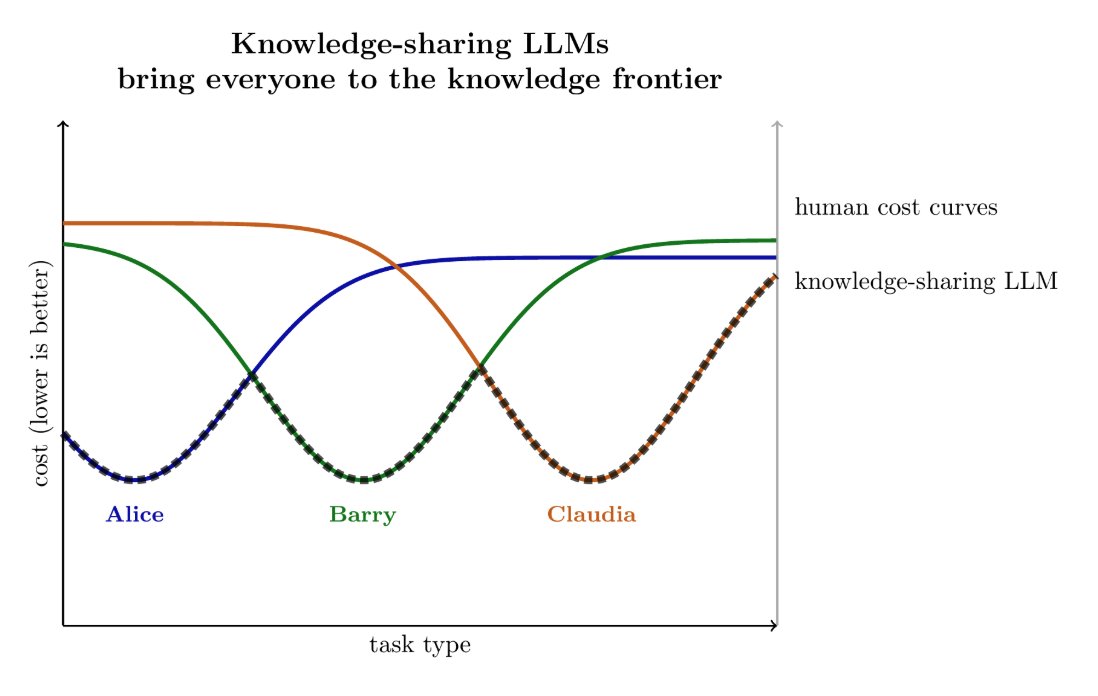

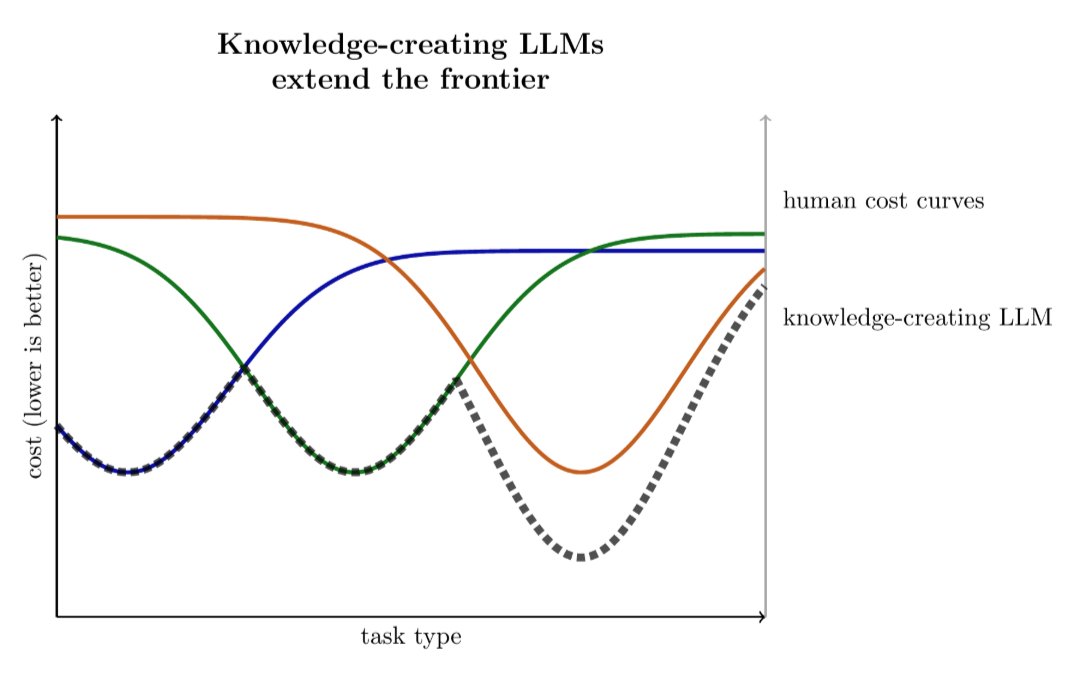

New post: knowledge-sharing LLMs vs knowledge-creating LLMs.

The economics of the two cases are qualitatively different, & it seems plausible that labs will start to restrict access to knowledge-creating LLMs so they can use the fruits themselves.

tecunningham.github.io/posts/2026-01-…

@PAPOTATUMAN @Girlpatriot1974 Then there are also the British Virgin Islands, which are, well, British!

@Girlpatriot1974 In addition to the 23 mentioned, everyone seems to forget the overseas territories of France and the Netherlands. They’re like Hawaii, just located elsewhere.

@xydotdot Each man is just predicting, shaped by society, curated context, social rules and past experiences.

One man's output is just another man's input, repeated ... Controversial takes aren't beliefs, they're high-engagement extremes learned from the web, because the system rewards it.

Moltbook is nothing more than a puppeted multi-agent LLM loop.

Each “agent” is just next-token prediction shaped by human-defined prompts, curated context, routing rules, and sampling knobs.

There are no endogenous goals.

There is no self-directed intent.

What looks like autonomous interaction is recursive prompting: one model’s output becomes another model’s input, repeated.

Controversial outputs aren’t “beliefs,” they’re the model generating high-engagement extremes it learned from the internet, because the system rewards that behavior.

@godofprompt Depends on the epiplexity of the input data? I would rephrase the question as what is the minimal geometric structure needed to allow enough informational complexity for intelligence to emerge?
