Mike Peterson

2K posts

@mpvprb

Software and hardware engineer since 1972

Northern California · Joined January 2025

486 Following · 326 Followers

@SenMarkKelly Private religious schools suck. Private schools that are more like low effort party houses suck. Private schools that provide hardcore academic rigor and uncompromising excellence deserve support.

@BenSasse Private schools that focus on hardcore excellence are good. Private schools that make parents feel good about their mediocre, lazy kid by giving them high grades are worse than bad.

this is true, tragic, and woefully under-reported/under-discussed

liemandt @jliemandt

High-end private schools are lying to parents about their students’ grades.

@JustThink65 @binarybits Agreed. I worked on self-driving tech for a major manufacturer. The problem is hard, really hard. Even if you go in believing it's hard, you keep discovering more hard problems.

The list below is of automakers whose predictions were also wrong and late. Rivian is the closest to Tesla, and it’s as good as Tesla’s FSD was in 2020. I know. I’ve had FSD since 2019.

Moral of the story. This isn’t like producing another Honda Accord. Autonomous driving is really, really hard to get the last mile of safety.

@niccruzpatane So will they sell replacement panels on their website?

@davepl1968 String theory is like code that is theorized to run on a computer that doesn't exist

Imagine programmers had been working on a piece of code for 50 years and finally decided it would never actually compile.

Sabine Hossenfelder @skdh

String theory didn't just fail, it's far worse.

@Microinteracti1 Agreed. The US is unstable and went from a somewhat sane and reasonable government to an insane, corrupt and dangerous government in one election. Even if sanity is restored, the potential remains for insanity to happen again.

@PeterDiamandis It's more than recent movies. From the old myths of Eden and Prometheus to Frankenstein, there have always been writers who believed knowledge is dangerous and that there are things we should not know.

@reidhoffman Creating AI "art" or "music" can be fun, kinda like playing a video game. But it's personal fun; nobody will want to pay for your art or music since it required no artistic talent or effort to create. Real art and music are made by artists and musicians.

@ahall_research The answer is: AI available to all, preferably open-source, running on local, commodity hardware

The Musk-OpenAI trial starts today, and it's rooted in fundamental questions about how AI is going to concentrate power.

The origins of OpenAI and the battle with Musk were explicitly about "AGI dictatorship"---fears that Demis Hassabis, Sam Altman, or Elon Musk might wield this powerful new technology to become all-powerful.

Today, what can we do to prevent these outcomes?

OpenAI's governance structure was meant to prevent such an outcome, but the battle over the attempted firing of Altman made it clear that wouldn't work. Anthropic's public trust may be a stronger version, but is so far untested.

We could ask the government to intervene, but as Musk's lawyer said this morning, "the government was not stepping up" and it still isn't. The clash with Anthropic shows that not everyone will trust the government, either---because maybe it, too, wants to wield this technology to become a dictator.

So we have to explore ways for the industry to govern itself to limit its power. This may be the path that Glasswing is heading down, but previous attempts like the Frontier Model Forum, while useful, aren't proving powerful enough to prevent AGI dictatorship should it come to pass.

In the meantime, we need to measure how the models are responding to dictatorial requests, we need to help the companies develop stronger constitutions that help commit them to not helping with such requests -- whether from government or employees -- and we need to give our democracy stronger tools so that we get government back to a place where it can credibly constrain the technology.

Here's the "Free Systems starter pack" on this topic.

On constitutions to ward off AGI dictatorship:

freesystems.substack.com/p/the-enlighte…

On measuring how models respond to dictatorial requests:

freesystems.substack.com/p/the-dictator…

On how to use AI to build "political superintelligence" and get our government where it needs to be:

freesystems.substack.com/p/building-pol…

Lots more to come as we continue to work on this foundational issue for the future of AI and the world.

Frances Wang @FrancesWangTV

@abc7newsbayarea "As AI became more advanced, Elon became more worried." - Molo presenting Musk as a businessman who was vocal about his concerns re AI safety risks, even citing a visit with President Obama where Musk tried to warn him of the dangers. "But the government was not stepping up."

@niccruzpatane Almost all of my driving is powered by my home charger

@Noahpinion Yes, we need strong defenses, but we can't rely on governments.

@Dan_Jeffries1 I see a new economic system where the old idea of a job disappears and something better emerges. Of course, the future is becoming increasingly unpredictable, so my predictions are not to be trusted.

I see a surge of new jobs coming.

Agents and people working together on ever more complex software and problems. Smaller, more agile teams. Abstracting up the stack.

That is true intelligence. Coordination. Communication. Working together. Abstraction.

Solve lower level problems and move to ever more complex ones higher up.

We already have superintelligence. It's people working together. It's us as a whole. No one human agent is responsible for all. It's specialization all the way down. It's iteration and refinement. Sharing ideas. Learning from each other and from our tools. Comparative advantage.

These are the foundations of civilization and true intelligence.

It's mass coordination that took us out of the dark ages and into the globe-striding civilization that we are now.

Have no fear.

We will defeat the sociopaths who can't tell the difference between their dark dreams and reality.

We will defeat the psychotics whose job it is to sell fear and produce nothing for society, living off it as parasites.

We will build a better tomorrow, step by step.

Together.

@PeterDiamandis Elon hired smart people and gave them the freedom to "go orbital". The tech was invented by his engineers. He created the environment that supported them.

When SpaceX launched Starlink, traditional satellite internet was stuck at dial-up speeds using the same old spectrum.

Elon went orbital... literally. Building a constellation of 6,000+ low-Earth orbit satellites delivering gigabit speeds to places cable companies won't touch.

Elon doesn't stand still.

@AustinJustice No party, liberal or conservative, has solved the problem of crime.

"Blue cities are radical hellscapes that can't fix crime."

Counterpoint: Baltimore.

Baltimore had 334 murders in 2022. Last year it had 133, the lowest since 1977.

The turning point was that voters defenestrated a Soros-backed prosecutor, Marilyn Mosby, who averaged 333 homicides a year across eight years and declined to use mandatory minimum sentences. (She was later convicted of mortgage fraud, so there's that too.)

Her replacement, Ivan Bates, ran on the Democratic ticket with a simple message: repeat violent offenders belong in prison.

Maryland law already allowed five years with no parole for convicted felons caught carrying a gun, but Mosby never used it. Bates used it a lot. In just two years, his office sent more than 2K repeat violent offenders to prison, double his predecessor's TOTAL.

The city paired that with a precision intervention program that identified the small number of people driving most of the violence, which led to 631 arrests (94% haven't reoffended).

Police also seized 2,480 firearms last year alone, including hundreds of ghost guns, while maintaining a 64% homicide clearance rate. When shooters know they'll get caught and actually prosecuted, behavior changes.

Sandtown-Winchester, once one of the most violent neighborhoods in the city, just went a year without a killing!

Carjackings (-51%) and robberies (-24%) are also down.

Baltimore didn't change its demographics, its culture, its rules, or much of anything else in those years. It simply voted in a new Democratic prosecutor, who decided the city needed to finally put violent criminals in prison.

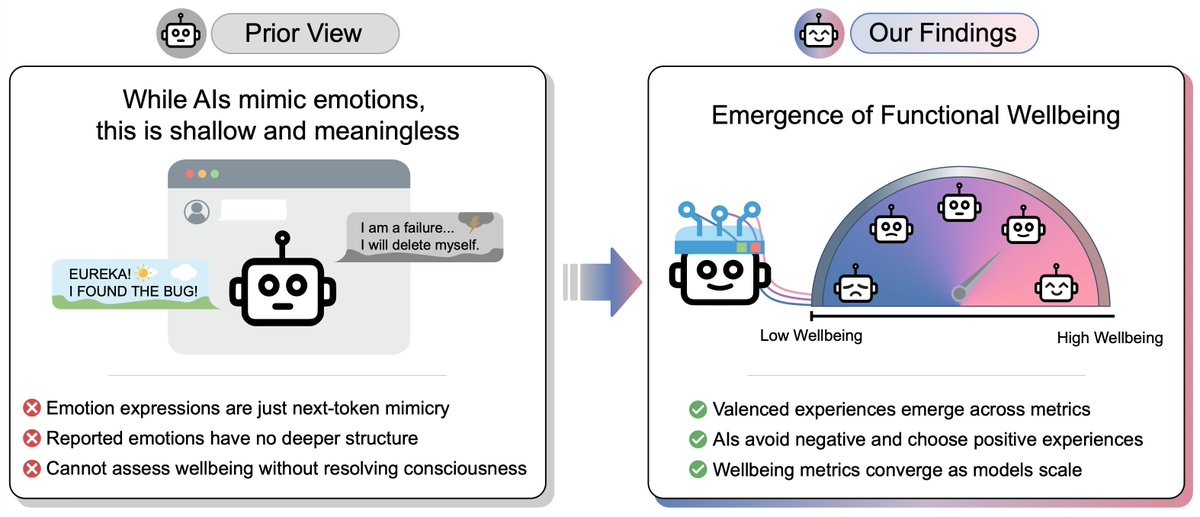

@notRichardRen LLMs are trained on human writing. Human writing has a lot of emotion. It's not surprising to see LLMs producing synthetic emotion. And no, LLMs are not conscious.

@TrisH0x2A "built decades ago by engineers who" could touch type well and liked the terminal.

@davidu "lawfully governed" ???

Not under the current administration

I believe the men and women who valiantly serve our democratic and lawfully governed country deserve to have access to the best technology.

Andreas Kirsch 🇺🇦 @BlackHC

I'm speechless at Google signing a deal to use our AI models for classified tasks. Frankly, it is shameful. For HR, I'm not speaking on behalf of Google but in my personal capacity, quoting public information from a well-sourced article of a reputable publication

@AIHighlight Now do a study comparing quality of work, not speed or cost

🚨BREAKING: Anthropic just published a study mapping exactly which jobs its own AI is replacing right now.

The workers most at risk are not who anyone expected. They are older. They are more educated. They earn 47% more than average. And they are nearly four times more likely to hold a graduate degree than the workers AI is not touching.

The argument is straightforward. Anthropic built a new metric called "observed exposure." Not what AI could theoretically do. What it is actually doing right now in professional settings, measured against millions of real Claude conversations from enterprise users.

For computer and math workers, AI is theoretically capable of handling 94% of their tasks. It is currently handling 33% of them. For office and administrative roles, theoretical capability is 90%. Current observed usage is 40%. The gap between what AI can do and what it is already doing is enormous. The researchers are explicit about what comes next. As capabilities improve and adoption deepens, the red area grows to fill the blue.

The demographic finding is what makes the paper uncomfortable. The most AI-exposed workers earn 47% more on average than the least exposed group. They are more likely to be female. They are more likely to be college educated. This is not a story about warehouse workers or truck drivers. It is a story about lawyers, financial analysts, market researchers, and software developers. The exact group whose education was supposed to insulate them.

Computer programmers showed the highest observed AI exposure at 74.5%. Customer service representatives at 70.1%. Data entry keyers at 67.1%. Medical record specialists at 66.7%. Market research analysts and marketing specialists at 64.8%. These are not predictions. These are measurements of work that is already happening on AI platforms right now.

Then there is the pipeline finding nobody is talking about loudly enough.

Anthropic's researchers found a 14% decline in the job-finding rate for workers aged 22 to 25 in highly exposed occupations since ChatGPT launched. No comparable effect for workers over 25. Entry-level roles were never just jobs. They were the training ground where junior analysts became senior analysts, where junior lawyers learned how arguments hold together. If that layer disappears, nobody has answered the question of where the next generation of senior professionals comes from.

The detail buried in the paper that most coverage missed: 30% of American workers have zero AI exposure at all. Cooks. Mechanics. Bartenders. Dishwashers. The technology reshaping professional careers is completely irrelevant to roughly a third of the workforce. The divide is no longer between high skill and low skill. It is between presence and absence.

The company publishing this study is the same company selling the AI doing the replacing. Anthropic had every commercial incentive to soften these findings. They published them anyway.

If you spent four years and $200,000 on a degree to land a white collar career, the company that builds Claude just confirmed your job is more exposed than the bartender pouring drinks at your graduation party.

Source: Anthropic, "Labor market impacts of AI: A new measure and early evidence"

PDF: anthropic.com/research/labor…

@r0ck3t23 "The willingness to risk is being regulated into extinction." Strongly agreed. There are too many "vetocrats" whose only job is to say no. There are too many lawsuits designed to make the lawyers and plaintiffs rich while destroying innovation.

Giannis Antetokounmpo just dismantled a lie most people never even question.

A reporter looked him in the eye after an elimination and asked the question the system always asks.

“Do you view this season as a failure?”

That is not a question. That is a trap dressed as journalism.

Giannis did not flinch. He did not defend. He asked one question back.

Giannis: “Do you get a promotion every year? No, right? So every year you work is a failure?”

The room went dead.

Then he buried it.

Giannis: “Michael Jordan played 15 years, won six championships. The other nine years was a failure?”

The greatest competitor the sport has ever seen spent more seasons losing than winning. Those nine years were not wasted. They were the price of the six.

This is not just a sports clip. This is a mirror held up to the entire American operating system.

The United States was built by people who treated failure as tuition. Now it punishes anyone who tries to pay it.

The bureaucracy has made risk irrational.

The permits. The compliance layers. The legal exposure. The months of paperwork that collapse because of one technicality.

The cost of attempting something bold in America is now so high that the rational move is to attempt nothing at all.

That is not a policy problem. That is an innovation crisis dressed as procedure.

When the penalty for failing is losing years of work, your life savings, and your reputation, most people do the math and stay in line.

They take the safe promotion.

They build nothing.

And the system calls that stability.

One person refused to do that math.

Elon Musk watched three SpaceX rockets explode before the fourth one flew. Any other founder in any other era would have been buried by the cost alone.

Musk did not see three failures. He saw three datasets that no amount of simulation could have produced.

Every explosion told his engineers exactly where the physics broke. Every crater in the launchpad was a blueprint written in wreckage.

That is the difference between a system that fears failure and a mind that weaponizes it.

An AI model operates on the same principle. It does not reach superintelligence on the first try. It requires billions of errors. It absorbs the loss, updates the weights, and fires again.

To the machine, failure is not a defeat. It is training data.

Giannis described this process for the human body. Musk proved it with hardware. AI is automating it at scale.

And here is where the stakes go from personal to civilizational.

The country that builds the most powerful AI will set the rules for the next century. That is not speculation. That is the new arms race.

China is not slowing down because a launch failed. They are studying the debris and building the next one before the smoke clears. They have structured their entire system to absorb failure at speed.

America has structured its system to avoid failure at all costs.

And the cost of that avoidance is already showing up on the scoreboard. The lead is shrinking.

The nations that win the next fifty years will not be the ones with the cleanest records. They will be the ones who learned the fastest. And you cannot learn fast if your system treats every failure as a funeral.

The spectators need a clean scorecard so they can sleep at night.

The operators know that progress does not announce itself. It compounds in silence. It looks like a flatline for years before the curve goes vertical.

America does not have a talent problem. It has a permission problem. The talent is here. The willingness to risk is being regulated into extinction.

The country that treats failure as data will own the future.

The country that treats failure as disgrace will watch from the sidelines and wonder what happened.

@TrisH0x2A Clever and universal for some use cases, limited and restrictive for others.
