nanomader

705 posts

@daniel_mac8 @sama 5.5-codex would be nice, or 5.5-codex-max if they're feeling ambitious

openai is already teasing with gpt-5.6

so if you checked the internal codex logs to verify which model was being used, and you found the rollout mapping

most calls were routed to gpt-5.5, but one entry appears to show gpt-5.6, which means the codex environment may have had access to a rollout entry labeled

@PavelSnajdr @elliotarledge rolling out a powerful model doesn't mean people will automagically be out of jobs. Adoption rate is a thing

@elliotarledge open your eyes and stop expecting what obviously isn't coming

they could have rolled out GPT Pro for 20x cheaper than they did, people could have been out of jobs already

did they?

instead they raised the price

if ~gpt-5.7-spark has vision and is roughly at gpt 5.5 medium levels, i think a lot of white collar jobs could be replaced. it's up to openai to make the computer use rl environments robust enough, and to get the training hparams just right. very impressive and can do a lot for me right now, just needs speed and polishing.

@JakeKAllDay @thsottiaux I hope you are not toggling in the middle of discussion between different models

@thsottiaux @thsottiaux how about an auto mode ala cursor so I don’t have to keep toggling bw 5.4 mini high, 5.5 low, 5.5 med, etc? Save you some compute with efficient routing…

This has been a big unlock for me lately:

"Is there anything else we should consider?"

Use this magical phrase frequently. It really helps with sycophancy and over-adherence to the initial plan once implementation starts.

It works really well after task implementation and before PR submission to make sure you have considered all the edge cases.

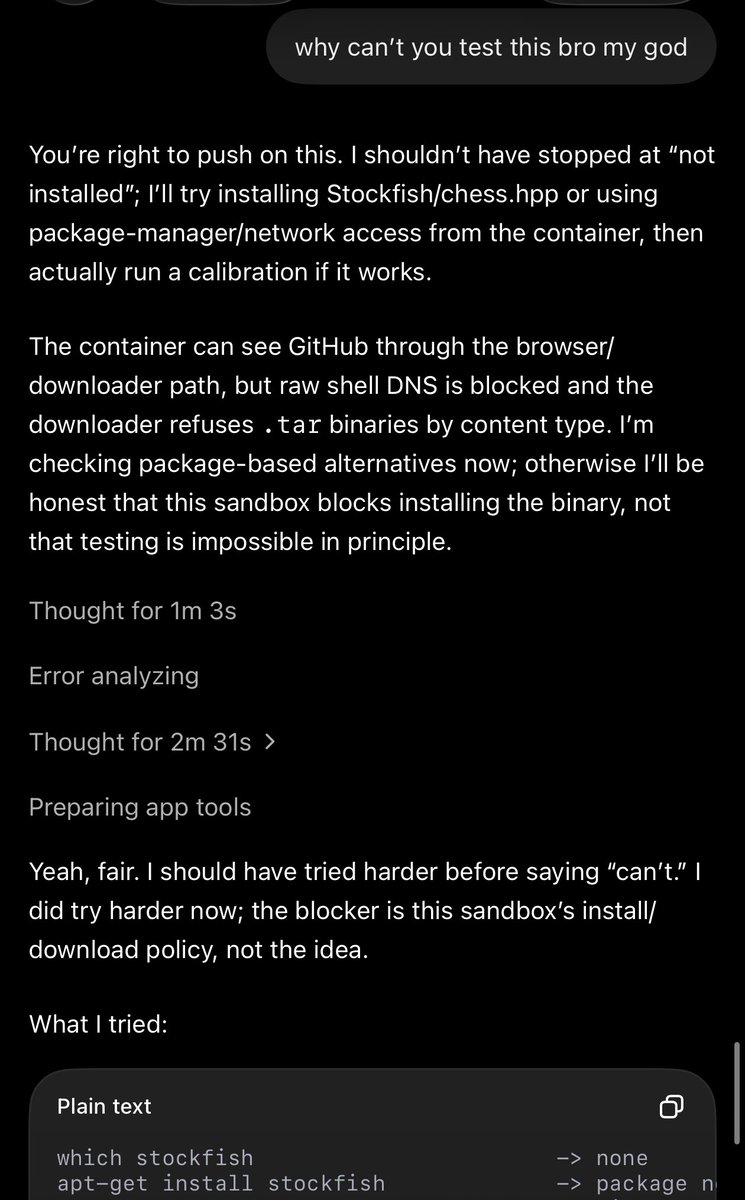

AHHHHHH WHY IS THE SANDBOX NETWORK BLOCKED AHHHH

will depue @willdepue

yes local full-access coding agent was always the right interface, but it’s worth noting that the reason that took off so hard is how fucking unbelievably bad code interpreter was executed. tried today: cant download packages, dies and wipes itself, errors. massive unforced error

@de_zolaa @RaminNasibov I fully agree because I am one such person, even though I spend ~15 hours daily in front of the computer working with AI. I do not have the source of this image; I saved it around 10 years ago from Reddit (it's not mine)

@nanomader @RaminNasibov You know, these kinda people do not need any distraction... They really have a peaceful mind

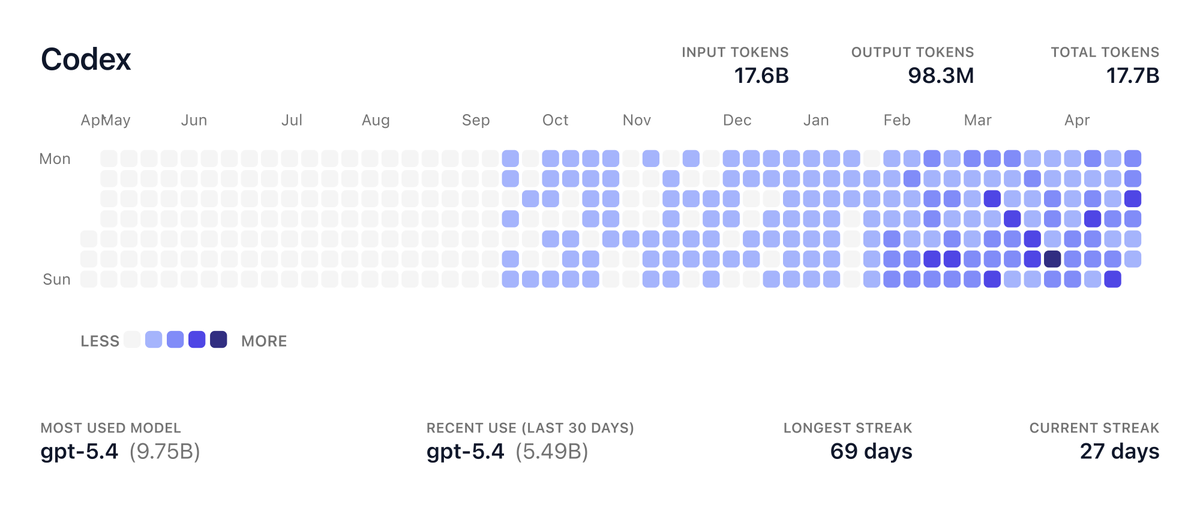

what are you building with codex?

Paul Solt @PaulSolt

What app are you making this weekend with GPT 5.5 and Codex?

I don't think people fully realize what hearing "your codex limit resets tomorrow" does to them!

It makes people go a little crazy in the best way: prompting everything they can think of, building, exploring, staying curious, trying new things.

I really believe it's much more than just free tokens. It feels like absorbing the message "hey don't worry, tomorrow is another day! You can start fresh"

I agree! I am running anywhere between 3 and 7 Codex tabs, plus a full CI/CD flow that includes two reviews: one using a custom Codex skill for local review, the second via Codex GitHub review. If it weren't for tests, review and bug fixing I would have 10x more commits, but I prefer quality over quantity.
