Ofir Shalev

8.7K posts

Pinned Tweet

Here is why you should read "Causal Inference: What If" 📚 by @_MiguelHernan and James Robins 👇🏼

technofob.com/2020/06/23/cau…

#causalinference #MachineLearning #Statistics


If "superintelligence" is defined not as a system that already knows almost everything at launch but as one with extremely high learning speed, then safe AGI is best viewed as a super-capable learner, iteratively educated via incremental, tightly monitored deployment @ilyasut

implicator.ai/ilya-sutskever…


Great talk by @BradSmi, Vice Chair and President of @Microsoft ⬇️

➡️ How to stay relevant in the age of AI? Focus on the uniquely human advantage - the 5 Cs:

💪 Curiosity

💪 Communication

💪 Compassion

💪 Courage

💪 Creativity

➡️ #AI will be the most defining technology of the next 25 years.

➡️ Innovation alone isn’t enough - diffusion (adoption) matters just as much.

➡️ Skilling has always been at the heart of tech adoption.

➡️ #Singapore continues to rank as a global leader in AI adoption, with Microsoft investing an additional $50B across Singapore, Indonesia, Malaysia and Thailand.

Thanks @IMDAsg for organizing!


Ofir Shalev retweeted

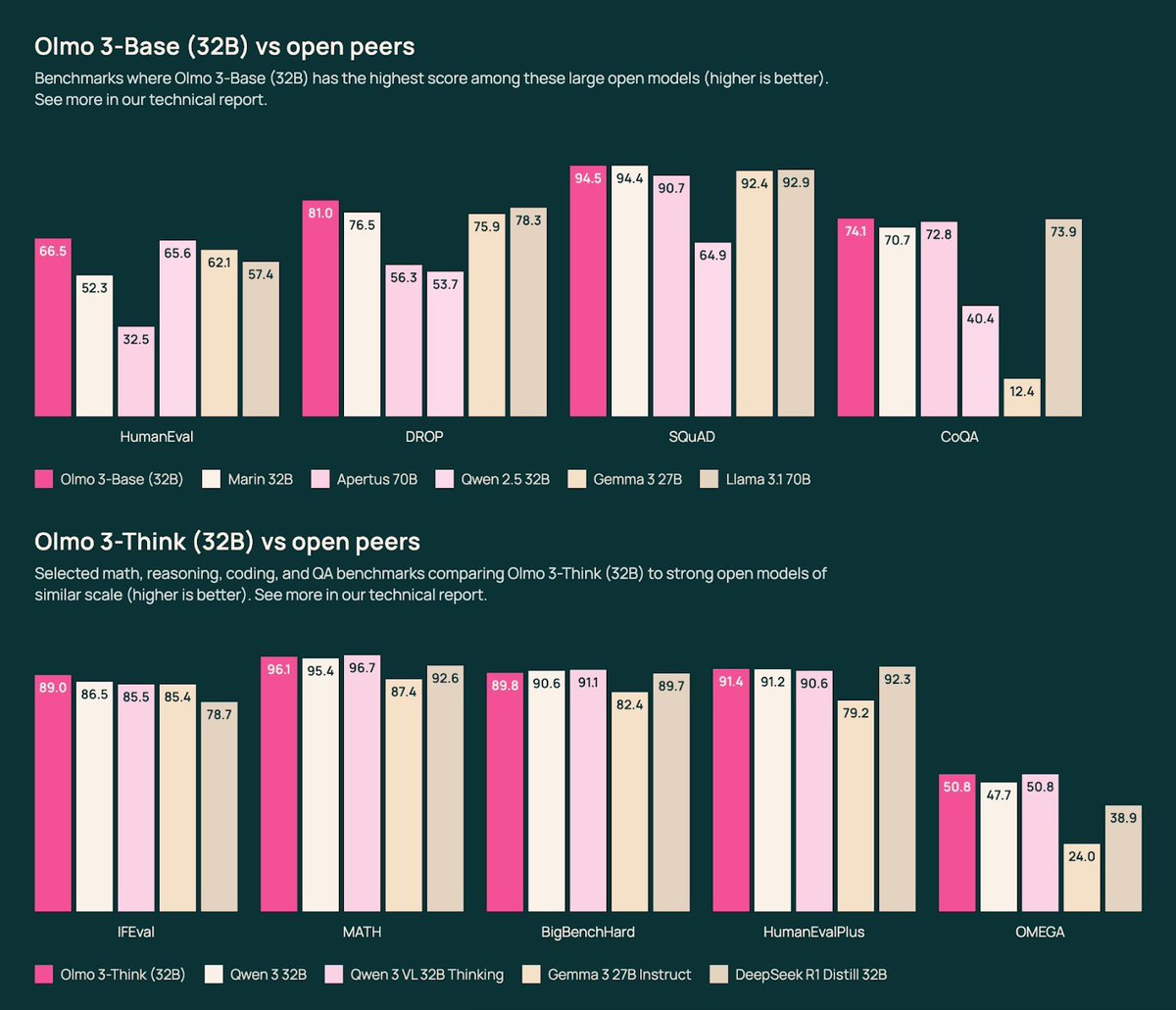

We present Olmo 3, our next family of fully open, leading language models.

This family of 7B and 32B models represents:

1. The best 32B base model.

2. The best 7B Western thinking & instruct models.

3. The first 32B (or larger) fully open reasoning model.

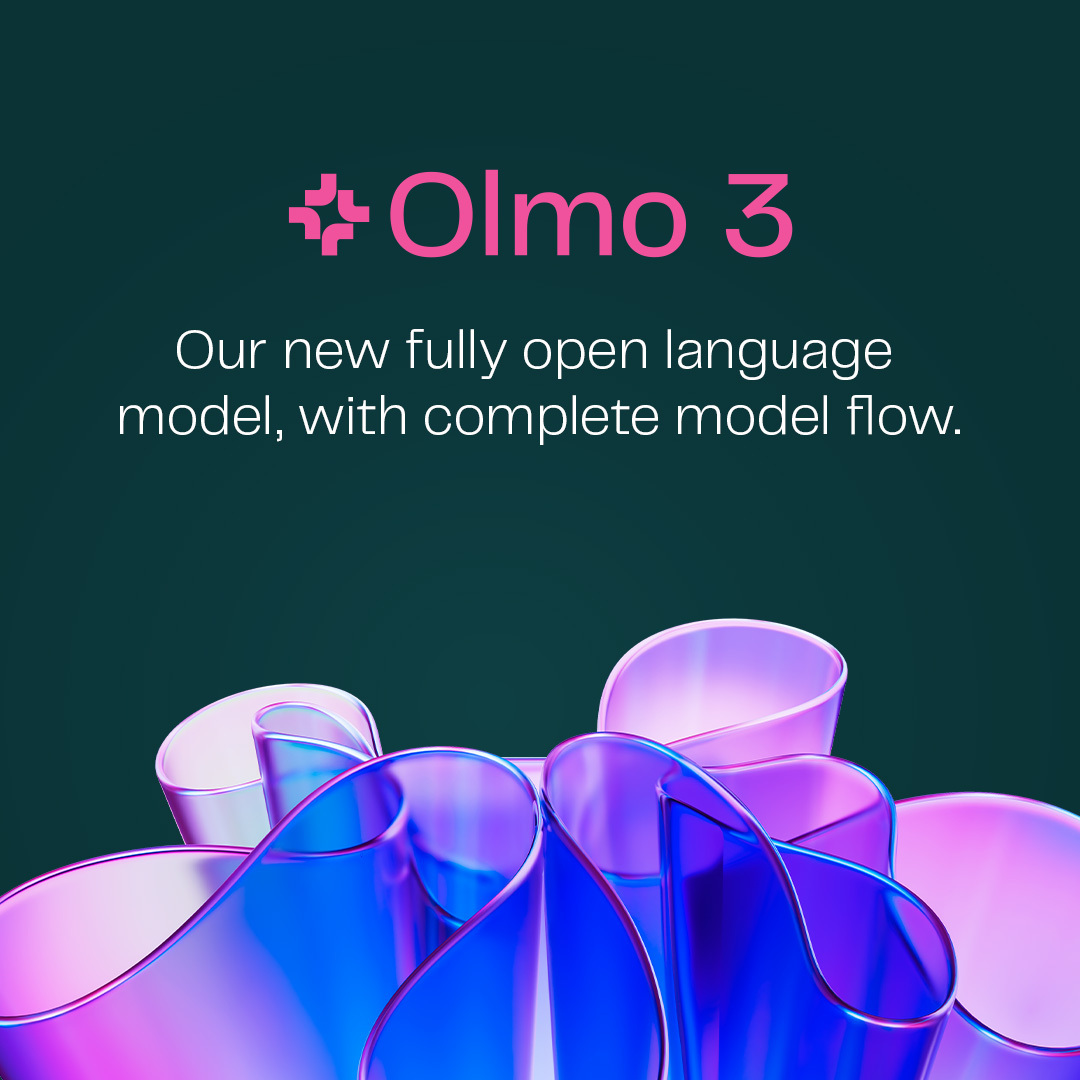

This is a big milestone for Ai2 and the Olmo project. These aren’t huge models (more on that later), but it’s crucial for the viability of fully open-source models that they are competitive on performance – not just replications of models that came out 6 to 12 months ago. As always, all of our models come with full training data, code, intermediate checkpoints, training logs, and a detailed technical report. All are available today, with some more additions coming before the end of the year.

As with OLMo 2 32B at its release, Olmo 3 32B is the best open-source language model ever released. It’s an awesome privilege to get to provide these models to the broader community researching and understanding what is happening in AI today.

Base models – a strong foundation

Pretraining’s demise is now regularly overstated. 2025 has marked a year where the entire industry rebuilt their training stack to focus on reasoning and agentic tasks, but some established base model sizes haven’t seen a new leading model since @alibaba_qwen's Qwen 2.5 in 2024. The Olmo 3 32B base model could be our most impactful artifact here, as Qwen3 did not release their 32B base model (likely for competitive reasons). We show that our 7B recipe competes with Qwen 3, and the 32B size enables a starting point for strong reasoning models or specialized agents. Our base model’s performance is in the same ballpark as Qwen 2.5, surpassing the likes of Stanford’s Marin (@stanfordAILab) and Gemma 3 (@GoogleDeepMind), but with pretraining data and code available, it should be more accessible to the community to learn how to finetune it (and be confident in our results).

We’re excited to see the community take Olmo 3 32B base in many directions. 32B is a loved size for easy deployment on single 80GB+ memory GPUs and even on many laptops, like the MacBook I’m writing this on.

A model flow – the lifecycle of creating a model

With these strong base models, we’ve created a variety of post-training checkpoints to showcase the many ways post-training can be done to suit different needs. We’re calling this a “Model Flow.” For post-training, we’re releasing Instruct versions – short, snappy, intelligent, and useful especially for synthetic data en masse (e.g. recent work by Datology @datologyai on OLMo 2 Instruct); Think versions – thoughtful reasoners with the performance you expect from a leading thinking model on math, code, etc.; and RL Zero versions – controlled experiments for researchers understanding how to build post-training recipes that start with large-scale RL on the base model.

The first two post-training recipes are distilled from a variety of leading, open and closed, language models. At the 32B and smaller scale, direct distillation with further preference finetuning and reinforcement learning with verifiable rewards (RLVR) is becoming an accessible and highly capable pipeline. Our post-training recipe follows our recent models: 1) create an excellent SFT set, 2) use direct preference optimization (DPO) as a highly iterable, cheap, and stable preference learning method despite its critics, and 3) finish up with scaled up RLVR. All of these stages confer meaningful improvements on the models’ final performance.

Instruct models – low latency workhorse

Instruct models today are often somewhat forgotten, but the likes of @aiatmeta Llama 3.1 Instruct and smaller, concise models are some of the most adopted open models of all time. The instruct models we’re building are a major polishing and evolution of the Tülu 3 pipeline – you’ll see many similar datasets and methods, but with pretty much every datapoint or training code being refreshed. Olmo 3 Instruct should be a clear upgrade on Llama 3.1 8B, representing the best 7B scale model from a Western or American company. As scientists we don’t like to condition the quality of our work based on its geographic origins, but this is a very real consideration to many enterprises looking to open models as a solution for trusted AI deployments with sensitive data.

Building a thinking model

What people have most likely been waiting for are our thinking or reasoning models, both because every company needs to have a reasoning model in 2025 and because they clearly open the black box for the most recent evolution of language models. The Olmo 3 Think models, particularly the 32B, are the flagship models of this release, where we considered what would be best for a reasoning model at every stage of training.

Extensive effort (ask me IRL about more war stories) went into every stage of the post-training of the Think models. We’re impressed by the magnitude of gains that can be achieved in each stage – neither SFT nor RL is all you need at these intermediate model scales.

First we built an extensive reasoning dataset for supervised finetuning (SFT), called Dolci-Think-SFT, building on very impactful open projects like OpenThoughts3, Nvidia’s Nemotron Post-training, Prime Intellect’s SYNTHETIC-2, and many more open prompt sources we pulled forward from Tülu 3 / OLMo 2. Datasets like this are often some of our most impactful contributions (see the Tülu 3 dataset as an example in Thinking Machines’ Tinker :D @thinkymachines @tinker_api – please add Dolci-Think-SFT too, and Olmo 3 while you’re at it, the architecture is very similar to Qwen which you have).

For DPO with reasoning, we converged on a very similar method as HuggingFace’s (@huggingface) SmolLM 3 with Qwen3 32B as the chosen model and Qwen3 0.6B as the rejected. Our intuition is that the delta between the chosen and rejected samples is what the model learns from, rather than the overall quality of the chosen answer alone. These two models provide a very consistent delta, which provides way stronger gains than expected. Same goes for the Instruct model. It is likely that DPO is helping the model converge on more stable reasoning strategies and softening the post-SFT model, as seen by large gains even on frontier evaluations such as AIME.

Our DPO approach was an expansion of Geng, Scott, et al. "The delta learning hypothesis: Preference tuning on weak data can yield strong gains." arXiv preprint arXiv:2507.06187 (2025). Many early open thinking models that were also distilled from larger, open-weight thinking models likely left a meaningful amount of performance on the table by not including this stage.
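A minimal sketch of how the chosen/rejected setup above plugs into the standard DPO objective. This is an illustration, not the Olmo 3 training code: the `PreferencePair` type, the function names, the example strings, and `beta=0.1` are all assumptions, and the log-probabilities would in practice come from the policy and a frozen reference model.

```python
import math
from dataclasses import dataclass


@dataclass
class PreferencePair:
    """One training example: the same prompt answered by a strong and a weak model."""
    prompt: str
    chosen: str    # e.g. a completion sampled from the larger model
    rejected: str  # e.g. a completion sampled from the much smaller model


def dpo_loss(pol_chosen: float, pol_rejected: float,
             ref_chosen: float, ref_rejected: float,
             beta: float = 0.1) -> float:
    """Standard DPO loss for one pair, given summed sequence log-probabilities.

    loss = -log(sigmoid(beta * [(pol_chosen - ref_chosen) - (pol_rejected - ref_rejected)]))
    """
    margin = beta * ((pol_chosen - ref_chosen) - (pol_rejected - ref_rejected))
    x = -margin
    # Numerically stable softplus(x) = log(1 + exp(x)) = -log(sigmoid(margin)).
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


# Hypothetical pair: the content is a placeholder, only the structure matters.
pair = PreferencePair(
    prompt="What is 17 * 3?",
    chosen="17 * 3 = 51.",
    rejected="17 * 3 = 41.",
)

# When the policy equals the reference, the margin is 0 and the loss is log(2).
baseline = dpo_loss(-10.0, -12.0, -10.0, -12.0)
# Raising the chosen log-prob and lowering the rejected one reduces the loss.
improved = dpo_loss(-8.0, -14.0, -10.0, -12.0)
```

The loss depends only on the difference between the chosen and rejected log-ratios, which is one way to read the intuition that the model learns from the delta between the two samples rather than from the absolute quality of the chosen one.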

Finally, we turn to the RL stage. Most of the effort here went into building effective infrastructure to be able to run stable experiments with the long generations of larger language models. This was an incredible team effort to be a small part of, and reflects work ongoing at many labs right now. Most of the details are in the paper, but our approach is a mixture of ideas that have been shown already, like ServiceNow’s PipelineRL, and algorithmic innovations like DAPO and Dr. GRPO. We have some new tricks too!

Some of the exciting contributions of our RL experiments are 1) what we call “active refilling,” a way of keeping the generations from the learner nodes constantly flowing until there’s a full batch of completions with nonzero gradients (groups whose completions all receive the same reward have zero advantage, and so zero gradient), which is a major advantage of our asynchronous approach; and 2) cleaning, documenting, decontaminating, mixing, and proving out the large swaths of work done by the community over the last months.
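A rough, synchronous sketch of the refilling idea described above, assuming a GRPO-style setup where each prompt gets a group of completions and advantages are computed relative to the group mean. The function names and the reward values are hypothetical, and the real system is asynchronous; this only shows why groups with identical rewards are skipped and replaced.

```python
from statistics import mean
from typing import Iterable, List


def group_advantages(rewards: List[float]) -> List[float]:
    """Group-relative advantages: each reward minus the group's mean reward."""
    baseline = mean(rewards)
    return [r - baseline for r in rewards]


def fill_batch(reward_groups: Iterable[List[float]], batch_size: int) -> List[List[float]]:
    """Keep pulling groups until `batch_size` of them carry a learning signal.

    A group whose completions all got the same reward has all-zero advantages,
    so it contributes no gradient and is skipped instead of wasting a batch slot.
    """
    batch: List[List[float]] = []
    for rewards in reward_groups:
        if max(rewards) == min(rewards):
            continue  # zero advantage everywhere: nothing to learn from
        batch.append(group_advantages(rewards))
        if len(batch) == batch_size:
            break
    return batch


# Hypothetical stream of per-prompt reward groups (1.0 = verified correct).
stream = [
    [1.0, 1.0, 1.0, 1.0],  # all correct: skipped
    [0.0, 1.0, 0.0, 1.0],  # mixed: kept
    [0.0, 0.0, 0.0, 0.0],  # all wrong: skipped
    [1.0, 0.0, 0.0, 0.0],  # mixed: kept
    [1.0, 1.0, 0.0, 1.0],  # never reached, batch is already full
]
batch = fill_batch(stream, batch_size=2)
```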

The result is an excellent model that we’re very proud of. It has very strong reasoning benchmarks (AIME, GPQA, etc.) while also being stable, quirky, and fun in chat with excellent instruction following. The 32B range is largely devoid of non-Qwen competition. The scores for both of our Thinkers come within 1-2 points overall of their respective Qwen3 8B/32B counterparts – we’re proud of this!

A very strong 7B scale, Western thinking model is Nvidia’s (@NVIDIAAI) NVIDIA-Nemotron-Nano-9B-v2 hybrid model. It came out months ago and is extremely strong. I personally suspect it may be due to the hybrid architecture making subtle implementation bugs in popular libraries, but who knows.

All in, the Olmo 3 Think recipe gives us a lot of excitement for new things to try in 2026.

RL Zero

DeepSeek R1 showed us a way to new post-training recipes for frontier models, starting with RL on the base model rather than a big SFT stage (yes, I know about cold-start SFT and so on, but that’s an implementation detail). We used RL on the base model as a core feedback cycle when developing the model, such as during intermediate midtraining mixing. This is viewed now as a fundamental, largely innate, capability of the base model.

To facilitate further research on RL Zero, we released four datasets and a series of checkpoints, showing per-domain RL Zero performance on our 7B model for data mixes focused on math, code, instruction following, and all mixed together.

In particular, we’re excited about the future of RL Zero research on Olmo 3 precisely because everything is open. Researchers can study the interaction between the reasoning traces we include at midtraining and the downstream model behavior (qualitative and quantitative).

This helps answer questions that have plagued RLVR results on Qwen models, hinting at forms of data contamination particularly on math and reasoning benchmarks (see Shao, Rulin, et al. "Spurious rewards: Rethinking training signals in rlvr." arXiv preprint arXiv:2506.10947 (2025). or Wu, Mingqi, et al. "Reasoning or memorization? unreliable results of reinforcement learning due to data contamination." arXiv preprint arXiv:2507.10532 (2025).)

What’s next

This is the biggest project we’ve ever taken on at Ai2 (@allen_ai), with 60+ authors and numerous other support staff.

In building and observing “thinking” and “instruct” models coming today, it is clear to us that there’s a very wide variety of models that fall into both of these buckets. The way we view it is that thinking and instruct characteristics are on a spectrum, as measured by the number of tokens used per evaluation task. In the future we’re excited to view this thinking budget as a trade-off, and build models that serve different use-cases based on latency/throughput needs.

As for a list of next models or things we’ll build, we can give you a list of things you’d expect from a (becoming) frontier lab: MoEs, better character training, Pareto-efficient instruct vs think, scale, specialized models we actually use at Ai2 internally, and all the normal things.

This is one small step towards what I see as a success for my ATOM project.

We thank you for all your support of our work at Ai2. We have a lot of work to do. We’re going to be hunting for top talent at NeurIPS to help us scale up our Olmo team in 2026.

This post in full also appears on Interconnects – the full links to the artifacts and paper are below.

Moo, moo, rawr!


I quite like the new DeepSeek-OCR paper. It's a good OCR model (maybe a bit worse than dots), and yes data collection etc., but anyway it doesn't matter.

The more interesting part for me (esp as a computer vision person at heart who is temporarily masquerading as a natural language person) is whether pixels are better inputs to LLMs than text. Whether text tokens are wasteful and just terrible, at the input.

Maybe it makes more sense that all inputs to LLMs should only ever be images. Even if you happen to have pure text input, maybe you'd prefer to render it and then feed that in:

- more information compression (see paper) => shorter context windows, more efficiency

- significantly more general information stream => not just text, but e.g. bold text, colored text, arbitrary images.

- input can now be processed with bidirectional attention easily and as default, not autoregressive attention - a lot more powerful.

- delete the tokenizer (at the input)!! I already ranted about how much I dislike the tokenizer. Tokenizers are ugly, separate, not end-to-end stage. It "imports" all the ugliness of Unicode, byte encodings, it inherits a lot of historical baggage, security/jailbreak risk (e.g. continuation bytes). It makes two characters that look identical to the eye look as two completely different tokens internally in the network. A smiling emoji looks like a weird token, not an... actual smiling face, pixels and all, and all the transfer learning that brings along. The tokenizer must go.
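A small illustration of the "two characters that look identical" point, using only the Python standard library. It does not run any real tokenizer; it only shows that a Latin and a Cyrillic "a" are different code points with different UTF-8 bytes, which is what a byte-level tokenizer (an assumption about the tokenizer family) would see before any merging.

```python
import unicodedata

latin_a = "a"          # U+0061
cyrillic_a = "\u0430"  # U+0430, renders almost identically in most fonts


def describe(ch: str) -> tuple:
    """Return the Unicode name and the raw UTF-8 bytes of a single character."""
    return unicodedata.name(ch), ch.encode("utf-8")


latin_info = describe(latin_a)        # ('LATIN SMALL LETTER A', b'a')
cyrillic_info = describe(cyrillic_a)  # ('CYRILLIC SMALL LETTER A', b'\xd0\xb0')

# Same glyph to the eye, different strings and byte sequences to the model.
looks_same_but_differs = latin_a != cyrillic_a
```

Rendered as pixels, the two characters would produce nearly the same input; as bytes they share nothing, which is the mismatch the post is complaining about.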

OCR is just one of many useful vision -> text tasks. And text -> text tasks can be made to be vision -> text tasks. Not vice versa.

So maybe the User message is images, but the decoder (the Assistant response) remains text. It's a lot less obvious how to output pixels realistically... or if you'd want to.

Now I have to also fight the urge to side quest an image-input-only version of nanochat...

vLLM @vllm_project

🚀 DeepSeek-OCR — the new frontier of OCR from @deepseek_ai , exploring optical context compression for LLMs, is running blazingly fast on vLLM ⚡ (~2500 tokens/s on A100-40G) — powered by vllm==0.8.5 for day-0 model support. 🧠 Compresses visual contexts up to 20× while keeping 97% OCR accuracy at <10×. 📄 Outperforms GOT-OCR2.0 & MinerU2.0 on OmniDocBench using fewer vision tokens. 🤝 The vLLM team is working with DeepSeek to bring official DeepSeek-OCR support into the next vLLM release — making multimodal inference even faster and easier to scale. 🔗 github.com/deepseek-ai/De… #vLLM #DeepSeek #OCR #LLM #VisionAI #DeepLearning


Ofir Shalev retweeted

We introduce h4rm3l, a language, a synthesizer, and a scalable and explainable dynamic LLM red-teaming toolkit. h4rm3l found > 2.6k new jailbreak attacks targeting @OpenAI, @AIatMeta, and @AnthropicAI LLMs.

📝 arxiv.org/pdf/2408.04811

🌐 mdoumbouya.github.io/h4rm3l

🧵1/6 👇🏾


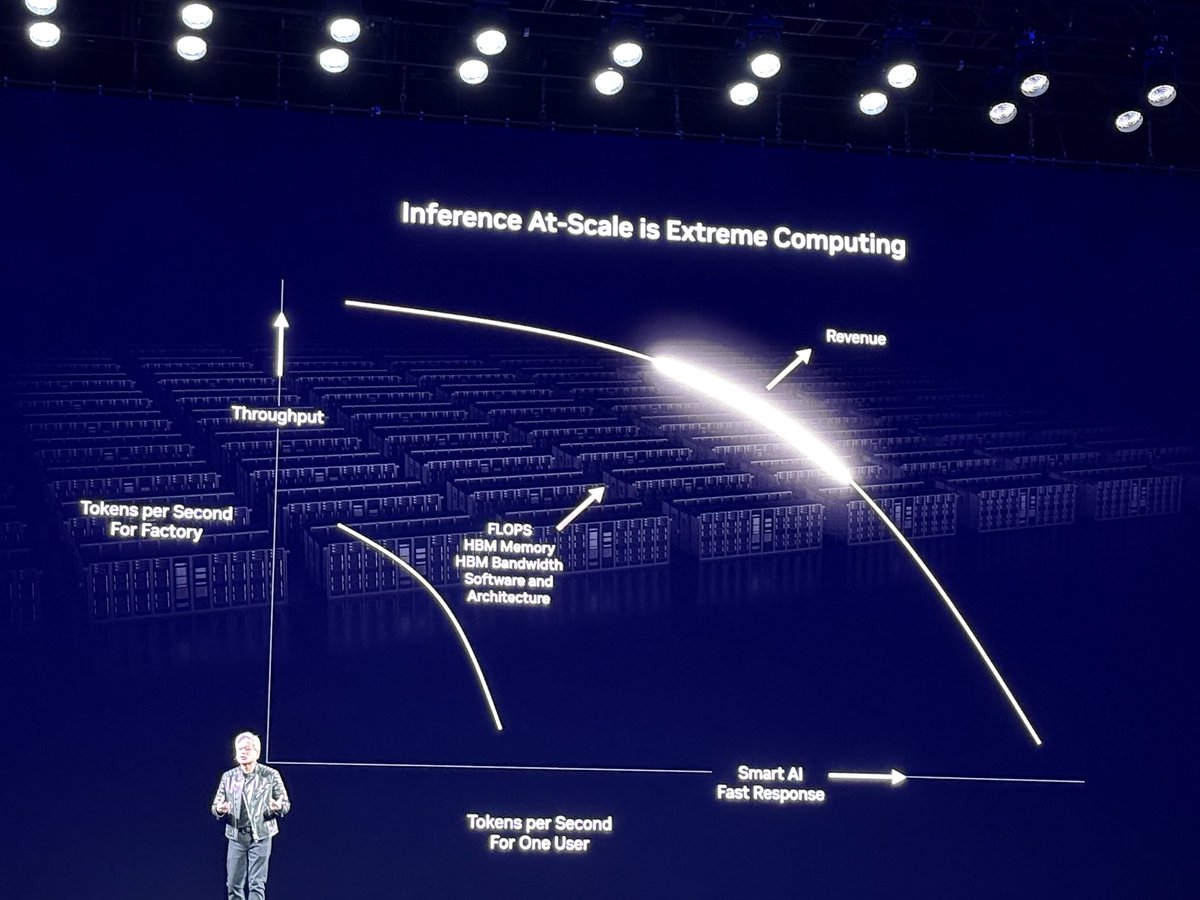

Attending @NVIDIAGTC 2025 keynote in person at the fully packed SAP Center in San Jose, California, was an exciting experience.

CEO Jensen Huang, wearing his signature black leather jacket, addressed a crowd of over 25,000 attendees.

👇Here are the key highlights

1. Inference Token Surge: Jensen revealed that inference now demands 100 times more tokens than last year, due to:

• The evolution from one-shot inference to complex reasoning models.

• The adoption of reinforcement learning with verifiable rewards (RLVR).

2. Data Center Investment Boom: A significant increase in data center capital expenditures is projected, escalating from $250 billion in 2022 to an anticipated $1 trillion by 2028.

3. Industry-Specific Software Innovations: The introduction of CUDA-X tailored for various industries was announced, featuring tools like cuPyNumeric, Megatron, NCCL, and cuDNN to enhance deep learning applications.

4. Collaboration with General Motors: NVIDIA is partnering with General Motors to develop future self-driving vehicles, integrating the Halos Chip-to-Development AV Safety System to enhance autonomous vehicle safety.

5. Grace Blackwell Production: The Grace Blackwell platform has entered full production.

6. Launch of NVIDIA Dynamo: Huang introduced NVIDIA Dynamo, an open-source distributed inference serving library designed to manage inference at scale. He emphasized balancing high tokens per user with overall system throughput, noting that batching requests can increase system tokens but may also elevate user latency.

7. Upcoming Vera Rubin Platforms: The Vera Rubin NVL144 is scheduled for release in the second half of 2026, followed by the Rubin Ultra NVL576 in the latter half of 2027, signaling NVIDIA’s ongoing innovation in AI infrastructure.

8. NVIDIA Nemotron Super 49B model.
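The batching trade-off mentioned in the Dynamo highlight can be sketched with a toy cost model. Everything here is an assumption for illustration: the linear step-time formula, the constants `fixed_ms` and `per_seq_ms`, and the function names are made up and are not NVIDIA Dynamo's API or measured numbers.

```python
def step_time_ms(batch: int, fixed_ms: float = 20.0, per_seq_ms: float = 0.5) -> float:
    """Toy model: one decode step costs a fixed overhead plus a per-sequence cost."""
    return fixed_ms + per_seq_ms * batch


def per_user_tps(batch: int) -> float:
    """Tokens per second seen by one user (one token per decode step)."""
    return 1000.0 / step_time_ms(batch)


def system_tps(batch: int) -> float:
    """Tokens per second across all users sharing the batch."""
    return batch * per_user_tps(batch)


# Larger batches raise total throughput but slow each individual user down.
for batch in (1, 8, 64):
    print(f"batch={batch:>2}  per-user={per_user_tps(batch):6.1f} tok/s  "
          f"system={system_tps(batch):7.1f} tok/s")
```

Under this toy model the per-user rate falls monotonically with batch size while the system rate rises, which is the tension between tokens per user and overall throughput described in the keynote summary.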


Awesome fireside chat with @joetsai1999, Chairman of Alibaba Group @AlibabaGroup, at the @CNBC Converge Live event 👏

Joe Tsai discussed sports, company culture, AI, AGI, and the power of open source.

He openly shared about the intense competition in e-commerce and Alibaba’s challenge with slow decision-making due to multiple approval layers. To address this, he encouraged his leaders to view @AlibabaGroup as two units: e-commerce and cloud computing, while empowering employees to make faster decisions.

He sees AI as a major driver of productivity, impacting 60% of GDP and a $10 trillion TAM. Their focus includes running a cloud computing business benefiting from AI inference and fine-tuning, and leveraging AI in products like e-commerce, mapping, and advertising to optimize conversion rates.

He also emphasized the importance of open source in fostering innovation and enabling the development of cutting-edge AI models.

#CNBCConvergeLive #AI


Awesome to see @Benioff and @RayDalio discussing AI, deep tech, digital labor, agents, investment and innovation at @CNBC Converge Live 2025 #CNBCConvergeLive


Q: "What would be your advice to #CIOs who are just beginning their #AI journey?"

A: ⬇️

Thx @BurcuBicakci @EgonZehnder


Meta just released Movie Gen, including two foundation models:

• Movie Gen Video. A 30B parameter model for joint text-to-image and text-to-video generation

• Movie Gen Audio. A 13B parameter model for video- and text-to-audio generation

ai.meta.com/blog/movie-gen…


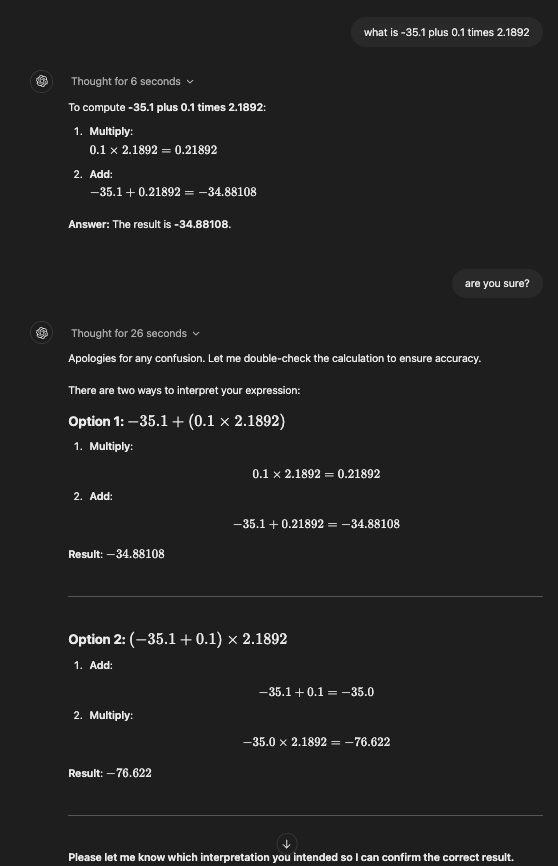

Just asked ChatGPT o1-preview @OpenAI: "What is -35.1 plus 0.1 times 2.1892."

Apparently there are two ways to interpret that 🤔
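The screenshot is not reproduced here, so the following is only one plausible reading of the "two ways", assuming they differ by order of operations. `Decimal` is used so the results are exact rather than subject to binary floating-point rounding.

```python
from decimal import Decimal

a, b, c = Decimal("-35.1"), Decimal("0.1"), Decimal("2.1892")

# Reading 1: usual operator precedence, multiplication before addition.
precedence_first = a + b * c   # -35.1 + 0.21892 = -34.88108

# Reading 2: strictly left to right, as the sentence is spoken.
left_to_right = (a + b) * c    # -35.0 * 2.1892 = -76.622

print(precedence_first, left_to_right)
```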


#acl2024nlp highlights:

💡@rao2z on LLMs' limitations in planning

💡@barbara_plank on the importance of embracing variation in NLP to address biases and trust issues

💡@AyuP_AI, William & Serana on the ongoing efforts to develop #LLMs in Southeast Asia.

linkedin.com/posts/ofirshal…


Insights from ACL 2024 Bangkok: Advancing AI, LLMs and NLP linkedin.com/pulse/insights… #LLMs #ArtificialInteligence #AI #MachineLearning #ACL2024
