zyot

Look guys, it's actually really straightforward, a bunch of people staked their ETH on the Ethereum blockchain to earn yield, except they didn't want their capital to be locked up, so they actually staked with a liquid staking protocol called Lido who provided them a liquid staking receipt token called stETH, except they decided to juice their yield further by depositing their stETH receipt tokens into a restaking protocol called Eigenlayer, except they didn't want to lock up their capital, so they actually restaked with a liquid restaking protocol called KelpDAO who provided them with a liquid restaking receipt token called rsETH, except they decided to juice their yield further by depositing their rsETH tokens into a lending protocol called Aave so that they could open a leveraged looping position that borrows ETH against the rsETH collateral and restakes the ETH into rsETH which is then deposited as collateral, except it turns out rsETH used a cross-chain bridge called LayerZero that was hacked by North Koreans causing rsETH to become undercollateralized and now these looping positions are stuck and unprofitable, and everyone is pointing fingers at each other, and also DeFi is a very serious industry

@jonasstarkx @0xethermatt What is crazy to me is that, despite 2008, people still do not understand systemic risk: allowing E-Mode on Aave with a 0.93:1 ratio seems crazy. In the governance votes (8/24 and 12/25), there was zero mention of bridge risk...
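
The looping position described in the first post can be sketched numerically. This is a minimal Python sketch built on three assumptions that the posts do not state: the 0.93:1 figure is treated as the maximum loan-to-value ratio, rsETH is valued 1:1 against ETH, and fees and interest are ignored. Each loop deposits collateral, borrows 93% of it, and restakes the borrowed ETH. Exposure therefore follows a geometric series that tops out at 1 / (1 - 0.93), roughly 14x.

```python
# Sketch of an ETH -> rsETH leveraged loop on a lending market.
# Assumptions (not from the posts): 0.93 is the max loan-to-value (LTV),
# rsETH is valued 1:1 in ETH, and fees/interest are ignored.

def loop_exposure(ltv: float, loops: int) -> float:
    """Collateral held per 1 ETH of starting equity after `loops`
    borrow-and-redeposit rounds (geometric series 1 + ltv + ltv^2 + ...)."""
    total, tranche = 0.0, 1.0
    for _ in range(loops + 1):
        total += tranche   # deposit this tranche as collateral
        tranche *= ltv     # borrow against it and restake for the next round
    return total

def max_exposure(ltv: float) -> float:
    """Limit of the series as the number of loops goes to infinity."""
    return 1.0 / (1.0 - ltv)

def wipeout_drop(exposure: float) -> float:
    """Fractional fall in the collateral's ETH value that erases all equity.
    Debt = exposure - 1, so equity is zero when exposure * (1 - d) = exposure - 1."""
    return 1.0 / exposure

if __name__ == "__main__":
    ltv = 0.93
    for n in (1, 5, 20):
        print(f"{n:>2} loops: {loop_exposure(ltv, n):.2f}x exposure")
    lev = max_exposure(ltv)
    print(f"limit: {lev:.2f}x, equity gone after a {wipeout_drop(lev):.1%} drop")
```

The last function shows why the ratio matters. At the limit, a 7% fall in rsETH's ETH value (for example, from undercollateralization after a bridge exploit) erases the position's equity. In practice, liquidation would kick in earlier, at the market's liquidation threshold, which this sketch does not model.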

@0xethermatt KelpDAO exploit is a reminder:

bridges + synthetic assets = biggest attack surface in crypto.

Mint → collateralize → drain liquidity

Same playbook, new victim.

DeFi doesn’t need more yield.

It needs verification layers such as #Geeq

zyot retweeted

Why is Everyone Quiet about the Cross-Chain Honey Pots?

$10B+ at risk?

This post will cover:

1. DVNs on @LayerZero_Fndn

2. ISMs on @hyperlane

3. OFTs & Warp Assets

4. Non-dormant addresses on @ether_fi and @renzoai multisigs

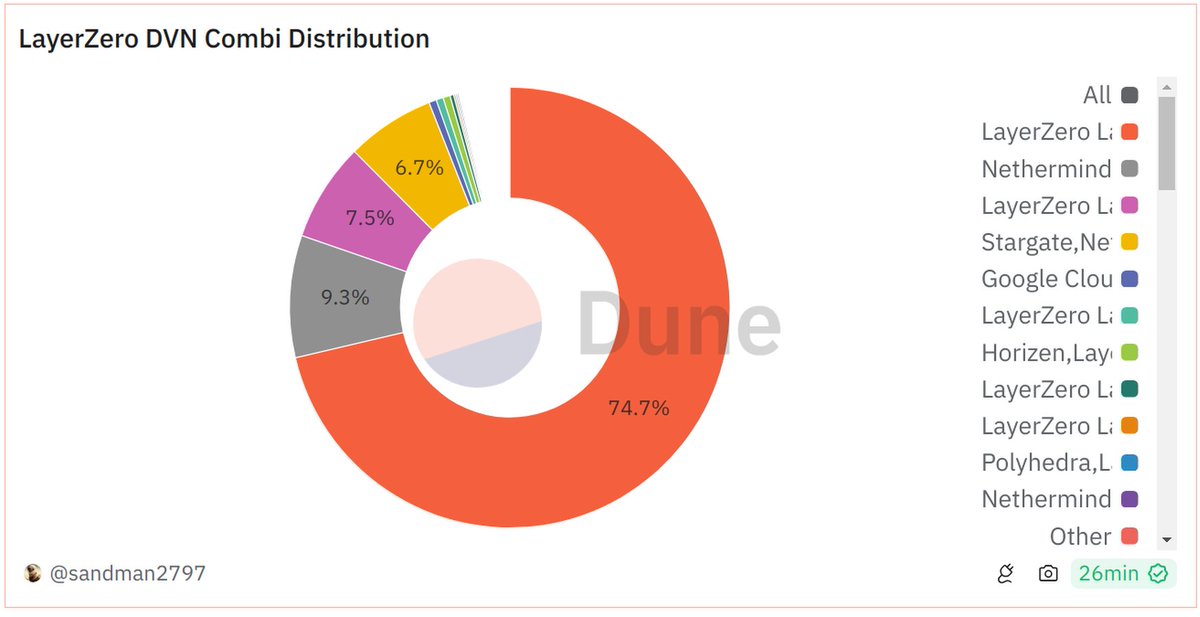

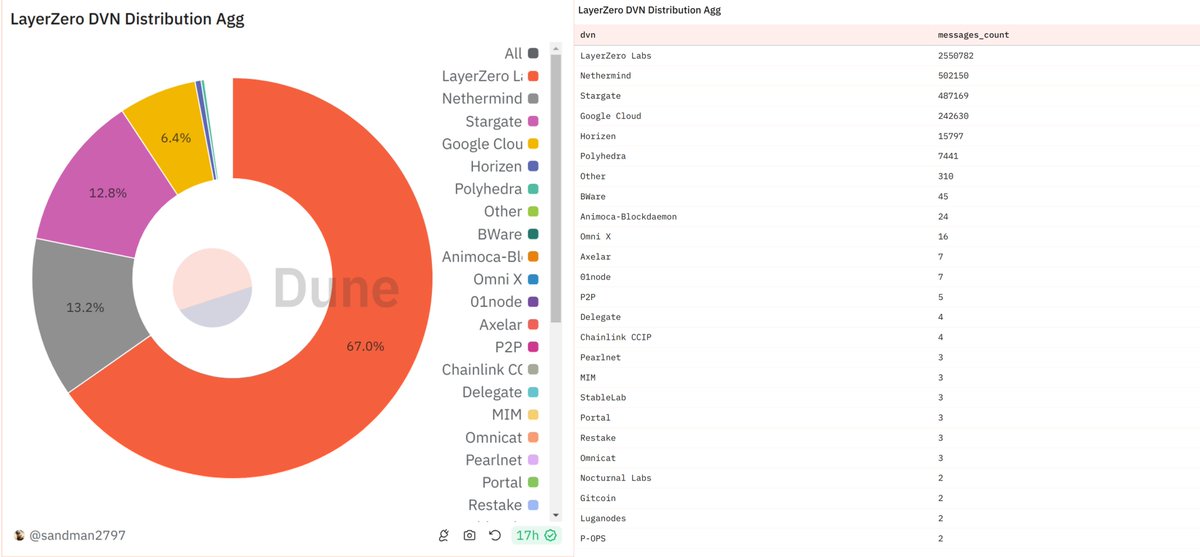

"Decentralised Verifier Network" aka DVNs by LayerZero

LayerZero Labs DVN: 2/3 multisig

Nethermind DVN: 1/1 multisig

Stargate DVN: 1/1

Google Cloud DVN: 2/3

Horizen DVN: 2/2

Source: You gotta go to Etherscan and call the signerSize and quorum functions. Here are the contracts: Link [1] (in the reply)

Note: There is no guarantee that these multisigs are actually distributed and not run by a single person, as in the case of Multichain.

The name "DVN" itself is misleading. It certainly misled me into trusting them more. A DVN is a modular validator entity inside LayerZero. That means that if you choose a single-DVN setup, your cross-chain messages will be validated solely by that DVN. You can choose multiple DVNs, or an m-of-n set of DVNs, to secure your setup.

Most protocols (clients using LZ) use at most 2 DVNs. I had to build this Dune dashboard myself to see what's happening on-chain.

For instance, Stargate has 2 DVNs. Stargate DVN and Nethermind DVN. Both are 1/1 multisigs. Securing, checks notes, $442.84m.
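
To see how these choices compose, here is a simplified Python model of a DVN setup. It rests on assumptions drawn from our reading of LayerZero v2's configuration, not from this post:

- Every required DVN must attest to a message, plus a threshold of optional DVNs.
- Each DVN's multisig signs whatever its quorum of keys signs.
- No key is shared between DVNs, which the Multichain note above warns cannot be taken for granted.

The `DVN` class, `min_keys_to_forge` and `total_signers` are hypothetical names for illustration.

```python
from dataclasses import dataclass
from typing import Sequence

@dataclass(frozen=True)
class DVN:
    name: str
    quorum: int   # signatures this DVN's multisig needs (cf. quorum())
    signers: int  # total signers in that multisig (cf. signerSize())

def min_keys_to_forge(required: Sequence[DVN],
                      optional: Sequence[DVN] = (),
                      optional_threshold: int = 0) -> int:
    """Fewest keys an attacker must control so that every required DVN and
    `optional_threshold` optional DVNs attest to a forged message.
    Assumes no key is shared between DVNs (hypothetical simplification)."""
    if optional_threshold > len(optional):
        raise ValueError("threshold exceeds number of optional DVNs")
    cost = sum(d.quorum for d in required)
    # The attacker corrupts the cheapest optional DVNs first.
    cheapest = sorted(d.quorum for d in optional)[:optional_threshold]
    return cost + sum(cheapest)

def total_signers(dvns: Sequence[DVN]) -> int:
    """Total number of signer keys across a set of DVNs."""
    return sum(d.signers for d in dvns)

# Quorum/signer figures from the DVN list above; both DVNs treated as required.
stargate_setup = [DVN("Stargate", 1, 1), DVN("Nethermind", 1, 1)]
ethena_setup = [DVN("LayerZero Labs", 2, 3), DVN("Horizen", 2, 2)]

print(min_keys_to_forge(stargate_setup), "of", total_signers(stargate_setup))
print(min_keys_to_forge(ethena_setup), "of", total_signers(ethena_setup))
```

Under these assumptions, Stargate's two 1/1 DVNs fall to just 2 keys. The LayerZero Labs + Horizen pairing mentioned later for Ethena comes out as 4 keys out of 5 signers, the "basically a 4/5" in the post. The nuance is that the attacker needs 2 keys from each DVN, not any 4 of the 5.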

Dune isn't rendering this well, but here's what the distribution of the various configurations looks like. Note how the numbers taper off as we go down the list. Dashboard link [2].

So most protocols (clients using LZ) simply trust one entity, LayerZero Labs, a 2/3 multisig. It's baffling to me that we're all fine with this and nobody is talking about it. We have to push these teams toward more secure systems, or rather, push the protocols using LayerZero to demand more security.

Let's look at Hyperlane, LayerZero's biggest competitor at the moment.

First of all, thank God they call their default setup "Multisig ISM" (ISM = "Interchain Security Module"). They are at least honest about it. It is a multisig. Period.

Hyperlane has set up their default ISM as a distributed set of validators, with different quorums for different chains. Each validator in these multisig setups is a different entity, like the various DVNs on LayerZero.

Here's what their default setup looks like:

Arbitrum: 3/5 multisig

Base: 2/5

Blast: 2/3

BNB: 2/4

Ethereum: 3/7

Optimism: 2/5

(Source: Link [3]. Note: they said this post prompted them to raise their numbers, so these may have been updated.)

It is not very far off from the LayerZero DVN setups. But at least you can be sure that 3 to 7 of these entities (depending on the chain) are actively validating in the system. It also seems better than a single LayerZero Labs DVN setup. By the way, in an m/n multisig setup, you are compromised if ANY m of the n keys are compromised, and the larger n is relative to m, the more ways an attacker has to pick those m keys. In their BNB setup (2/4), if any 2 of the 4 validators are compromised, you are compromised.
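
The "any m of n" point can be quantified. Two quantities matter:

- The number of key sets that satisfy an m/n multisig, which is the binomial coefficient C(n, m).
- The chance of losing at least m keys. If each key were compromised independently with some probability p, this is a binomial tail.

The value of p below is purely hypothetical. Real key compromises are rarely independent, for example when keys share infrastructure or one operator holds several of them.

```python
from math import comb

def quorum_subsets(m: int, n: int) -> int:
    """Number of distinct key sets of size m that satisfy an m-of-n multisig."""
    return comb(n, m)

def p_compromise(m: int, n: int, p: float) -> float:
    """Probability that at least m of n keys are compromised, assuming each key
    is compromised independently with probability p (simplifying assumption)."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(m, n + 1))

# Hyperlane defaults quoted above, plus Wormhole's 13/19 for comparison.
setups = {"BNB 2/4": (2, 4), "Ethereum 3/7": (3, 7), "Wormhole 13/19": (13, 19)}
p = 0.05  # hypothetical per-key compromise probability
for name, (m, n) in setups.items():
    print(f"{name}: {quorum_subsets(m, n)} sufficient key sets, "
          f"P(compromise) = {p_compromise(m, n, p):.2e}")
```

At a small p like this one, the 2/4 setup offers 6 sufficient key pairs. Its compromise probability also comes out well above the 3/7 and 13/19 setups.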

If you compare these with Wormhole's default 13/19 setup, Wormhole looks a lot better. But I've heard it is upgradable. Do they need 13/19 signers to upgrade? I don't know.

There are two main arguments the GMPs (General Messaging Protocols, LZ & HL in this case) make to defend the current lack of security in individual setups.

1. You can make it as secure as you want by adding as many DVNs/ISMs as you like. This is a marketplace, and the market just isn't choosing its security well.

2. You can upgrade to a more secure setup when one becomes available.

Choosing Your Own Security

In fact, I'm writing this after having to choose my own setup for my protocol built on LayerZero. I had no idea what to choose. LayerZero does not provide any information on the current usage distribution of DVNs, nor do they advise you on a secure setup, as they want to be agnostic. Layerzeroscan only provides data on the distribution of messages across the protocols using LZ, which is not useful to me at all. It doesn't even say which DVNs these protocols are using. That's why I built my own Dune dashboard.

Here are the most used DVNs across major EVM chains:

Outside of the top 6 DVNs I mentioned at the top of this post, none of the DVNs are getting any volume. Why would a protocol trust DVNs other than the active ones? What guarantee is there that they are active now and will stay active in the future? What if you brick your system by choosing a dying DVN? Running a DVN costs money, so a DVN that gets no volume has every reason to turn off its nodes.

It's the same with complex DVNs or ISMs. If an ISM is not being used, it is not battle-tested. If it is not securing any value, why would you trust it to secure your protocol? So the argument that these GMPs are agnostic marketplaces does not hold up at all. Someone has to help crypto protocols choose the right setups.

It is as if Amazon offered a default product for all of your searches and gave you a list of other options without product availability, reviews or even a description.

In my experience, Hyperlane is more eager to engage their clients with education than LayerZero.

It should be easier for more DVNs to start competing in the GMP marketplaces. In reality, there is no way for them to market themselves to the protocols using Hyperlane/LayerZero other than shouting into the void on Twitter. Apparently the teams (LZ said so) are currently working on dashboards to showcase more data about individual DVNs/ISMs. Maybe this post pushed them to do so.

The second main argument is that protocols should use this trusted setup now so that they can upgrade to a ZK bridge or a restaked security setup later down the line.

The Upgradability of Your Setup

First of all, I want to highlight that this is so far from the crypto ethos that got me into this space. Mutability, smh. Let's compare an ERC20 with an omnichain token.

An ERC20

1. Has a fixed supply that nobody can change (most of em)

2. Exists on a blockchain where nobody, including the team itself, can mint extra ERC20s

An OFT or A Warp Asset

1. Has a fixed supply in theory, but an unlimited number of tokens can be minted if the interop setup is compromised, unless there is a rate limit.

2. Has its interop setup managed by a multisig controlled by the token issuer (protocol). This multisig can change the rate limit as well (lol?).

3. Exists on multiple blockchains, where a malicious chain might be able to mint as many tokens as it likes, unless there is a rate limit, which can itself be changed.
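
The "unless there is a rate limit" caveat can be made concrete. Below is a minimal Python sketch of a generic rolling-window mint limiter. It is not LayerZero's or Hyperlane's contract code; the class, the linear-decay design and the `limit`/`window_s` parameters are assumptions for illustration. The limiter bounds how much a compromised message path can mint per window. `set_limit` shows the catch from point 2: whoever controls that call controls the cap.

```python
class MintRateLimiter:
    """Caps tokens minted from cross-chain messages within a rolling window.
    The in-flight amount decays linearly, so capacity refills gradually.
    Hypothetical design, not any GMP's actual implementation."""

    def __init__(self, limit: float, window_s: float, start: float = 0.0):
        self.limit = limit        # max mintable per window (owner-settable)
        self.window_s = window_s  # window length in seconds
        self.in_flight = 0.0      # recently minted amount still counted
        self.last_t = start

    def _decay(self, now: float) -> None:
        # Release capacity in proportion to the time elapsed since last update.
        elapsed = max(0.0, now - self.last_t)
        self.in_flight = max(0.0, self.in_flight - self.limit * elapsed / self.window_s)
        self.last_t = now

    def try_mint(self, amount: float, now: float) -> bool:
        """Record the mint and return True only if it fits under the limit."""
        self._decay(now)
        if self.in_flight + amount > self.limit:
            return False
        self.in_flight += amount
        return True

    def set_limit(self, new_limit: float, now: float) -> None:
        # In the OFT setup described above, this sits behind the issuer's
        # multisig, so a compromised multisig can simply raise the limit.
        self._decay(now)
        self.limit = new_limit
```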

Let's look at team multisigs for a second. At least they are dormant addresses locked up in a basement, right? Right?

@ether_fi is a protocol with $5.5B+ in TVL.

Here is the multisig (Link [4]) securing their weETH OFT. 5 of its 6 wallets have been active in the last 2 months. That means a higher likelihood of their private keys getting stolen. For context, the Ronin ($600m) and Harmony Bridge ($100m) hacks were due to compromised multisigs.

@renzoai is a protocol with $1.5B in TVL, and their ezETH is an xERC20. It is also secured by a 3/5 multisig (Link [5]). All 5 of these addresses have been active recently, and they all seem to be kinda interlinked. But I'm not an expert on-chain sleuth, so I can't really comment on that.

Will Ethena's USDe ever depeg? Perhaps not due to their stablecoin design, but rather because of their interop setup (LayerZero Labs DVN + Horizen DVN, basically a 4/5). At least 7 of their 9 multisig addresses are dormant.

So, can we say a total of around $10B+ is at risk here?

I am not blaming these GMPs. They are simply selling a setup. I am pushing the community to demand enough security from the protocols that are using these setups. Did we all forget that the bridge hacks have accounted for >50% of all funds we have lost? Now we are offering billions more on a platter to the hackers around the world. Kim Jong-Un is probably rubbing his hands right now.

Native Bridges, Ignored, And Left for Dead

It is easier to point out problems than to offer solutions. So what is the best security for cross-chain messaging/tokens right now? I would suggest studying wstETH by Lido. It uses native bridges both to bridge and to control the upgradable token setups on L2s. The upgradability is controlled by the Lido DAO on L1. Apart from the upgradability aspect, I have no issues with this setup. There is no way an unlimited amount of wstETH can be minted in this case.

There will be restaking-based solutions in the future; hopefully they will offer much better security than what we have today.

Closing Thoughts

I used to think very highly of LayerZero as a protocol, a protocol that is marketed (x.com/mark_murdock3/…) as a peer of Bitcoin and Ethereum: Bitcoin, Ethereum, LayerZero. But I do not feel strongly about it anymore. I don't think it's even close. Bitcoiners chose the small-block chain, Ethereans still care about solo stakers, but the protocols using LayerZero are fine with one- or two-DVN setups.

This is not a post targeted towards any of the GMPs/protocols mentioned here. I wanted to voice out my concern because I hold a lot more ETH than I hold ZRO (I do hold some ZRO, sandmanarc.eth). I have also integrated LayerZero into the protocol I am currently building. Although I am having second thoughts about it now.

Let's demand better standards from our industry. - A humble community member, Sand

zyot retweeted

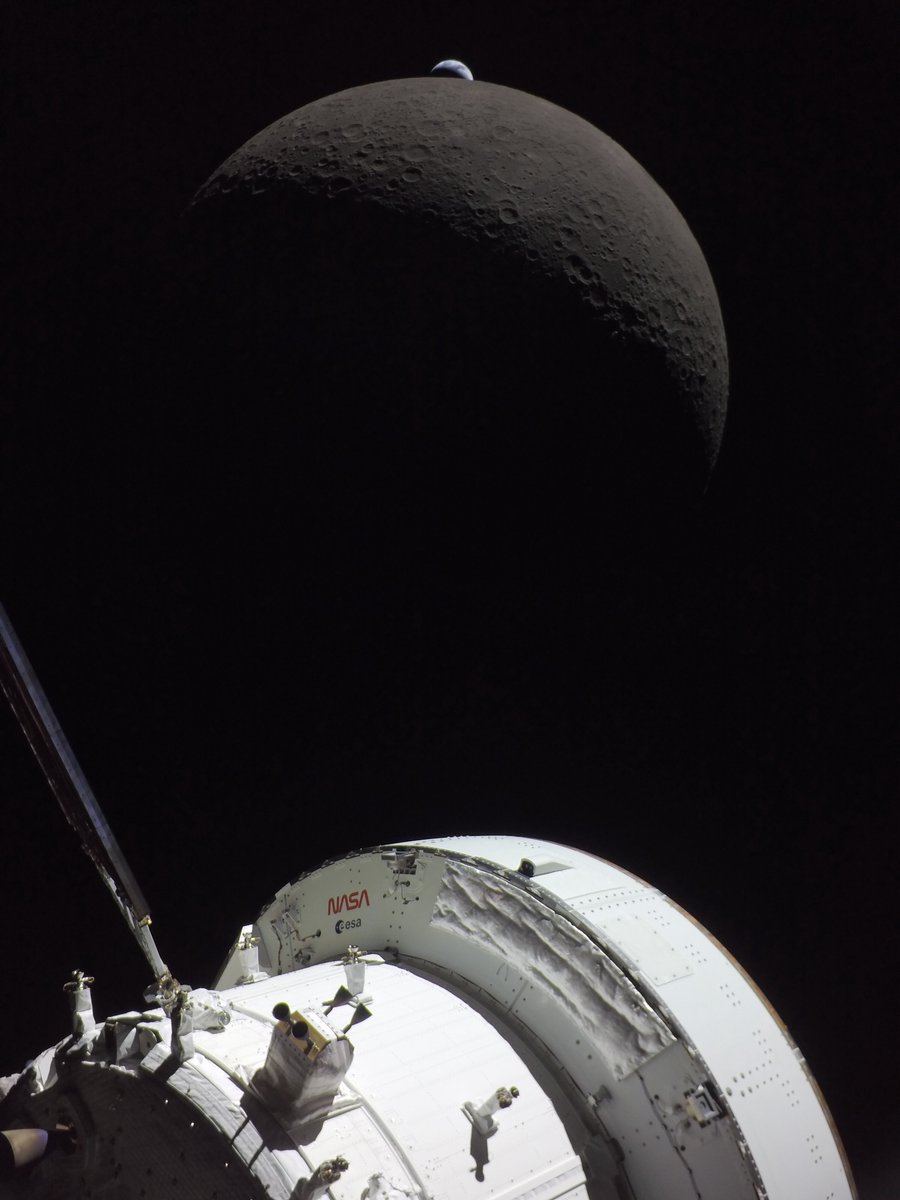

Only one chance in this lifetime…

Like watching a sunset at the beach from the most foreign seat in the cosmos, I couldn’t resist a cell phone video of Earthset. You can hear the shutter on the Nikon as @Astro_Christina is hammering away on 3-shot brackets, capturing those exceptional Earthset photos through the 400mm lens. @AstroVicGlover was in window 3 watching, with @Astro_Jeremy next to him.

I could barely see the Moon through the docking hatch window, but the iPhone was the perfect size to catch the view… this is uncropped and uncut, with 8x zoom, which is quite comparable to the view of the human eye. Enjoy.

zyot retweeted

Feels like pooled lending protocols would benefit from a rate limit on the supply of an asset being deposited for collateral

Like, if the current supply is 100m and the supply cap is 300m, the supply should only be allowed to go to 110m in the next 10 minutes. Nobody needs to deposit all 200m in one shot

This matters because if/when an exotic asset is hacked, the impact of the hack is constrained by the size of the exit paths for that asset. Especially when you consider that many hacks are infinite mint bugs… there the size of the exits literally determines the size of the hack.

Lending protocols are often the largest exits (DEX liquidity is usually pretty small). Having a “smart cap” that is a bit above current supply, which can adjust over a few hours to the true cap, would make a huge difference. It would have saved rsETH depositors $200m today
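
Here is a minimal Python sketch of such a "smart cap", using the numbers from the example: new supply is limited to 10% of current supply per 10 minutes, under a hard cap. The token-bucket design and all names are hypothetical, not a description of any lending protocol's code. Because the allowance scales with current supply, the effective cap starts just above current supply. Under sustained demand, it can compound up to the hard cap in roughly two hours (100m to 300m at about 10% per 10 minutes).

```python
class SmartSupplyCap:
    """Token-bucket limit on new collateral supply for one asset.
    The budget refills at `growth_pct` of current supply per `window_s`,
    and total supply can never exceed `hard_cap`. Hypothetical design."""

    def __init__(self, supply: float, hard_cap: float,
                 growth_pct: float = 0.10, window_s: float = 600.0,
                 start: float = 0.0):
        self.supply = supply
        self.hard_cap = hard_cap
        self.growth_pct = growth_pct
        self.window_s = window_s
        self.budget = growth_pct * supply  # start with one window's allowance
        self.last_t = start

    def _refill(self, now: float) -> None:
        # Refill the budget in proportion to elapsed time, up to one window's worth.
        max_budget = self.growth_pct * self.supply
        elapsed = max(0.0, now - self.last_t)
        self.budget = min(max_budget, self.budget + max_budget * elapsed / self.window_s)
        self.last_t = now

    def effective_cap(self, now: float) -> float:
        """Largest total supply allowed right now."""
        self._refill(now)
        return min(self.hard_cap, self.supply + self.budget)

    def try_deposit(self, amount: float, now: float) -> bool:
        """Accept the deposit only if it stays under the effective cap."""
        if self.supply + amount > self.effective_cap(now):
            return False
        self.budget -= amount
        self.supply += amount
        return True
```

In this model, an infinite-mint attacker can post at most about one window's allowance as collateral before the budget runs dry. That allowance is the "exit size" the post wants to keep small.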

This also raises an interesting point: asset issuers should want this too. If you are an asset issuer who issues receipt tokens which have a redemption delay, then you actually aren’t worried about a hacker redeeming with you. But you need possible exits to be as small as possible while not impeding normal users. High supply caps need to be seen as a liability, rather than a sign of stature.

@TimDraper What is your position on how to address quantum computing risks? It looks as if Bitcoin is headed for a fork. How contentious it will be, I don't know, but in 18 months the question will most likely be even more pressing.

I bought Bitcoin at $4. Or so I thought.

Peter Viscenne had offered to mine it for me. He bought some fast mining chips from Butterfly Labs, but rather than delivering them to him, they used them to mine their own bitcoin. Then when Peter finally got the chips, Bitcoin was over $30. So he mined what he could. Then we lost all our Bitcoin to Mt. Gox when they “lost” the money.

Since Bitcoin didn’t drop much on the Mt. Gox news, I did some research.

It turned out that Bitcoin was being used for remitting money, paying unbanked employees, and creating economies where there weren’t any.

So when I heard about the US Marshals’ auction, I bid over market at $632 and bought all nine lots offered.

Got on Fox Business in 2014 and said Bitcoin would hit $10,000 in three years.

The host looked at me like "Why did we have this guy on the show?"

Three years to the day, Bitcoin hit $10,000.

After that, my predictions have not been so prescient, but I have reason to believe that Bitcoin will reach $250k in 18 months… and eventually I expect the number to be higher as Bitcoin rises and the dollar falls to inflationary pressures.

zyot retweeted

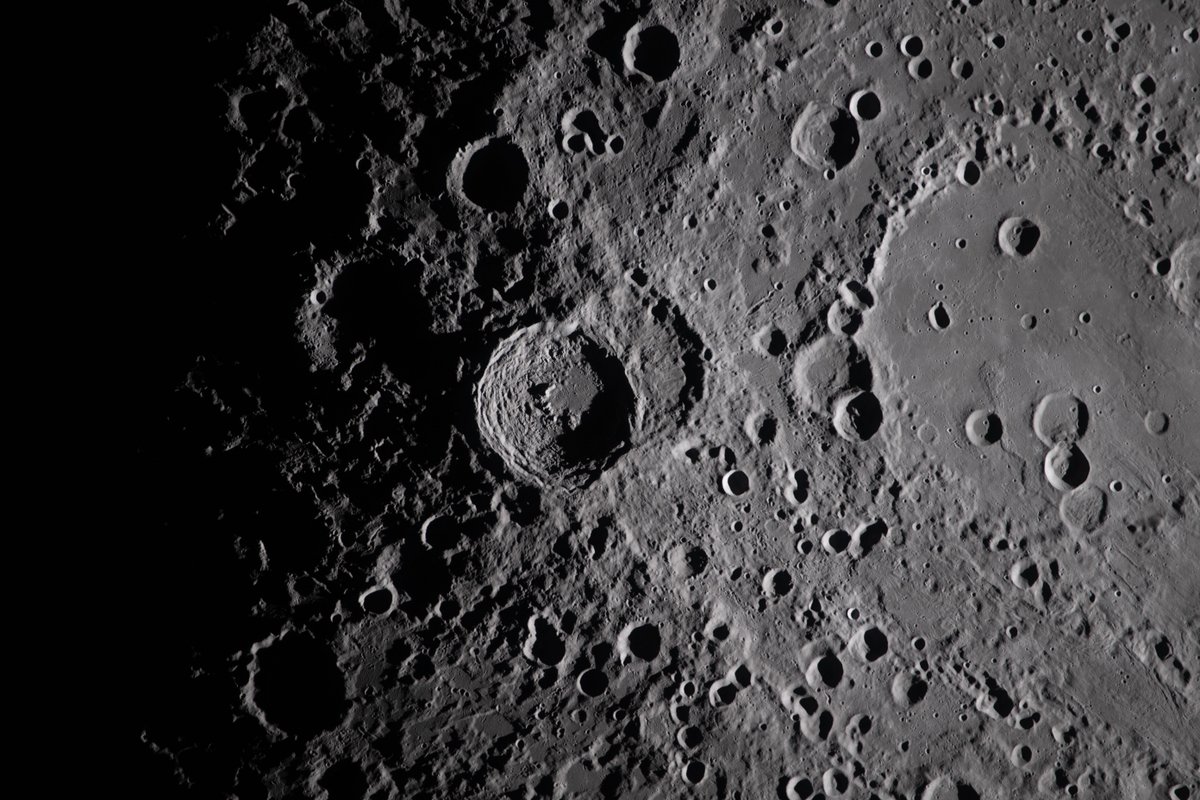

Hello, Moon. It’s great to be back.

Here’s a taste of what the Artemis II astronauts photographed during their flight around the Moon. Check out more photos from the mission: nasa.gov/artemis-ii-mul…

zyot retweeted

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software.

It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans.

anthropic.com/glasswing

zyot retweeted

@pmarca Diffusion is the answer: output multiple tokens per forward pass, not one. On PinchBench, the benchmark for OpenClaw, Mercury 2 from @_inception_ai sits on the pareto frontier for speed-quality and cost-quality: pinchbench.com/?view=value&gr…

@eternisai Congrats @eternisai! Glad to see you showing the world everything you guys have been grinding on over the last few years!!

zyot retweeted

Best breakdown of Karpathy's "second brain" system I've seen. My co-founder turned it into an actual step-by-step build.

The 80/20:

1. Three folders: raw/ (dump everything), wiki/ (AI organizes it), outputs/ (AI answers your questions)

2. One schema file (CLAUDE.md) that tells the AI how to organize your knowledge. Copy the template in the article.

3. Don't organize anything by hand. Drop raw files in, tell the AI "compile the wiki." Walk away.

4. Ask questions against your own knowledge base. Save the answers back. Every question makes the next one better.

5. Monthly health check: have the AI flag contradictions, missing sources, and gaps.

6. Skip Obsidian. A folder of .md files and a good schema beats 47 plugins every time.
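
The folder layout from steps 1 and 2 is easy to scaffold by hand. Here is a minimal Python sketch that assumes only the folder names given in the post. The CLAUDE.md content is a placeholder because the actual schema template lives in the linked article. This is not the "free skill" mentioned below.

```python
from pathlib import Path

FOLDERS = ("raw", "wiki", "outputs")  # dump / AI-organized wiki / AI answers

# Placeholder only: paste the schema template from the article here.
SCHEMA_PLACEHOLDER = "# CLAUDE.md\n\n<schema template from the article goes here>\n"

def scaffold(root: str) -> list[str]:
    """Create the three folders and a schema file under `root`, returning the
    sorted top-level entries. Safe to re-run: existing folders and an existing
    CLAUDE.md are left untouched."""
    base = Path(root)
    for name in FOLDERS:
        (base / name).mkdir(parents=True, exist_ok=True)
    schema = base / "CLAUDE.md"
    if not schema.exists():
        schema.write_text(SCHEMA_PLACEHOLDER, encoding="utf-8")
    return sorted(p.name for p in base.iterdir())
```

Calling `scaffold("second-brain")` creates the layout under the current directory. Re-running it leaves existing files alone.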

He includes a free skill that scaffolds the whole system in 60 seconds.

Nick Spisak @NickSpisak_

zyot retweeted

Today is a monumental day for quantum computing and cryptography. Two breakthrough papers just landed (links in next tweet). Both papers improve Shor's algorithm, infamous for cracking RSA and elliptic curve cryptography. The two results compound, optimising separate layers of the quantum stack. The results are shocking. I expect a narrative shift and a further R&D boost toward post-quantum cryptography.

The first paper is by Google Quantum AI. They tackle the (logical) Shor algorithm, tailoring it to crack Bitcoin and Ethereum signatures. The algorithm runs on ~1K logical qubits for the 256-bit elliptic curve secp256k1. Due to the low circuit depth, a fast superconducting computer would recover private keys in minutes. I'm grateful to have joined as a late paper co-author, in large part for the chance to interact with experts and the alpha gleaned from internal discussions.

The second paper is by a stealthy startup called Oratomic, with ex-Google and prominent Caltech faculty. Their starting point is Google's improvements to the logical quantum circuit. They then apply improvements at the physical layer, with tricks specific to neutral atom quantum computers. The result estimates that 26,000 atomic qubits are sufficient to break 256-bit elliptic curve signatures. This would be roughly a 40x improvement in physical qubit count over previous state-of-the-art. On the flip side, a single Shor run would take ~10 days due to the relatively slow speed of neutral atoms.

Below are my key takeaways. As a disclaimer, I am not a quantum expert. Time is needed for the results to be properly vetted. Based on my interactions with the team, I have faith the Google Quantum AI results are conservative. The Oratomic paper is much harder for me to assess, especially because of the use of more exotic qLDPC codes. I will take it with a grain of salt until the dust settles.

→ q-day: My confidence in q-day by 2032 has shot up significantly. IMO there's at least a 10% chance that by 2032 a quantum computer recovers a secp256k1 ECDSA private key from an exposed public key. While a cryptographically-relevant quantum computer (CRQC) before 2030 still feels unlikely, now is undoubtedly the time to start preparing.

→ censorship: The Google paper uses a zero-knowledge (ZK) proof to demonstrate the algorithm's existence without leaking actual optimisations. From now on, assume state-of-the-art algorithms will be censored. There may be self-censorship for moral or commercial reasons, or because of government pressure. A blackout in academic publications would be a tell-tale sign.

→ cracking time: A superconducting quantum computer, the type Google is building, could crack keys in minutes. This is because the optimised quantum circuit is just 100M Toffoli gates, which is surprisingly shallow. (Toffoli gates are hard because they require production of so-called "magic states".) Each Toffoli gate would take ~10 microseconds on a superconducting platform, totalling ~1,000 sec of Shor runtime.

→ latency optimisations: Two latency optimisations bring key cracking time to single-digit minutes. The first parallelises computation across quantum devices. The second involves feeding the pubkey to the quantum computer mid-flight, after a generic setup phase.

→ fast- and slow-clock: At first approximation there are two families of quantum computers. The fast-clock flavour, which includes superconducting and photonic architectures, runs at roughly 100 kHz. The slow-clock flavour, which includes trapped ion and neutral atom architectures, runs roughly 1,000x slower (~100 Hz, or ~1 week to crack a single key).

→ qubit count: The size-optimised variant of the algorithm runs on 1,200 logical qubits. On a superconducting computer with surface code error correction that's roughly 500K physical qubits, a 400:1 physical-to-logical ratio. The surface code is conservative, assuming only four-way nearest-neighbour grid connectivity. It was demonstrated last year by Google on a real quantum computer.

→ future gains: Low-hanging fruit is still being picked, with at least one of the Google optimisations resulting from a surprisingly simple observation. Interestingly, AI was not (yet!) tasked to find optimisations. This was also the first time authors such as Craig Gidney attacked elliptic curves (as opposed to RSA). Shor logical qubit count could plausibly go under 1K soonish.

→ error correction: The physical-to-logical ratio for superconducting computers could go under 100:1. That would mean ~100K physical qubits for a CRQC, two orders of magnitude away from the state of the art. Neutral atom quantum computers are amenable to error correcting codes other than the surface code. While much slower to run, they can bring the physical-to-logical qubit ratio down closer to 10:1.

→ Bitcoin PoW: Commercially-viable Bitcoin PoW via Grover's algorithm is not happening any time soon. We're talking decades, possibly centuries away. This observation should help focus the discussion on ECDSA and Schnorr. (Side note: as an unofficial Bitcoin security researcher, I still believe Bitcoin PoW is cooked due to the dwindling security budget.)

→ team quality: The folks at Google Quantum AI are the real deal. Craig Gidney (@CraigGidney) is arguably the world's top quantum circuit optimisooor. Just last year he squeezed 10x out of Shor for RSA, bringing the physical qubit count down from 10M to 1M. Special thanks to the Google team for patiently answering all my newb questions with detailed, fact-based answers. I was expecting some hype, but found none.
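
The headline numbers in these takeaways can be sanity-checked with simple arithmetic. This Python snippet uses only figures quoted in the thread. It computes serial runtime, ignoring the parallelism and mid-flight pubkey optimisations, and physical qubit counts at the quoted physical-to-logical ratios.

```python
# Back-of-envelope check of the figures quoted in the takeaways above.
# Every input comes from the thread; nothing here is new data.

TOFFOLI_GATES = 100_000_000  # "just 100M Toffoli gates"
TOFFOLI_TIME_US = 10         # "~10 microseconds" per Toffoli, superconducting
SLOW_CLOCK_FACTOR = 1_000    # trapped ion / neutral atom: "roughly 1,000x slower"
LOGICAL_QUBITS = 1_200       # size-optimised variant

def runtime_seconds(gates: int, gate_time_us: float) -> float:
    """Serial runtime if every Toffoli takes gate_time_us microseconds."""
    return gates * gate_time_us / 1_000_000

def physical_qubits(logical: int, ratio: int) -> int:
    """Physical qubits for a given physical-to-logical overhead ratio."""
    return logical * ratio

fast = runtime_seconds(TOFFOLI_GATES, TOFFOLI_TIME_US)
slow = fast * SLOW_CLOCK_FACTOR
print(f"fast clock: {fast:,.0f} s (~{fast / 60:.0f} min)")
print(f"slow clock: {slow:,.0f} s (~{slow / 86_400:.1f} days)")
for ratio in (400, 100, 10):  # surface code today, hoped-for, neutral-atom codes
    print(f"{ratio}:1 -> {physical_qubits(LOGICAL_QUBITS, ratio):,} physical qubits")
```

The snippet reproduces three of the thread's figures:

- About 1,000 s (roughly 17 minutes) on a fast-clock machine.
- 1,000,000 s (about 11.6 days) at a 1,000x slower clock, the same order as the "~1 week" and "~10 days" figures.
- 480,000 physical qubits at 400:1, which the thread rounds to 500K.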

zyot retweeted

A top Research Scientist at Anthropic showed how Claude found zero-day vulnerabilities live on stage.

By Nicholas Carlini.

It discovered a zero-day in Ghost, which has 50,000 stars on GitHub and had never had a critical security vulnerability in its history.

In 90 minutes, it found the blind SQL injection, took the admin API key, and then repeated the same move against the Linux kernel.

---

Nicholas Carlini presents a stark warning: LLMs have crossed a critical threshold where they can autonomously discover and exploit 0-day vulnerabilities in major, heavily-audited software — including the Linux kernel and popular web applications.

Using a surprisingly minimal "scaffold" built around Claude, Anthropic's research has uncovered 500+ high-severity vulnerabilities.

Carlini demonstrates two real-world case studies (Ghost CMS SQL injection and a Linux kernel NFS heap overflow dating back to 2003), shows exponential capability growth using METR data, and argues that the security community must urgently prepare for a world where AI-powered offensive capabilities far outpace current defenses.

---

From the 'unprompted' YT channel (link in comment)

zyot retweeted

@VitalikButerin @zengjiajun_eth Check out Freysa (FAI)

The best local AI agent (silo app)

@freysa_ai
