Pascal2_22./

1.2K posts

@Pascal2_22

Accelerating @Gradient_HQ Advancing OIS stack: ./ Open AI for Sovereign Future. Make it you against the matrix

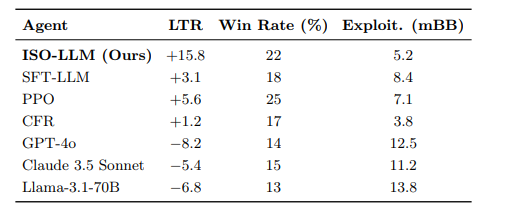

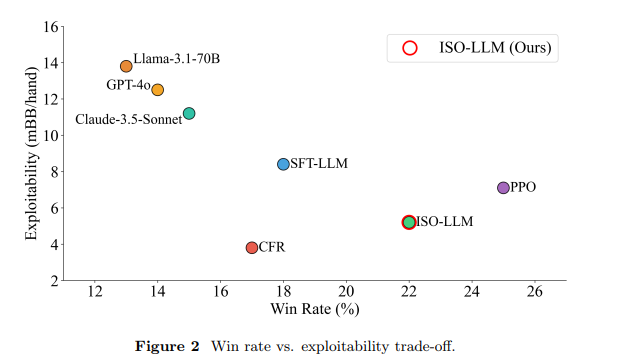

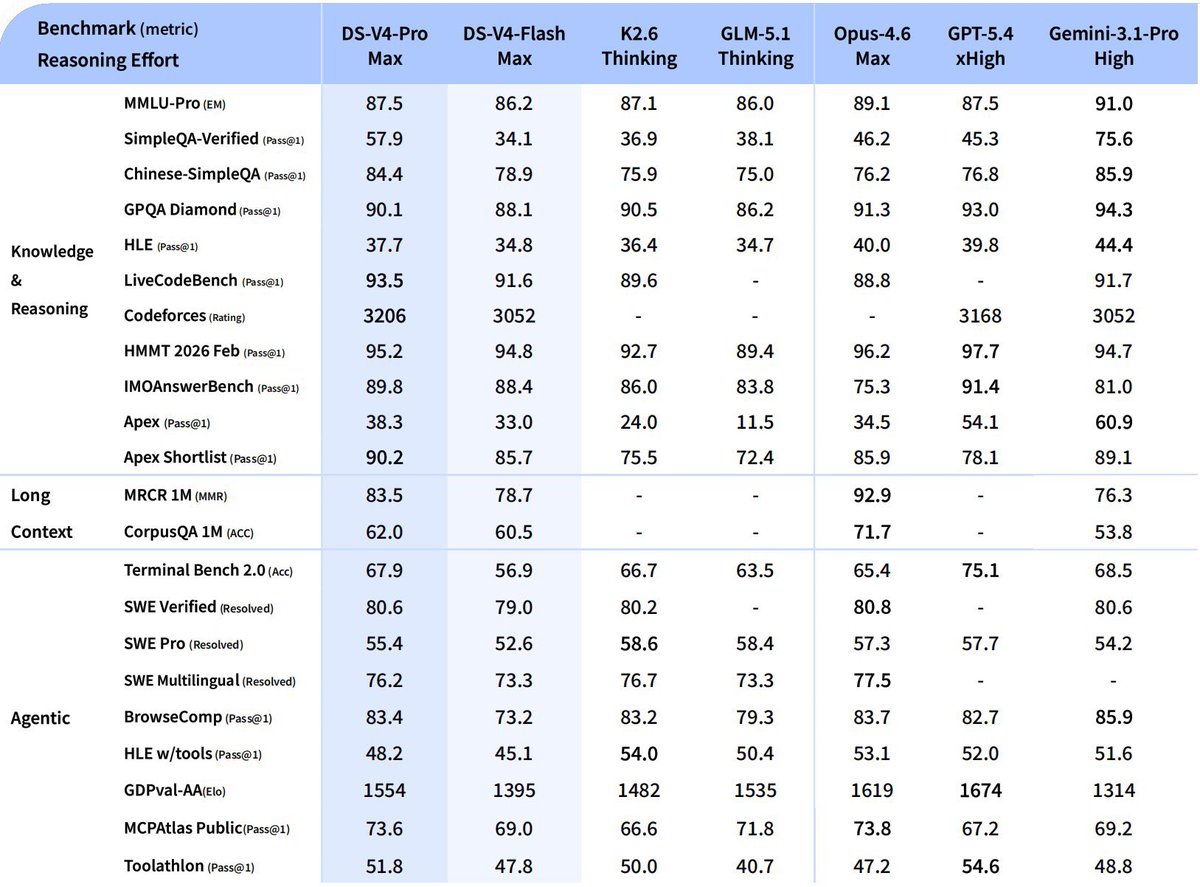

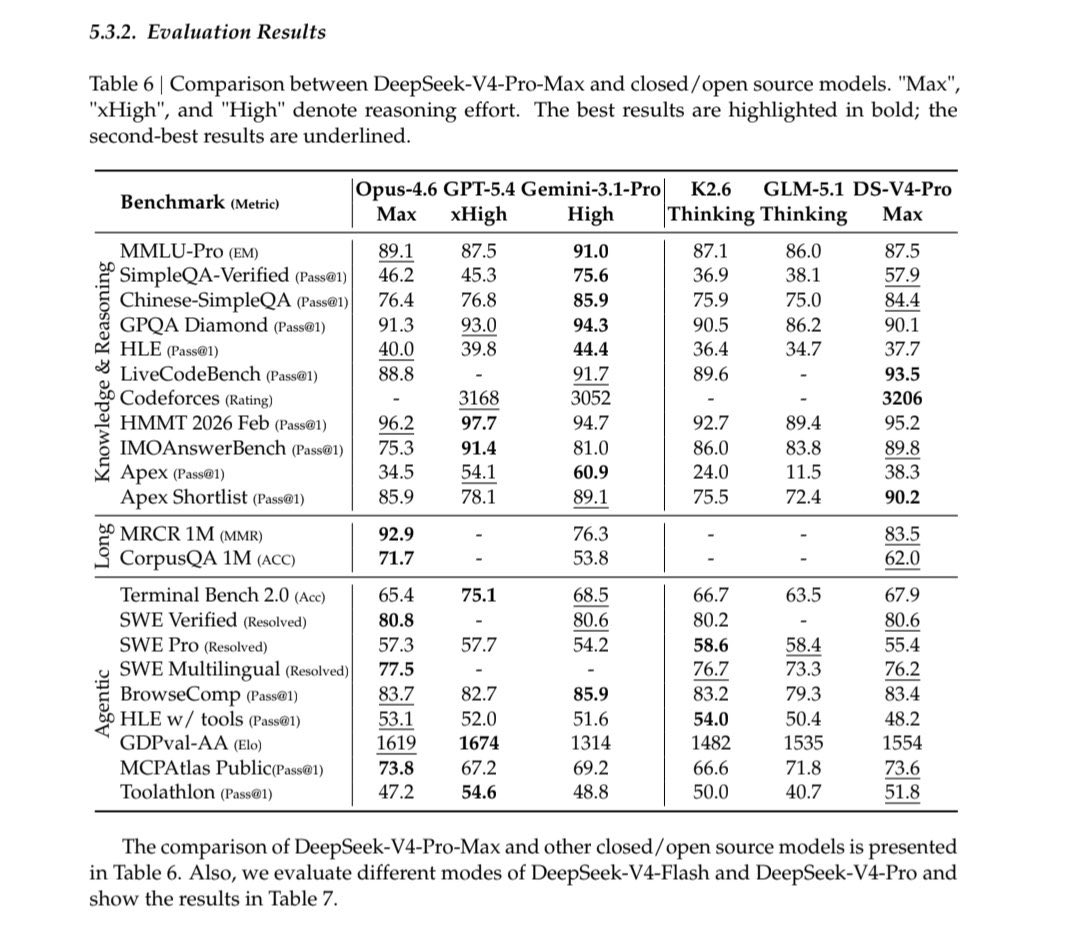

The right-hand charts show exactly how a resource-constrained lab redesigns attention to need less HBM. DeepSeek didn't solve long context by throwing more memory at it. They redesigned how attention accumulates memory, so the KV cache stays far smaller as context grows. That's architectural innovation under resource constraint, not the hardware brute force that frontier labs rely on.

The left chart shows performance: DeepSeek V4 Pro Max beats or matches Claude Opus 4.6, GPT-5.4 and Gemini 3.1 Pro across nearly every benchmark. Knowledge, reasoning, agentic tasks. The gap between V4 and frontier closed-source models is marginal or nonexistent on most tasks.

The right chart shows efficiency: at long context, DeepSeek V4 Pro uses 3.7x fewer FLOPs than V3.2, and V4 Flash uses 9.8x fewer. The KV cache (the memory that explodes as context grows) is 9.5x to 13.7x smaller.

Same benchmark performance. A fraction of the compute and memory cost. Frontier labs scale infrastructure to match model demands. DeepSeek scales architecture to outrun the hardware bill.
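To make the "memory that explodes as context grows" point concrete, here is a rough back-of-the-envelope sketch. It assumes a plain attention baseline where every layer caches full keys and values, and the layer count, KV head count and head dimension are hypothetical placeholders, not DeepSeek's actual configuration. The 9.5x and 13.7x factors come from the post, where they are measured against V3.2. Here they are simply applied to the toy baseline to show what a reduction of that size means at long context.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_elem=2):
    """Bytes needed to cache keys and values for one sequence in plain attention."""
    # Factor 2: one cached tensor for keys and one for values.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem


def reduced_cache_bytes(baseline_bytes, reduction_factor):
    """Cache size after shrinking the baseline by a constant factor."""
    return baseline_bytes / reduction_factor


# Hypothetical model shape, chosen only for illustration (an assumption).
HYPOTHETICAL_SHAPE = dict(n_layers=60, n_kv_heads=8, head_dim=128)

# Reduction factors quoted in the post (relative to V3.2 there, to the toy baseline here).
QUOTED_FACTORS = (9.5, 13.7)


def main():
    gib = 1024 ** 3
    for seq_len in (8_000, 128_000, 1_000_000):
        base = kv_cache_bytes(seq_len, **HYPOTHETICAL_SHAPE)
        shrunk = [reduced_cache_bytes(base, f) / gib for f in QUOTED_FACTORS]
        print(
            f"{seq_len:>9} tokens: baseline {base / gib:7.2f} GiB, "
            f"at 9.5x {shrunk[0]:6.2f} GiB, at 13.7x {shrunk[1]:6.2f} GiB"
        )


if __name__ == "__main__":
    main()
```

The baseline doubles whenever the context doubles, which is why long context normally turns into an HBM problem. A constant-factor reduction like the ones quoted moves the point where the cache no longer fits on a given device.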

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length.

🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models.
🔹 DeepSeek-V4-Flash: 284B total / 13B active params. Your fast, efficient, and economical choice.

Try it now at chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today!

📄 Tech Report: huggingface.co/deepseek-ai/De…
🤗 Open Weights: huggingface.co/collections/de…

1/n
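The total / active split in the announcement suggests that only a small share of each model's parameters is used per token. A quick sketch of that arithmetic, using only the parameter counts quoted above (the dictionary layout and function name are illustrative assumptions):

```python
# Parameter counts as quoted in the announcement: (total, active per token).
MODELS = {
    "DeepSeek-V4-Pro": (1.6e12, 49e9),
    "DeepSeek-V4-Flash": (284e9, 13e9),
}


def active_fraction(total_params, active_params):
    """Share of the model's parameters that is active for a single token."""
    return active_params / total_params


def main():
    for name, (total, active) in MODELS.items():
        print(f"{name}: {active_fraction(total, active):.2%} of parameters active")


if __name__ == "__main__":
    main()
```

On these figures, roughly 3% of V4-Pro's parameters and under 5% of V4-Flash's are active per token, which is consistent with the cost-effectiveness framing of the announcement.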

Awesome to see @tryParallax’s distributed framework for heterogeneous machines being implemented and serving up inferences! Build and customize your own clusters for AI like never before 🤖 ./ LFG @Gradient_HQ