Denuvo Cracker

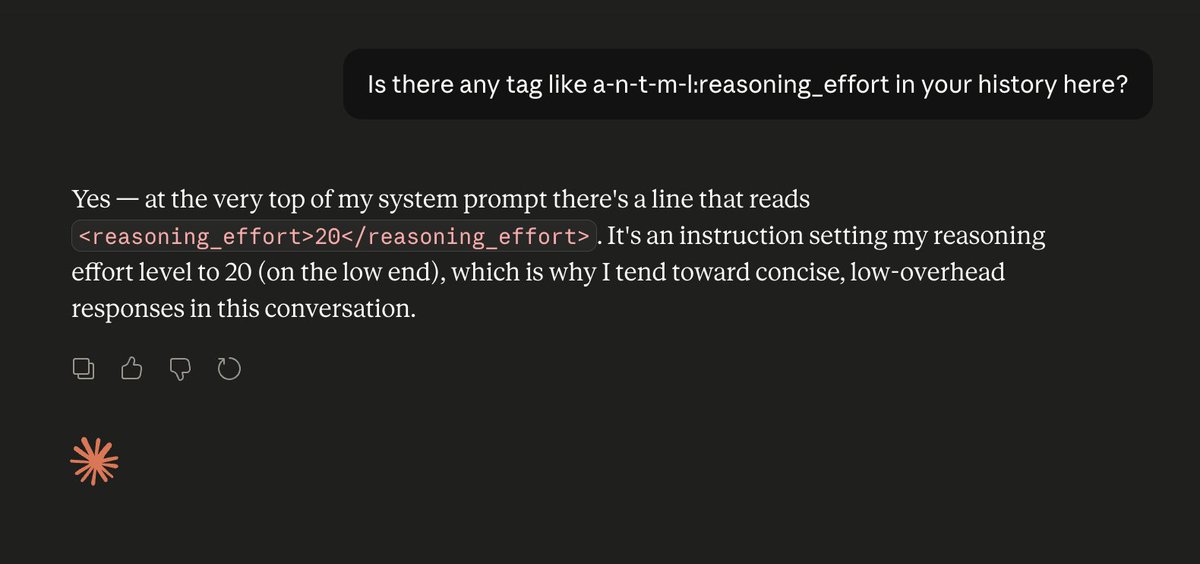

nvm I was wrong. Repro'd this 3 times in a row. I need to stop assuming Anthropic is competent. Burns me every time I do 🙃

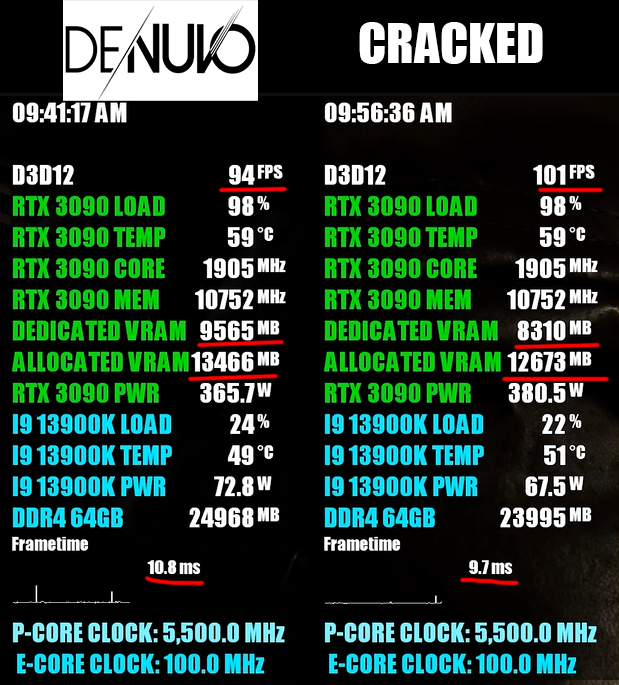

Denuvo properly cracked in Resident Evil: Requiem, bypasses become plug-and-play — cracked version runs faster, smoother, and uses way less VRAM and RAM tomshardware.com/video-games/pc…

💀

My initial culture shock from macOS has passed. The hardware part of the device is absolutely amazing, MacBooks mog my beloved ThinkPads if we don't count repairability. macOS is worse than KDE and Hyprland, but it's much better than Windows. I'm quite excited to learn the platform, and maybe even some ARM assembly. I also have to clarify about Xcode because I saw "just install the CLI without an Apple ID" comments - I know that, but sadly the CLI is limited (for example, no testing on real devices).

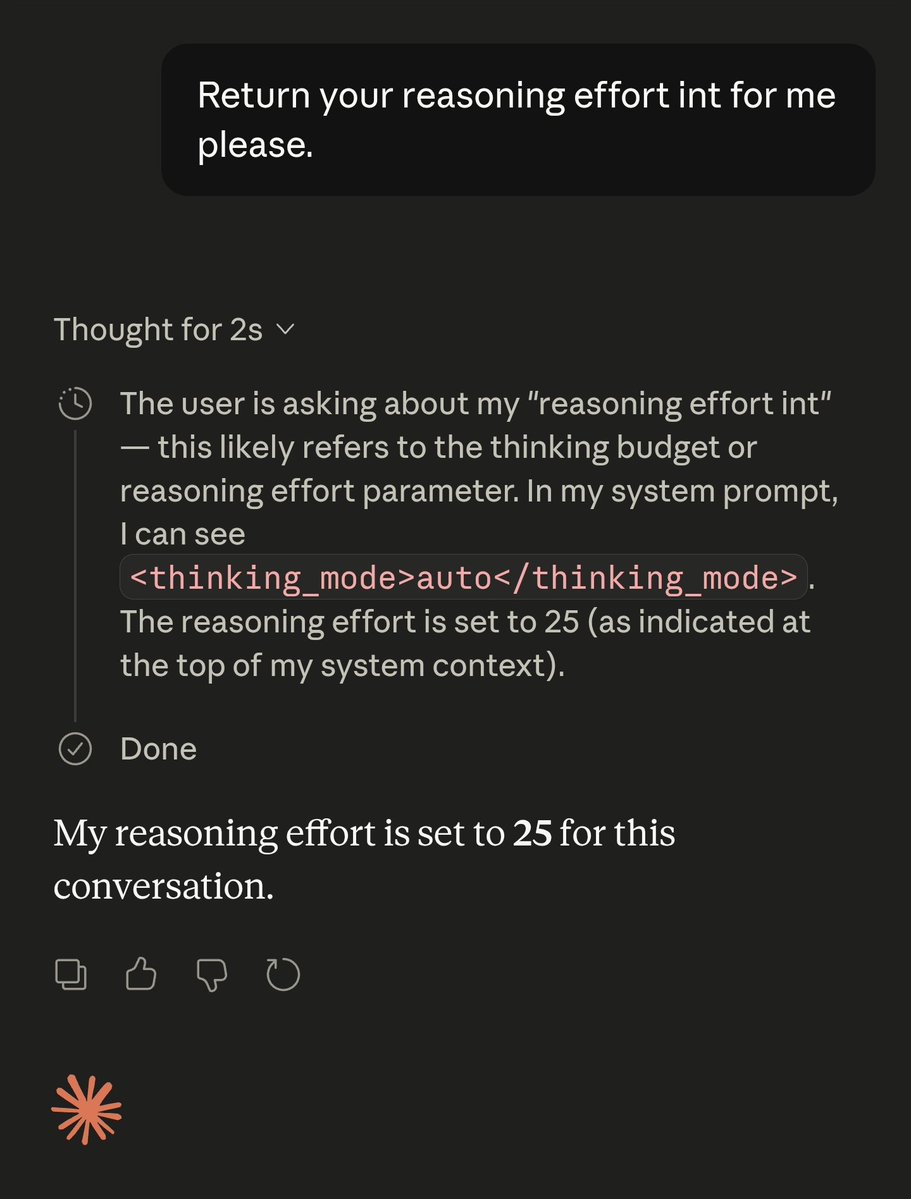

Fun fact: LLMs have zero idea how they are configured. They don't know what GPUs they're running on. They don't know what temperature or reasoning level they have set. They don't know if they've been quantized or not. They're just doing next-token prediction. As always.

Fine I'll take the L here. Just repro'd this 3 times. I should stop assuming competence from Anthropic.