Rasool Fakoor

@rasoolfa

Building agents that reason, adapt, and act with RL and friends!

📢 Call for papers: Workshop on Methods and Reinforcement Learning Environments for Evaluating AI Agents @ ACM CAIS 2026 (inaugural edition!)
Topics include:
- Design principles for effective RL Environments
- Methods to evaluate Agents, esp. causal/interventional techniques

At the Boson AI Higgs Audio Hackathon in collaboration with @Eigen_AI_Labs, you’ll work with ultra-low latency inference, expressive prosody modelling, advanced voice cloning + audio understanding with our model served by Eigen AI. Apply today: luma.com/3vnw0e0q

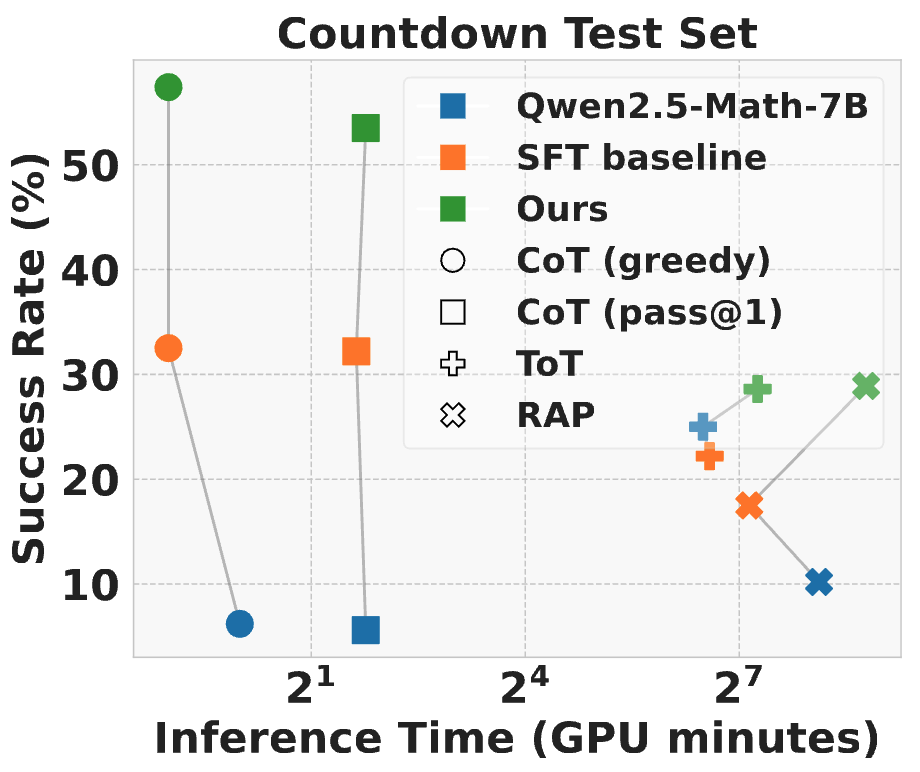

Can we make LLMs reason effectively without a huge inference time cost? We show a powerful approach through learning and forgetting! Our recipe:
1️⃣ Aggregate reasoning paths from diverse sources: Chain-of-Thought, inference-time search (Tree-of-Thought, Reasoning-via-Planning), classic algorithms (BFS, DFS)
2️⃣ Learn successful reasoning paths ✅ while forgetting failed reasoning paths ❌ at the same time, which we call Unlikelihood Fine-Tuning (UFT)
3️⃣ Small learning rate is crucial to preserve inference-time search capabilities
Results on challenging math games, Countdown & Game-of-24:
⚡ 180× faster inference than search-based baseline
📈 Beats CoT and inference-time search (ToT, RAP)
📄 Paper: arxiv.org/abs/2504.11364
💻 Code & data: github.com/twni2016/llm-r…
Work completed during my internship at @AmazonScience. Thank you to my co-authors @allen_a_nie @Sapana_007 @yaoliucs Huzefa Rangwala @rasoolfa!
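The "learn while forgetting" step in the post above can be illustrated with a small sketch. The post does not spell out the UFT objective, so this assumes the generic token-level unlikelihood term, -log(1 - p(token)), added to an ordinary negative log-likelihood term. The function names and the `alpha` weight are hypothetical and are not taken from the authors' code.

```python
import math

def softmax(logits):
    """Numerically stable softmax over one step's logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def likelihood_loss(step_logits, target_ids):
    """'Learn' term: mean -log p(token) over a successful path."""
    losses = [-math.log(softmax(logits)[t])
              for logits, t in zip(step_logits, target_ids)]
    return sum(losses) / len(losses)

def unlikelihood_loss(step_logits, target_ids, eps=1e-12):
    """'Forget' term (assumed form): mean -log(1 - p(token)) over a failed path."""
    losses = [-math.log(max(1.0 - softmax(logits)[t], eps))
              for logits, t in zip(step_logits, target_ids)]
    return sum(losses) / len(losses)

def combined_loss(success, failure, alpha=1.0):
    """Sum of both terms; alpha is a hypothetical weight on the forget term.

    success and failure are (step_logits, target_ids) pairs.
    """
    return likelihood_loss(*success) + alpha * unlikelihood_loss(*failure)

# Toy example: a two-token vocabulary and one decoding step per path.
good_path = ([[2.0, 0.0]], [0])   # the model already favors the good token
bad_path = ([[2.0, 0.0]], [1])    # the failed token has low probability
loss = combined_loss(good_path, bad_path, alpha=1.0)
```

Minimizing the first term raises the probability of tokens on successful paths, while the second term grows as the model assigns more probability to tokens on failed paths. In a real fine-tuning run the same two terms would be computed on model logits with an autodiff framework.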

Our team is *hiring* interns & researchers! We’re a small team of hardcore researchers & engineers working on foundation models, agentic methods, and embodiment. If you have strong publications and related experience, plz fill out the application form. forms.gle/4bUeFfksUhCLap…

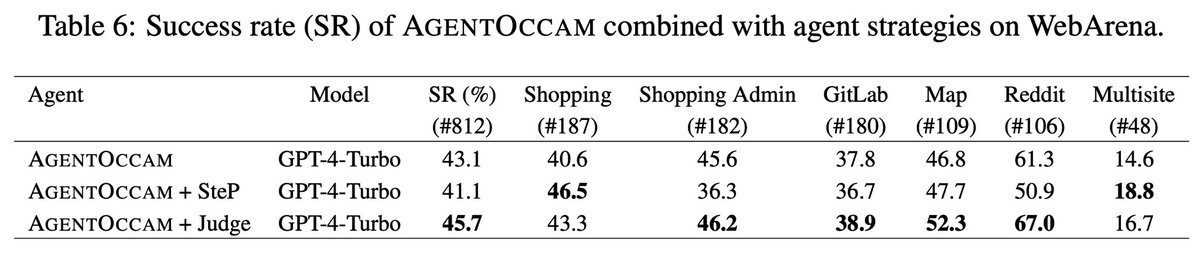

👾 Introducing AgentOccam: Automating Web Tasks with LLMs! 🌐 AgentOccam showcases the impressive power of Large Language Models (LLMs) on web tasks, without any in-context examples, new agent roles, online feedback, or search strategies. 🏄🏄🏄
🧙 Link: arxiv.org/abs/2410.13825
🧐 By refining the observation and action spaces, AgentOccam achieves groundbreaking zero-shot performance, outperforming previous methods on the WebArena benchmark. This simple yet effective approach underlines the importance of aligning these spaces closely with LLM capabilities for enhanced efficiency. 📈
✨ Highlights:
- AgentOccam leads with a 29.4% improvement over the state-of-the-art method SteP, and a 161% boost in success rate compared to the vanilla agent. 🤖
- Achievements made possible without complicating the process with additional examples or strategies. 🚫
- All our replication work, prompts, and evaluator error rectifications are transparently shared in the appendix. 📚
🌟 Special thanks to my super brilliant and considerate mentors Yao and Rasool, our supportive manager Huzefa, and the invaluable suggestions and contributions from Sapana, Pratik, and George. Your guidance and support have been pivotal in this journey!
#AgentOccam #LLM #WebAutomation #AI