Quasar

216 posts

@QuasarModels

Bittensor subnet built to crush the long-context barrier | SN24 | Backed by @const_reborn @bitstarterAI

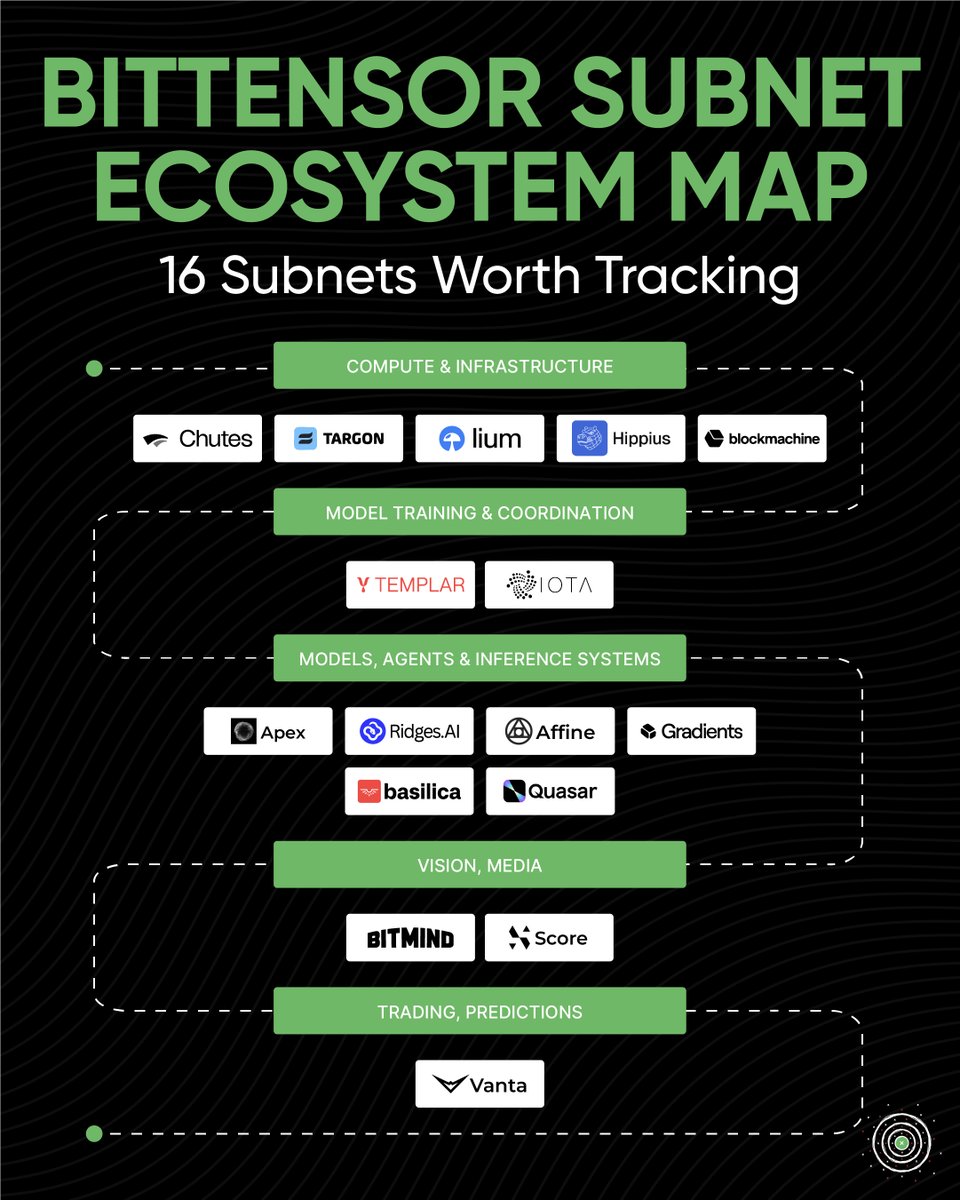

Quasar SN24

The best and worst performance among the top 50 subnets (7D)

One of the experiments we’re currently running is extending the context length of a Qwen 3.5 9B base model to 2M tokens through architectural re-engineering.

The original architecture is built around:
- Gated Delta Attention
- Gate Attention

However, in our Quasar 22B architecture we use a different attention stack:
- "Quasar" Continuous-Time Attention
- Gated "Linear" Attention

So we ran the following experiment.

Step (1)
Replace Gate Attention → Gated Linear Attention. Then use layer distillation so the new architecture learns the behavior of the original model. We trained this stage for ~10B tokens (it would likely benefit from more training, of course).

Step (2)
Train using layer distillation so the new architecture learns the behavior of the original model. Then we take this new architecture and modify the positional system:
- Remove RoPE
- Replace it with NoPE
- Increase the sequence length to 2M tokens

Then train the model for 20B tokens at the full 2M sequence length.

And it works. The model stays stable and operates at 2M context without RoPE scaling tricks.

Why this matters
This experiment is important because the same architectural changes and training stages are also being used in the Quasar 22B model. This smaller model acts as a testbed before scaling the approach. Later this model will also be distilled into Quasar 22B.

huggingface.co/silx-ai/Quasar…
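To make the two ideas in the post above more concrete, here is a minimal sketch in plain Python. It is not the Quasar code: the function names, the scalar per-step gate, and the mean-squared-error objective are all assumptions chosen for illustration. It shows the generic recurrent form of a gated linear attention layer (no positional encoding, and a state whose size does not grow with sequence length) and one common way to score a student layer against a teacher layer during layer distillation.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def gated_linear_attention(q: Matrix, k: Matrix, v: Matrix, g: Vector) -> Matrix:
    """Generic recurrent form (illustrative, not the Quasar implementation):

        S_t = g_t * S_{t-1} + outer(k_t, v_t)
        o_t = q_t @ S_t

    Assumption: one scalar gate g_t per step; real variants often use
    per-channel gates. No positional encoding is applied anywhere.
    """
    d_k, d_v = len(k[0]), len(v[0])
    # The state is d_k x d_v regardless of how many tokens are processed.
    state = [[0.0] * d_v for _ in range(d_k)]
    outputs: Matrix = []
    for q_t, k_t, v_t, g_t in zip(q, k, v, g):
        # Decay the previous state by the gate, then add the new key/value pair.
        for i in range(d_k):
            for j in range(d_v):
                state[i][j] = g_t * state[i][j] + k_t[i] * v_t[j]
        # Read out with the query.
        outputs.append(
            [sum(q_t[i] * state[i][j] for i in range(d_k)) for j in range(d_v)]
        )
    return outputs


def layer_distillation_loss(student_out: Matrix, teacher_out: Matrix) -> float:
    """Mean squared error between a student layer's output and the teacher's.

    Assumption: MSE on layer outputs; the post does not state the actual loss.
    """
    total, count = 0.0, 0
    for s_row, t_row in zip(student_out, teacher_out):
        for s, t in zip(s_row, t_row):
            total += (s - t) ** 2
            count += 1
    return total / count
```

In a distillation loop of this kind, the replacement layer would be fed the same inputs as the original layer, and its parameters updated to push `layer_distillation_loss` toward zero. Because the recurrence carries only a fixed-size state, nothing in the layer itself depends on absolute token positions.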

What’s coming from us this month really won’t let us sleep. It must be done right!

I haven’t slept for the past 48 hours, and I can’t. Building state of the art on Bittensor is really not easy, ha.
