Junda

2.6K posts

@samwize

iOS Engineer @JupiterExchange, ex-@Poloniex, ex-ShopBack. Vested in 2 kids 🇸🇬 Funded by making apps. Guardian for $kobiki 🍭

My app built with @rork max is now live on the App Store. Next up, UGC army

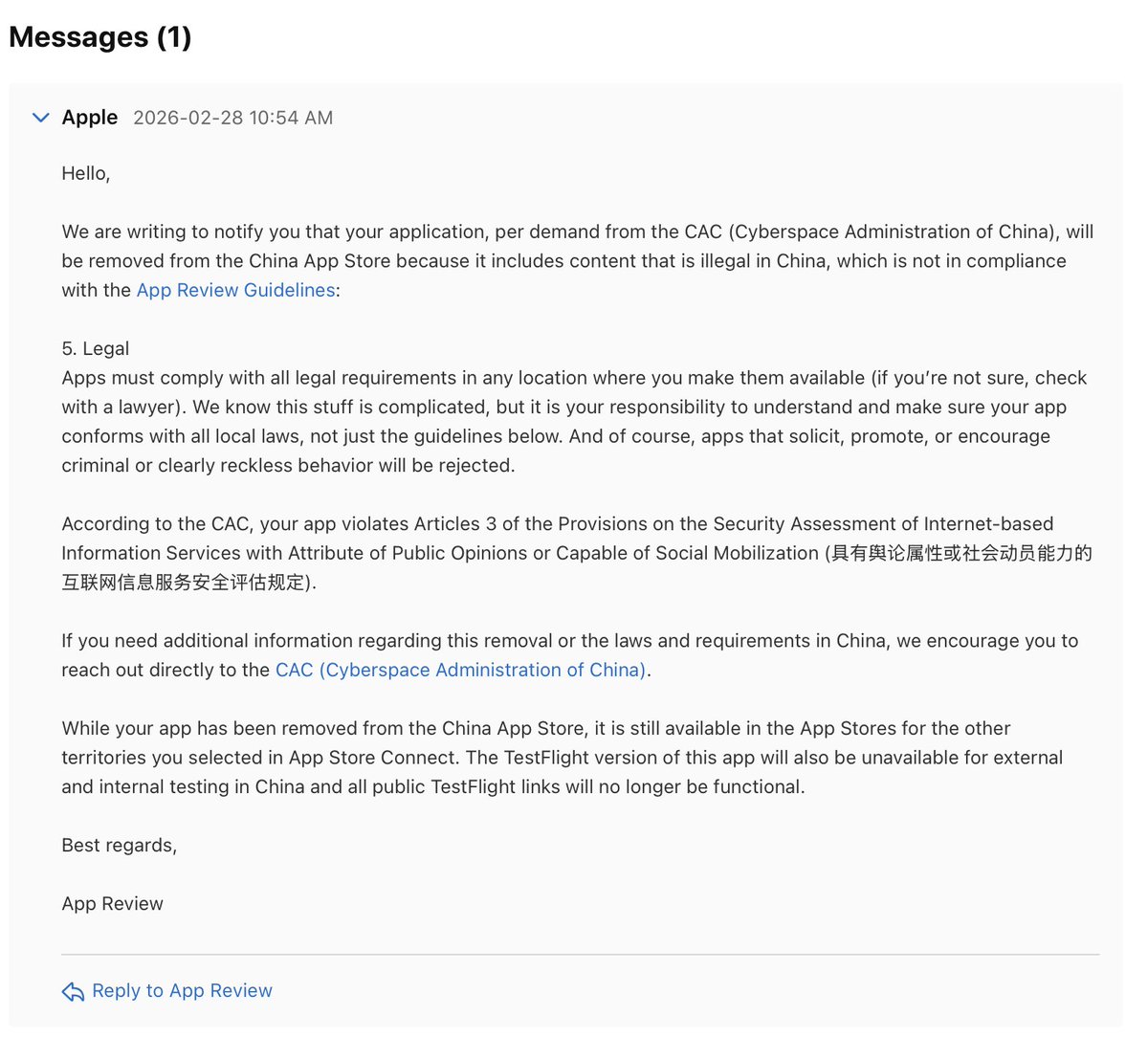

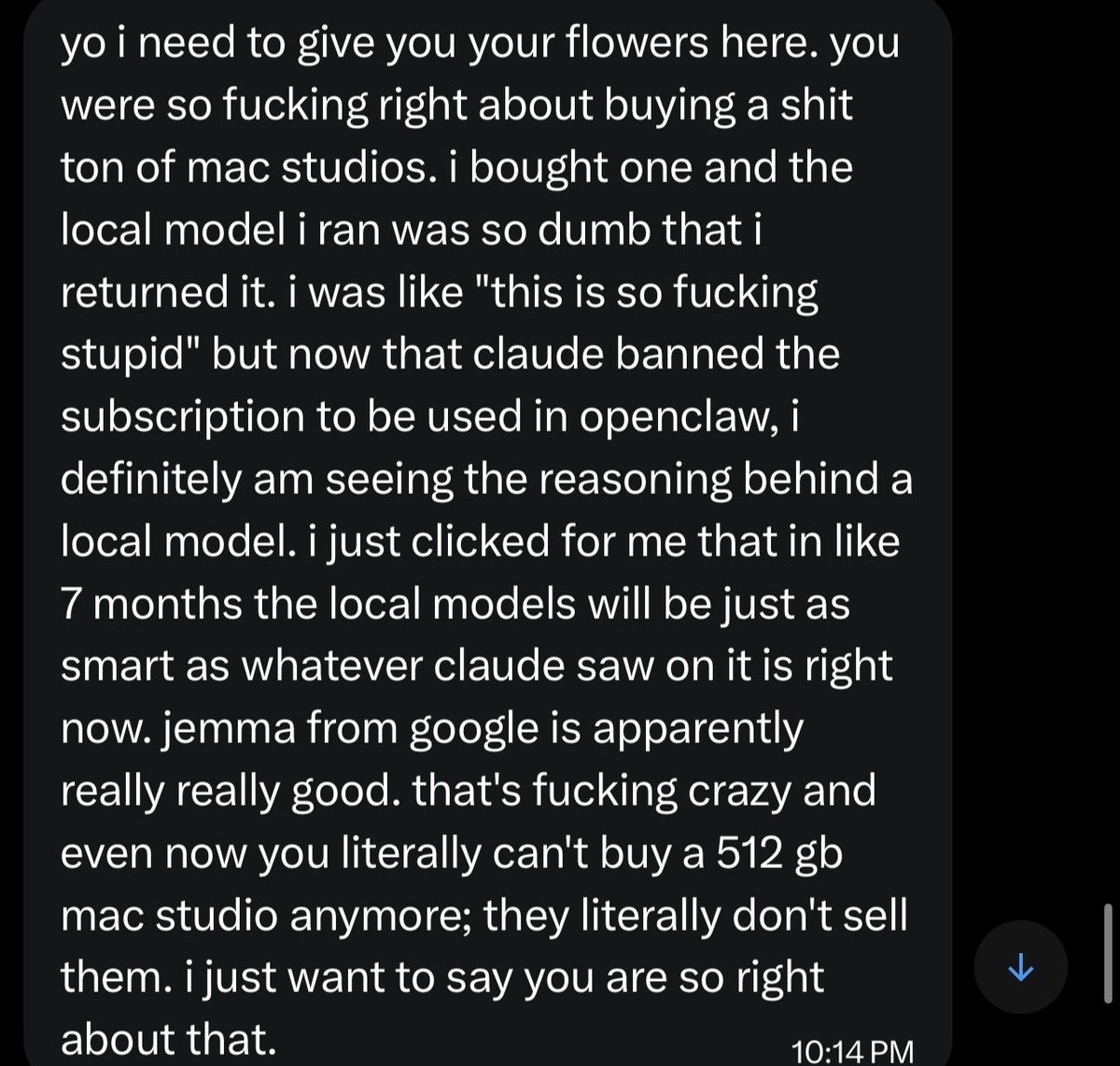

It’s over. Anthropic just banned OpenClaw. Uncensored thoughts:

1. Massive mistake that will come back to bite them
2. Open source needs to win. If you have a local model running on your Mac mini, no corporation will ever be able to ban you
3. ChatGPT 5.4 is the best model. But it sucks compared to Opus in OpenClaw. I will continue to pay for the Anthropic API
4. I have no doubt the next OpenAI model will be optimized for OpenClaw and be excellent
5. In 6 months the local models will be as good as Opus 4.6 and all of this will be forgotten
6. It feels like, from a consumer sentiment perspective, things have flipped for OpenAI and Anthropic. They were the darlings when Opus 4.5 came out
7. Going to the Kanye concert right now, please don’t spoil the stage or set list in the replies
8. The best OpenClaw setup is now Opus as the orchestrator, then much cheaper models as the execution layer. If you do this properly you won’t be paying much more than $200 a month. I’m using Gemma 4 and Qwen 3.5 for execution on my DGX Spark and Mac Studio

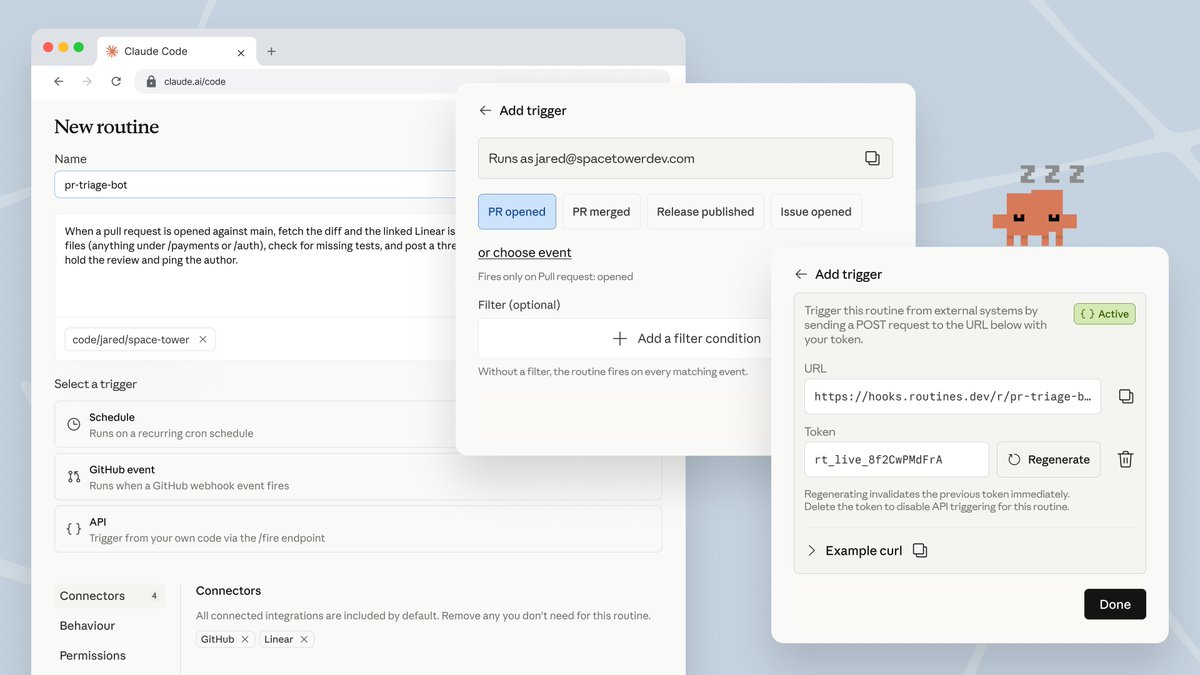

🤖 A study last year found that teams using AI-assisted development merge 98% more PRs, but review times go up by 91%! I compared 10 AI code review tools on pricing, strengths, and trade-offs so you don't have to. ioscinewsletter.com/issues/86