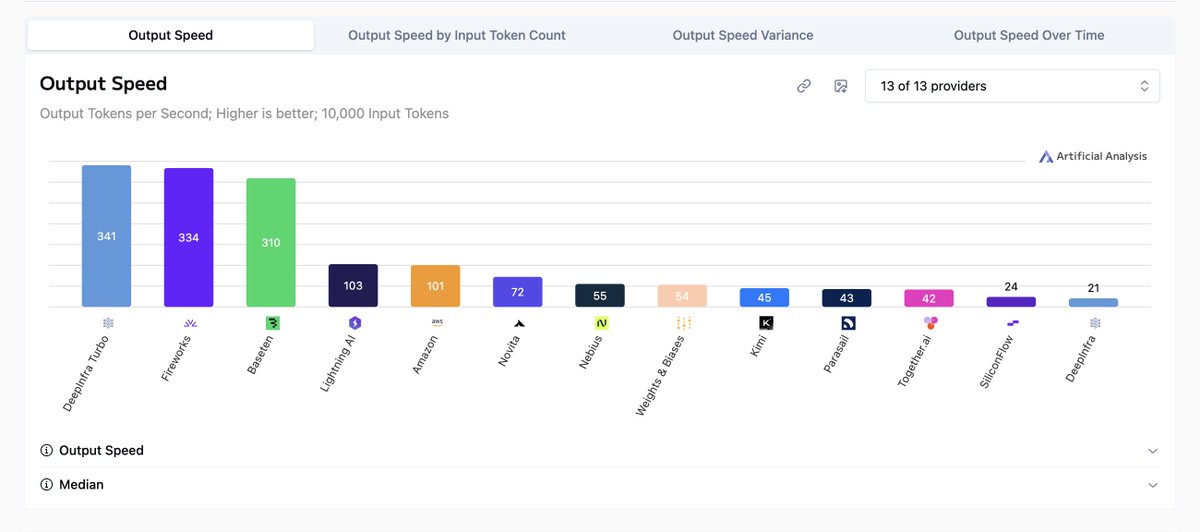

Deep Infra and NVIDIA are working together on NVIDIA NemoClaw, an open-source stack that makes it simpler and safer to run always-on OpenClaw assistants with a single command.

As part of the @nvidia Agent Toolkit, it installs the NVIDIA OpenShell runtime, a secure environment for running autonomous agents, along with open-source models like NVIDIA Nemotron.

nvidia.com/nemoclaw
