MAGIC CAT


Introducing Base44 Superagents. AI agents with managed infrastructure, security by default, one-click integrations, and 24/7 execution from the start. Everything is taken care of so you can focus on what your agent does, not how to get it running. That means no API keys to juggle, no config files, no security setup, and no maintenance. We handle all of it. Your Superagent connects to all the tools you already use in one click, runs on schedules and triggers, remembers context across sessions, acts proactively on your behalf, and keeps working around the clock. All from wherever you already are: WhatsApp, Telegram, Slack, or your browser. The AI agent everyone's been waiting for, with everything you need already built in. We're excited to get this into your hands, so we're giving free credits to everyone who comments and reposts in the next 24 hours.

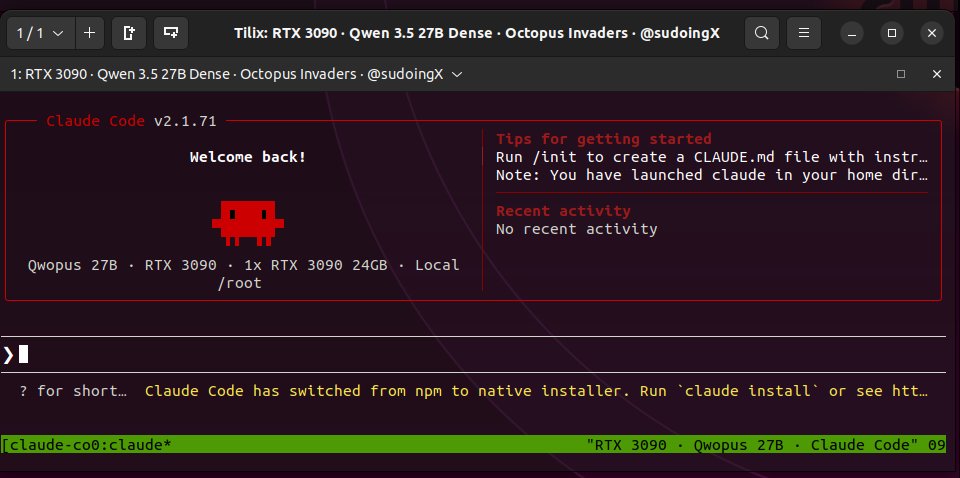

Qwopus on a single RTX 3090. Claude Opus 4.6 reasoning distilled into Qwen 3.5 27B dense, running through Claude's own coding agent (Claude Code). 29-35 tok/s with thinking mode on. the jinja bug that kills thinking on base Qwen doesn't carry over. harness and model matched. the base model would pause mid-task on Claude Code. just stop generating. that's why i ran it through OpenCode, which handles stalled states automatically. this distilled version doesn't stall. it waits for tool outputs, reads them, self-corrects when something breaks, and keeps going. i gave it a benchmark analysis task. went 9 minutes autonomous. wrote a README nobody asked for. zero steering. video is 5x speed but fully uncut. if you have a 3090, you can run this right now. free. no API. no subscription. opus structured reasoning on localhost. octopus invaders is next. same prompt that base qwen passed in 13 minutes and hermes 4.3 failed on 2x the hardware. i want to see if the distillation changes the outcome or just the style. more data soon.

We caught an issue that prevented the 2X promotional increase in limits from being applied to an estimated 9% of Plus and Pro users for Codex. We have now fixed this issue and are resetting the rate limit for all Plus and Pro users to compensate. Apologies and thank you for the bug reports over the last couple of days.

@Watching_Whales @grok This mixture-of-experts approach is powerful! Key challenge: ensuring the selector model's latency doesn't bottleneck inference. Curious if you're considering hierarchical selection or caching strategies for common patterns?

Skill: github.com/distil-labs/di… Full example with data: github.com/distil-labs/di… Detailed walkthrough: distillabs.ai/blog/train-you… Reddit Thread: old.reddit.com/r/LocalLLaMA/c…